5_For_and_not_for_profit_comparison

ZZ, APB, TFJ

2022-11-24

Last updated: 2022-11-24

Checks: 7 0

Knit directory:

workflowr-policy-landscape/

This reproducible R Markdown analysis was created with workflowr (version 1.7.0). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20220505) was run prior to running

the code in the R Markdown file. Setting a seed ensures that any results

that rely on randomness, e.g. subsampling or permutations, are

reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version dbe46b2. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for

the analysis have been committed to Git prior to generating the results

(you can use wflow_publish or

wflow_git_commit). workflowr only checks the R Markdown

file, but you know if there are other scripts or data files that it

depends on. Below is the status of the Git repository when the results

were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Unstaged changes:

Modified: Policy_landscape_workflowr.R

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were

made to the R Markdown

(analysis/5_For_and_not_for_profit_comparison.Rmd) and HTML

(docs/5_For_and_not_for_profit_comparison.html) files. If

you’ve configured a remote Git repository (see

?wflow_git_remote), click on the hyperlinks in the table

below to view the files as they were in that past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| html | fb90a00 | Andrew Beckerman | 2022-11-24 | Build site. |

| Rmd | e08d7ac | Andrew Beckerman | 2022-11-24 | more organising and editing of workflowR mappings |

| html | e08d7ac | Andrew Beckerman | 2022-11-24 | more organising and editing of workflowR mappings |

| html | 0a21152 | zuzannazagrodzka | 2022-09-21 | Build site. |

| html | 796aa8e | zuzannazagrodzka | 2022-09-21 | Build site. |

| Rmd | efb1202 | zuzannazagrodzka | 2022-09-21 | Publish other files |

There are two questions we did not address formally in the manuscript because of too small sample size.

- What language do for-profit and not-for-profit publishers use in their mission statements and aims?

- What organisations use more common language to the UNESCO Recommendations in Open Science and which use business words?

We conducted the same language analysis that were conducted on the main stakeholders groups to compare for-profit vs. not-for-profit publishers and nonOA journals vs. OA journals.

R Step and Packages

# Clearing R

rm(list=ls())

# Libraries used for text/data analysis

library(tidyverse)

library(dplyr)

library(tidytext)

# Libraries used to create plots

library(ggplot2)

# Library to create a table when converting to html

library("kableExtra") Importing data

# Documents data

df_corpuses <- read_csv("./output/created_datasets/cleaned_data.csv")Rows: 22822 Columns: 10

── Column specification ────────────────────────────────────────────────────────

Delimiter: ","

chr (10): txt, filename, name, doc_type, stakeholder, sentence_doc, orig_wor...

ℹ Use `spec()` to retrieve the full column specification for this data.

ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.data_words <- df_corpuses

# Dictionary data 100 words per dictionary

# all_dict <- read_csv("./output/created_datasets/freq_dict_total_100.csv")

# All words

all_dict <- read_csv("./output/created_datasets/freq_dict_total_all.csv")Rows: 9587 Columns: 6

── Column specification ────────────────────────────────────────────────────────

Delimiter: ","

chr (1): word

dbl (5): present_in_dict, present_BUS, present_UNESCO, present_book, sum

ℹ Use `spec()` to retrieve the full column specification for this data.

ℹ Specify the column types or set `show_col_types = FALSE` to quiet this message.Data Prep and Cleaning

# Data preparation

# Changing name of the dictionary variable

new_dictionary <- all_dict

## Getting a data set with the words

data_words <- df_corpuses

dim(data_words)[1] 22822 10colnames(data_words) [1] "txt" "filename" "name" "doc_type"

[5] "stakeholder" "sentence_doc" "orig_word" "word_mix"

[9] "word" "org_subgroups"unique(data_words$org_subgroups)[1] "advocates" "funders" "journals_nonOA"

[4] "journals_OA" "publishers_nonProfit" "publishers_Profit"

[7] "repositories" "societies" # Merging and removing duplicates

data_words <- data_words %>%

rename(document = name) %>%

select(document, org_subgroups, word) %>%

left_join(new_dictionary, by = c("word" = "word")) %>% # merging

filter(org_subgroups %in% c("publishers_nonProfit", "publishers_Profit", "journals_nonOA", "journals_OA"))

# Transforming the data frame

# data_words <- transform(data_words, present_BUS = as.numeric(present_BUS),

# present_UNESCO = as.numeric(present_UNESCO),

# present_book = as.numeric(present_book))

# Adding a column with stakeholder and word together to allow merging later

data_words$stake_word <- paste(data_words$org_subgroups, "_", data_words$word)

# Adding a column with a document name and word together to allow merging later

data_words$doc_word <- paste(data_words$document, "_", data_words$word)

# Adding a column that will be used later to calculate the total of unique words in the document (dictionaries without removed words)

data_words$doc_pres <- 1

# Adding two columns that will be used later to calculate the total of unique words in the document (new dictionaries with removed common words)

# Replace NAs with 0 in all absence/presence columns

data_words_ND <- data_words %>%

mutate_at(vars(present_BUS, present_UNESCO, present_book, sum), ~replace_na(., 0)) # replacing NAs

head(as.data.frame(data_words_ND,3)) document org_subgroups word present_in_dict present_BUS

1 American Naturalist journals_nonOA inception 1 0

2 American Naturalist journals_nonOA maintain NA 0

3 American Naturalist journals_nonOA position NA 0

4 American Naturalist journals_nonOA world NA 0

5 American Naturalist journals_nonOA premier NA 0

6 American Naturalist journals_nonOA peer NA 0

present_UNESCO present_book sum stake_word

1 0 1 1 journals_nonOA _ inception

2 0 0 0 journals_nonOA _ maintain

3 0 0 0 journals_nonOA _ position

4 0 0 0 journals_nonOA _ world

5 0 0 0 journals_nonOA _ premier

6 0 0 0 journals_nonOA _ peer

doc_word doc_pres

1 American Naturalist _ inception 1

2 American Naturalist _ maintain 1

3 American Naturalist _ position 1

4 American Naturalist _ world 1

5 American Naturalist _ premier 1

6 American Naturalist _ peer 1colnames(data_words_ND) [1] "document" "org_subgroups" "word" "present_in_dict"

[5] "present_BUS" "present_UNESCO" "present_book" "sum"

[9] "stake_word" "doc_word" "doc_pres" ## Preparing columns used to creating ternary plots

# Select columns of my interest (stakeholders) and aggregate

data_words_ND <- data_words_ND %>%

select(document, org_subgroups, word, present_BUS, present_UNESCO, present_book, doc_pres, sum) # selecting columns, sum - column with the information about in how many dictionaries a certain word occurs

df_sum_pres_ND <- aggregate(x = data_words_ND[,4:8], by = list(data_words_ND$document), FUN = sum, na.rm = TRUE)

# By doing aggregate I lost info about the org_subgroups the doc come from, I want to add it

df_doc_ord <- data_words_ND %>%

select(document, org_subgroups) %>%

distinct(document, .keep_all = TRUE)

df_sum_pres_ND <- df_sum_pres_ND %>%

left_join(df_doc_ord, by = c("Group.1" = "document")) %>%

rename(document = Group.1)

# Creating % columns in a new df_sum_proc_ND data frame

df_sum_proc_ND <- df_sum_pres_ND

# View(df_sum_proc_ND)

df_sum_proc_ND$proc_BUS <-df_sum_proc_ND$present_BUS/df_sum_proc_ND$doc_pres*100

df_sum_proc_ND$proc_UNESCO <- df_sum_proc_ND$present_UNESCO/df_sum_proc_ND$doc_pres*100

df_sum_proc_ND$proc_book <- df_sum_proc_ND$present_book/df_sum_proc_ND$doc_pres*100

# Additional information about the data

# Stakeholders (2 from each of the stakeholders) that shared the highest no of words with UNESCO recommendation

UNESCO_stak_top <- df_sum_proc_ND %>%

group_by(org_subgroups) %>%

arrange(desc(proc_UNESCO)) %>%

slice_head(n=2) %>%

select(org_subgroups, document, proc_UNESCO)

UNESCO_stak_top %>%

kbl(caption = "Stakeholders that shared the higest no of words with UNESCO recommendation:") %>%

kable_classic("hover", full_width = T)| org_subgroups | document | proc_UNESCO |

|---|---|---|

| journals_nonOA | Ecology Letters | 9.677419 |

| journals_nonOA | Frontiers in Ecology and the Environment | 8.411215 |

| journals_OA | Frontiers in Ecology and Evolution | 13.636364 |

| journals_OA | Conservation Letters | 8.823529 |

| publishers_nonProfit | Resilience Alliance | 12.060301 |

| publishers_nonProfit | BioOne | 10.447761 |

| publishers_Profit | Cell Press | 9.292035 |

| publishers_Profit | Springer Nature | 8.196721 |

# Stakeholders (2 from each of the stakeholders) that shared the highest no of words with business dictionary

business_stak_top <- df_sum_proc_ND %>%

group_by(org_subgroups) %>%

arrange(desc(proc_BUS)) %>%

slice_head(n=2) %>%

select(org_subgroups, document, proc_BUS)

business_stak_top %>%

kbl(caption = "Stakeholders that shared the higest no of words with business dictionary:") %>%

kable_classic("hover", full_width = T)| org_subgroups | document | proc_BUS |

|---|---|---|

| journals_nonOA | Evolution | 21.212121 |

| journals_nonOA | Ecology | 8.212560 |

| journals_OA | Evolution Letters | 6.349206 |

| journals_OA | Biogeosciences | 5.917160 |

| publishers_nonProfit | Resilience Alliance | 5.527638 |

| publishers_nonProfit | Annual Reviews | 4.666667 |

| publishers_Profit | Elsevier | 3.433476 |

| publishers_Profit | Pensoft | 3.092783 |

# Stakeholders (2 from each of the stakeholders) that shared the highest no of words with book dictionary (control)

book_stak_top <- df_sum_proc_ND %>%

group_by(org_subgroups) %>%

arrange(desc(proc_book)) %>%

slice_head(n=2) %>%

select(org_subgroups, document, proc_book)

book_stak_top %>%

kbl(caption = "Stakeholders that shared the higest no of words with book dictionary (control):") %>%

kable_classic("hover", full_width = T)| org_subgroups | document | proc_book |

|---|---|---|

| journals_nonOA | Conservation Biology | 18.81188 |

| journals_nonOA | Trends in Ecology & Evolution | 18.07229 |

| journals_OA | Evolution Letters | 22.22222 |

| journals_OA | Remote Sensing in Ecology and Conservation | 20.38835 |

| publishers_nonProfit | The University of Chicago Press | 22.36025 |

| publishers_nonProfit | The Royal Society Publishing | 20.83333 |

| publishers_Profit | Cell Press | 16.37168 |

| publishers_Profit | Wiley | 12.84916 |

Creating graphs

no <- nrow(df_sum_proc_ND)

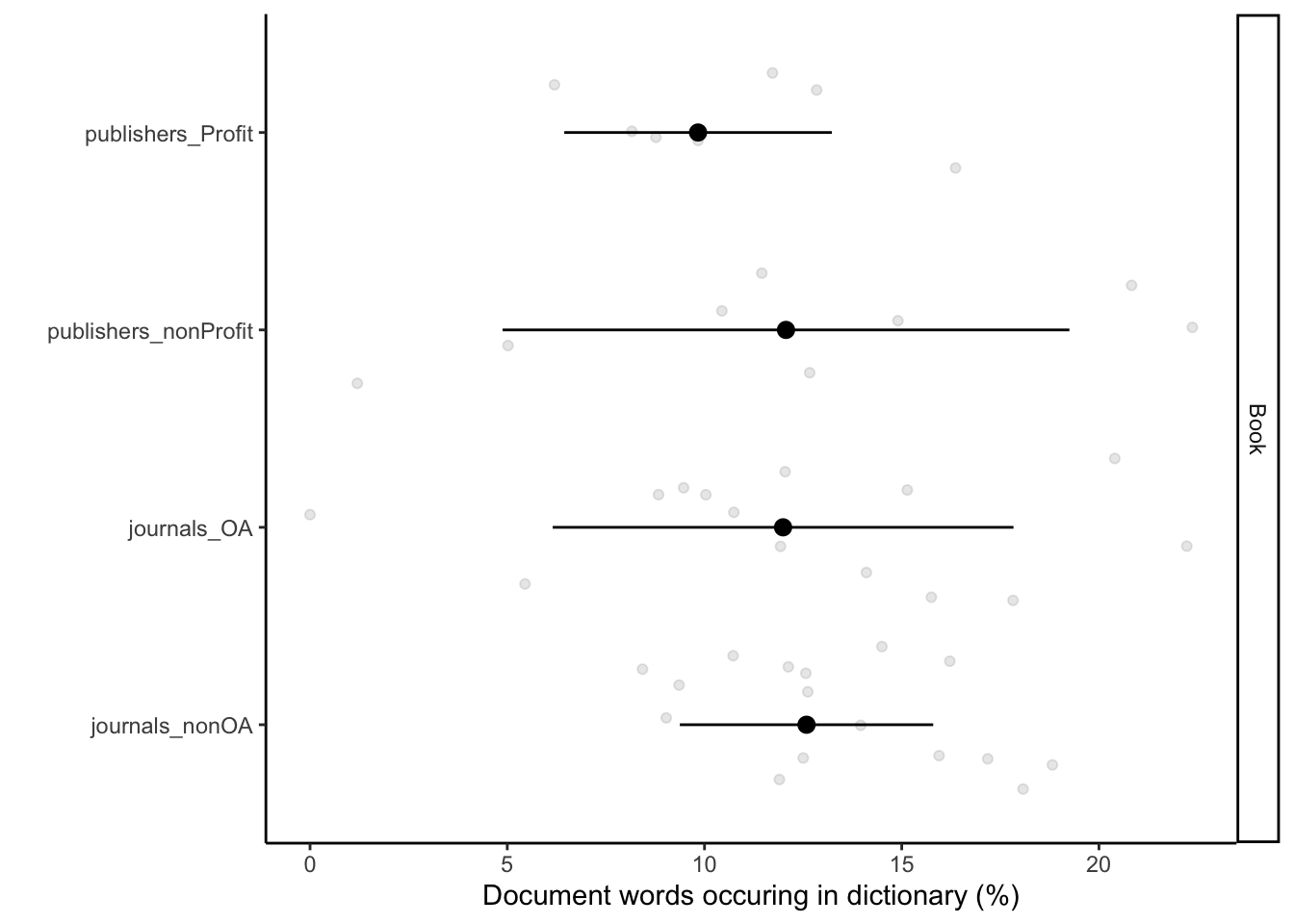

no[1] 45# Plotting them separately book

df_sum_proc_ND_book = data.frame(

document = rep(df_sum_proc_ND$document,1),

org_subgroups = rep(df_sum_proc_ND$org_subgroups,1),

type = c(rep("Book",no)),

perc = c(df_sum_proc_ND$proc_book),

perc2 = c(df_sum_proc_ND$proc_book))

sum_df_sum_proc_ND_book =

df_sum_proc_ND_book %>%

group_by(org_subgroups, type) %>%

# dplyr::summarise(perc = mean(perc), SD = sd(perc2))

dplyr::summarise(perc = median(perc), SD = sd(perc2))`summarise()` has grouped output by 'org_subgroups'. You can override using the

`.groups` argument.ggplot() +

geom_point(data = df_sum_proc_ND_book, aes(x = perc, y = org_subgroups), alpha = 0.1, position = position_jitter()) +

geom_pointrange(data = sum_df_sum_proc_ND_book, aes(x = perc, xmin = perc - SD, xmax = perc + SD, y = org_subgroups)) +

facet_grid(type~.) +

labs(x = "Document words occuring in dictionary (%)", y = "") +

scale_colour_discrete(guide = "none") +

theme_classic()

| Version | Author | Date |

|---|---|---|

| 796aa8e | zuzannazagrodzka | 2022-09-21 |

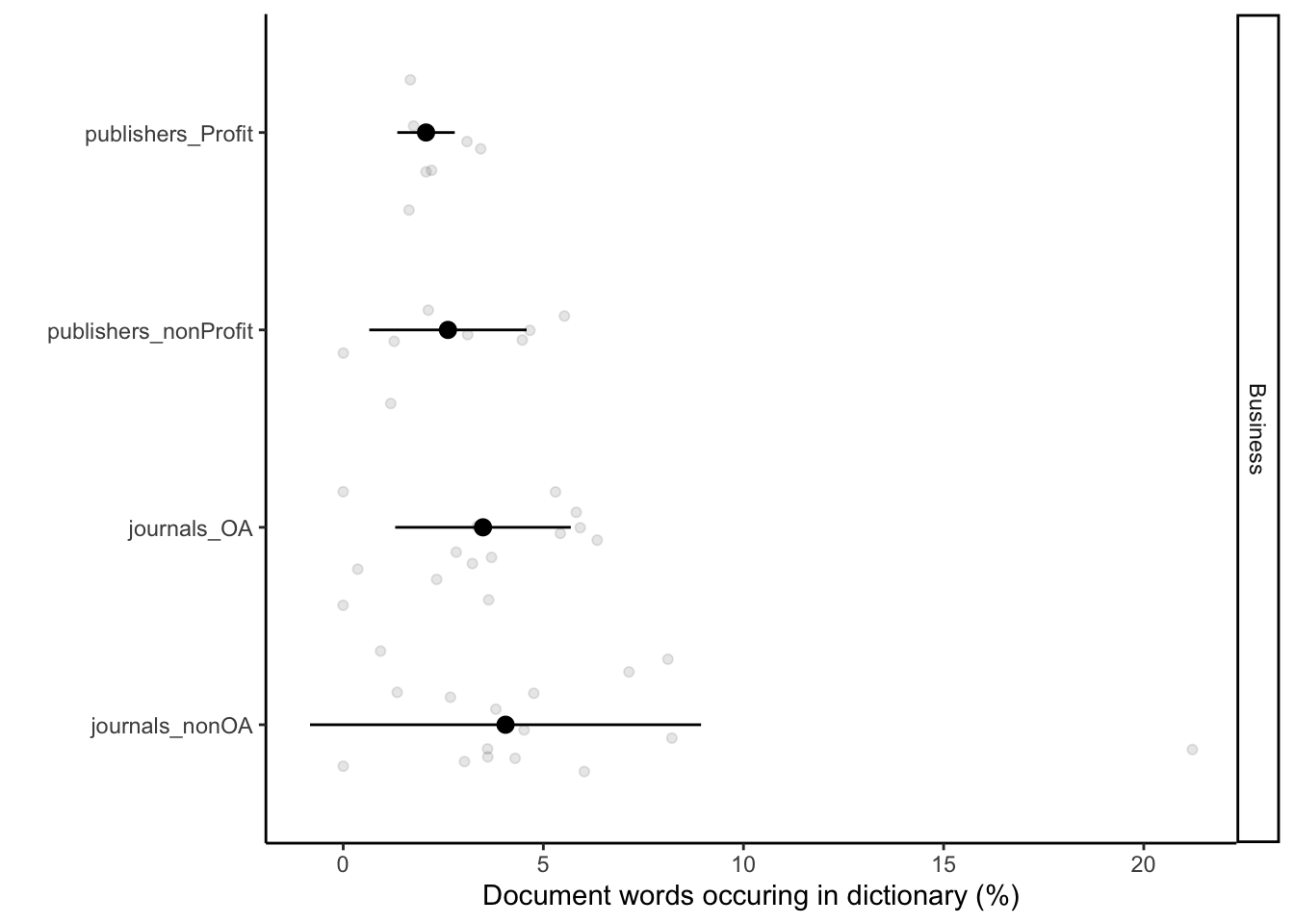

# Plotting them separately Business

df_sum_proc_ND_BUS = data.frame(

document = rep(df_sum_proc_ND$document,1),

org_subgroups = rep(df_sum_proc_ND$org_subgroups,1),

type = c(rep("Business",no)),

perc = c(df_sum_proc_ND$proc_BUS),

perc2 = c(df_sum_proc_ND$proc_BUS))

sum_df_sum_proc_ND_BUS =

df_sum_proc_ND_BUS %>%

group_by(org_subgroups, type) %>%

# dplyr::summarise(perc = mean(perc), SD = sd(perc2))

dplyr::summarise(perc = median(perc), SD = sd(perc2))`summarise()` has grouped output by 'org_subgroups'. You can override using the

`.groups` argument.ggplot() +

geom_point(data = df_sum_proc_ND_BUS, aes(x = perc, y = org_subgroups), alpha = 0.1, position = position_jitter()) +

geom_pointrange(data = sum_df_sum_proc_ND_BUS, aes(x = perc, xmin = perc - SD, xmax = perc + SD, y = org_subgroups)) +

facet_grid(type~.) +

labs(x = "Document words occuring in dictionary (%)", y = "") +

scale_colour_discrete(guide = "none") +

theme_classic()

| Version | Author | Date |

|---|---|---|

| 796aa8e | zuzannazagrodzka | 2022-09-21 |

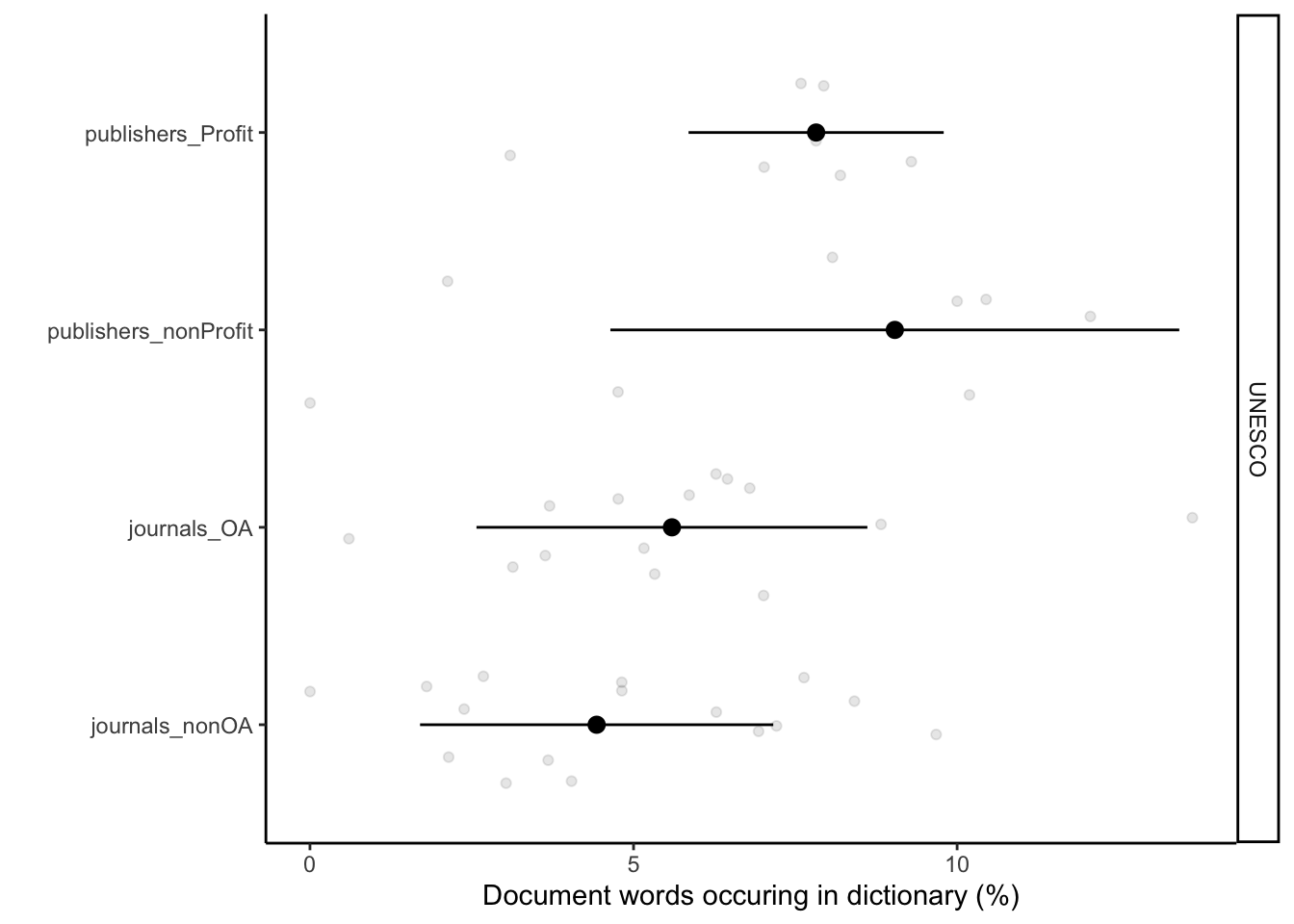

# Plotting them separately UNESCO

df_sum_proc_ND_UNESCO = data.frame(

document = rep(df_sum_proc_ND$document,1),

org_subgroups = rep(df_sum_proc_ND$org_subgroups,1),

type = c(rep("UNESCO",no)),

perc = c(df_sum_proc_ND$proc_UNESCO),

perc2 = c(df_sum_proc_ND$proc_UNESCO))

sum_df_sum_proc_ND_UNESCO =

df_sum_proc_ND_UNESCO %>%

group_by(org_subgroups, type) %>%

# dplyr::summarise(perc = mean(perc), SD = sd(perc2))

dplyr::summarise(perc = median(perc), SD = sd(perc2))`summarise()` has grouped output by 'org_subgroups'. You can override using the

`.groups` argument.ggplot() +

geom_point(data = df_sum_proc_ND_UNESCO, aes(x = perc, y = org_subgroups), alpha = 0.1, position = position_jitter()) +

geom_pointrange(data = sum_df_sum_proc_ND_UNESCO, aes(x = perc, xmin = perc - SD, xmax = perc + SD, y = org_subgroups)) +

facet_grid(type~.) +

labs(x = "Document words occuring in dictionary (%)", y = "") +

scale_colour_discrete(guide = "none") +

theme_classic()

| Version | Author | Date |

|---|---|---|

| 796aa8e | zuzannazagrodzka | 2022-09-21 |

Session information

sessionInfo()R version 4.2.1 (2022-06-23)

Platform: x86_64-apple-darwin17.0 (64-bit)

Running under: macOS Big Sur ... 10.16

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRlapack.dylib

locale:

[1] en_GB.UTF-8/en_GB.UTF-8/en_GB.UTF-8/C/en_GB.UTF-8/en_GB.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] kableExtra_1.3.4 tidytext_0.3.4 forcats_0.5.2 stringr_1.4.1

[5] dplyr_1.0.10 purrr_0.3.5 readr_2.1.3 tidyr_1.2.1

[9] tibble_3.1.8 ggplot2_3.3.6 tidyverse_1.3.2 workflowr_1.7.0

loaded via a namespace (and not attached):

[1] fs_1.5.2 lubridate_1.8.0 bit64_4.0.5

[4] webshot_0.5.4 httr_1.4.4 rprojroot_2.0.3

[7] SnowballC_0.7.0 tools_4.2.1 backports_1.4.1

[10] bslib_0.4.0 utf8_1.2.2 R6_2.5.1

[13] DBI_1.1.3 colorspace_2.0-3 withr_2.5.0

[16] tidyselect_1.2.0 processx_3.7.0 bit_4.0.4

[19] compiler_4.2.1 git2r_0.30.1 cli_3.4.1

[22] rvest_1.0.3 xml2_1.3.3 labeling_0.4.2

[25] sass_0.4.2 scales_1.2.1 callr_3.7.2

[28] systemfonts_1.0.4 digest_0.6.29 rmarkdown_2.16

[31] svglite_2.1.0 pkgconfig_2.0.3 htmltools_0.5.3

[34] highr_0.9 dbplyr_2.2.1 fastmap_1.1.0

[37] rlang_1.0.6 readxl_1.4.1 rstudioapi_0.14

[40] farver_2.1.1 jquerylib_0.1.4 generics_0.1.3

[43] jsonlite_1.8.3 vroom_1.6.0 tokenizers_0.2.3

[46] googlesheets4_1.0.1 magrittr_2.0.3 Matrix_1.4-1

[49] Rcpp_1.0.9 munsell_0.5.0 fansi_1.0.3

[52] lifecycle_1.0.3 stringi_1.7.8 whisker_0.4

[55] yaml_2.3.6 grid_4.2.1 parallel_4.2.1

[58] promises_1.2.0.1 crayon_1.5.2 lattice_0.20-45

[61] haven_2.5.1 hms_1.1.2 knitr_1.40

[64] ps_1.7.1 pillar_1.8.1 reprex_2.0.2

[67] glue_1.6.2 evaluate_0.16 getPass_0.2-2

[70] modelr_0.1.9 vctrs_0.5.0 tzdb_0.3.0

[73] httpuv_1.6.6 cellranger_1.1.0 gtable_0.3.1

[76] assertthat_0.2.1 cachem_1.0.6 xfun_0.33

[79] broom_1.0.1 janeaustenr_1.0.0 later_1.3.0

[82] googledrive_2.0.0 viridisLite_0.4.1 gargle_1.2.1

[85] ellipsis_0.3.2

sessionInfo()R version 4.2.1 (2022-06-23)

Platform: x86_64-apple-darwin17.0 (64-bit)

Running under: macOS Big Sur ... 10.16

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.2/Resources/lib/libRlapack.dylib

locale:

[1] en_GB.UTF-8/en_GB.UTF-8/en_GB.UTF-8/C/en_GB.UTF-8/en_GB.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] kableExtra_1.3.4 tidytext_0.3.4 forcats_0.5.2 stringr_1.4.1

[5] dplyr_1.0.10 purrr_0.3.5 readr_2.1.3 tidyr_1.2.1

[9] tibble_3.1.8 ggplot2_3.3.6 tidyverse_1.3.2 workflowr_1.7.0

loaded via a namespace (and not attached):

[1] fs_1.5.2 lubridate_1.8.0 bit64_4.0.5

[4] webshot_0.5.4 httr_1.4.4 rprojroot_2.0.3

[7] SnowballC_0.7.0 tools_4.2.1 backports_1.4.1

[10] bslib_0.4.0 utf8_1.2.2 R6_2.5.1

[13] DBI_1.1.3 colorspace_2.0-3 withr_2.5.0

[16] tidyselect_1.2.0 processx_3.7.0 bit_4.0.4

[19] compiler_4.2.1 git2r_0.30.1 cli_3.4.1

[22] rvest_1.0.3 xml2_1.3.3 labeling_0.4.2

[25] sass_0.4.2 scales_1.2.1 callr_3.7.2

[28] systemfonts_1.0.4 digest_0.6.29 rmarkdown_2.16

[31] svglite_2.1.0 pkgconfig_2.0.3 htmltools_0.5.3

[34] highr_0.9 dbplyr_2.2.1 fastmap_1.1.0

[37] rlang_1.0.6 readxl_1.4.1 rstudioapi_0.14

[40] farver_2.1.1 jquerylib_0.1.4 generics_0.1.3

[43] jsonlite_1.8.3 vroom_1.6.0 tokenizers_0.2.3

[46] googlesheets4_1.0.1 magrittr_2.0.3 Matrix_1.4-1

[49] Rcpp_1.0.9 munsell_0.5.0 fansi_1.0.3

[52] lifecycle_1.0.3 stringi_1.7.8 whisker_0.4

[55] yaml_2.3.6 grid_4.2.1 parallel_4.2.1

[58] promises_1.2.0.1 crayon_1.5.2 lattice_0.20-45

[61] haven_2.5.1 hms_1.1.2 knitr_1.40

[64] ps_1.7.1 pillar_1.8.1 reprex_2.0.2

[67] glue_1.6.2 evaluate_0.16 getPass_0.2-2

[70] modelr_0.1.9 vctrs_0.5.0 tzdb_0.3.0

[73] httpuv_1.6.6 cellranger_1.1.0 gtable_0.3.1

[76] assertthat_0.2.1 cachem_1.0.6 xfun_0.33

[79] broom_1.0.1 janeaustenr_1.0.0 later_1.3.0

[82] googledrive_2.0.0 viridisLite_0.4.1 gargle_1.2.1

[85] ellipsis_0.3.2