nonlinear_growth_analysis

Haider Inam

4/17/2020

Last updated: 2020-08-14

Checks: 6 1

Knit directory: duplex_sequencing_screen/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

The R Markdown file has unstaged changes. To know which version of the R Markdown file created these results, you’ll want to first commit it to the Git repo. If you’re still working on the analysis, you can ignore this warning. When you’re finished, you can run wflow_publish to commit the R Markdown file and build the HTML.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20200402) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version f368371. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Untracked files:

Untracked: 10kmutants_nowt.csv

Untracked: 10kmutants_wt_1_1.csv

Untracked: Rplot.png

Untracked: Rplot01.pdf

Untracked: allele_freq_enrichment_sim.pdf

Untracked: allmuts_dose_response.pdf

Untracked: analysis/bcrabl_hill_ic50s.csv

Untracked: analysis/column_definitions_for_twinstrand_data_06062020.csv

Untracked: analysis/dose_response_curve_fitting_with_errorbars.Rmd

Untracked: analysis/multinomial_sims.Rmd

Untracked: analysis/mutagenesis_radar_chart.Rmd

Untracked: analysis/signatures_barplot.pdf

Untracked: analysis/simple_data_generation.Rmd

Untracked: analysis/twinstrand_growthrates_simple.csv

Untracked: analysis/twinstrand_maf_merge_simple.csv

Untracked: analysis/wildtype_growthrates_sequenced.csv

Untracked: bcrablwt_dose_response.pdf

Untracked: code/microvariation.normalizer.R

Untracked: count_enrichment_sim.pdf

Untracked: data/Combined_data_frame_IC_Mutprob_abundance.csv

Untracked: data/IC50HeatMap.csv

Untracked: data/Twinstrand/

Untracked: data/gfpenrichmentdata.csv

Untracked: data/heatmap_concat_data.csv

Untracked: data/mcmc_inferred_doses.csv

Untracked: dosing_error_doseresponse.pdf

Untracked: dosing_error_doseresponse_forgrant.pdf

Untracked: dosing_normalization_standard_deviations.pdf

Untracked: dosing_normalization_stdevs_paired.pdf

Untracked: e255k_dose_response.pdf

Untracked: e255k_initial_spikins_figure.pdf

Untracked: enrichment_simulations_3mutant_MAF.pdf

Untracked: enrichment_simulations_3mutant_count.pdf

Untracked: figures_archive/

Untracked: inferred_doses_barplot_werrorbars.pdf

Untracked: inferred_doses_barplot_werrorbars_wt.pdf

Untracked: inferred_doses_caterpillar.pdf

Untracked: inferred_doses_caterpillar2.pdf

Untracked: inferred_doses_violin.pdf

Untracked: m351t_deviation.pdf

Untracked: output/archive/

Untracked: output/bmes_abstract_51220.pdf

Untracked: output/clinicalabundancepredictions_BMES_abstract_51320.pdf

Untracked: output/clinicalabundancepredictions_BMES_abstract_52020.pdf

Untracked: output/enrichment_simulations_3mutants_52020.pdf

Untracked: output/grant_fig.pdf

Untracked: output/grant_fig_v2.pdf

Untracked: output/grant_fig_v2updated.pdf

Untracked: output/ic50data_all_conc.csv

Untracked: output/ic50data_all_confidence_intervals_individual_logistic_fits.csv

Untracked: output/ic50data_all_confidence_intervals_raw_data.csv

Untracked: output/ic50heatmap_sd_ci.csv

Untracked: output/twinstrand_microvariations_normalized.csv

Untracked: shinyapp/

Untracked: wildtype_growthrates_sequenced.csv

Unstaged changes:

Modified: analysis/4_7_20_update.Rmd

Modified: analysis/clinical_abundance_predictions.Rmd

Modified: analysis/enrichment_simulations.Rmd

Modified: analysis/nonlinear_growth_analysis.Rmd

Modified: analysis/spikeins_depthofcoverages.Rmd

Modified: analysis/twinstrand_spikeins_data_generation.Rmd

Deleted: data/README.md

Modified: output/twinstrand_maf_merge.csv

Modified: output/twinstrand_simple_melt_merge.csv

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/nonlinear_growth_analysis.Rmd) and HTML (docs/nonlinear_growth_analysis.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| html | eaca616 | haiderinam | 2020-04-20 | Build site. |

| Rmd | 2bba93e | haiderinam | 2020-04-20 | wflow_publish("analysis/*.Rmd") |

# # 1. Look at whether the nonlinear Enrich 2, on its own, improves performance

# # Make a version of Enrich 2 that includes your counts and use it to model out your stuf

# twinstrand_maf_merge2=twinstrand_maf_merge%>%filter(!VariationType%in%"indel",tki_resistant_mutation%in%"True",!mutant%in%NA)%>%dplyr::select(!c(X,Sample,Chromosome))

#

# sort(unique(twinstrand_maf_merge2$Start))

# sort(unique(twinstrand_maf_merge2$mutant))

#

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M3",time_point%in%"D6")

# sum(a$AltDepth)

# sort(unique(a$mutant))

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M3",time_point%in%"D0")

# sum(a$AltDepth)

# sum(a$AltDepth)

# mean(a$Depth)

# #Based on a 1 in 1000 frequency and 18 mutants, we would expect the mean variant/Wt frequ to be 18 in 1000 at Day 0

# #For a mean coverage of 32000, we would expect 568 mutants, which is almost exactly what we see!!!!

# # 18/1000*31569

# #Plot Wt decay rates for all experiments from D0 to D6

# #M3 D0:

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M3",time_point%in%"D0")

# (mean(a$Depth)-sum(a$AltDepth))/mean(a$Depth)

# #M3 D3:

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M3",time_point%in%"D3")

# (mean(a$Depth)-sum(a$AltDepth))/mean(a$Depth)

# #M3 D6:

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M3",time_point%in%"D6")

# (mean(a$Depth)-sum(a$AltDepth))/mean(a$Depth)

#

# 0.7775412/0.9832434 #D0D3

# 0.09033963/0.9832434 #D0D6

# 0.09033963/0.7775412#D3D6

# #D0D3=WtD0/WtD3

# #M5

# #M5 D3:

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M5",time_point%in%"D3")

# (mean(a$Depth)-sum(a$AltDepth))/mean(a$Depth)

# #M5 D6:

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M5",time_point%in%"D6")

# (mean(a$Depth)-sum(a$AltDepth))/mean(a$Depth)

#

# #M7

# #M7 D0:

# #M7 D3:

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M7",time_point%in%"D3")

# (mean(a$Depth)-sum(a$AltDepth))/mean(a$Depth)

# #M7 D6:

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M7",time_point%in%"D6")

# (mean(a$Depth)-sum(a$AltDepth))/mean(a$Depth) # twinstrand_simple_melt_merge=twinstrand_simple_melt_merge%>%mutate(wt_ratio=case_when(experiment%in%"M3"&&duration%in%"d0d3"~0.7775412/0.9832434,

# experiment%in%"M3"&&duration%in%"d0d6"~0.09033963/0.9832434,

# experiment%in%"M3"&&duration%in%"d3d6"~0.09033963/0.7775412))

#

# twinstrand_simple_melt_merge=twinstrand_simple_melt_merge%>%filter(experiment%in%"M3")%>%mutate(wt_ratio=case_when((experiment%in%"M3"&duration%in%"d0d3")~0.7775412/0.9832434,

# (experiment%in%"M3"&duration%in%"d0d6")~0.09033963/0.9832434,

# (experiment%in%"M3"&duration%in%"d3d6")~0.09033963/0.7775412))

#

# #Subtract the log of the Wt ratio from the log ratios

# twinstrand_simple_melt_merge=twinstrand_simple_melt_merge%>%mutate()Manually adding D0 values for all experiments

#First, creating day 0 values for M4,M5,M7, and sp_enu_3. Whenever you see any of these experiments, add M3's or M6's or Sp_Enu4's D0 counts for its counts.

#Ideally the mutate should also change CustomerName to M7D0 etc

M3D0=twinstrand_maf_merge%>%filter(experiment=="M3",time_point=="D0")

M5D0=M3D0%>%mutate(experiment="M5")

M7D0=M3D0%>%mutate(experiment="M7")

M6D0=twinstrand_maf_merge%>%filter(experiment=="M6",time_point=="D0")

M4D0=M6D0%>%mutate(experiment="M4")

Enu3_D0=twinstrand_maf_merge%>%filter(experiment=="Enu_3",time_point=="D0")

Enu4_D0=Enu3_D0%>%mutate(experiment="Enu_4")

twinstrand_maf_merge=rbind(twinstrand_maf_merge,M5D0,M7D0,M4D0,Enu4_D0)4/20/20 Analysis of Enrich2 vs Shendure vs our method

twinstrand_maf_merge2=twinstrand_maf_merge%>%filter(!VariationType%in%"indel",tki_resistant_mutation%in%"True",!mutant%in%NA)%>%dplyr::select(!c(X,Sample,Chromosome))

# twinstrand_maf_merge2=twinstrand_maf_merge2%>%filter(experiment=="M5")

#Wt count is the same for all variants on a given time_point for a given experiment

wt_count=twinstrand_maf_merge2%>%group_by(experiment,time_point)%>%summarize(wt_count=mean(Depth)-sum(AltDepth))

twinstrand_maf_merge2=merge(twinstrand_maf_merge2,wt_count,by = c("experiment","time_point"))

twinstrand_maf_merge2=twinstrand_maf_merge2%>%mutate(log_ratio=log10(AltDepth/wt_count))

###Adding Shendure's log ratio

twinstrand_maf_merge2=twinstrand_maf_merge2%>%mutate(log_ratio_shendure=log10(AltDepth/Depth))

###

###Converting time from factor to numeral

twinstrand_maf_merge2=twinstrand_maf_merge2%>%mutate(time=case_when(time_point=="D0"~0,

time_point=="D3"~72,

time_point=="D6"~144))

###

plotly=ggplot(twinstrand_maf_merge2%>%filter(experiment%in%c("M3","M6")),aes(x=time,y=log_ratio,color=factor(experiment)))+geom_point()+geom_line()+facet_wrap(~factor(mutant))

ggplotly(plotly)plotly=ggplot(twinstrand_maf_merge2,aes(x=time,y=log_ratio,color=factor(experiment)))+geom_point()+geom_line()+facet_wrap(~factor(mutant))

ggplotly(plotly)#Next steps: regress the slope of this line for M3 and then for M6. See how well these R1 and R2 compare relative to Shendure's estimates

# lm(log_ratio~time,data = twinstrand_maf_merge2%>%filter(experiment=="M3",mutant=="T315I"))

# lm(log_ratio~time,data = twinstrand_maf_merge2%>%filter(experiment=="M6",mutant=="T315I"))

# lm(log_ratio~time,data = twinstrand_maf_merge2%>%filter(experiment=="M6",mutant=="T315I"))

# a=lm(log_ratio~time,data = twinstrand_maf_merge2%>%filter(experiment=="M6",mutant=="T315I"))

# a$coefficients[2]

# twinstrand_maf_merge2$a=twinstrand_maf_merge2$totalmutant/twinstrand_maf_merge2$time

twinstrand_lm=twinstrand_maf_merge2%>%

filter(!experiment%in%c("M7"),!mutant%in%c("Y320C","D276G","F359L"))%>% #Honestly don't know why including M7 is throwing an error

group_by(experiment,mutant)%>%

filter(n()>=3)%>% #This is filtering for mutants that have data for all 3 time points

summarise(lm_slope_enrich2=lm(log_ratio~time)$coefficients[2],

lm_slope_shendure=lm(log_ratio_shendure~time)$coefficients[2],

average_logratio_shendure=log_ratio_shendure[time_point=="D6"]/log_ratio_shendure[time_point=="D0"]/144,

netgr_obs_d0d6=log10(totalmutant[time_point=="D6"]/totalmutant[time_point=="D0"])/144,

lm_slope_netgr_obs=lm(log10(totalmutant)~time)$coefficients[2])

# unique(twinstrand_lm$experiment)

# a=twinstrand_maf_merge2%>%filter(experiment=="M4",mutant=="T315I")

twinstrand_lm_melt=melt(twinstrand_lm,id.vars=c("experiment","mutant"),value.name = "log_ratio",variable.name = "ratio_type")

lm_cast=dcast(twinstrand_lm_melt,mutant+ratio_type~experiment,value.var="log_ratio")

plotly=ggplot(lm_cast,aes(x=M6,y=M3,label=mutant,color=ratio_type))+geom_text()+geom_abline()

ggplotly(plotly)plotly=ggplot(lm_cast,aes(x=M4,y=M3,label=mutant,color=ratio_type))+geom_text()+geom_abline()

ggplotly(plotly)plotly=ggplot(lm_cast,aes(x=M6,y=M4,label=mutant,color=ratio_type))+geom_text()+geom_abline()

ggplotly(plotly)plotly=ggplot(lm_cast,aes(x=M3,y=M5,label=mutant,color=ratio_type))+geom_text()+geom_abline()

ggplotly(plotly)# ggplot(lm_cast,aes(x=M5,y=M7,label=mutant,color=ratio_type))+geom_text()+geom_abline()

#Things to add to dataset: Right now you only have M3 and M6. Take M3D0 and add it to M5D0, and M7D0. Take M6D0 and add it to M4D0. Then look at correlations of all populations. Done on 4/20/20.Is Enrich2 better than Shendure?

Are the D6 FACs counts off?

# twinstrand_simple_melt_merge=read.csv("../output/twinstrand_simple_melt_merge.csv",header = T,stringsAsFactors = F)

twinstrand_simple_melt_merge=read.csv("output/twinstrand_simple_melt_merge.csv",header = T,stringsAsFactors = F)

#Plot growth rates of all mutants next to each other. If our replicates have such a low error, why are we worried about correcting that "error"? The source of error is probably the non-conformity between IC50s and pooled measurements. Answer: our mutants aren't THAT close together. It's worth looking at whether the non-linear transformation improves results.

twinstrand_simple_melt_merge$mutant=factor(twinstrand_simple_melt_merge$mutant,levels = as.character(unique(twinstrand_simple_melt_merge$mutant[order((twinstrand_simple_melt_merge$netgr_pred),decreasing = T)])))

plotly=ggplot(twinstrand_simple_melt_merge%>%filter(duration%in%"d3d6"),aes(x=factor(mutant),y=netgr_obs,color=experiment))+geom_point()+cleanup

ggplotly(plotly)a=twinstrand_simple_melt_merge%>%filter(experiment%in%"M3",mutant%in%"T315I")

plotly=ggplot(twinstrand_simple_melt_merge%>%filter(experiment%in%c("M6","M3")),aes(x=factor(mutant),y=netgr_obs,color=duration))+geom_point()+facet_wrap(~experiment)+cleanup

ggplotly(plotly)plotly=ggplot(twinstrand_simple_melt_merge,aes(x=factor(mutant),y=netgr_obs,color=duration))+geom_point()+facet_wrap(~experiment)+cleanup

ggplotly(plotly)a=twinstrand_simple_melt_merge%>%

mutate(netgr_obs=case_when(experiment=="M6"~netgr_obs+.03,

experiment=="M5"~netgr_obs+.015,

experiment%in%c("M3","M5","M4","M7")~netgr_obs))

plotly=ggplot(a%>%filter(experiment%in%c("M6","M3")),aes(x=factor(mutant),y=netgr_obs,color=duration))+geom_point()+facet_wrap(~experiment)+cleanup

ggplotly(plotly)#Looks like F359I is at ALTDEPTH of 1 at D6 which is why it's so erroneous. Also notice that you only see E355A in 3 of the experiments. All of this points to the fact that scaling error bars on growth rates based on mutant coverage is a good idea.

a=twinstrand_maf_merge%>%filter(experiment%in%"M6",mutant%in%"F359I")

a=twinstrand_maf_merge%>%filter(experiment%in%"M6",!mutant%in%"NA",!tki_resistant_mutation%in%NA)

a=twinstrand_maf_merge%>%filter(experiment%in%"M4",!mutant%in%"NA",!tki_resistant_mutation%in%NA)

a=twinstrand_maf_merge%>%filter(experiment%in%"M7",mutant%in%"E459K")

#What even is the point of doing duplex sequencing if your sequencing coverage is 30,000?Trying to derive WT growth rates for MCMC 060520

#Getting IC50 predictions for %age alive WT at 1.25uM

# ic50data_long_wt=read.csv("../data/IC50HeatMap.csv",header = T,stringsAsFactors = F)

ic50data_long_wt=read.csv("data/IC50HeatMap.csv",header = T,stringsAsFactors = F)

ic50data_long_wt=ic50data_long_wt%>%filter(species=="WT")

wt_y_mean=mean(ic50data_long_wt$X1.25)

wt_y_sd=sd(ic50data_long_wt$X1.25)

#Getting observed WT net growth rate and hence %age alive

# a=twinstrand_maf_merge2%>%filter(experiment%in%"M3",mutant%in%"T315I")%>%mutate(wt_netgr=log(wt_count[time_point=="D6"]/wt_count[time_point=="D3"])/72)

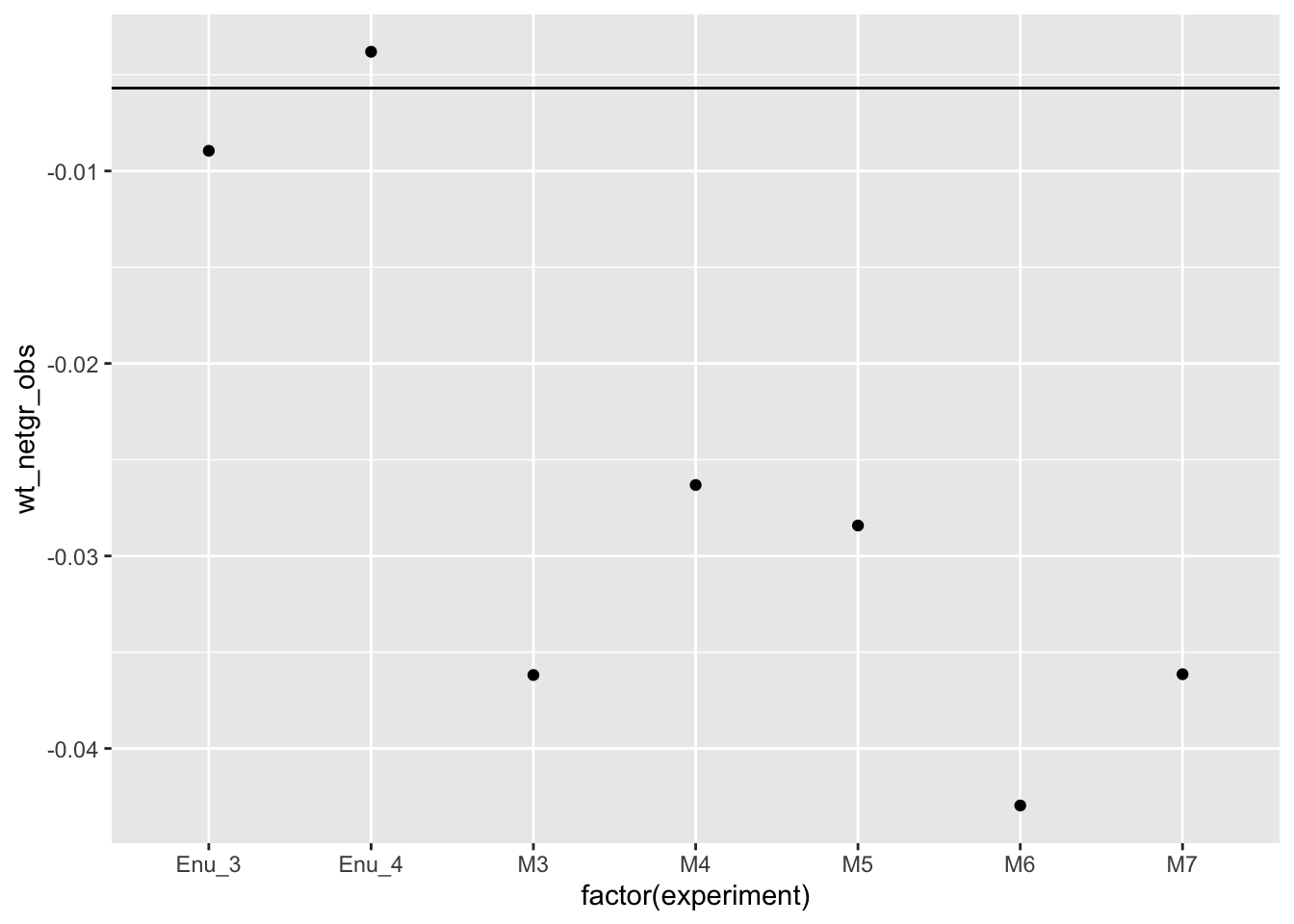

wt_df=twinstrand_maf_merge2%>%filter(mutant%in%"T315I")%>%group_by(experiment)%>%summarize(wt_netgr_obs=log(wt_count[time_point=="D6"]/wt_count[time_point=="D3"])/72)

wt_df=wt_df%>%mutate(netgr_wo_drug=0.055,drug_effect_wt=netgr_wo_drug-wt_netgr_obs)

write.csv(wt_df,"wildtype_growthrates_sequenced.csv")

wt_df=wt_df%>%mutate(y_wt_obs=exp(-(drug_effect_wt*72)),wt_y_mean_pred=wt_y_mean,wt_y_sd_pred=wt_y_sd,wt_drug_effect_mean=-log(wt_y_mean)/72,wt_netgr_mean=0.055-wt_drug_effect_mean)

ggplot(wt_df,aes(x=factor(experiment),y=wt_netgr_obs))+geom_point()+geom_hline(yintercept = wt_df$wt_netgr_mean[1])

sessionInfo()R version 4.0.0 (2020-04-24)

Platform: x86_64-apple-darwin17.0 (64-bit)

Running under: macOS Catalina 10.15.6

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.0/Resources/lib/libRblas.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.0/Resources/lib/libRlapack.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] reshape2_1.4.4 plotly_4.9.2.1 dplyr_0.8.5

[4] boot_1.3-24 lme4_1.1-23 Matrix_1.2-18

[7] fitdistrplus_1.0-14 npsurv_0.4-0.1 lsei_1.2-0.1

[10] survival_3.1-12 MASS_7.3-51.5 ggplot2_3.3.0

[13] lmtest_0.9-37 zoo_1.8-8

loaded via a namespace (and not attached):

[1] statmod_1.4.34 tidyselect_1.1.0 xfun_0.13 purrr_0.3.4

[5] splines_4.0.0 lattice_0.20-41 colorspace_1.4-1 vctrs_0.3.0

[9] viridisLite_0.3.0 htmltools_0.4.0 yaml_2.2.1 rlang_0.4.6

[13] later_1.0.0 pillar_1.4.4 nloptr_1.2.2.1 glue_1.4.1

[17] withr_2.2.0 plyr_1.8.6 lifecycle_0.2.0 stringr_1.4.0

[21] munsell_0.5.0 gtable_0.3.0 workflowr_1.6.2 htmlwidgets_1.5.1

[25] evaluate_0.14 labeling_0.3 knitr_1.28 crosstalk_1.1.0.1

[29] httpuv_1.5.2 Rcpp_1.0.4.6 promises_1.1.0 scales_1.1.1

[33] backports_1.1.7 jsonlite_1.6.1 farver_2.0.3 fs_1.4.1

[37] digest_0.6.25 stringi_1.4.6 grid_4.0.0 rprojroot_1.3-2

[41] tools_4.0.0 magrittr_1.5 lazyeval_0.2.2 tibble_3.0.1

[45] tidyr_1.0.3 crayon_1.3.4 whisker_0.4 pkgconfig_2.0.3

[49] ellipsis_0.3.1 data.table_1.12.8 httr_1.4.1 assertthat_0.2.1

[53] minqa_1.2.4 rmarkdown_2.1 R6_2.4.1 nlme_3.1-147

[57] git2r_0.27.1 compiler_4.0.0