Human Origins Array Global Data

jhmarcus

2019-03-04

Last updated: 2019-03-04

Checks: 5 passed, 1 warning

Knit directory: drift-workflow/analysis/

This reproducible R Markdown analysis was created with workflowr (version 1.2.0).

The R Markdown is untracked by Git. To know which version of the R Markdown file created these results, you’ll want to first commit it to the Git repo. If you’re still working on the analysis, you can ignore this warning. When you’re finished, you can run wflow_publish to commit the R Markdown file and build the HTML.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproducibility it’s best to always run the code in an empty environment.

The command set.seed(20190211) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility. The version displayed above was the version of the Git repository at the time these results were generated.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: analysis/.Rhistory

Ignored: analysis/flash_cache/

Ignored: data.tar.gz

Ignored: data/datasets/

Ignored: data/raw/

Ignored: output.tar.gz

Ignored: output/admixture/

Ignored: output/admixture_benchmark/

Ignored: output/flash_greedy/

Ignored: output/log/

Ignored: output/sim/

Untracked files:

Untracked: analysis/data_hoa_global.Rmd

Unstaged changes:

Modified: analysis/index.Rmd

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

There are no past versions. Publish this analysis with wflow_publish() to start tracking its development.

Imports

Let's import the packages we need:

library(ggplot2)

library(tidyr)

library(dplyr)

library(lfa)

Read Genotypes

Here I read the full genotype matrix of the Human Origins dataset:

Y = t(lfa:::read.bed("../data/raw/NearEastPublic/HumanOriginsPublic2068"))

[1] "reading in 2068 individuals"

[1] "reading in 621799 snps"

[1] "snp major mode"

[1] "reading snp 20000"

[1] "reading snp 40000"

[1] "reading snp 60000"

[1] "reading snp 80000"

[1] "reading snp 100000"

[1] "reading snp 120000"

[1] "reading snp 140000"

[1] "reading snp 160000"

[1] "reading snp 180000"

[1] "reading snp 200000"

[1] "reading snp 220000"

[1] "reading snp 240000"

[1] "reading snp 260000"

[1] "reading snp 280000"

[1] "reading snp 300000"

[1] "reading snp 320000"

[1] "reading snp 340000"

[1] "reading snp 360000"

[1] "reading snp 380000"

[1] "reading snp 400000"

[1] "reading snp 420000"

[1] "reading snp 440000"

[1] "reading snp 460000"

[1] "reading snp 480000"

[1] "reading snp 500000"

[1] "reading snp 520000"

[1] "reading snp 540000"

[1] "reading snp 560000"

[1] "reading snp 580000"

[1] "reading snp 600000"

[1] "reading snp 620000"

# number of individuals

n = nrow(Y)

# number of SNPs

p = ncol(Y)

Missingness per SNP

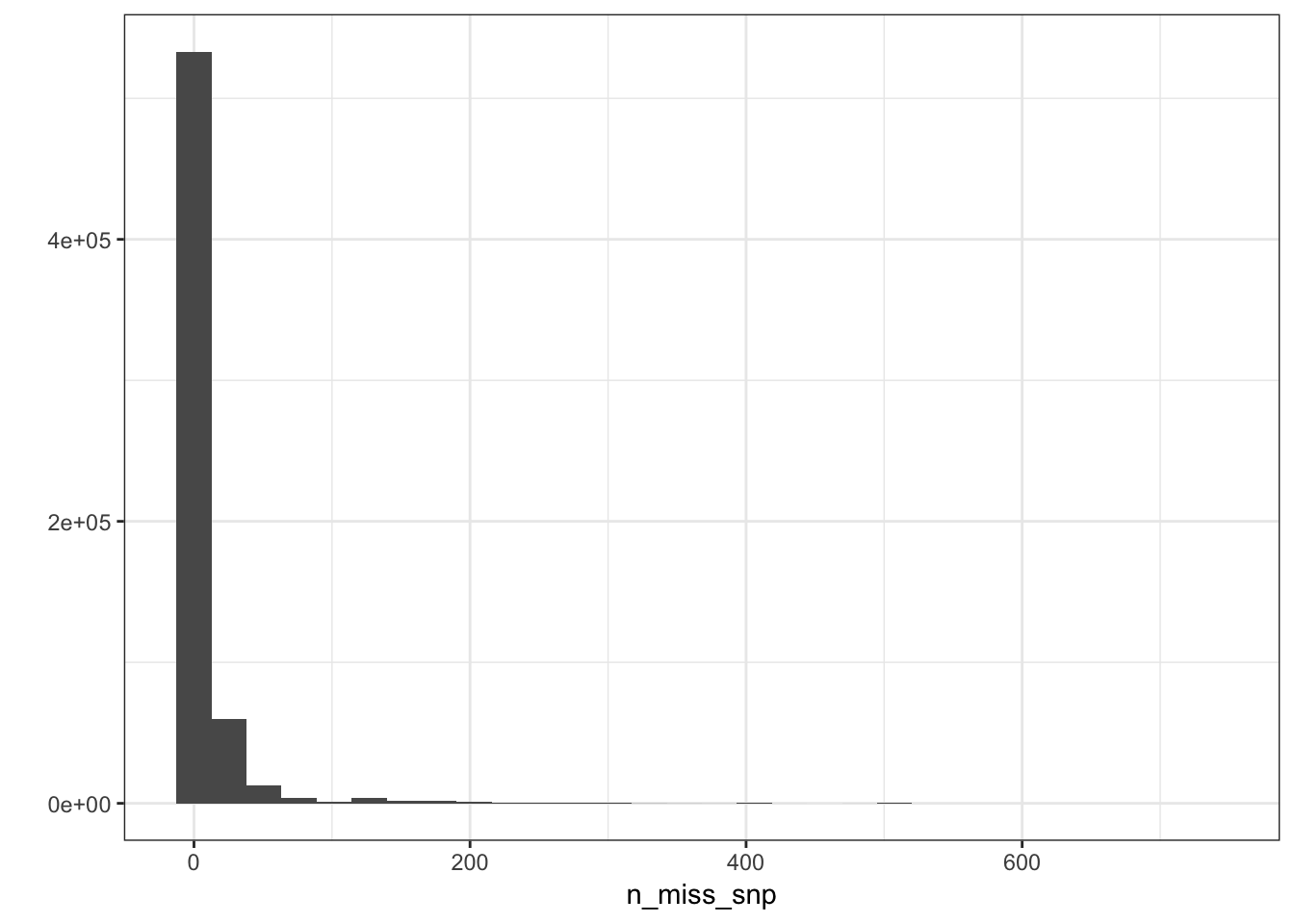

n_miss_snp = colSums(is.na(Y))

p_snpmss = qplot(n_miss_snp) + geom_histogram() + theme_bw()

p_snpmss

`stat_bin()` using `bins = 30`. Pick better value with `binwidth`.

`stat_bin()` using `bins = 30`. Pick better value with `binwidth`.

There are very few SNPs with high levels of missing data, so we can use a very stringent missingness threshold without losing much information.

sum(n_miss_snp==1)

[1] 74216

sum(n_miss_snp==2)

[1] 91135

sum(n_miss_snp==3)

[1] 86207

sum(n_miss_snp %in% 1:10)

[1] 481592

snp_idx = which(n_miss_snp <= 10)

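10 / n

[1] 0.00483559

Allowing at most 10 missing genotypes per SNP corresponds to a missingness cutoff of about 0.5%, i.e. a per-SNP call rate of at least 99.5%, which seems reasonable.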
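As a minimal sketch of the SNP filter described above, the snippet below applies the same count-based threshold to a small toy matrix. The helper name `filter_snps_by_missingness`, the toy data `Y_toy`, and the `max_missing` argument are hypothetical and only for illustration; they are not part of the analysis itself.

```r
# Toy genotype matrix: 6 individuals (rows) x 4 SNPs (columns), coded 0/1/2.
# matrix() fills column by column, so each row of values below is one SNP.
Y_toy <- matrix(
  c(0,  1,  2,  0,  1, 2,   # SNP 1: 0 missing
    NA, 1,  2,  0,  1, 2,   # SNP 2: 1 missing
    NA, NA, 2,  0,  1, 2,   # SNP 3: 2 missing
    0,  1,  NA, NA, NA, 2), # SNP 4: 3 missing
  nrow = 6, ncol = 4
)

# Hypothetical helper: keep SNPs with at most `max_missing` missing genotypes.
filter_snps_by_missingness <- function(Y, max_missing) {
  n_miss <- colSums(is.na(Y))           # missing genotypes per SNP
  keep <- which(n_miss <= max_missing)  # column indices passing the filter
  list(
    idx = keep,
    miss_rate = n_miss / nrow(Y),       # per-SNP missingness fraction
    Y = Y[, keep, drop = FALSE]         # filtered matrix, kept as a matrix
  )
}

res <- filter_snps_by_missingness(Y_toy, max_missing = 1)
```

On the real data the analogous call would use `max_missing = 10`, which matches `snp_idx = which(n_miss_snp <= 10)` above. Using `drop = FALSE` keeps the result a matrix even if only one SNP survives the filter.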

Missingness per Individual

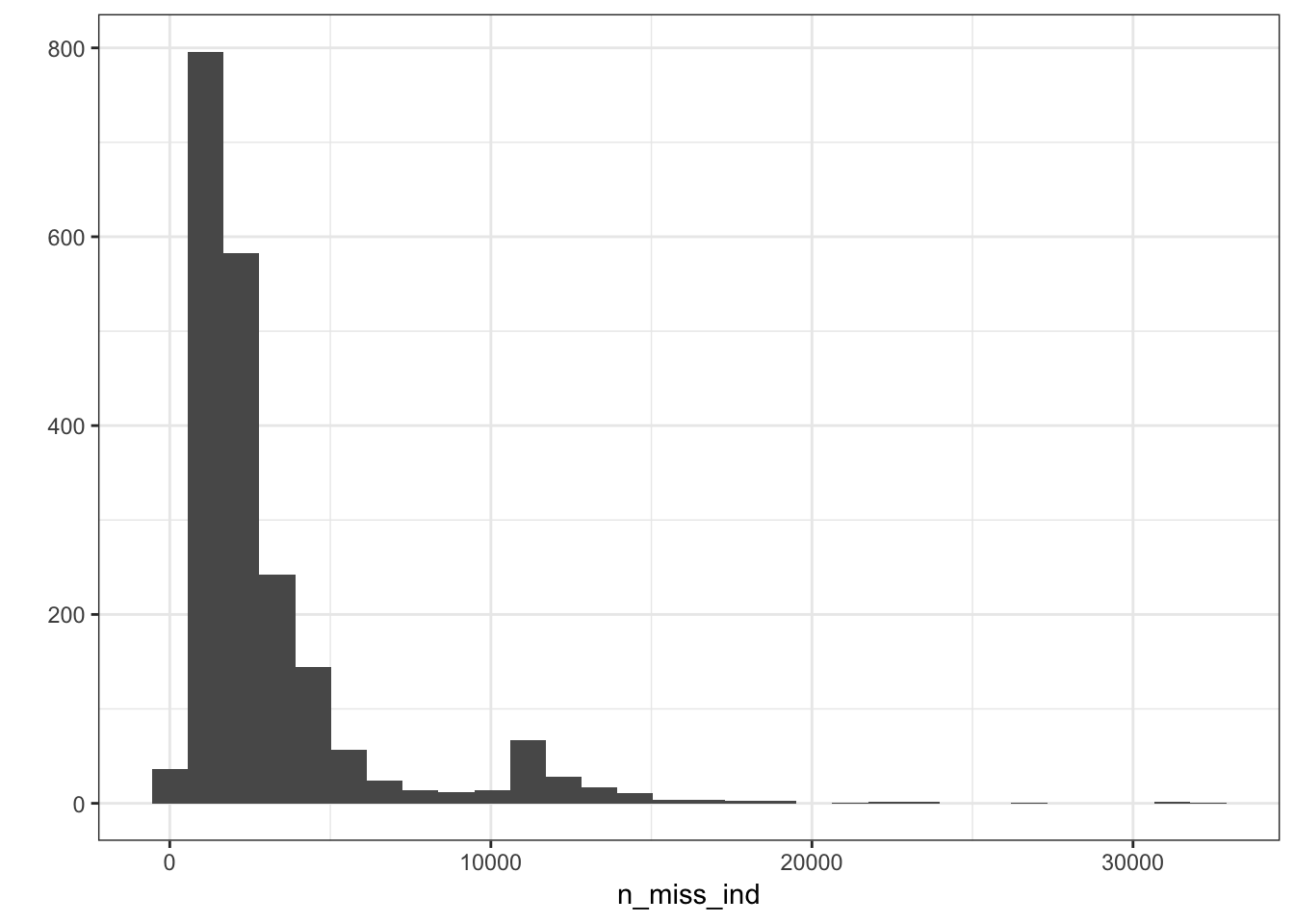

n_miss_ind = rowSums(is.na(Y))

p_indmss = qplot(n_miss_ind) + geom_histogram() + theme_bw()

p_indmss

`stat_bin()` using `bins = 30`. Pick better value with `binwidth`.

`stat_bin()` using `bins = 30`. Pick better value with `binwidth`.

sum(n_miss_ind > 20000)

[1] 9

20000 / p

[1] 0.03216473

Nine individuals are missing more than 20,000 genotypes, i.e. more than about 3% of their SNPs, which is a bit worrisome. Maybe they should be removed from the analysis? For now I will include them and see if they show up as outliers in the PCs.
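If these individuals do need to be revisited later, one way to keep track of them is to record their row indices by missingness fraction. The sketch below does this on toy data; the helper `flag_high_missing_individuals`, the matrix `Y_toy_ind`, and the 0.3 cutoff are hypothetical choices for illustration, not values used in this analysis.

```r
# Toy genotype matrix: 4 individuals (rows) x 5 SNPs (columns).
Y_toy_ind <- matrix(
  c(0,  1,  2,  0,  1,   # individual 1: 0/5 missing
    NA, NA, 2,  0,  1,   # individual 2: 2/5 missing
    0,  1,  2,  NA, 1,   # individual 3: 1/5 missing
    NA, NA, NA, 0,  1),  # individual 4: 3/5 missing
  nrow = 4, byrow = TRUE
)

# Hypothetical helper: row indices of individuals whose fraction of
# missing genotypes exceeds `max_frac`.
flag_high_missing_individuals <- function(Y, max_frac) {
  frac_miss <- rowMeans(is.na(Y))  # per-individual missingness fraction
  which(frac_miss > max_frac)
}

flagged <- flag_high_missing_individuals(Y_toy_ind, max_frac = 0.3)
```

On the full matrix, a cutoff of `20000 / p` would flag the same individuals as `n_miss_ind > 20000`, and the resulting indices could be used to highlight those samples in the PC plots.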

sessionInfo()

R version 3.5.1 (2018-07-02)

Platform: x86_64-apple-darwin13.4.0 (64-bit)

Running under: macOS 10.14.2

Matrix products: default

BLAS/LAPACK: /Users/jhmarcus/miniconda3/lib/R/lib/libRblas.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] lfa_1.12.0 dplyr_0.8.0.1 tidyr_0.8.2 ggplot2_3.1.0

loaded via a namespace (and not attached):

[1] Rcpp_1.0.0 compiler_3.5.1 pillar_1.3.1 git2r_0.23.0

[5] plyr_1.8.4 workflowr_1.2.0 tools_3.5.1 digest_0.6.18

[9] evaluate_0.12 tibble_2.0.1 gtable_0.2.0 pkgconfig_2.0.2

[13] rlang_0.3.1 yaml_2.2.0 xfun_0.4 withr_2.1.2

[17] stringr_1.4.0 knitr_1.21 fs_1.2.6 rprojroot_1.3-2

[21] grid_3.5.1 tidyselect_0.2.5 glue_1.3.0 R6_2.4.0

[25] rmarkdown_1.11 purrr_0.3.0 corpcor_1.6.9 magrittr_1.5

[29] backports_1.1.3 scales_1.0.0 htmltools_0.3.6 assertthat_0.2.0

[33] colorspace_1.4-0 labeling_0.3 stringi_1.2.4 lazyeval_0.2.1

[37] munsell_0.5.0 crayon_1.3.4