NFL attendance

Jim Gruman

February 4, 2020

Last updated: 2021-09-24

Checks: 7 0

Knit directory: myTidyTuesday/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20210907) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version cb9348b. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: catboost_info/

Ignored: data/2021-09-08/

Ignored: data/CNHI_Excel_Chart.xlsx

Ignored: data/CommunityTreemap.jpeg

Ignored: data/Community_Roles.jpeg

Ignored: data/YammerDigitalDataScienceMembership.xlsx

Ignored: data/acs_poverty.rds

Ignored: data/fmhpi.rds

Ignored: data/grainstocks.rds

Ignored: data/hike_data.rds

Ignored: data/us_states.rds

Ignored: data/us_states_hexgrid.geojson

Ignored: data/weatherstats_toronto_daily.csv

Untracked files:

Untracked: code/YammerReach.R

Untracked: code/work list batch targets.R

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/NFLattendance.Rmd) and HTML (docs/NFLattendance.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | cb9348b | opus1993 | 2021-09-24 | adopt dots on weekly attendance plot |

| html | bb0b0d2 | opus1993 | 2021-09-09 | Build site. |

| Rmd | 239143c | opus1993 | 2021-09-09 | adopt common theme |

| html | fed0b95 | opus1993 | 2021-09-09 | Build site. |

| Rmd | c3fea06 | opus1993 | 2021-09-09 | adopt common theme |

suppressPackageStartupMessages({

library(tidyverse)

library(tidymodels)

})

source(here::here("code","_common.R"),

verbose = FALSE,

local = knitr::knit_global())

ggplot2::theme_set(theme_jim(base_size = 12))Let’s build a very simple model for NFL attendance

Explore data

Load the attendance and team standings data from Github

attendance <- read_csv("https://raw.githubusercontent.com/rfordatascience/tidytuesday/master/data/2020/2020-02-04/attendance.csv")

standings <- read_csv("https://raw.githubusercontent.com/rfordatascience/tidytuesday/master/data/2020/2020-02-04/standings.csv")

attendance_joined <- attendance %>%

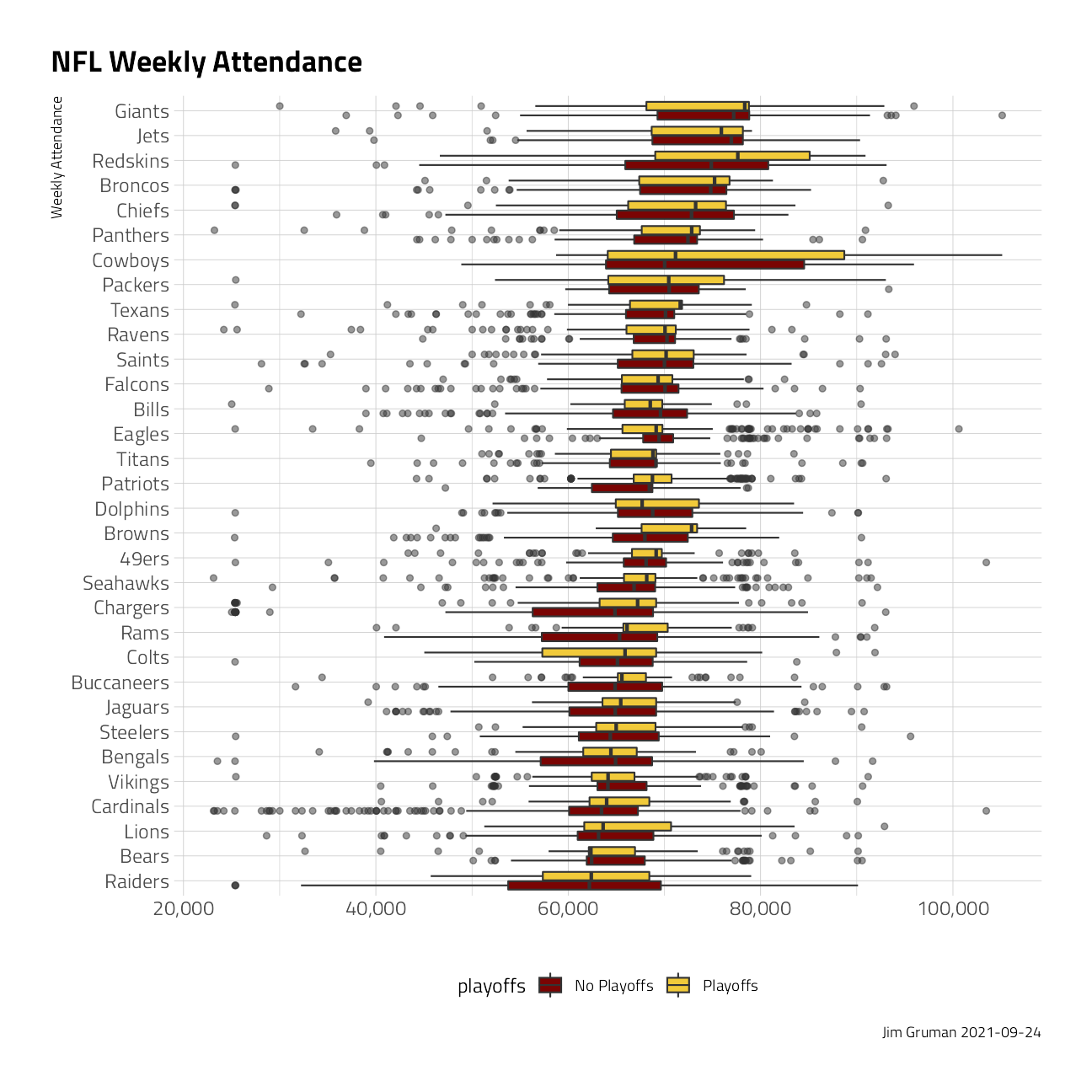

left_join(standings, by = c("year", "team_name", "team"))Explore the files and look for trends. In this boxplot visual, some teams certainly expect higher weekly attendance.

attendance_joined %>%

filter(!is.na(weekly_attendance)) %>%

ggplot(aes(

y = fct_reorder(team_name, weekly_attendance),

x = weekly_attendance,

fill = playoffs

)) +

geom_boxplot(outlier.alpha = 0.5) +

scale_x_continuous(labels = scales::label_comma()) +

labs(

title = "NFL Weekly Attendance",

caption = paste0("Jim Gruman ", Sys.Date()),

x = "",

y = "Weekly Attendance"

) +

theme(legend.position = "bottom")

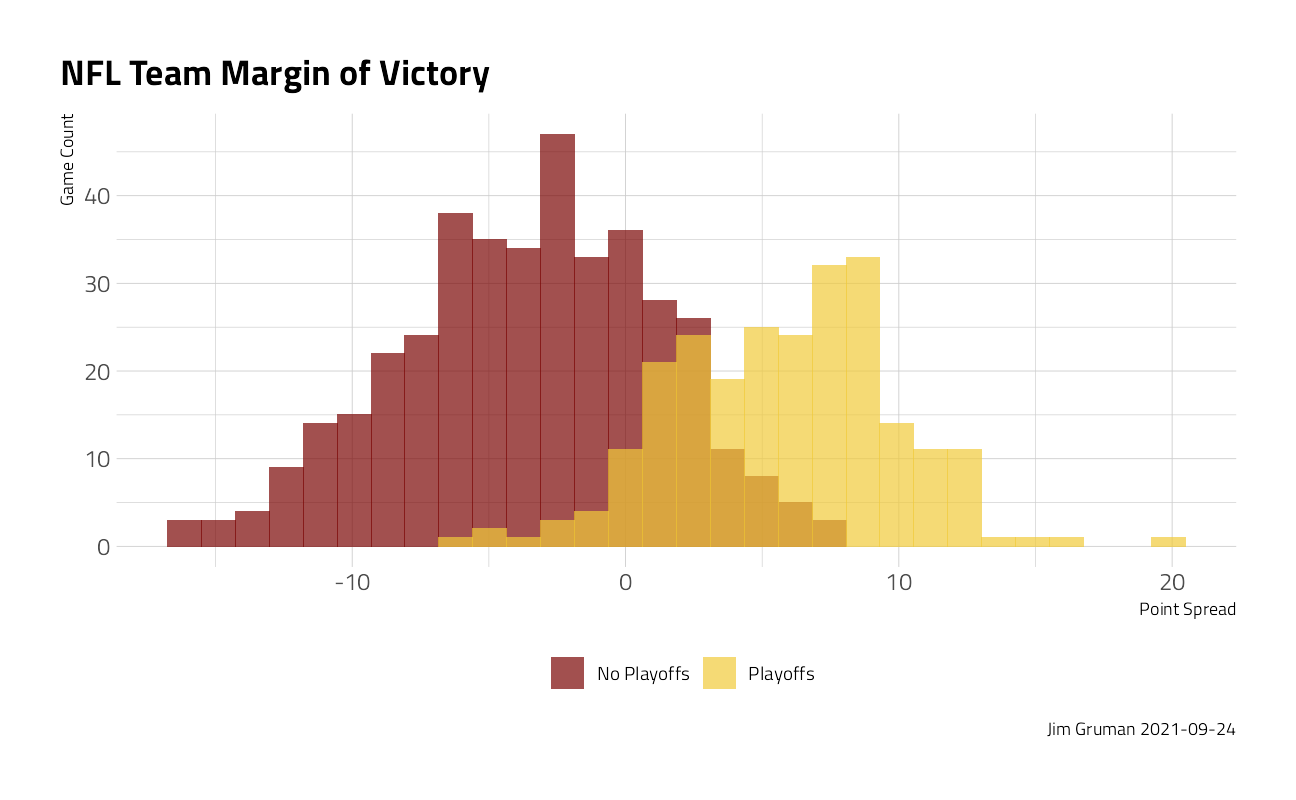

In this histogram, playoff-bound teams generally have higher point spread margins over the course of many games.

attendance_joined %>%

distinct(team_name, year, margin_of_victory, playoffs) %>%

ggplot(aes(margin_of_victory, fill = playoffs)) +

geom_histogram(position = "identity", alpha = 0.7) +

labs(

title = "NFL Team Margin of Victory",

fill = NULL,

caption = paste0("Jim Gruman ", Sys.Date()),

x = "Point Spread", y = "Game Count"

) +

theme(legend.position = "bottom")

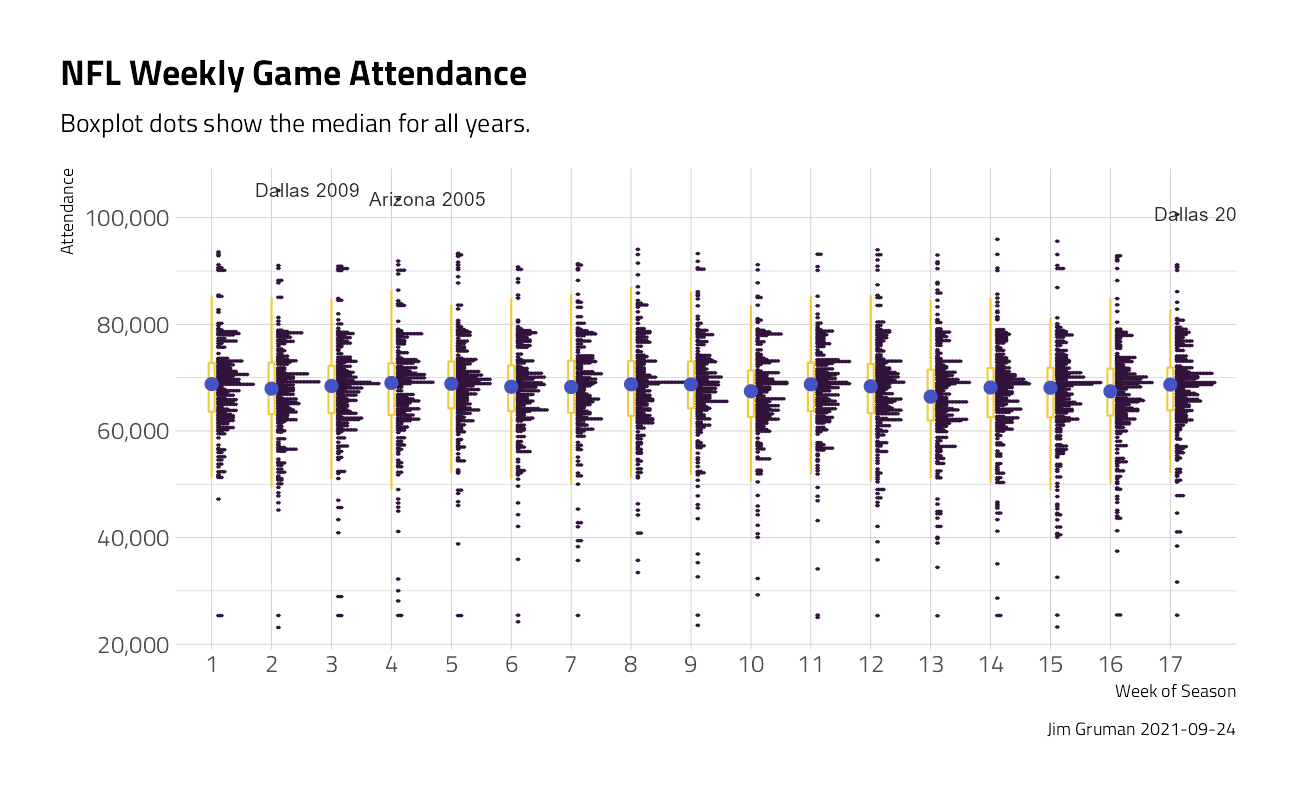

Across the weeks of the season, this data visualization shows the distribution of attendance by week number.

attendance_joined %>%

ggplot(aes(

x = factor(week),

y = weekly_attendance

)) +

ggdist::stat_dots(

side = "top",

justification = -0.1,

binwidth = 250,

show.legend = FALSE,

color = "#30123BFF"

) +

geom_boxplot(

width = 0.1,

outlier.shape = NA,

show.legend = FALSE,

color = "#F1CA3AFF"

) +

geom_text(

data = . %>% filter(weekly_attendance > 100000),

aes(label = glue::glue("{team} {year}")),

check_overlap = TRUE,

nudge_x = .6,

face = "bold",

show.legend = FALSE

) +

stat_summary(

fun = median,

color = "#4454C4FF",

show.legend = FALSE

) +

scale_y_continuous(labels = scales::label_comma()) +

labs(

title = "NFL Weekly Game Attendance",

subtitle = "Boxplot dots show the median for all years.",

caption = paste0("Jim Gruman ", Sys.Date()),

x = "Week of Season",

y = "Attendance"

)

To build models for the prediction of weekly attendance, we will select for features arbitrarily on the team_name, the year, the week of the game, and the margin of victory.

attendance_df <- attendance_joined %>%

filter(!is.na(weekly_attendance)) %>%

dplyr::select(

weekly_attendance, team_name, year, week,

margin_of_victory, strength_of_schedule, playoffs

)Train a Model

First, the data are split into training and testing sets at about 75/25, stratifying for similar playoff outcomes in both.

attendance_split <- attendance_df %>%

initial_split(strata = playoffs)

nfl_train <- training(attendance_split)

nfl_test <- testing(attendance_split)A simple linear model is specified and fit, and the estimates of coefficients are shown here:

lm_spec <- linear_reg(mode = "regression") %>%

set_engine(engine = "lm")

lm_fit <- lm_spec %>%

fit(weekly_attendance ~ ., data = nfl_train)

tidy(lm_fit) %>%

arrange(-p.value) %>%

filter(p.value < 0.05) %>%

knitr::kable()| term | estimate | std.error | statistic | p.value |

|---|---|---|---|---|

| team_namePanthers | 1788.47742 | 766.51134 | 2.333269 | 0.0196599 |

| team_nameEagles | 1867.67406 | 781.27721 | 2.390540 | 0.0168478 |

| (Intercept) | -81456.07886 | 33471.27892 | -2.433611 | 0.0149719 |

| team_nameChiefs | 2123.44169 | 771.24254 | 2.753274 | 0.0059142 |

| team_nameRams | -2619.25130 | 757.80765 | -3.456354 | 0.0005505 |

| team_nameBroncos | 2772.20172 | 764.62900 | 3.625551 | 0.0002902 |

| strength_of_schedule | 222.75149 | 61.34503 | 3.631126 | 0.0002840 |

| team_nameLions | -2864.45966 | 760.61254 | -3.765991 | 0.0001671 |

| team_nameVikings | -2991.38247 | 768.17257 | -3.894154 | 0.0000994 |

| team_nameBears | -3080.42995 | 773.12053 | -3.984411 | 0.0000683 |

| team_nameJaguars | -3120.72506 | 766.39104 | -4.071975 | 0.0000471 |

| team_nameSteelers | -3311.75689 | 766.55313 | -4.320323 | 0.0000158 |

| week | -86.43370 | 19.26394 | -4.486813 | 0.0000073 |

| year | 74.82422 | 16.65373 | 4.492941 | 0.0000071 |

| team_nameColts | -3449.02877 | 764.99840 | -4.508544 | 0.0000066 |

| team_nameBuccaneers | -3516.29291 | 766.37815 | -4.588196 | 0.0000045 |

| team_nameBengals | -4321.88943 | 773.42204 | -5.588009 | 0.0000000 |

| team_nameJets | 4745.24063 | 768.62954 | 6.173638 | 0.0000000 |

| margin_of_victory | 146.78930 | 23.15908 | 6.338305 | 0.0000000 |

| team_nameChargers | -5655.03038 | 758.66937 | -7.453880 | 0.0000000 |

| team_nameRedskins | 5931.63951 | 767.66935 | 7.726816 | 0.0000000 |

| team_nameRaiders | -6343.25992 | 775.39938 | -8.180636 | 0.0000000 |

| team_nameGiants | 6407.18859 | 769.46531 | 8.326806 | 0.0000000 |

| team_nameCardinals | -6470.53879 | 758.95065 | -8.525638 | 0.0000000 |

| team_nameCowboys | 6613.72537 | 763.52992 | 8.662038 | 0.0000000 |

A comparable random forest regression is specified and fit here:

rf_spec <- rand_forest(mode = "regression") %>%

set_engine(engine = "ranger")

rf_fit <- rf_spec %>%

fit(weekly_attendance ~ ., data = nfl_train)

tidy(lm_fit) %>%

arrange(-estimate) %>%

knitr::kable()| term | estimate | std.error | statistic | p.value |

|---|---|---|---|---|

| team_nameCowboys | 6613.72537 | 763.52992 | 8.6620382 | 0.0000000 |

| team_nameGiants | 6407.18859 | 769.46531 | 8.3268063 | 0.0000000 |

| team_nameRedskins | 5931.63951 | 767.66935 | 7.7268156 | 0.0000000 |

| team_nameJets | 4745.24063 | 768.62954 | 6.1736382 | 0.0000000 |

| team_nameBroncos | 2772.20172 | 764.62900 | 3.6255514 | 0.0002902 |

| team_nameChiefs | 2123.44169 | 771.24254 | 2.7532736 | 0.0059142 |

| team_nameEagles | 1867.67406 | 781.27721 | 2.3905395 | 0.0168478 |

| team_namePanthers | 1788.47742 | 766.51134 | 2.3332694 | 0.0196599 |

| team_namePackers | 971.42811 | 760.29413 | 1.2777004 | 0.2013940 |

| team_nameSaints | 637.54436 | 762.73835 | 0.8358625 | 0.4032585 |

| team_nameTexans | 355.51809 | 785.64903 | 0.4525152 | 0.6509108 |

| team_nameDolphins | 332.35203 | 762.20818 | 0.4360384 | 0.6628212 |

| strength_of_schedule | 222.75149 | 61.34503 | 3.6311255 | 0.0002840 |

| team_nameBrowns | 222.40629 | 773.64517 | 0.2874784 | 0.7737538 |

| margin_of_victory | 146.78930 | 23.15908 | 6.3383047 | 0.0000000 |

| year | 74.82422 | 16.65373 | 4.4929414 | 0.0000071 |

| team_nameFalcons | -68.93885 | 773.47578 | -0.0891286 | 0.9289820 |

| week | -86.43370 | 19.26394 | -4.4868125 | 0.0000073 |

| playoffsPlayoffs | -211.03783 | 288.70801 | -0.7309732 | 0.4648180 |

| team_nameRavens | -278.23920 | 772.80223 | -0.3600393 | 0.7188277 |

| team_nameBills | -416.98841 | 763.14401 | -0.5464085 | 0.5848011 |

| team_namePatriots | -545.67186 | 786.66152 | -0.6936552 | 0.4879196 |

| team_nameTitans | -807.91944 | 762.16059 | -1.0600383 | 0.2891608 |

| team_nameSeahawks | -1442.91948 | 779.67975 | -1.8506566 | 0.0642577 |

| team_nameRams | -2619.25130 | 757.80765 | -3.4563537 | 0.0005505 |

| team_nameLions | -2864.45966 | 760.61254 | -3.7659906 | 0.0001671 |

| team_nameVikings | -2991.38247 | 768.17257 | -3.8941542 | 0.0000994 |

| team_nameBears | -3080.42995 | 773.12053 | -3.9844110 | 0.0000683 |

| team_nameJaguars | -3120.72506 | 766.39104 | -4.0719749 | 0.0000471 |

| team_nameSteelers | -3311.75689 | 766.55313 | -4.3203227 | 0.0000158 |

| team_nameColts | -3449.02877 | 764.99840 | -4.5085438 | 0.0000066 |

| team_nameBuccaneers | -3516.29291 | 766.37815 | -4.5881957 | 0.0000045 |

| team_nameBengals | -4321.88943 | 773.42204 | -5.5880091 | 0.0000000 |

| team_nameChargers | -5655.03038 | 758.66937 | -7.4538799 | 0.0000000 |

| team_nameRaiders | -6343.25992 | 775.39938 | -8.1806358 | 0.0000000 |

| team_nameCardinals | -6470.53879 | 758.95065 | -8.5256383 | 0.0000000 |

| (Intercept) | -81456.07886 | 33471.27892 | -2.4336112 | 0.0149719 |

Evaluate Models

results_train <- lm_fit %>%

predict(new_data = nfl_train) %>%

mutate(

truth = nfl_train$weekly_attendance,

model = "lm"

) %>%

bind_rows(rf_fit %>%

predict(new_data = nfl_train) %>%

mutate(

truth = nfl_train$weekly_attendance,

model = "rf"

))

results_test <- lm_fit %>%

predict(new_data = nfl_test) %>%

mutate(

truth = nfl_test$weekly_attendance,

model = "lm"

) %>%

bind_rows(rf_fit %>%

predict(new_data = nfl_test) %>%

mutate(

truth = nfl_test$weekly_attendance,

model = "rf"

))On the training dataset

results_train %>%

group_by(model) %>%

rmse(truth = truth, estimate = .pred) %>%

knitr::kable()| model | .metric | .estimator | .estimate |

|---|---|---|---|

| lm | rmse | standard | 8367.940 |

| rf | rmse | standard | 6079.739 |

On the testing data:

results_test %>%

group_by(model) %>%

rmse(truth = truth, estimate = .pred) %>%

knitr::kable()| model | .metric | .estimator | .estimate |

|---|---|---|---|

| lm | rmse | standard | 8170.598 |

| rf | rmse | standard | 8580.718 |

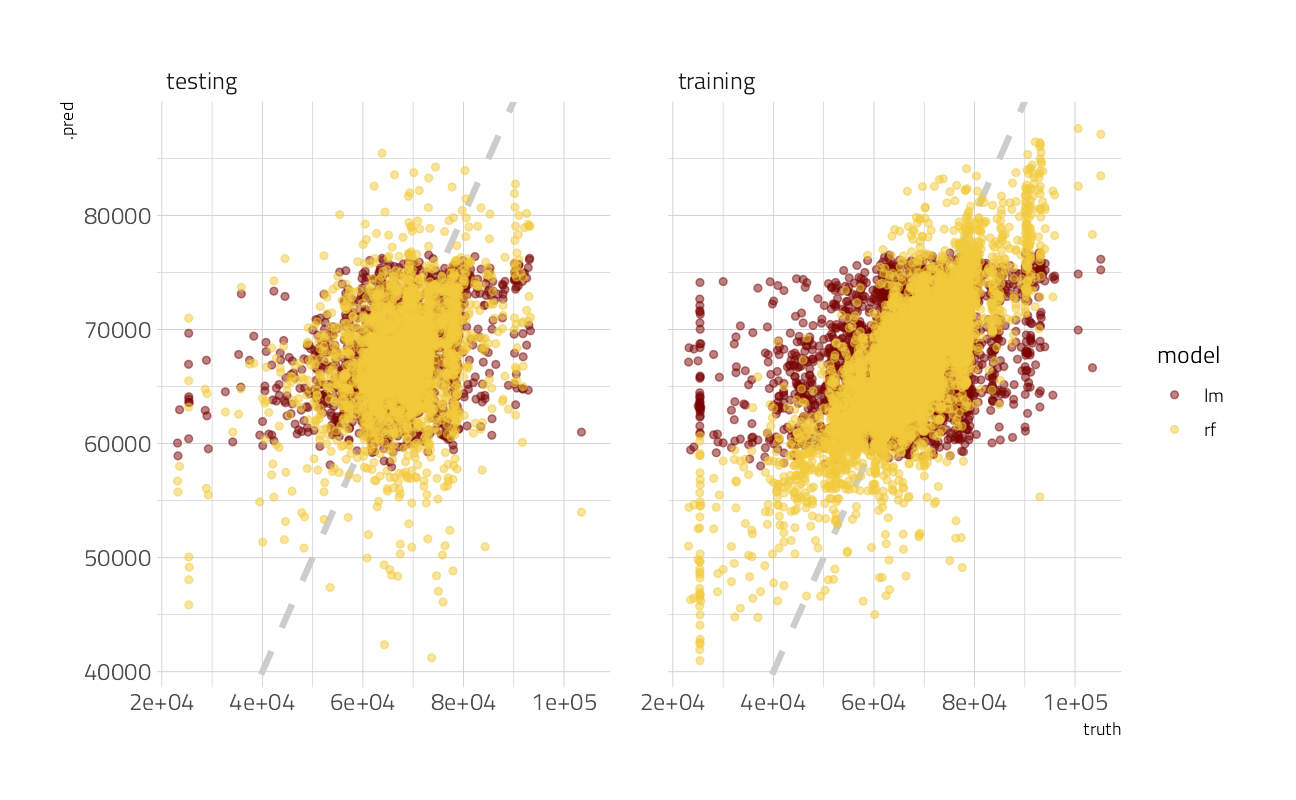

The random forest model here appears to overfit the training data set, with disappointing results on new data.

results_test %>%

mutate(train = "testing") %>%

bind_rows(results_train %>%

mutate(train = "training")) %>%

ggplot(aes(truth, .pred, color = model)) +

geom_abline(lty = 2, color = "gray80", size = 1.5) +

geom_point(alpha = 0.5) +

facet_wrap(~train) ### Lets try again, with resampling on the training

### Lets try again, with resampling on the training

all_cores <- parallelly::availableCores(omit = 1)

all_coressystem

11 future::plan("multisession", workers = all_cores) # on Windows

nfl_folds <- vfold_cv(nfl_train, strata = playoffs)

rf_res <- fit_resamples(

workflow(weekly_attendance ~ ., rf_spec),

nfl_folds,

control = control_resamples(save_pred = TRUE)

)

rf_res %>%

collect_metrics() %>%

knitr::kable()| .metric | .estimator | mean | n | std_err | .config |

|---|---|---|---|---|---|

| rmse | standard | 8674.5906122 | 10 | 110.4737449 | Preprocessor1_Model1 |

| rsq | standard | 0.1248592 | 10 | 0.0080805 | Preprocessor1_Model1 |

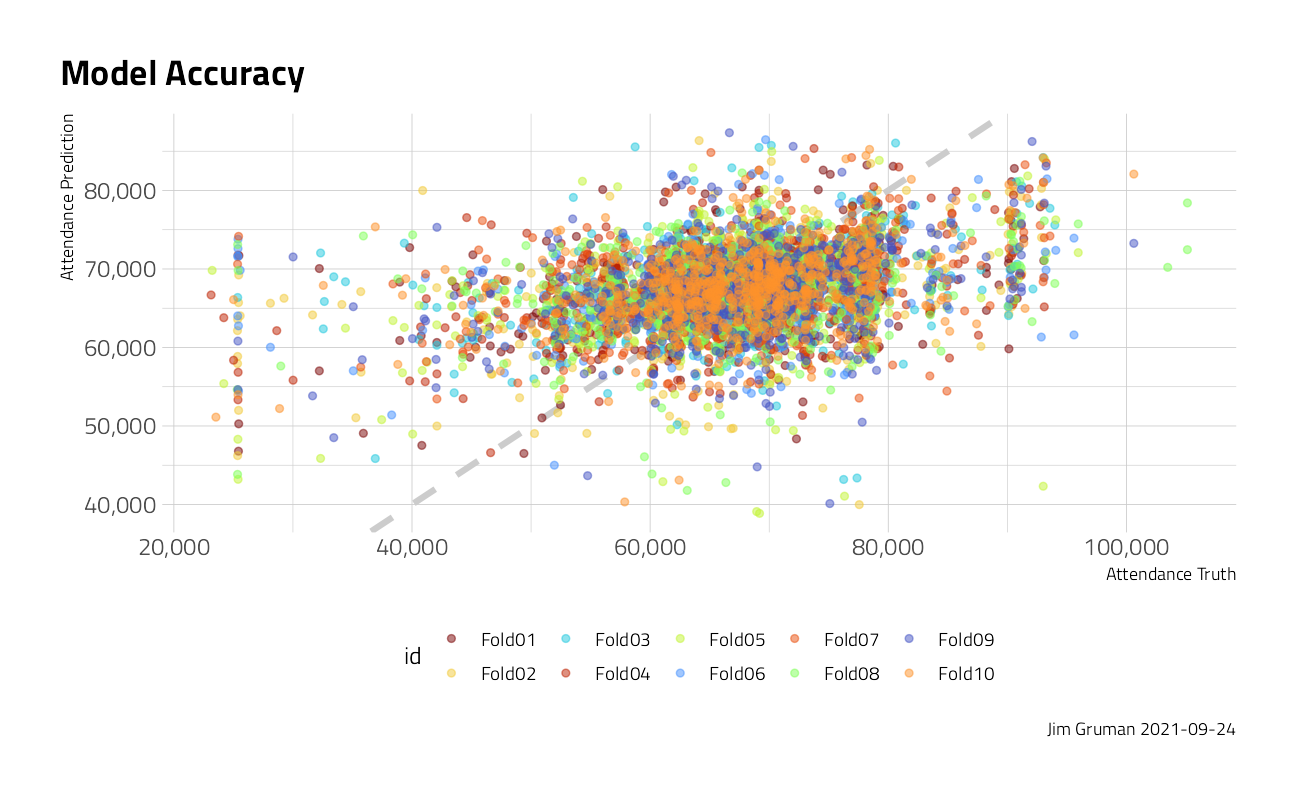

rf_res %>%

unnest(.predictions) %>%

ggplot(aes(weekly_attendance, .pred, color = id)) +

geom_abline(lty = 2, color = "gray80", size = 1.5) +

geom_point(alpha = 0.5) +

labs(

title = "Model Accuracy",

caption = paste0("Jim Gruman ", Sys.Date()),

x = "Attendance Truth", y = "Attendance Prediction"

) +

theme(legend.position = "bottom") +

scale_y_continuous(labels = scales::label_comma()) +

scale_x_continuous(labels = scales::label_comma())

After resampling, the root mean squared error of the random forest model on test data is improved only marginally, compared to the conventional linear model.

Credits: Julia Silge, RStudio Thomas Mock, RStudio

sessionInfo()R version 4.1.1 (2021-08-10)

Platform: x86_64-w64-mingw32/x64 (64-bit)

Running under: Windows 10 x64 (build 19043)

Matrix products: default

locale:

[1] LC_COLLATE=English_United States.1252

[2] LC_CTYPE=English_United States.1252

[3] LC_MONETARY=English_United States.1252

[4] LC_NUMERIC=C

[5] LC_TIME=English_United States.1252

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] ranger_0.13.1 vctrs_0.3.8 rlang_0.4.11 yardstick_0.0.8

[5] workflowsets_0.1.0 workflows_0.2.3 tune_0.1.6 rsample_0.1.0

[9] recipes_0.1.16 parsnip_0.1.7.900 modeldata_0.1.1 infer_1.0.0

[13] dials_0.0.10 scales_1.1.1 broom_0.7.9 tidymodels_0.1.3

[17] forcats_0.5.1 stringr_1.4.0 dplyr_1.0.7 purrr_0.3.4

[21] readr_2.0.1 tidyr_1.1.3 tibble_3.1.4 ggplot2_3.3.5

[25] tidyverse_1.3.1 workflowr_1.6.2

loaded via a namespace (and not attached):

[1] readxl_1.3.1 backports_1.2.1 systemfonts_1.0.2

[4] plyr_1.8.6 splines_4.1.1 listenv_0.8.0

[7] digest_0.6.27 foreach_1.5.1 htmltools_0.5.2

[10] viridis_0.6.1 fansi_0.5.0 magrittr_2.0.1

[13] tzdb_0.1.2 globals_0.14.0 modelr_0.1.8

[16] gower_0.2.2 extrafont_0.17 vroom_1.5.5

[19] R.utils_2.10.1 extrafontdb_1.0 hardhat_0.1.6

[22] colorspace_2.0-2 rvest_1.0.1 ggdist_3.0.0

[25] textshaping_0.3.5 haven_2.4.3 xfun_0.26

[28] crayon_1.4.1 jsonlite_1.7.2 survival_3.2-11

[31] iterators_1.0.13 glue_1.4.2 gtable_0.3.0

[34] ipred_0.9-12 distributional_0.2.2 R.cache_0.15.0

[37] Rttf2pt1_1.3.9 future.apply_1.8.1 DBI_1.1.1

[40] Rcpp_1.0.7 viridisLite_0.4.0 bit_4.0.4

[43] GPfit_1.0-8 lava_1.6.10 prodlim_2019.11.13

[46] httr_1.4.2 ellipsis_0.3.2 farver_2.1.0

[49] pkgconfig_2.0.3 R.methodsS3_1.8.1 nnet_7.3-16

[52] sass_0.4.0 dbplyr_2.1.1 utf8_1.2.2

[55] here_1.0.1 labeling_0.4.2 tidyselect_1.1.1

[58] DiceDesign_1.9 later_1.3.0 munsell_0.5.0

[61] cellranger_1.1.0 tools_4.1.1 cachem_1.0.6

[64] cli_3.0.1 generics_0.1.0 evaluate_0.14

[67] fastmap_1.1.0 yaml_2.2.1 ragg_1.1.3

[70] rematch2_2.1.2 bit64_4.0.5 knitr_1.34

[73] fs_1.5.0 future_1.22.1 whisker_0.4

[76] R.oo_1.24.0 xml2_1.3.2 compiler_4.1.1

[79] rstudioapi_0.13 curl_4.3.2 reprex_2.0.1

[82] lhs_1.1.3 bslib_0.3.0 stringi_1.7.4

[85] highr_0.9 gdtools_0.2.3 hrbrthemes_0.8.0

[88] lattice_0.20-44 Matrix_1.3-4 styler_1.6.1

[91] conflicted_1.0.4 pillar_1.6.2 lifecycle_1.0.1

[94] furrr_0.2.3 jquerylib_0.1.4 httpuv_1.6.3

[97] R6_2.5.1 promises_1.2.0.1 gridExtra_2.3

[100] parallelly_1.28.1 codetools_0.2-18 MASS_7.3-54

[103] assertthat_0.2.1 rprojroot_2.0.2 withr_2.4.2

[106] parallel_4.1.1 hms_1.1.0 grid_4.1.1

[109] rpart_4.1-15 timeDate_3043.102 class_7.3-19

[112] rmarkdown_2.11 git2r_0.28.0 pROC_1.18.0

[115] lubridate_1.7.10