Simple examples of the Metropolis-Hastings algorithm

Matthew Stephens

University of ChicagoJanuary 23, 2026

Last updated: 2026-01-23

Checks: 7 0

Knit directory: fiveMinuteStats/analysis/

This reproducible R Markdown analysis was created with workflowr (version 1.7.1). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(12345) was run prior to running the

code in the R Markdown file. Setting a seed ensures that any results

that rely on randomness, e.g. subsampling or permutations, are

reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 7007f5b. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for

the analysis have been committed to Git prior to generating the results

(you can use wflow_publish or

wflow_git_commit). workflowr only checks the R Markdown

file, but you know if there are other scripts or data files that it

depends on. Below is the status of the Git repository when the results

were generated:

working directory clean

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were

made to the R Markdown (analysis/MH-examples1.Rmd) and HTML

(docs/MH-examples1.html) files. If you’ve configured a

remote Git repository (see ?wflow_git_remote), click on the

hyperlinks in the table below to view the files as they were in that

past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | 7007f5b | Peter Carbonetto | 2026-01-23 | wflow_publish("analysis/MH-examples1.Rmd") |

| html | db49bb6 | Peter Carbonetto | 2026-01-23 | Ran wflow_publish("analysis/MH-examples1.Rmd"). |

| Rmd | 3e3246e | Peter Carbonetto | 2026-01-23 | Updates to the rest of MH-examples1 vignette. |

| Rmd | c88c3e6 | Peter Carbonetto | 2026-01-23 | Updates to Example 2 from the MH-examples1 vignette. |

| Rmd | ddbf10a | Peter Carbonetto | 2026-01-23 | Updated to example 1 in MH-examples1 vignette. |

| Rmd | 8fa8658 | Peter Carbonetto | 2026-01-23 | Added rule for MH-examples1 vignette. |

| html | 60908f5 | Matthew Stephens | 2023-07-18 | Build site. |

| Rmd | 366ef4e | Matthew Stephens | 2023-07-18 | workflowr::wflow_publish("analysis/MH-examples1.Rmd") |

| html | c6b20e2 | Matthew Stephens | 2022-04-26 | Build site. |

| Rmd | 09da4d4 | Matthew Stephens | 2022-04-26 | workflowr::wflow_publish("MH-examples1.Rmd") |

| html | 1543692 | Matthew Stephens | 2022-04-26 | Build site. |

| Rmd | dadc549 | Matthew Stephens | 2022-04-26 | workflowr::wflow_publish("MH-examples1.Rmd") |

| html | 5f62ee6 | Matthew Stephens | 2019-03-31 | Build site. |

| Rmd | 0cd28bd | Matthew Stephens | 2019-03-31 | workflowr::wflow_publish(all = TRUE) |

| html | 34bcc51 | John Blischak | 2017-03-06 | Build site. |

| Rmd | c7339fc | John Blischak | 2017-03-06 | Minor updates. |

| Rmd | 5fbc8b5 | John Blischak | 2017-03-06 | Update workflowr project with wflow_update (version 0.4.0). |

| Rmd | 391ba3c | John Blischak | 2017-03-06 | Remove front and end matter of non-standard templates. |

| html | 8e61683 | Marcus Davy | 2017-03-03 | rendered html using wflow_build(all=TRUE) |

| Rmd | d674141 | Marcus Davy | 2017-02-26 | typos, refs |

| html | a0309bf | stephens999 | 2017-02-16 | Build site. |

| Rmd | 0a96ec4 | stephens999 | 2017-02-16 | Files commited by wflow_commit. |

| html | d54f326 | stephens999 | 2017-02-15 | Build site. |

| Rmd | 0207794 | stephens999 | 2017-02-15 | Files commited by wflow_commit. |

| html | ad91ca8 | stephens999 | 2017-01-25 | update html |

| Rmd | 1997420 | stephens999 | 2017-01-25 | Files commited by wflow_commit. |

| html | ea1f50e | stephens999 | 2017-01-25 | update MH examples |

| Rmd | ba83f29 | stephens999 | 2017-01-24 | Files commited by wflow_commit. |

| html | 86ebaed | stephens999 | 2017-01-24 | Build site. |

| Rmd | 17331b4 | stephens999 | 2017-01-24 | Files commited by wflow_commit. |

See here for a PDF version of this vignette.

Prerequisites

You should be familiar with the Metropolis-Hastings algorithm, introduced here, and elaborated on here.

Caveat on code

Note that the code is designed to be readable by a beginner, rather than “efficient”. The idea is that you can use this code to learn about the basics of MCMC, but not as a model for how to program well in R!

Example 1: Sampling from an exponential distribution using MCMC

Any MCMC scheme aims to produce (dependent) samples from a “target” distribution. In this case we are going to use the exponential distribution with mean 1 as our target distribution. Here we define this function (on the log scale):

log_exp_target <- function (x)

dexp(x,rate = 1,log = TRUE)The following code implements a simple M-H algorithm. (Note that the parameter “log_target” is a function which computes the log of the target distribution; you may be unfamiliar with the idea of passing a function as a parameter, but it works just like any other type of parameter)

easyMCMC <- function (log_target, niter, startval, proposalsd) {

x <- rep(0,niter)

x[1] <- startval

for (i in 2:niter) {

currentx <- x[i-1]

proposedx <- rnorm(1,currentx,proposalsd)

A <- exp(log_target(proposedx) - log_target(currentx))

u <- runif(1)

if(u < A)

x[i] <- proposedx

else

x[i] <- currentx

}

return(x)

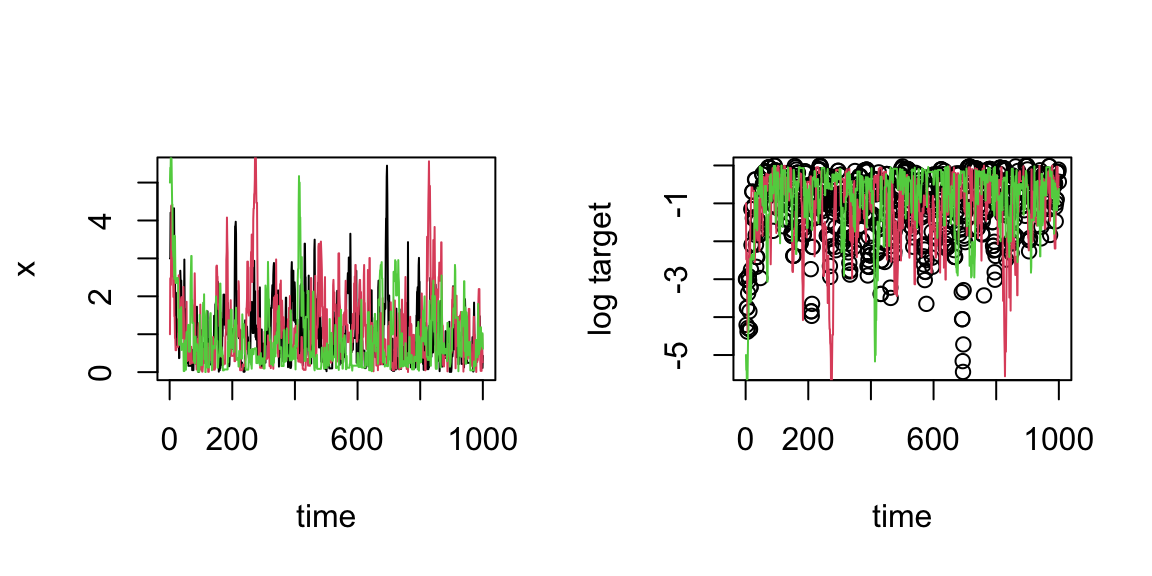

}Now we run the MCMC three times from different starting points and compare the results:

par(mfrow = c(1,2))

z1 <- easyMCMC(log_exp_target,1000,3,1)

z2 <- easyMCMC(log_exp_target,1000,1,1)

z3 <- easyMCMC(log_exp_target,1000,5,1)

plot(z1,type = "l",xlab = "time",ylab = "x")

lines(z2,col = 2)

lines(z3,col = 3)

plot(log_exp_target(z1),xlab = "time",ylab = "log target")

lines(log_exp_target(z2),col = 2)

lines(log_exp_target(z3),col = 3)

| Version | Author | Date |

|---|---|---|

| db49bb6 | Peter Carbonetto | 2026-01-23 |

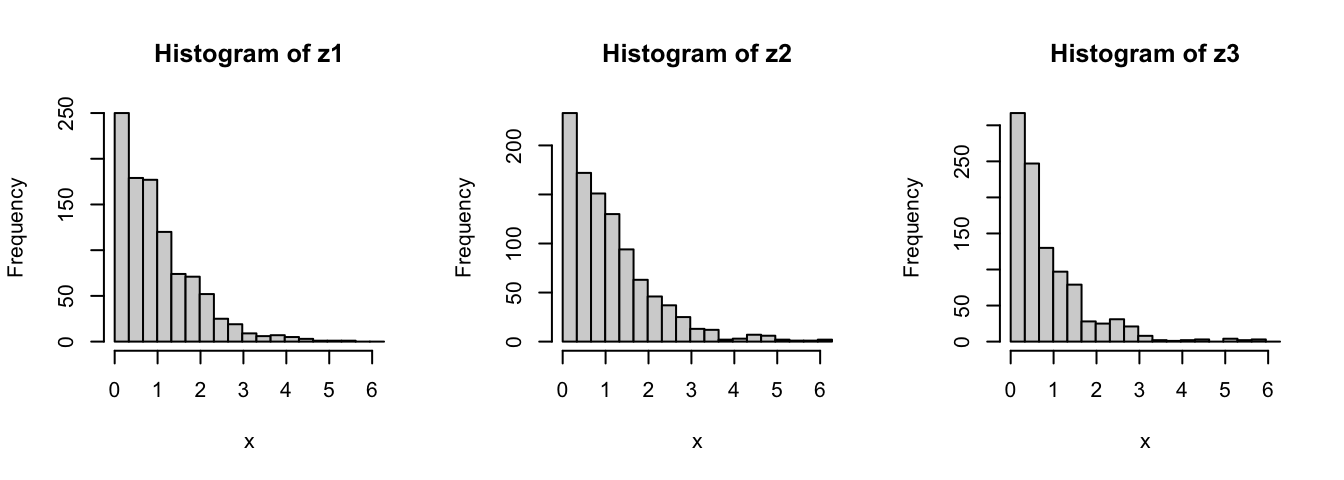

par(mfrow = c(1,3))

maxz <- max(c(z1,z2,z3))

hist(z1,breaks = seq(0,maxz,length.out = 20),xlab = "x")

hist(z2,breaks = seq(0,maxz,length.out = 20),xlab = "x")

hist(z3,breaks = seq(0,maxz,length.out = 20),xlab = "x")

| Version | Author | Date |

|---|---|---|

| db49bb6 | Peter Carbonetto | 2026-01-23 |

Exercise

Use the function “easyMCMC” to explore the following:

How do different starting values affect the MCMC scheme? (Try some extreme starting points.)

What is the effect of having a bigger (or smaller) proposal standard deviation? (Again, try some extreme values.)

Try changing the (log) target function to “log_target_bimodal” below. What does this target distribution look like? What happens if the proposal standard deviation is too small here? (e.g., try 1 and 0.1 and see what happens)

log_target_bimodal <- function (x)

log(0.8*dnorm(x,-4,1) + 0.2*dnorm(x,4,1))Example 2: Estimating an allele frequency

A standard assumption when modelling genotypes of biallelic loci (e.g., loci with alleles \(A\) and \(a\)) is that the population is “mating at random”. From this assumption, it follows that the population will be in “Hardy-Weinberg equilibrium” (HWE), which means that if \(p\) is the frequency of the \(A\) allele, then the genotypes \(AA\), \(Aa\) and \(aa\) have frequencies \(p^2\), \(2p(1-p)\) and \((1-p)^2\), respectively.

A simple prior for \(p\) is to assume it is uniform on \([0,1]\). Suppose that we sample \(n\) individuals, and observe \(n_{AA}\) individuals with genotype \(AA\), \(n_{Aa}\) individuals with genotype \(Aa\), and \(n_{aa}\) individuals with genotype \(aa\).

The following R code implements a M-H algorithm to sample from the posterior distribution of \(p\). Try to go through the code to see how it works.

The first function returns the (log) prior density:

log_prior <- function (p) {

if((p < 0) || (p > 1))

return(-Inf)

else

return(0)

}The second function returns the (log) likelihood:

log_likelihood_hwe <- function (p, nAA, nAa, naa)

return(2*nAA*log(p) +

nAa*log(2*p*(1-p)) +

2*naa*log(1-p))And now this function implements the M-H algorithm:

psampler <- function (nAA, nAa, naa, niter, pstartval, pproposalsd) {

p <- rep(0,niter)

p[1] <- pstartval

for (i in 2:niter) {

currentp <- p[i-1]

newp <- currentp + rnorm(1,0,pproposalsd)

A <- exp(log_prior(newp)

- log_prior(currentp)

+ log_likelihood_hwe(newp,nAA,nAa,naa)

- log_likelihood_hwe(currentp,nAA,nAa,naa))

if (runif(1) < A)

p[i] <- newp

else

p[i] <- currentp

}

return(p)

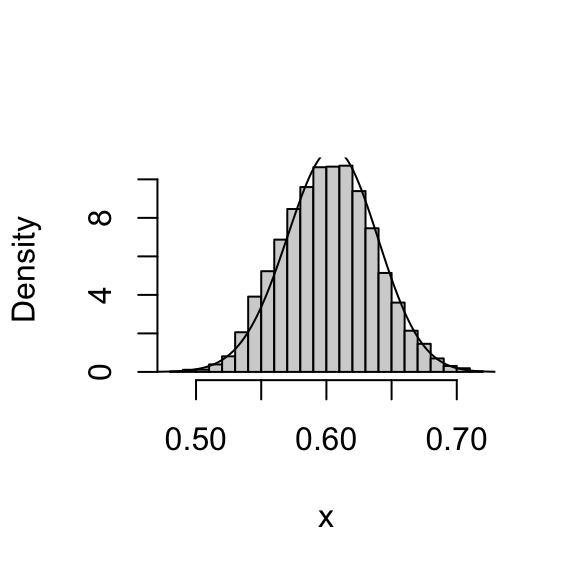

}Try running this algorithm for \(n_{AA} = 50\), \(n_{Aa} = 21\), \(n_{aa} = 29\):

z <- psampler(50,21,29,10000,0.5,0.01)Now here’s some R code to compare the samples generated by the M-H algorithm with the theoretical posterior. In this case, the theoretical posterior is available analytically; since we observed \(A\) 121 times and \(a\) 79 times, the posterior for \(p\) is \(\mathrm{Beta}(121 + 1,79 + 1)\).

x <- seq(0,1,length.out = 1000)

hist(z,breaks = 32,probability = TRUE,xlab = "x",main = "")

lines(x,dbeta(x,122,80))

| Version | Author | Date |

|---|---|---|

| db49bb6 | Peter Carbonetto | 2026-01-23 |

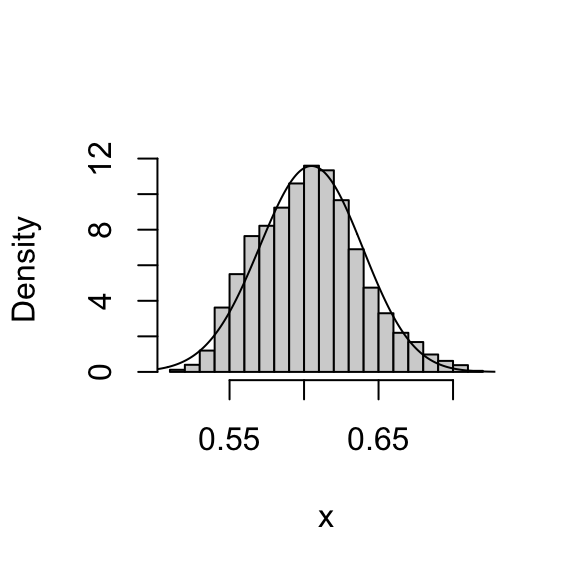

You might also like to discard the first 5,000 samples as “burnin”:

hist(z[5001:10000],breaks = 16,probability = TRUE,xlab = "x",main = "")

lines(x,dbeta(x,122,80))

| Version | Author | Date |

|---|---|---|

| db49bb6 | Peter Carbonetto | 2026-01-23 |

Exercise

Investigate how the starting point and proposal standard deviation affect the convergence of the algorithm.

Example 3: Estimating an allele frequency and inbreeding coefficient

A slightly more complex alternative than HWE is to assume that there is a tendency for people to mate with others who are slightly more related than “random” (as might happen in a geographically structured population, for example). This will result in an excess of homozygotes compared with HWE. A simple way to capture this is to introduce an extra parameter, the “inbreeding coefficient” \(f\), and assume that the genotypes \(AA\), \(Aa\) and \(aa\) have frequencies \(fp + (1-f)p^2\), \(2(1-f) p(1-p)\), and \(f(1-p) + (1-f)(1-p)^2\), respectively.

In most cases, it would be natural to treat \(f\) as a feature of the population, and therefore assume \(f\) is constant across loci. For simplicity, we will consider just a single locus.

Note that both \(f\) and \(p\) are constrained to lie between 0 and 1 (inclusive). A simple prior for each of these two parameters is to assume that they are independent and uniform on \([0,1]\).

Suppose that we sample \(n\) individuals, and observe \(n_{AA}\) individuals with genotype \(AA\), \(n_{Aa}\) individuals with genotype \(Aa\), and \(n_{aa}\) individuals with genotype \(aa\).

Exercise

- Write an M-H algorithm to sample from the joint distribution of \(f\) and \(p\). Hint: Below is some code to get you started. (You’ll need to fill in the “…”) As a first step, I suggest writing the function to compute the log-likelihood for the inbreeding model.

log_likelihood_inbreeding <- function (...) {

# ...

}fpsampler <- function (nAA, nAa, naa, niter, fstartval, pstartval,

fproposalsd, pproposalsd) {

f <- rep(0,niter)

p <- rep(0,niter)

f[1] <- fstartval

p[1] <- pstartval

for (i in 2:niter) {

currentf <- f[i-1]

currentp <- p[i-1]

# newf <- currentf + ...

# newp <- currentp + ...

# ...

}

return(list(f = f,p = p))

}- Use the output of your M-H algorithm to compute point estimates for \(f\) and \(p\) (e.g., posterior means) as well as interval estimates for \(f\) and \(p\) (e.g., 90% posterior credible intervals) when the data are \(n_{AA} = 50\), \(n_{Aa} = 21\), \(n_{aa} = 29\).

Addendum: Gibbs sampling

You could also tackle this problem with a Gibbs sampler (see also the gibbs1 and gibbs2 vignettes). To do so, you will want to use the following “latent variable” representation of the model: \[ \begin{aligned} \Pr(g_i = AA \mid z_i = 0) &= p^2 \\ \Pr(g_i = Aa \mid z_i = 0) &= 2p(1-p) \\ \Pr(g_i = aa \mid z_i = 0) &= (1-p)^2, \end{aligned} \] \[ \begin{aligned} \Pr(g_i = AA \mid z_i = 1) &= p \\ \Pr(g_i = Aa \mid z_i = 1) &= 0 \\ \Pr(g_i = aa \mid z_i = 1) &= 1-p, \end{aligned} \] and \[ z_i \sim \mathrm{Bernoulli}(f). \]

Note that summing over \(z_i\) gives the same model as above, e.g., \(\Pr(g_i = AA) = fp + (1-f)p^2\). (As an exercise, show that the probabilities of \(g_i = aa\) and \(g_i = Aa\) from this “latent variable augmented” model are the same as above.)

Reflection: What does the latent variable \(z_i\) represent?

Exercise

Using the latent variable representation above, implement a Gibbs sampler to generate samples from the joint distribution of \(z, f\) and \(p\) given the genotype data \(g\). Hint: this involves iterating the following steps:

Sample \(z\) from \(p(z \mid g, f, p)\).

Sample \(f, p\) from \(p(f, p \mid g, z)\).

sessionInfo()

# R version 4.3.3 (2024-02-29)

# Platform: aarch64-apple-darwin20 (64-bit)

# Running under: macOS 15.7.1

#

# Matrix products: default

# BLAS: /Library/Frameworks/R.framework/Versions/4.3-arm64/Resources/lib/libRblas.0.dylib

# LAPACK: /Library/Frameworks/R.framework/Versions/4.3-arm64/Resources/lib/libRlapack.dylib; LAPACK version 3.11.0

#

# locale:

# [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

#

# time zone: America/Chicago

# tzcode source: internal

#

# attached base packages:

# [1] stats graphics grDevices utils datasets methods base

#

# loaded via a namespace (and not attached):

# [1] vctrs_0.6.5 cli_3.6.5 knitr_1.50 rlang_1.1.6

# [5] xfun_0.52 stringi_1.8.7 promises_1.3.3 jsonlite_2.0.0

# [9] workflowr_1.7.1 glue_1.8.0 rprojroot_2.0.4 git2r_0.33.0

# [13] htmltools_0.5.8.1 httpuv_1.6.14 sass_0.4.10 rmarkdown_2.29

# [17] evaluate_1.0.4 jquerylib_0.1.4 tibble_3.3.0 fastmap_1.2.0

# [21] yaml_2.3.10 lifecycle_1.0.4 whisker_0.4.1 stringr_1.5.1

# [25] compiler_4.3.3 fs_1.6.6 Rcpp_1.1.0 pkgconfig_2.0.3

# [29] later_1.4.2 digest_0.6.37 R6_2.6.1 pillar_1.11.0

# [33] magrittr_2.0.3 bslib_0.9.0 tools_4.3.3 cachem_1.1.0