simple_transform_simulation

Matthew Stephens

2019-05-10

Last updated: 2019-05-10

Knit directory: misc/analysis/

This reproducible R Markdown analysis was created with workflowr (version 1.2.0).

The command set.seed(12345) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

These are the previous versions of the R Markdown and HTML files.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | a713b11 | Matthew Stephens | 2019-05-10 | workflowr::wflow_publish("simple_transform_simulation.Rmd") |

Introduction

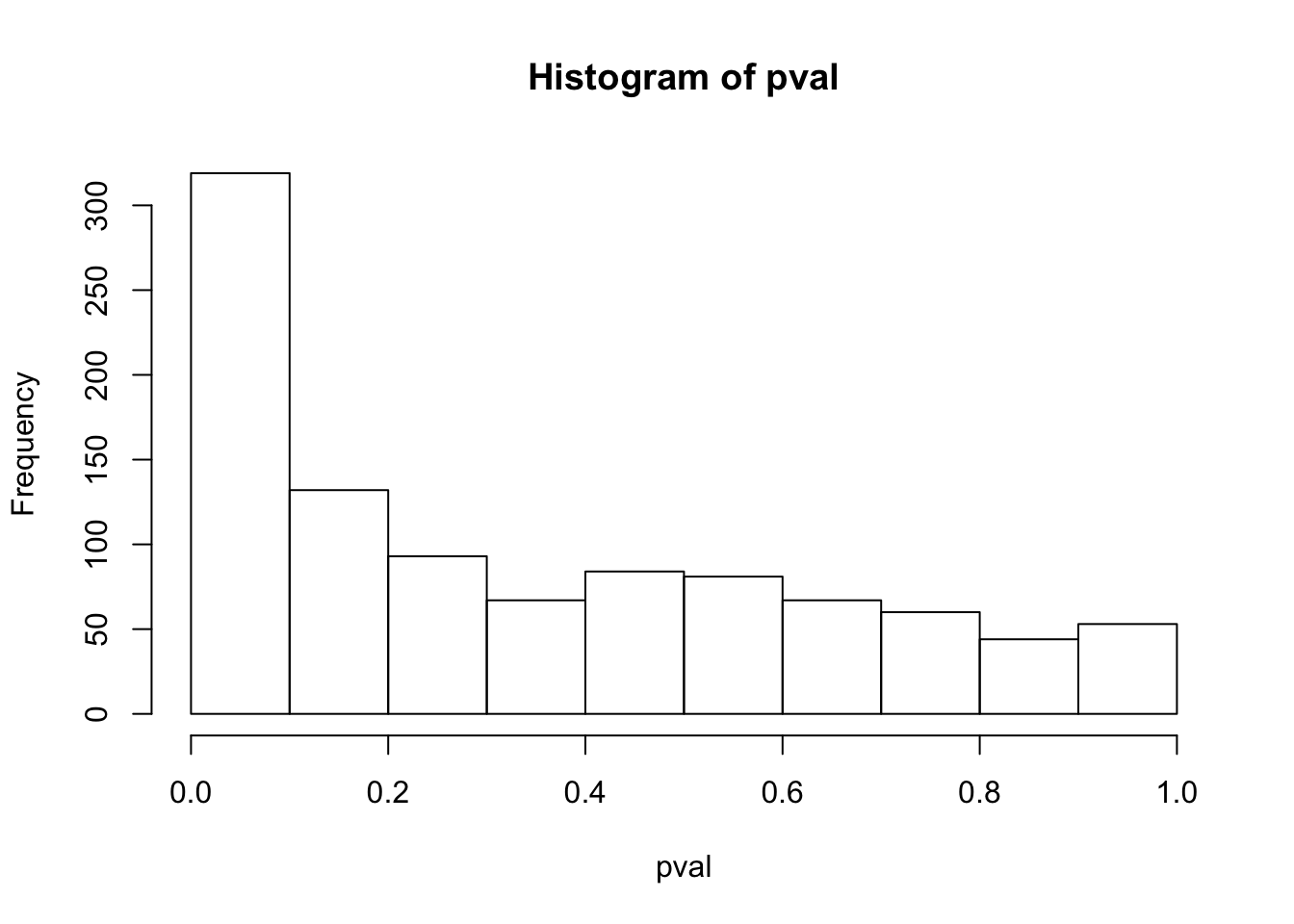

Let’s try a simple simulation to test the t test on log-transformed count data.

Specifically, I simulate \(X_i | s_i \sim Poi(s_i \lambda_i)\) for two groups where the library size \(s_i\) differs between the groups (by a factor of 10, so quite extreme) but the distribution of \(\lambda_i\) is the same in each group.

Indeed, here I just fix the \(\lambda_i\) to be all equal, at a value such that the data have mean 1 in one group and mean 10 in the other group.

Then apply the transform \(Y_i = \log(X_i/(s_i/\mathrm{median}(s)) + 1)\), where the median is taken over the library sizes of all samples.
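As a quick check of this setup, the sketch below computes the Poisson means and the scaling factors \(s_i/\mathrm{median}(s)\) for the values used in the first simulation. The names `mu` and `size_factor` are introduced here for illustration and are not part of the original analysis.

```r
# Illustrative check of the simulation setup (names `mu` and `size_factor`
# are assumptions made for this sketch).
n <- 100
s <- c(rep(10^5, n), rep(10^4, n))   # library sizes, 10-fold difference
l <- rep(1/10^4, 2 * n)              # common lambda for every sample

mu <- s * l                          # Poisson mean of each count
size_factor <- s / median(s)         # relative library size used in the transform

unique(mu)            # one value per group
unique(size_factor)   # one value per group

# A count exactly equal to its mean maps to the same transformed value
# in both groups, so any difference in mean(y) comes from the nonlinearity.
log(unique(mu) / unique(size_factor) + 1)
```

Because the median of `s` falls halfway between the two library sizes, the scaled counts have the same mean in both groups but very different variances, which is what the log transform then turns into a difference in means.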

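```r
set.seed(1)
n = 100                            # samples per group
s = c(rep(10^5,n), rep(10^4,n))    # library sizes: 10-fold difference between groups
l = rep(1/10^4,2*n)                # same lambda for every sample
niter = 1000
pval = rep(0,niter)
for(i in 1:niter){
  x = rpois(2*n, s*l)              # counts with mean 10 (group 1) or 1 (group 2)
  y = log(x/(s/median(s))+1)       # scale by relative library size, then log(. + 1)
  pval[i] = t.test(y[1:n],y[(n+1):(2*n)])$p.value
}
hist(pval)
```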

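So we see the t test p values are very non-uniform, even though \(\lambda_i\) is identical in the two groups and a well-calibrated test would give uniform p values. One can see why one might worry about this.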
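One way to put a number on "non-uniform" is sketched below: wrap the simulation in a helper, then report the fraction of p values below 0.05 and a Kolmogorov-Smirnov test against the uniform distribution. The helper `simulate_pvals` and the name `reject_rate` are hypothetical, introduced only for this sketch.

```r
# Hypothetical helper (not in the original analysis): repeat the simulation
# and return the t test p values.
simulate_pvals <- function(s, l, n, niter = 1000) {
  pval <- numeric(niter)
  for (i in seq_len(niter)) {
    x <- rpois(2 * n, s * l)
    y <- log(x / (s / median(s)) + 1)
    pval[i] <- t.test(y[1:n], y[(n + 1):(2 * n)])$p.value
  }
  pval
}

set.seed(1)
n <- 100
s <- c(rep(10^5, n), rep(10^4, n))
l <- rep(1/10^4, 2 * n)
pval <- simulate_pvals(s, l, n)

# Fraction of simulations rejected at the 5% level;
# this would be close to 0.05 for a calibrated test.
reject_rate <- mean(pval < 0.05)
reject_rate

# Kolmogorov-Smirnov test of the p values against Uniform(0, 1)
ks.test(pval, "punif")$p.value
```

The same helper can be reused with other choices of `s` and `l` to compare calibration across library-size ratios.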

Plot one example:

```r
plot(y)
mean(y[1:100])
```

```
[1] 1.883674
```

```r
mean(y[101:200])
```

```
[1] 1.390502
```

Smaller difference in library size

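Try the same thing but with only a factor of 2 difference in library size.

```r
set.seed(1)
n = 100                                # samples per group
s = c(rep(10^5,n), rep(0.5*10^5,n))    # library sizes: 2-fold difference between groups
l = rep(1/(0.5*10^5),2*n)              # lambda chosen so the counts have mean 2 and mean 1
niter = 1000
pval = rep(0,niter)
for(i in 1:niter){
  x = rpois(2*n, s*l)
  y = log(x/(s/median(s))+1)           # scale by relative library size, then log(. + 1)
  pval[i] = t.test(y[1:n],y[(n+1):(2*n)])$p.value
}
hist(pval)
```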

Briefly explore bias correction

(Not sure this is 100% correct… needs checking)

According to the Taylor series expansion at https://users.rcc.uchicago.edu/~aksarkar/singlecell-modes/transforms.html, the mean of \(\log(x+1)\) should fall below \(\log(E(x)+1)\) by about \(V(x)/(2(E(x)+1)^2)\), where in the first simulation x is the count divided by 1.8 or by 0.18 in the two groups (because these are the values of s/median(s), rounded).
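For reference, the standard second-order expansion behind this expression can be written out as follows, with \(\mu = E(x)\) (notation introduced here):

\[
\log(x+1) \approx \log(\mu+1) + \frac{x-\mu}{\mu+1} - \frac{(x-\mu)^2}{2(\mu+1)^2}
\]

Taking expectations, the linear term vanishes and the quadratic term contributes the variance:

\[
E[\log(x+1)] \approx \log(\mu+1) - \frac{V(x)}{2(\mu+1)^2}
\]

If \(x = X/c\) with \(X \sim Poi(m)\) and scaling factor \(c\), then \(\mu = m/c\) and \(V(x) = m/c^2\). In the first simulation both groups have the same \(\mu\), so to this order the difference in the mean of y between the groups comes entirely from the difference in \(V(x)\).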

```r
(10/1.8^2) /(2*(10/1.8+1)^2) - (1/0.18^2) / (2*(1/0.18+1)^2)
```

```
[1] -0.323183
```

So if that is right, to second order, the mean of y should be about 0.32 higher in the first group than in the second in our first simulation. So try correcting for this by adding 0.32 to the second group.
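The same calculation can be sketched with the unrounded scaling factors, alongside the difference in expectations obtained by summing directly over the Poisson probabilities. The helpers `taylor_bias` and `exact_mean` are hypothetical names introduced for this sketch, and truncating the sum at `kmax = 200` is an assumption that is harmless for means of 1 and 10.

```r
# Sketch only: `taylor_bias` and `exact_mean` are hypothetical helpers.

# Size of the second-order bias term of log(X/c + 1) when X ~ Poisson(m)
taylor_bias <- function(m, c) {
  mu <- m / c        # mean of the scaled count
  v <- m / c^2       # variance of the scaled count
  v / (2 * (mu + 1)^2)
}

# E[log(X/c + 1)] computed by summing over the Poisson pmf,
# truncated at kmax (far into the tail for these means)
exact_mean <- function(m, c, kmax = 200) {
  k <- 0:kmax
  sum(dpois(k, m) * log(k / c + 1))
}

s <- c(rep(10^5, 100), rep(10^4, 100))
size_factor <- unique(s / median(s))   # one value per group
m <- c(10, 1)                          # Poisson means in the two groups

# Predicted difference in mean(y), group 1 minus group 2, from the expansion
pred_diff <- taylor_bias(m[2], size_factor[2]) - taylor_bias(m[1], size_factor[1])

# The same difference from the exact expectations
exact_diff <- exact_mean(m[1], size_factor[1]) - exact_mean(m[2], size_factor[2])

c(predicted = pred_diff, exact = exact_diff)
```

The example run shown earlier gave group means of 1.883674 and 1.390502, a difference of about 0.49, which is larger than 0.32. Comparing the predicted and exact values is one way to see how much of that gap the second-order term accounts for.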

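```r
set.seed(1)
n = 100                            # samples per group
s = c(rep(10^5,n), rep(10^4,n))    # library sizes: 10-fold difference between groups
l = rep(1/10^4,2*n)                # same lambda for every sample
niter = 1000
pval = rep(0,niter)
for(i in 1:niter){
  x = rpois(2*n, s*l)
  y = log(x/(s/median(s))+1)
  # shift group 2 up by the predicted difference in bias (0.32) before testing
  pval[i] = t.test(y[1:n],y[(n+1):(2*n)]+0.32)$p.value
}
hist(pval)
```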

```r
sessionInfo()
```

```
R version 3.5.2 (2018-12-20)
Platform: x86_64-apple-darwin15.6.0 (64-bit)
Running under: macOS Mojave 10.14.4

Matrix products: default
BLAS: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRblas.0.dylib
LAPACK: /Library/Frameworks/R.framework/Versions/3.5/Resources/lib/libRlapack.dylib

locale:
[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:
[1] stats graphics grDevices utils datasets methods base

loaded via a namespace (and not attached):
[1] workflowr_1.2.0 Rcpp_1.0.1 digest_0.6.18 rprojroot_1.3-2
[5] backports_1.1.3 git2r_0.24.0 magrittr_1.5 evaluate_0.12
[9] stringi_1.2.4 fs_1.2.6 whisker_0.3-2 rmarkdown_1.11
[13] tools_3.5.2 stringr_1.3.1 glue_1.3.0 xfun_0.4
[17] yaml_2.2.0 compiler_3.5.2 htmltools_0.3.6 knitr_1.21
```