Initial method evaluation: power

Joyce Hsiao

2019-04-26

Last updated: 2019-04-26


Knit directory: dsc-log-fold-change/

This reproducible R Markdown analysis was created with workflowr (version 1.3.0). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproducibility it’s best to always run the code in an empty environment.

The command set.seed(20181115) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility. The version displayed above was the version of the Git repository at the time these results were generated.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: .sos/

Ignored: analysis/.sos/

Ignored: dsc/.sos/

Ignored: dsc/benchmark/

Ignored: dsc/dsc_test/.sos/

Ignored: output/

Untracked files:

Untracked: analysis/eval_initial_type1_libsize.Rmd

Untracked: dsc/Rplots.pdf

Unstaged changes:

Modified: analysis/index.Rmd

Modified: dsc/benchmark.dsc

Modified: dsc/benchmark.sh

Modified: dsc/modules/limma_voom.R

Modified: dsc/modules/qvalue.R

Modified: dsc/modules/sva.R

Modified: dsc/modules/sva_voom.R

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the R Markdown and HTML files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view them.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | eebda16 | Joyce Hsiao | 2019-04-26 | initial power results |

Introduction

Evaluate the power of several differential expression (DE) methods on data with a potentially confounded design.

Experimental data: PBMC data with 2,683 samples and ~11,000 genes, covering 7+ cell types. The data contain a large number of zeros (93% of the entries in the count matrix).

Simulation parameters:

- number of genes: 1,000, randomly sampled from the experimental data

- number of samples per group: (50, 50) or (250, 250); draw n1+n2 samples from the experimental data, then randomly assign each to group 1 or group 2

- fraction of true effects: 0.1

- distribution of true effects: normal distribution with mean 0 and sd 1
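The effect-size settings above can be sketched as follows. This is only an illustration of the stated parameters (1,000 genes, 10% non-null, effects drawn from N(0, 1)). The variable names are hypothetical, and the sketch does not reproduce how the `data_poisthin_power` module adds signal to the counts.

```r
# Sketch: draw true effects for 1,000 genes, 10% of which are non-null.
# All names here are illustrative and not taken from the dsc modules.
set.seed(1)
n_genes   <- 1000
prop_true <- 0.1                       # fraction of true effects (pi0 = 0.9)
n_true    <- round(n_genes * prop_true)

beta <- rep(0, n_genes)                # null genes have zero effect
idx_true <- sample(n_genes, n_true)    # genes that carry a true effect
beta[idx_true] <- rnorm(n_true, mean = 0, sd = 1)

# Same definition of the truth indicator as in the extraction code (beta != 0)
truth_vec <- beta != 0
table(truth_vec)
```

Genes with `truth_vec == TRUE` are the ones that power is computed over in the sections that follow.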

Extract dsc results

knitr::opts_chunk$set(warning=F, message=F)

library(dscrutils)

library(tidyverse)

── Attaching packages ───────────────────────────────────────────────────────────── tidyverse 1.2.1 ──

✔ ggplot2 3.1.0 ✔ purrr 0.3.2

✔ tibble 2.1.1 ✔ dplyr 0.8.0.1

✔ tidyr 0.8.3 ✔ stringr 1.3.1

✔ readr 1.3.1 ✔ forcats 0.3.0

── Conflicts ──────────────────────────────────────────────────────────────── tidyverse_conflicts() ──

✖ dplyr::filter() masks stats::filter()

✖ dplyr::lag() masks stats::lag()

Extract dsc output and get p-values, q-values, true signals, etc.

dir_dsc <- "/scratch/midway2/joycehsiao/dsc-log-fold-change/pipe_power"

dsc_res <- dscquery(dir_dsc,
                    targets = c("data_poisthin_power",
                                "data_poisthin_power.seed",
                                "data_poisthin_power.n1",
                                "method", "pval_rank"),
                    ignore.missing.file = TRUE)

# One list element per row of dsc_res (one method applied to one simulated data set)
res <- vector("list", nrow(dsc_res))

for (i in seq_len(nrow(dsc_res))) {
  print(i)
  # p-values from the DE method
  fl_pval <- readRDS(file.path(dir_dsc,
                     paste0(as.character(dsc_res$method.output.file[i]), ".rds")))
  # simulated data, including the true effects (beta)
  fl_beta <- readRDS(file.path(dir_dsc,
                     paste0(as.character(dsc_res$data_poisthin_power.output.file[i]), ".rds")))
  seed <- dsc_res$data_poisthin_power.seed[i]
  n1 <- dsc_res$data_poisthin_power.n1[i]
  # q-values computed from the p-values
  fl_qval <- readRDS(file.path(dir_dsc,
                     paste0(as.character(dsc_res$pval_rank.output.file[i]), ".rds")))
  res[[i]] <- data.frame(method = as.character(dsc_res$method)[i],
                         seed = seed,
                         n1 = n1,
                         truth_vec = fl_beta$beta != 0,
                         pval = fl_pval$pval,
                         qval = fl_qval$qval,
                         stringsAsFactors = FALSE)
  # AUC for this method and data set, repeated on every gene row
  roc_output <- pROC::roc(truth_vec ~ pval, data=res[[i]])
  res[[i]]$auc <- as.numeric(roc_output$auc)
}

res_merge <- do.call(rbind, res)

saveRDS(res_merge, file = "output/eval_initial_power.Rmd/res_merge_power.rds")

False discovery rate control

res_merge <- readRDS(file = "output/eval_initial_power.Rmd/res_merge_power.rds")

# Plot power and false discovery proportion per method at a q-value cutoff.
# args: list with n1 (samples per group) and labels (x-axis label per method)
make_plots <- function(res, args, title) {
  fdr_thres <- .1
  n_methods <- length(unique(res$method))
  cols <- RColorBrewer::brewer.pal(n_methods, name="Dark2")
  library(cowplot)
  title <- ggdraw() + draw_label(title, fontface='bold')
  # power: fraction of true effects with q-value below the cutoff
  p1 <- res %>% group_by(method, seed) %>%
    filter(n1 == args$n1) %>%
    summarise(power = sum(qval < fdr_thres & truth_vec, na.rm=TRUE)/sum(truth_vec)) %>%
    ggplot(., aes(x=method, y=power, col=method)) +
    geom_boxplot() + geom_point() +
    xlab("") + ylab("Power") +
    scale_x_discrete(position = "top", labels=args$labels) +
    scale_color_manual(values=cols) +
    ggtitle(paste("Power at q-value <", fdr_thres, "(total 1K)")) +
    theme(axis.text.x=element_text(angle = 20, vjust = -.3, hjust=-.1))
  # false_pos_rate: false discoveries / all discoveries (NaN if nothing is called)
  p2 <- res %>% group_by(method, seed) %>%
    filter(n1 == args$n1) %>%
    summarise(false_pos_rate = sum(qval < fdr_thres & !truth_vec, na.rm=TRUE)/
                sum(qval < fdr_thres, na.rm=TRUE)) %>%
    ggplot(., aes(x=method, y=false_pos_rate, col=method)) +
    geom_boxplot() + geom_point() +
    xlab("") + ylab("False discovery rate") +
    scale_x_discrete(position = "top", labels=args$labels) +
    scale_color_manual(values=cols) +
    geom_hline(yintercept=fdr_thres, col="gray40", lty=3) +
    ggtitle(paste("FDR at q-value <", fdr_thres, "(total 1K)")) +
    theme(axis.text.x=element_text(angle = 20, vjust = -.3, hjust=-.1))
  print(plot_grid(title, plot_grid(p1,p2), ncol=1, rel_heights = c(.1,1)))
}
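To make the two summaries in `make_plots` concrete, here is a small worked example on made-up values. The toy data frame and its numbers are invented for illustration. Reporting 0 when nothing is called is an assumption of this sketch, since the ratio used in `make_plots` gives NaN in that case.

```r
# Toy example: power and false discovery proportion at a q-value cutoff.
# The data below are invented for illustration only.
fdr_thres <- 0.1
toy <- data.frame(
  truth_vec = c(TRUE, TRUE, TRUE, TRUE, FALSE, FALSE, FALSE, FALSE, FALSE, FALSE),
  qval      = c(0.01, 0.05, 0.20, 0.50, 0.02, 0.30, 0.60, 0.80, 0.90, NA)
)

# Discoveries: genes with a non-missing q-value below the cutoff
called <- !is.na(toy$qval) & toy$qval < fdr_thres

# Power: true effects that were called / all true effects
power <- sum(called & toy$truth_vec) / sum(toy$truth_vec)

# False discovery proportion: false calls / all calls
# (assumption of this sketch: report 0 when nothing is called)
n_called <- sum(called)
fdp <- if (n_called > 0) sum(called & !toy$truth_vec) / n_called else 0

c(power = power, fdp = fdp)
```

Here three genes are called, two of them true effects, so power is 2/4 and the false discovery proportion is 1/3.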

levels(factor(res_merge$method))

[1] "deseq2" "edger" "limma_voom"

[4] "t_test" "t_test_log2cpm_quant" "wilcoxon"

labels <- c("deseq2", "edger", "limma_v", "t_test", "t_log2cpm_q", "wilcoxon")

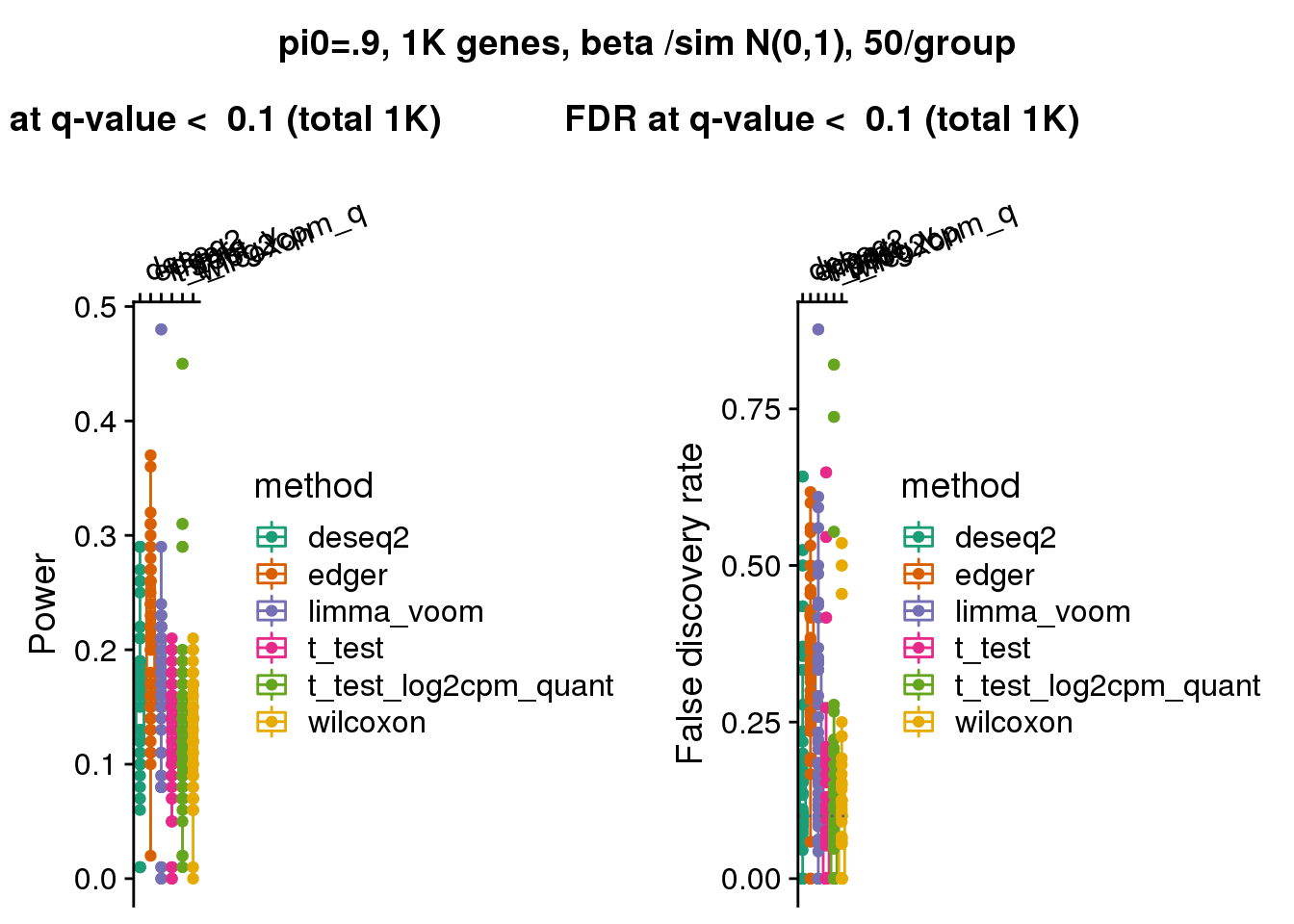

make_plots(res_merge, args=list(n1=50, labels=labels),

title="pi0=.9, 1K genes, beta /sim N(0,1), 50/group")

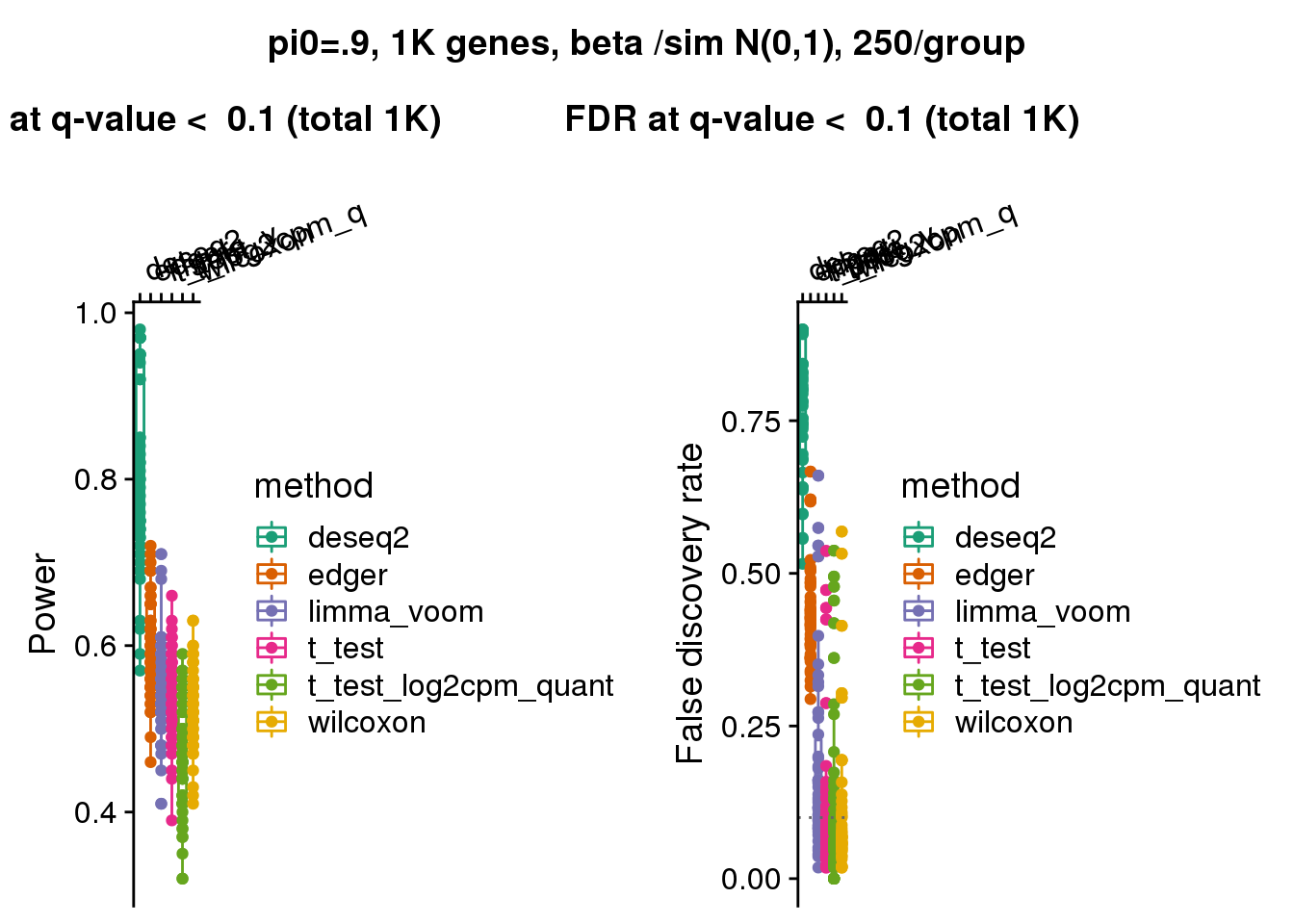

make_plots(res_merge, args=list(n1=250, labels=labels),

title="pi0=.9, 1K genes, beta /sim N(0,1), 250/group")

AUC

res_merge <- readRDS(file = "output/eval_initial_power.Rmd/res_merge_power.rds")

library(dplyr)

# auc is repeated on every gene row, so keep one row per method, seed and n1
res_merge_auc <- res_merge %>% group_by(method, seed, n1) %>% slice(1)

# args: list with n1 (samples per group) and labels (x-axis label per method);
# title is accepted but not used
make_plots_auc <- function(res, args, title = NULL) {
  n_methods <- length(unique(res$method))
  cols <- RColorBrewer::brewer.pal(n_methods, name="Dark2")
  res %>% group_by(method) %>%
    filter(n1 == args$n1) %>%
    ggplot(., aes(x=method, y=auc, col=method)) +
    geom_boxplot() + geom_point() +
    xlab("") + ylab("Area under the ROC curve") +
    scale_x_discrete(position = "top", labels=args$labels) +
    scale_color_manual(values=cols) +
    ggtitle("AUC") +
    theme(axis.text.x=element_text(angle = 20, vjust = -.3, hjust=-.1))
}
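As a reminder of what the plotted AUC measures when p-values are the predictor, the sketch below computes it from ranks in base R: the probability that a randomly chosen true effect has a smaller p-value than a randomly chosen null gene. The function name `auc_from_pvals` and the toy values are hypothetical. `pROC::roc` picks the comparison direction automatically, so its value may differ from this sketch when a method does worse than chance.

```r
# Rank-based AUC: P(p-value of a true effect < p-value of a null gene),
# with ties counted as 1/2 (Mann-Whitney U statistic scaled to [0, 1]).
# Illustrative helper, not part of the analysis code.
auc_from_pvals <- function(pval, truth_vec) {
  keep <- !is.na(pval)
  pval <- pval[keep]
  truth_vec <- truth_vec[keep]
  n_pos <- sum(truth_vec)
  n_neg <- sum(!truth_vec)
  r <- rank(-pval)                      # larger rank = smaller p-value
  (sum(r[truth_vec]) - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)
}

# Invented p-values: three true effects, three null genes
pval      <- c(0.001, 0.01, 0.2, 0.03, 0.5, 0.8)
truth_vec <- c(TRUE,  TRUE, TRUE, FALSE, FALSE, FALSE)
auc_toy <- auc_from_pvals(pval, truth_vec)
auc_toy
```

In the toy data, 8 of the 9 (true effect, null) pairs are ordered correctly, so the AUC is 8/9.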

levels(factor(res_merge_auc$method))

[1] "deseq2" "edger" "limma_voom"

[4] "t_test" "t_test_log2cpm_quant" "wilcoxon"

labels <- c("deseq2", "edger", "limma_v", "t_test", "t_log2cpm_q", "wilcoxon")

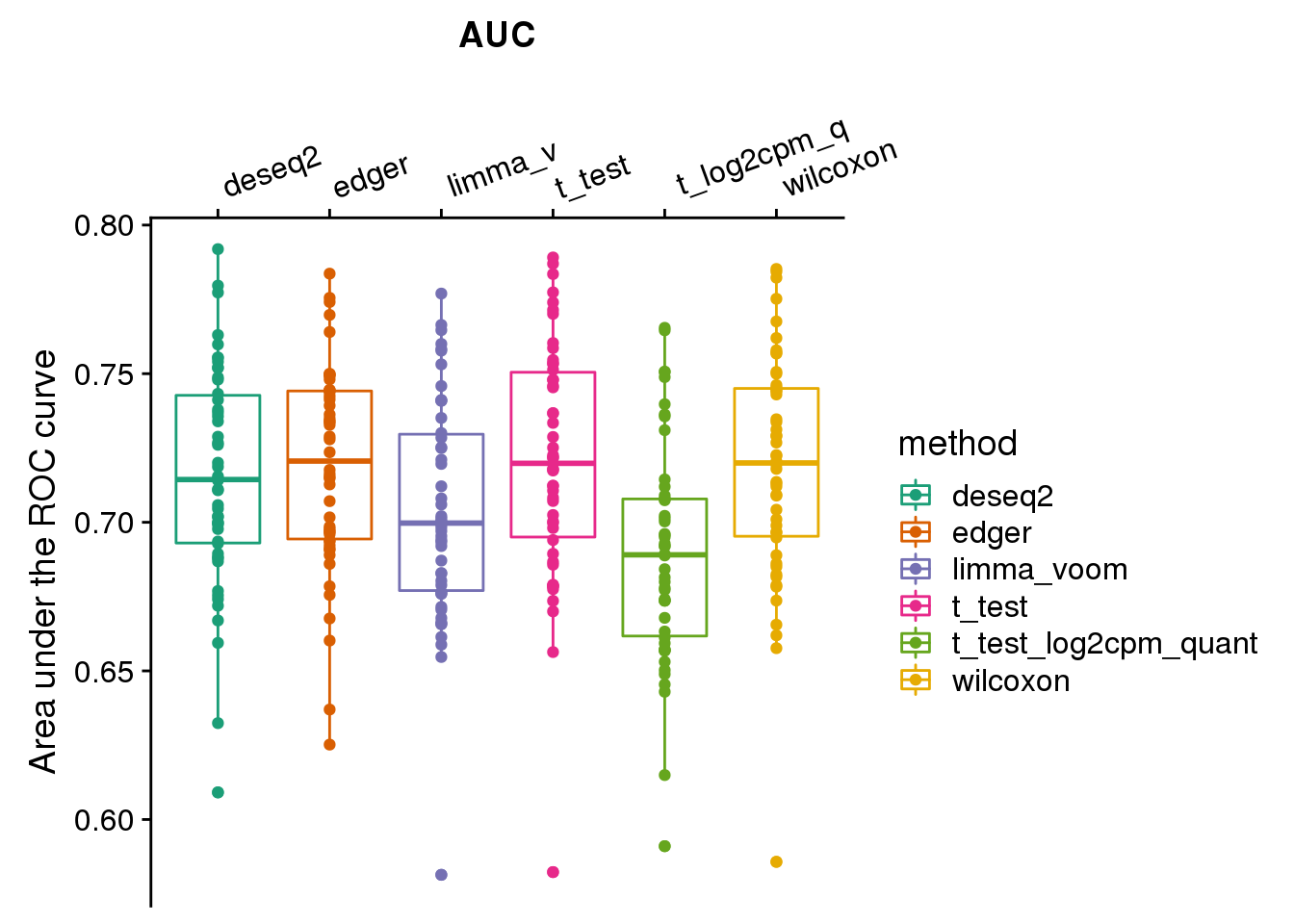

make_plots_auc(res_merge_auc, args=list(n1=50, labels=labels))

levels(factor(res_merge_auc$method))

[1] "deseq2" "edger" "limma_voom"

[4] "t_test" "t_test_log2cpm_quant" "wilcoxon"

labels <- c("deseq2", "edger", "limma_v", "t_test", "t_log2cpm_q", "wilcoxon")

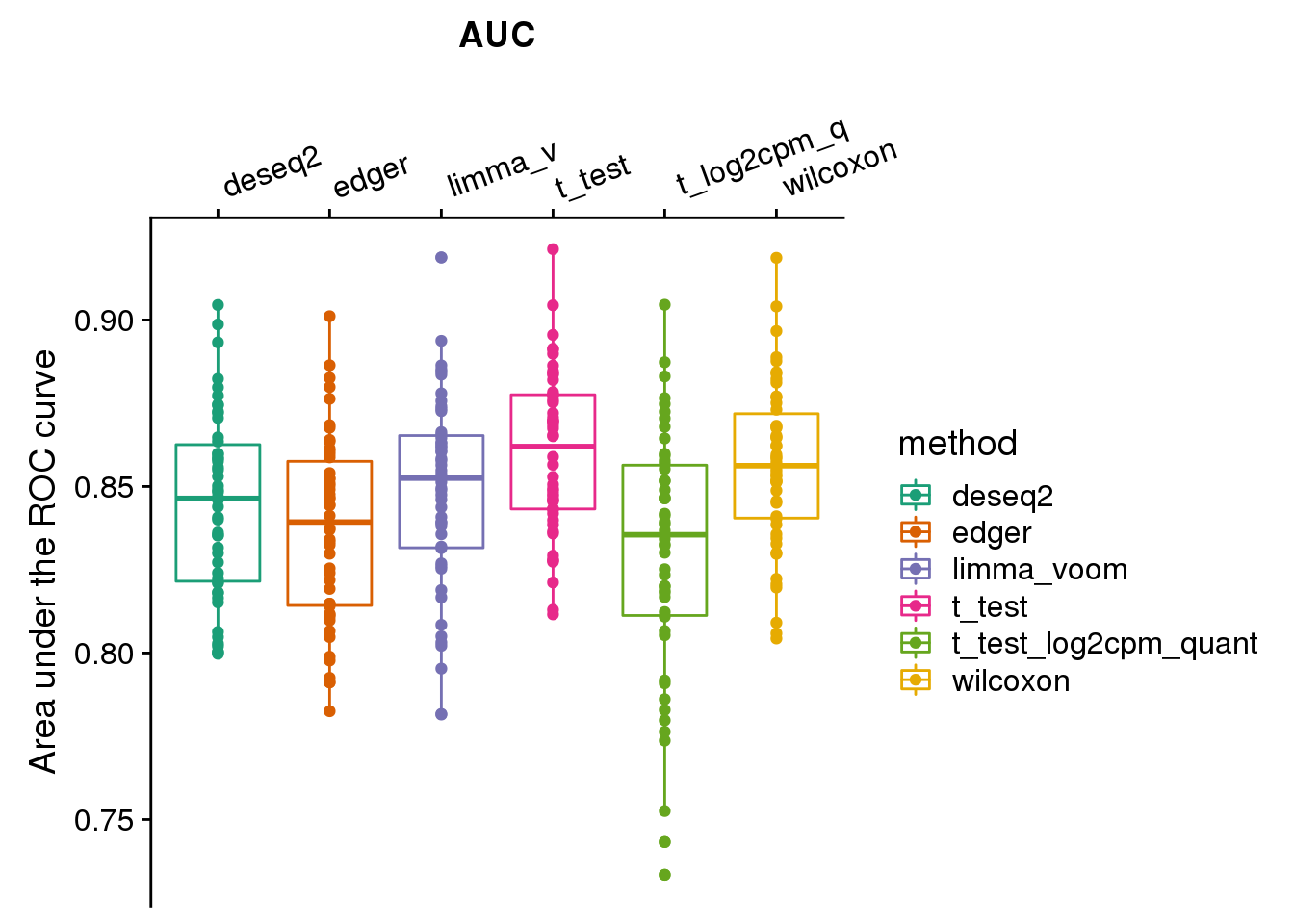

make_plots_auc(res_merge_auc, args=list(n1=250, labels=labels))

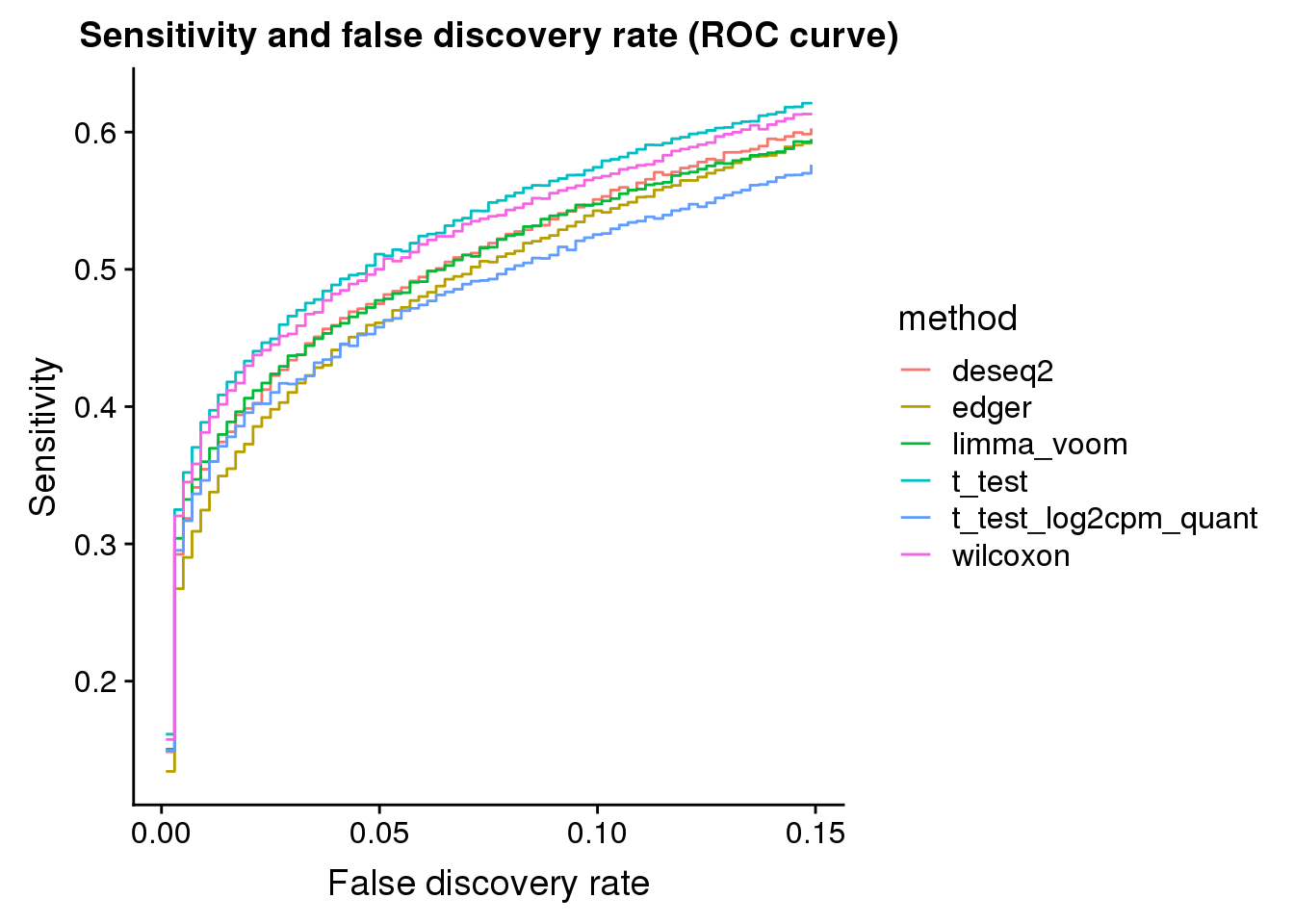

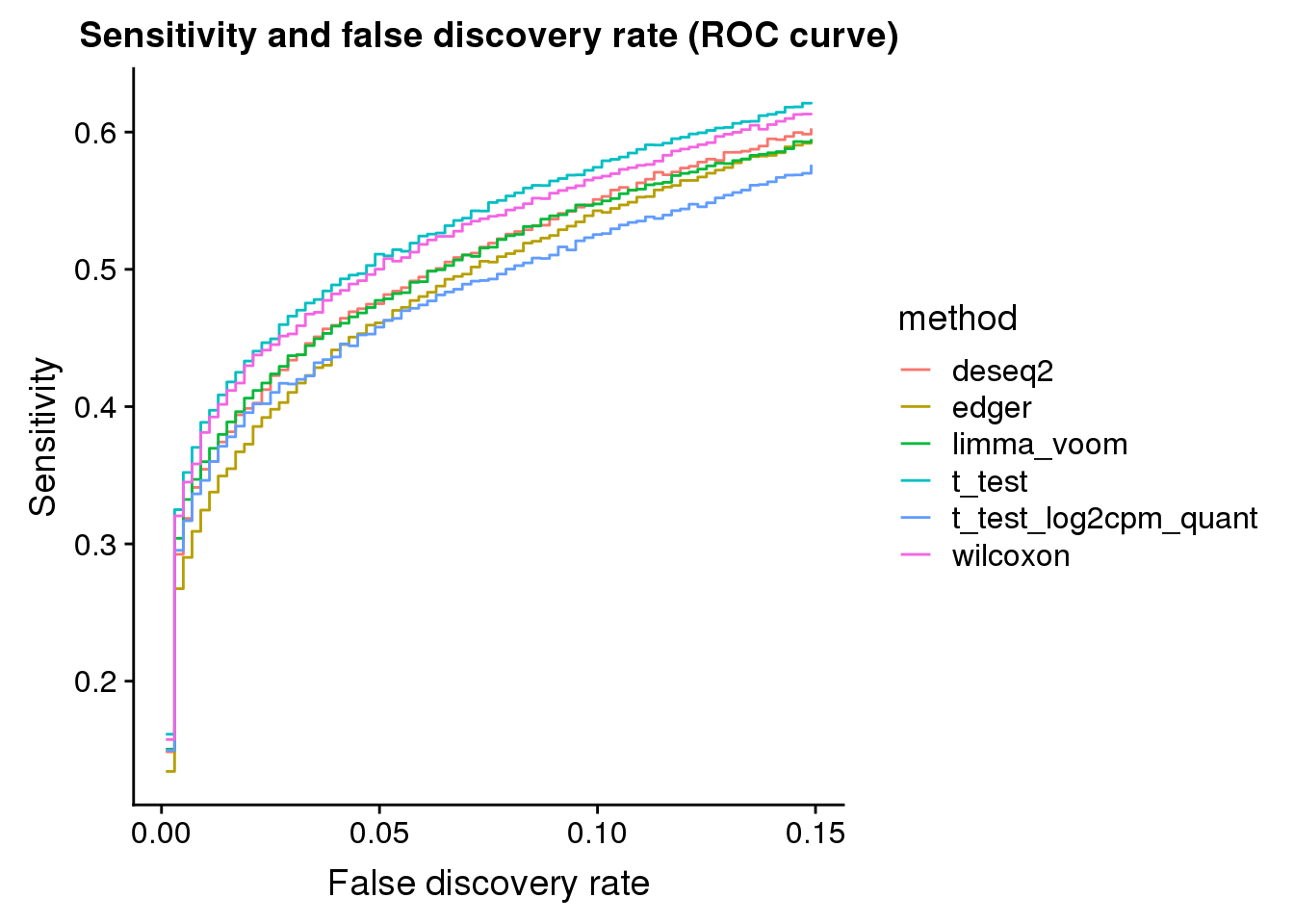

ROC

get_roc_est <- function(res_merge, n1, fpr_nbin=500) {
  # Copy the argument under another name: inside filter(), a bare `n1` refers
  # to the data column, so `n1==n1` is always TRUE and would not subset by sample size.
  n1_target <- n1
  method_list <- levels(factor(res_merge$method))
  out_roc_est <- lapply(seq_along(method_list), function(i) {
    df_sub <- res_merge %>% filter(method==method_list[i] & n1==n1_target)
    seed_list <- unique(df_sub$seed)
    # ROC curve (fpr, tpr) for each simulated data set
    roc_est_seed <- lapply(seq_along(seed_list), function(j) {
      roc_set_seed_one <- with(df_sub[df_sub$seed==seed_list[j],],
                               pROC::auc(response=truth_vec, predictor=pval))
      fpr <- 1-attr(roc_set_seed_one, "roc")$specificities
      tpr <- attr(roc_set_seed_one, "roc")$sensitivities
      data.frame(fpr=fpr,tpr=tpr,seed=seed_list[j])
    })
    roc_est_seed <- do.call(rbind, roc_est_seed)
    # average tpr across data sets within equal-width fpr bins
    fpr_range <- range(roc_est_seed$fpr)
    fpr_seq <- seq.int(from=fpr_range[1], to = fpr_range[2], length.out = fpr_nbin+1)
    tpr_est_mean <- rep(NA, fpr_nbin)
    for (index in seq_len(fpr_nbin)) {
      tpr_est_mean[index] <- mean(roc_est_seed$tpr[which(roc_est_seed$fpr >= fpr_seq[index] &
                                                         roc_est_seed$fpr < fpr_seq[index+1])], na.rm=TRUE)
    }
    fpr_bin_mean <- fpr_seq[-length(fpr_seq)]+(diff(fpr_seq)/2)
    roc_bin_est <- data.frame(fpr_bin_mean=fpr_bin_mean,tpr_est_mean=tpr_est_mean)
    # drop empty bins
    roc_bin_est <- roc_bin_est[!is.na(roc_bin_est$tpr_est_mean),]
    roc_bin_est$method <- method_list[i]
    return(roc_bin_est)
  })
  out <- do.call(rbind, out_roc_est)
  out$method <- factor(out$method)
  return(out)
}
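The binning step in `get_roc_est` can be illustrated on its own. Below is a minimal base-R sketch that pools the points of several ROC curves and averages the true positive rate within equal-width false positive rate bins. The function name `bin_average_roc` and the toy curves are hypothetical. Unlike the loop in `get_roc_est`, this sketch keeps the point at the maximum FPR in the last bin.

```r
# Sketch: average TPR within equal-width FPR bins, pooling several ROC curves.
# Illustrative helper, not part of the analysis code.
bin_average_roc <- function(fpr, tpr, nbin = 10) {
  breaks <- seq(min(fpr), max(fpr), length.out = nbin + 1)
  # Assign each point to a bin; all.inside = TRUE clamps indices to 1..nbin
  bin <- findInterval(fpr, breaks, all.inside = TRUE)
  tpr_mean <- tapply(tpr, factor(bin, levels = seq_len(nbin)), mean)
  mids <- breaks[-1] - diff(breaks) / 2          # bin midpoints
  out <- data.frame(fpr_bin_mean = mids, tpr_est_mean = as.numeric(tpr_mean))
  out[!is.na(out$tpr_est_mean), ]                # drop empty bins
}

# Two invented ROC curves (for example, two seeds), stacked into one set of points
fpr <- c(0, 0.1, 0.5, 1,   0, 0.3, 0.7, 1)
tpr <- c(0, 0.6, 0.9, 1,   0, 0.4, 0.8, 1)
roc_avg <- bin_average_roc(fpr, tpr, nbin = 5)
roc_avg
```

With many seeds and a fine grid (`fpr_nbin=500` in the calls that follow), the binned means trace an average ROC curve per method.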

roc_est_50 <- get_roc_est(res_merge, n1=50, fpr_nbin=500)

roc_est_50$method <- factor(roc_est_50$method)

# x-axis is 1 - specificity, i.e. the false positive rate
ggplot(subset(roc_est_50, fpr_bin_mean < .15 | tpr_est_mean < .15),
       aes(x=fpr_bin_mean, y=tpr_est_mean, col=method)) +
  geom_step() + xlab("False positive rate") + ylab("Sensitivity") +
  ggtitle("Sensitivity and false positive rate (ROC curve)")

roc_est_250 <- get_roc_est(res_merge, n1=250, fpr_nbin=500)

roc_est_250$method <- factor(roc_est_250$method)

# x-axis is 1 - specificity, i.e. the false positive rate
ggplot(subset(roc_est_250, fpr_bin_mean < .15 | tpr_est_mean < .15),
       aes(x=fpr_bin_mean, y=tpr_est_mean, col=method)) +
  geom_step() + xlab("False positive rate") + ylab("Sensitivity") +
  ggtitle("Sensitivity and false positive rate (ROC curve)")

Old

Some plotting and summary functions

# type I error related functions ----------

plot_oneiter_pval <- function(pvals_res_oneiter, cols, seed=1, bins=30) {

n_methods <- length(unique(pvals_res_oneiter$method))

print(

ggplot(pvals_res_oneiter, aes(x=pval, fill=method)) +

facet_wrap(~method) +

geom_histogram(bins=bins) +

# xlim(xlims[1],xlims[2]) +

scale_fill_manual(values=cols) )

}

plot_oneiter_qq <- function(pvals_res_oneiter, cols, plot_overlay=T,

title_label=NULL, xlims=c(0,1), pch.type="S") {

methods <- unique(pvals_res_oneiter$method)

n_methods <- length(methods)

if(plot_overlay) {

print(

ggplot(pvals_res_oneiter, aes(sample=pval, col=method)) +

stat_qq(cex=.7) +

scale_color_manual(values=cols) )

} else {

print(

ggplot(pvals_res_oneiter, aes(sample=pval, col=method)) +

facet_wrap(~method) +

stat_qq(cex=.7) +

scale_color_manual(values=cols) )

}

}

# power related functions ----------

get_roc_est <- function(pvals_res, fpr_nbin=100) {

method_list <- levels(factor(pvals_res$method))

seed_list <- unique(pvals_res$seed)

out_roc_est <- lapply(1:length(method_list), function(i) {

# `prop_null==prop_null` compares the column with itself, so it does not subset by prop_null

df_sub <- pvals_res %>% filter(method==method_list[i] & prop_null==prop_null)

roc_est_seed <- lapply(1:length(seed_list), function(j) {

roc_set_seed_one <- with(df_sub[df_sub$seed==seed_list[j],],

pROC::auc(response=truth_vec, predictor=qval))

fpr <- 1-attr(roc_set_seed_one, "roc")$specificities

tpr <- attr(roc_set_seed_one, "roc")$sensitivities

data.frame(fpr=fpr,tpr=tpr,seed=seed_list[j])

})

roc_est_seed <- do.call(rbind, roc_est_seed)

fpr_range <- range(roc_est_seed$fpr)

fpr_seq <- seq.int(from=fpr_range[1], to = fpr_range[2], length.out = fpr_nbin+1)

tpr_est_mean <- rep(NA, fpr_nbin)

for (index in 1:fpr_nbin) {

tpr_est_mean[index] <- mean( roc_est_seed$tpr[which(roc_est_seed$fpr >= fpr_seq[index] & roc_est_seed$fpr < fpr_seq[index+1])], na.rm=T)

}

fpr_bin_mean <- fpr_seq[-length(fpr_seq)]+(diff(fpr_seq)/2)

roc_bin_est <- data.frame(fpr_bin_mean=fpr_bin_mean,tpr_est_mean=tpr_est_mean)

roc_bin_est <- roc_bin_est[!is.na(roc_bin_est$tpr_est_mean),]

roc_bin_est$method <- method_list[i]

return(roc_bin_est)

})

out <- do.call(rbind, out_roc_est)

out$method <- factor(out$method)

return(out)

}

# Type I error

library(tidyverse)

plot_type1 <- function(res, alpha, labels,

args=list(prop_null, shuffle_sample, betasd,

labels)) {

n_methods <- length(unique(res$method))

cols <- RColorBrewer::brewer.pal(n_methods,name="Dark2")

res %>% filter(prop_null==args$prop_null & shuffle_sample == args$shuffle_sample & betasd == args$betasd) %>%

group_by(method, seed) %>%

summarise(type1=mean(pval<alpha, na.rm=T), nvalid=sum(!is.na(pval))) %>%

# mutate(type1=replace(type1, type1==0, NA)) %>%

group_by(method) %>%

summarise(mn=mean(type1, na.rm=T),

n=sum(!is.na(type1)), se=sd(type1, na.rm=T)/sqrt(n)) %>%

ggplot(., aes(x=method, y=mn, col=method)) +

geom_errorbar(aes(ymin=mn-se, ymax=mn+se), width=.3) +

geom_line() + geom_point() + xlab("") +

ylab("mean Type I error +/- s.e.") +

scale_x_discrete(position = "top",

labels=args$labels) +

scale_color_manual(values=cols)

}
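The summary inside `plot_type1` (per-seed type I error, then mean and standard error across seeds) can be sketched on simulated null p-values. The uniform p-values are an assumption standing in for a well-calibrated method under the null, and the number of seeds and genes is invented for illustration.

```r
# Sketch: type I error per simulated data set, then mean +/- s.e. across data sets.
# Uniform p-values are an assumption (a calibrated method under the null).
set.seed(1)
alpha   <- 0.05
n_seeds <- 20
n_genes <- 1000

# Fraction of null genes with p-value below alpha, one value per seed
type1 <- replicate(n_seeds, mean(runif(n_genes) < alpha))

mn <- mean(type1)
se <- sd(type1) / sqrt(length(type1))
c(lower = mn - se, mean = mn, upper = mn + se)
```

For a calibrated method the mean should sit close to `alpha`, which is what the error bars in the plot are meant to check.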

## FDR control at .1

plot_fdr <- function(res, args=list(prop_null, shuffle_sample, betasd), title) {

fdr_thres <- .1

n_methods <- length(unique(res$method))

cols <- RColorBrewer::brewer.pal(n_methods,name="Dark2")

library(cowplot)

title <- ggdraw() + draw_label(title, fontface='bold')

p1 <- res %>% group_by(method, seed, prop_null) %>%

filter(prop_null == args$prop_null & shuffle_sample==args$shuffle_sample & args$betasd==betasd) %>%

summarise(pos_sum = sum(qval < fdr_thres, na.rm=T)) %>%

group_by(method, prop_null) %>%

summarise(pos_sum_mn = mean(pos_sum),

pos_sum_n = sum(!is.na(pos_sum)),

pos_sum_se = sd(pos_sum)/sqrt(pos_sum_n)) %>%

ggplot(., aes(x=method, y=pos_sum_mn, col=method)) +

geom_errorbar(aes(ymin=pos_sum_mn-pos_sum_se,

ymax=pos_sum_mn+pos_sum_se), width=.3) +

geom_line() + geom_point() + xlab("") +

ylab("mean count of significant cases +/- s.e.") +

scale_x_discrete(position = "top",

labels=c("deseq2", "edger", "glm_q",

"limma_v", "mast", "t_test", "wilcox")) +

scale_color_manual(values=cols) +

ggtitle(paste("No. genes at q-value < ", fdr_thres, "(total 1K)"))

p2 <- res %>% group_by(method, seed, prop_null) %>%

filter(prop_null == args$prop_null & shuffle_sample==args$shuffle_sample & betasd ==args$betasd) %>%

summarise(false_pos_rate = sum(qval < fdr_thres & truth_vec==F, na.rm=T)/sum(qval < fdr_thres,

na.rm=T)) %>%

group_by(method, prop_null) %>%

summarise(false_pos_rate_mn = mean(false_pos_rate),

false_pos_rate_n = sum(!is.na(false_pos_rate)),

false_pos_rate_se = sd(false_pos_rate)/sqrt(false_pos_rate_n)) %>%

ggplot(., aes(x=method, y=false_pos_rate_mn, col=method)) +

geom_errorbar(aes(ymin=false_pos_rate_mn-false_pos_rate_se,

ymax=false_pos_rate_mn+false_pos_rate_se), width=.3) +

geom_line() + geom_point() + xlab("") +

geom_hline(yintercept=.1, col="gray40", lty=3) +

ylab("mean false discovery rate +/- s.e.") +

ggtitle(paste("Mean false discovery rate at q-value < ", fdr_thres)) +

scale_x_discrete(position = "top",

labels=c("deseq2", "edger", "glm_q",

"limma_v", "mast", "t_test", "wilcox")) +

scale_color_manual(values=cols)

print(plot_grid(title, plot_grid(p1,p2), ncol=1, rel_heights = c(.1,1)))

}

## Power: Mean AUC

plot_roc <- function(roc_est, cols,

title_label=NULL) {

n_methods <- length(unique(roc_est$method))

print(

ggplot(roc_est, aes(x=fpr_bin_mean,

y=tpr_est_mean, col=method)) +

# geom_hline(yintercept=alpha,

# color = "red", size=.5) +

geom_step() +

scale_color_manual(values=cols)

)

}

# AUC ----------

plot_auc <- function(res, args=list(prop_null, shuffle_sample, betasd)) {

library(pROC)

n_methods <- length(unique(res$method))

cols <- RColorBrewer::brewer.pal(n_methods,name="Dark2")

res %>% group_by(method, seed) %>%

filter(prop_null == args$prop_null & shuffle_sample == args$shuffle_sample & betasd == args$betasd) %>%

summarise(auc_est=roc(response=truth_vec, predictor=qval)$auc) %>%

group_by(method) %>%

summarise(auc_mean=mean(auc_est),

auc_n = sum(!is.na(auc_est)),

auc_se = sd(auc_est)/sqrt(auc_n)) %>%

ggplot(., aes(x=method, y=auc_mean, col=method)) +

geom_errorbar(aes(ymin=auc_mean-auc_se,

ymax=auc_mean+auc_se), width=.3) +

geom_line() + geom_point() + xlab("") +

ylab("mean AUC +/- s.e.") +

scale_color_manual(values=cols) +

scale_x_discrete(position = "top",

labels=c("deseq2", "edger", "glm_q",

"limma_v", "mast", "t_test", "wilcox"))

}

sessionInfo()

R version 3.5.1 (2018-07-02)

Platform: x86_64-pc-linux-gnu (64-bit)

Running under: Scientific Linux 7.4 (Nitrogen)

Matrix products: default

BLAS/LAPACK: /software/openblas-0.2.19-el7-x86_64/lib/libopenblas_haswellp-r0.2.19.so

locale:

[1] LC_CTYPE=en_US.UTF-8 LC_NUMERIC=C

[3] LC_TIME=en_US.UTF-8 LC_COLLATE=en_US.UTF-8

[5] LC_MONETARY=en_US.UTF-8 LC_MESSAGES=en_US.UTF-8

[7] LC_PAPER=en_US.UTF-8 LC_NAME=C

[9] LC_ADDRESS=C LC_TELEPHONE=C

[11] LC_MEASUREMENT=en_US.UTF-8 LC_IDENTIFICATION=C

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] cowplot_0.9.4 forcats_0.3.0 stringr_1.3.1 dplyr_0.8.0.1

[5] purrr_0.3.2 readr_1.3.1 tidyr_0.8.3 tibble_2.1.1

[9] ggplot2_3.1.0 tidyverse_1.2.1 dscrutils_0.3.8

loaded via a namespace (and not attached):

[1] Rcpp_1.0.1 RColorBrewer_1.1-2 cellranger_1.1.0

[4] plyr_1.8.4 compiler_3.5.1 pillar_1.3.1

[7] git2r_0.23.0 workflowr_1.3.0 tools_3.5.1

[10] digest_0.6.18 lubridate_1.7.4 jsonlite_1.6

[13] evaluate_0.12 nlme_3.1-137 gtable_0.2.0

[16] lattice_0.20-38 pkgconfig_2.0.2 rlang_0.3.4

[19] cli_1.0.1 rstudioapi_0.10 yaml_2.2.0

[22] haven_1.1.2 withr_2.1.2 xml2_1.2.0

[25] httr_1.3.1 knitr_1.20 pROC_1.13.0

[28] hms_0.4.2 generics_0.0.2 fs_1.2.6

[31] rprojroot_1.3-2 grid_3.5.1 tidyselect_0.2.5

[34] glue_1.3.0 R6_2.4.0 readxl_1.1.0

[37] rmarkdown_1.10 modelr_0.1.2 magrittr_1.5

[40] whisker_0.3-2 backports_1.1.2 scales_1.0.0

[43] htmltools_0.3.6 rvest_0.3.2 assertthat_0.2.0

[46] colorspace_1.3-2 labeling_0.3 stringi_1.2.4

[49] lazyeval_0.2.1 munsell_0.5.0 broom_0.5.1

[52] crayon_1.3.4