Identify clusters in 68k PBMC data using a topic model

Peter Carbonetto

Last updated: 2021-01-08

Knit directory: single-cell-topics/analysis/

The command set.seed(1) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

The results in this page were generated with repository version 6bb17bb. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/clusters_68k_pbmc.Rmd) and HTML (docs/clusters_68k_pbmc.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | 6bb17bb | Peter Carbonetto | 2021-01-08 | workflowr::wflow_publish("clusters_68k_pbmc.Rmd") |
| Rmd | d857fc3 | Peter Carbonetto | 2021-01-08 | Added more clusters to 68k data. |
| Rmd | 7b0b312 | Peter Carbonetto | 2021-01-08 | Working on clustering of 68k pbmc data. |
| html | 3797287 | Peter Carbonetto | 2021-01-08 | First (very preliminary) build of clusters_68k_pbmc analysis. |
| Rmd | 7898f7d | Peter Carbonetto | 2021-01-08 | workflowr::wflow_publish("clusters_68k_pbmc.Rmd") |
| Rmd | b9b3185 | Peter Carbonetto | 2020-12-30 | A little more re-organizing. |

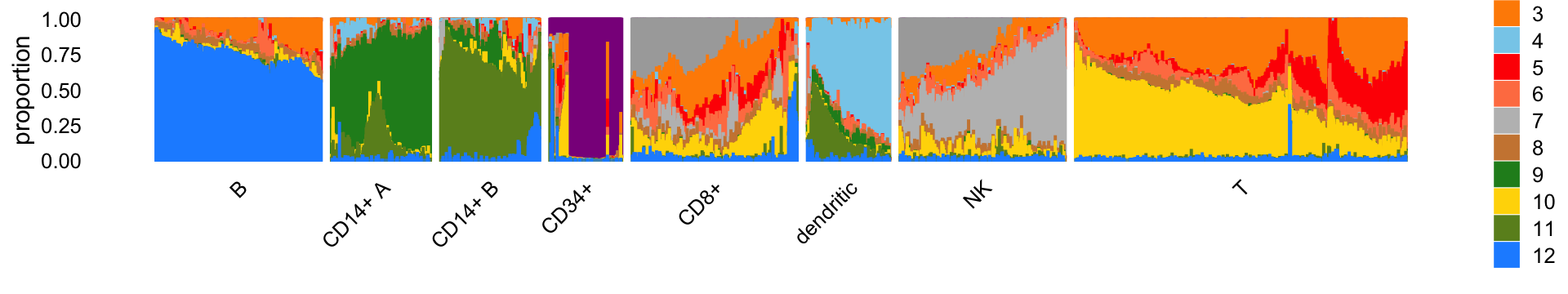

Here we identify clusters of cells from the mixture proportions estimated in the 68k PBMC data.

Load the packages used in the analysis below, as well as additional functions that we will use to generate some of the plots.

library(Matrix)

library(fastTopics)

library(ggplot2)

library(cowplot)

Load the count data.

load("../data/pbmc_68k.RData")

Load the \(K = 12\) Poisson NMF model fit and convert it to a multinomial topic model fit.
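The fit loaded in the next chunk is passed through `poisson2multinom`, which recovers a multinomial topic model from the Poisson NMF fit. As a rough illustration of what such a rescaling involves, the base R sketch below works on a small made-up factorization. The names `to_multinom`, `loadings` and `factors` are hypothetical, and this is not the fastTopics implementation.

```r
# Toy Poisson NMF factorization: rates = loadings %*% t(factors).
# loadings is n x K, factors is m x K; all values are made up.
set.seed(1)
n <- 5; m <- 4; K <- 3
loadings <- matrix(runif(n * K), n, K)
factors  <- matrix(runif(m * K), m, K)

# Hypothetical helper: rescale so that each column of the factors
# matrix sums to 1 and each row of the loadings matrix sums to 1.
to_multinom <- function (loadings, factors) {
  u  <- colSums(factors)              # scale of each factor
  F2 <- sweep(factors, 2, u, "/")     # columns of F2 sum to 1
  L2 <- sweep(loadings, 2, u, "*")    # absorb the scales into the loadings
  s  <- rowSums(L2)                   # one "size factor" per row (cell)
  L2 <- L2 / s                        # rows of L2 sum to 1
  list(L = L2, F = F2, s = s)
}

out <- to_multinom(loadings, factors)

# The Poisson rates are unchanged once the size factors are put back.
rates_before <- loadings %*% t(factors)
rates_after  <- out$s * (out$L %*% t(out$F))
print(all.equal(rates_before, rates_after))
```

After the rescaling, each row of the loadings matrix can be read as the mixture proportions of one cell, which is what the PCA further down operates on.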

fit <- readRDS("../output/pbmc-68k/rds/fit-pbmc-68k-scd-ex-k=12.rds")$fit

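fit <- poisson2multinom(fit)

From the principal components (PCs) of the mixture proportions, we define an initial set of clusters by applying cutoffs to individual PCs. Cells not captured by any cutoff keep the label "U" (unassigned).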
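Before the cutoffs used for the 68k PBMC data, here is a toy sketch of the general idea on simulated proportions: compute the PCs, start with every cell unlabeled, and label the cells on one side of a cutoff. The data, the cutoff of 0.3 and the label "A" are all made up. Because the sign of a PC is arbitrary, the sketch orients PC1 explicitly; the cutoffs in the analysis itself are specific to its fit.

```r
set.seed(1)

# Toy "mixture proportions": 200 cells x 3 topics, each row sums to 1.
# The first 50 cells are dominated by topic 1; the other 150 are not.
n  <- 200
t1 <- c(runif(50, 0.8, 1), runif(150, 0, 0.1))
t2 <- c(rep(0, 50), runif(150, 0.4, 0.6))
props <- cbind(t1, t2, 1 - t1 - t2)

# PCA of the proportions (prcomp centers the columns by default).
pcs <- prcomp(props)$x

# Orient PC1 so that larger values mean "more topic 1".
pc1 <- pcs[, 1] * sign(cor(pcs[, 1], props[, 1]))

# Start with all cells unassigned ("U"), then label one side of the cutoff.
labels <- rep("U", n)
labels[pc1 > 0.3] <- "A"
print(table(labels))
```

The code that follows applies the same pattern to PCs 2, 4, 8 and 10 of the fitted mixture proportions.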

pca <- prcomp(fit$L)$x

n <- nrow(pca)

x <- rep("U",n)

pc2 <- pca[,2]

pc4 <- pca[,4]

pc8 <- pca[,8]

pc10 <- pca[,10]

x[pc2 < -0.4] <- "B"

x[pc4 < -0.15] <- "CD14+"

x[pc8 > 0.2] <- "dendritic"

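x[pc10 > 0.1] <- "CD34+"

Next, we take the cells that are still unassigned ("U"), recompute the PCs from this subset only, and divide the subset into T, CD8+ and NK clusters using two linear boundaries in the plane of PCs 1 and 2.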
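The next step classifies cells with straight-line boundaries in the plane of two PCs, and a rule applied later overrides one applied earlier. The sketch below traces that logic on five hand-picked points standing in for PC1 and PC2 of the unassigned subset. The coordinates and the starting labels are invented for illustration, while the two lines are the ones used in the analysis code.

```r
# Labels for 7 toy cells; only the "U" cells are subdivided.
x <- c("B", "U", "U", "CD14+", "U", "U", "U")
rows <- which(x == "U")

# Made-up coordinates standing in for PC1 and PC2 of the "U" subset.
pc1 <- c(-0.3, 0.1, 0.5, -0.2, 0.0)
pc2 <- c( 0.0, 0.0, 0.2,  0.1, 0.3)

# Default label, then two linear boundaries. The second rule is
# applied last, so it wins wherever both conditions hold.
y <- rep("T", length(rows))
y[pc2 > -1.7*pc1 - 0.11]  <- "CD8+"
y[pc2 > -1.55*pc1 + 0.55] <- "NK"

# Write the refined labels back into the full label vector.
x[rows] <- y
print(x)
```

Cells outside the subset keep their earlier labels, because only the positions in `rows` are overwritten.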

rows <- which(x == "U")

n <- length(rows)

fit2 <- select_loadings(fit,loadings = rows)

pca <- prcomp(fit2$L)$x

y <- rep("T",n)

pc1 <- pca[,1]

pc2 <- pca[,2]

y[pc2 > -1.7*pc1 - 0.11] <- "CD8+"

y[pc2 > -1.55*pc1 + 0.55] <- "NK"

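x[rows] <- y

We then split the CD14+ cluster into two subclusters, "CD14+ A" and "CD14+ B", according to the sign of the first PC computed from the CD14+ cells only.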

rows <- which(x == "CD14+")

n <- length(rows)

fit2 <- select_loadings(fit,loadings = rows)

pca <- prcomp(fit2$L)$x

y <- rep("CD14+ A",n)

pc1 <- pca[,1]

y[pc1 > 0] <- "CD14+ B"

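x[rows] <- y

Finally, we recompute the PCs for the T cells. No further subdivision of this cluster is made here, so all of these cells keep the label "T".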

rows <- which(x == "T")

n <- length(rows)

fit2 <- select_loadings(fit,loadings = rows)

pca <- prcomp(fit2$L)$x

y <- rep("T",n)

pc1 <- pca[,1]

pc2 <- pca[,2]

x[rows] <- y

In summary, we have subdivided the cells into 8 subsets:

samples$cluster <- factor(x)

table(samples$cluster)

#
#         B   CD14+ A   CD14+ B     CD34+      CD8+ dendritic        NK         T
#      3492      1714      2432       218     11354       774      9873     38722

The Structure plot summarizes the mixture proportions in each of the 8 clusters:
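The plotting code that follows draws a random subset of cells from each cluster and keeps every cell of the smallest cluster. Here is a minimal sketch of that kind of per-cluster subsampling, assuming a single cap for all clusters (the analysis uses a different number per cluster). The helper `sample_per_cluster` is hypothetical and the labels are made up.

```r
set.seed(1)

# Made-up cluster labels for 1,000 cells; "rare" has only 20 members.
cluster <- factor(c(rep("big", 700), rep("medium", 280), rep("rare", 20)))

# Hypothetical helper: return sorted row indices with at most n_max
# cells per cluster. Clusters smaller than n_max are kept whole.
sample_per_cluster <- function (cluster, n_max) {
  rows <- lapply(levels(cluster), function (k) {
    i <- which(cluster == k)
    if (length(i) > n_max)
      i <- sample(i, n_max)
    i
  })
  sort(unlist(rows))
}

rows <- sample_per_cluster(cluster, n_max = 100)
print(table(cluster[rows]))
```

Sorting the indices keeps the selected cells in their original order, which makes it easy to subset the labels and the fit with the same `rows` vector.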

set.seed(1)

topic_colors <- c("darkmagenta", # CD34+

"darkgray", # NK 2

"darkorange", # T cells 2

"skyblue", # dendritic

"red", # T cells 3

"coral",

"gray", # NK 1

"peru",

"forestgreen", # CD14+ A

"gold", # T cells 1

"olivedrab", # CD14+ B

"dodgerblue") # B cells

x <- samples$cluster

rows <- sort(c(sample(which(x == "B"),500),

sample(which(x == "CD14+ A"),300),

sample(which(x == "CD14+ B"),300),

which(x == "CD34+"),

sample(which(x == "CD8+"),500),

sample(which(x == "dendritic"),250),

sample(which(x == "NK"),500),

sample(which(x == "T"),1000)))

p1 <- structure_plot(select_loadings(fit,loadings = rows),

grouping = x[rows],topics = 1:12,

colors = topic_colors,

n = Inf,gap = 30,

num_threads = 4,verbose = TRUE)

# Perplexity automatically changed to 98 because original setting of 100 was too large for the number of samples (300)

# Perplexity automatically changed to 98 because original setting of 100 was too large for the number of samples (300)

# Perplexity automatically changed to 71 because original setting of 100 was too large for the number of samples (218)

# Perplexity automatically changed to 82 because original setting of 100 was too large for the number of samples (250)

print(p1)

# Read the 500 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 100.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.43 seconds (sparsity = 0.742920)!

# Learning embedding...

# Iteration 50: error is 47.638464 (50 iterations in 0.15 seconds)

# Iteration 100: error is 47.638462 (50 iterations in 0.14 seconds)

# Iteration 150: error is 47.638413 (50 iterations in 0.14 seconds)

# Iteration 200: error is 47.637309 (50 iterations in 0.14 seconds)

# Iteration 250: error is 47.613655 (50 iterations in 0.14 seconds)

# Iteration 300: error is 0.609044 (50 iterations in 0.13 seconds)

# Iteration 350: error is 0.608733 (50 iterations in 0.14 seconds)

# Iteration 400: error is 0.608737 (50 iterations in 0.14 seconds)

# Iteration 450: error is 0.608735 (50 iterations in 0.14 seconds)

# Iteration 500: error is 0.608735 (50 iterations in 0.14 seconds)

# Iteration 550: error is 0.608735 (50 iterations in 0.14 seconds)

# Iteration 600: error is 0.608735 (50 iterations in 0.13 seconds)

# Iteration 650: error is 0.608735 (50 iterations in 0.13 seconds)

# Iteration 700: error is 0.608735 (50 iterations in 0.13 seconds)

# Iteration 750: error is 0.608735 (50 iterations in 0.13 seconds)

# Iteration 800: error is 0.608735 (50 iterations in 0.14 seconds)

# Iteration 850: error is 0.608735 (50 iterations in 0.13 seconds)

# Iteration 900: error is 0.608735 (50 iterations in 0.13 seconds)

# Iteration 950: error is 0.608735 (50 iterations in 0.14 seconds)

# Iteration 1000: error is 0.608735 (50 iterations in 0.13 seconds)

# Fitting performed in 2.73 seconds.

# Read the 300 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 98.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.26 seconds (sparsity = 0.996111)!

# Learning embedding...

# Iteration 50: error is 41.898241 (50 iterations in 0.08 seconds)

# Iteration 100: error is 41.895377 (50 iterations in 0.12 seconds)

# Iteration 150: error is 41.893294 (50 iterations in 0.09 seconds)

# Iteration 200: error is 41.888640 (50 iterations in 0.12 seconds)

# Iteration 250: error is 41.890229 (50 iterations in 0.11 seconds)

# Iteration 300: error is 0.575567 (50 iterations in 0.10 seconds)

# Iteration 350: error is 0.572624 (50 iterations in 0.09 seconds)

# Iteration 400: error is 0.572603 (50 iterations in 0.08 seconds)

# Iteration 450: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 500: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 550: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 600: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 650: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 700: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 750: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 800: error is 0.572604 (50 iterations in 0.07 seconds)

# Iteration 850: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 900: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 950: error is 0.572604 (50 iterations in 0.08 seconds)

# Iteration 1000: error is 0.572604 (50 iterations in 0.08 seconds)

# Fitting performed in 1.72 seconds.

# Read the 300 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 98.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.26 seconds (sparsity = 0.996111)!

# Learning embedding...

# Iteration 50: error is 41.760346 (50 iterations in 0.09 seconds)

# Iteration 100: error is 41.760525 (50 iterations in 0.09 seconds)

# Iteration 150: error is 41.757688 (50 iterations in 0.09 seconds)

# Iteration 200: error is 41.756017 (50 iterations in 0.09 seconds)

# Iteration 250: error is 41.754217 (50 iterations in 0.09 seconds)

# Iteration 300: error is 0.407453 (50 iterations in 0.08 seconds)

# Iteration 350: error is 0.406339 (50 iterations in 0.08 seconds)

# Iteration 400: error is 0.406324 (50 iterations in 0.08 seconds)

# Iteration 450: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 500: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 550: error is 0.406325 (50 iterations in 0.09 seconds)

# Iteration 600: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 650: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 700: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 750: error is 0.406325 (50 iterations in 0.09 seconds)

# Iteration 800: error is 0.406325 (50 iterations in 0.09 seconds)

# Iteration 850: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 900: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 950: error is 0.406325 (50 iterations in 0.08 seconds)

# Iteration 1000: error is 0.406325 (50 iterations in 0.09 seconds)

# Fitting performed in 1.70 seconds.

# Read the 218 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 71.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.11 seconds (sparsity = 0.994740)!

# Learning embedding...

# Iteration 50: error is 43.099303 (50 iterations in 0.06 seconds)

# Iteration 100: error is 42.599924 (50 iterations in 0.06 seconds)

# Iteration 150: error is 42.020502 (50 iterations in 0.06 seconds)

# Iteration 200: error is 41.467851 (50 iterations in 0.06 seconds)

# Iteration 250: error is 41.513416 (50 iterations in 0.06 seconds)

# Iteration 300: error is 0.431792 (50 iterations in 0.06 seconds)

# Iteration 350: error is 0.429353 (50 iterations in 0.06 seconds)

# Iteration 400: error is 0.429361 (50 iterations in 0.05 seconds)

# Iteration 450: error is 0.429362 (50 iterations in 0.06 seconds)

# Iteration 500: error is 0.429362 (50 iterations in 0.06 seconds)

# Iteration 550: error is 0.429361 (50 iterations in 0.06 seconds)

# Iteration 600: error is 0.429361 (50 iterations in 0.06 seconds)

# Iteration 650: error is 0.429361 (50 iterations in 0.06 seconds)

# Iteration 700: error is 0.429362 (50 iterations in 0.06 seconds)

# Iteration 750: error is 0.429362 (50 iterations in 0.06 seconds)

# Iteration 800: error is 0.429361 (50 iterations in 0.06 seconds)

# Iteration 850: error is 0.429362 (50 iterations in 0.06 seconds)

# Iteration 900: error is 0.429361 (50 iterations in 0.06 seconds)

# Iteration 950: error is 0.429361 (50 iterations in 0.06 seconds)

# Iteration 1000: error is 0.429361 (50 iterations in 0.06 seconds)

# Fitting performed in 1.18 seconds.

# Read the 500 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 100.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.45 seconds (sparsity = 0.757144)!

# Learning embedding...

# Iteration 50: error is 47.605878 (50 iterations in 0.15 seconds)

# Iteration 100: error is 47.605878 (50 iterations in 0.17 seconds)

# Iteration 150: error is 47.605878 (50 iterations in 0.19 seconds)

# Iteration 200: error is 47.605878 (50 iterations in 0.20 seconds)

# Iteration 250: error is 47.605878 (50 iterations in 0.21 seconds)

# Iteration 300: error is 0.793471 (50 iterations in 0.19 seconds)

# Iteration 350: error is 0.750964 (50 iterations in 0.15 seconds)

# Iteration 400: error is 0.746936 (50 iterations in 0.14 seconds)

# Iteration 450: error is 0.746925 (50 iterations in 0.14 seconds)

# Iteration 500: error is 0.746926 (50 iterations in 0.15 seconds)

# Iteration 550: error is 0.746926 (50 iterations in 0.14 seconds)

# Iteration 600: error is 0.746926 (50 iterations in 0.16 seconds)

# Iteration 650: error is 0.746926 (50 iterations in 0.14 seconds)

# Iteration 700: error is 0.746926 (50 iterations in 0.17 seconds)

# Iteration 750: error is 0.746926 (50 iterations in 0.17 seconds)

# Iteration 800: error is 0.746926 (50 iterations in 0.15 seconds)

# Iteration 850: error is 0.746926 (50 iterations in 0.14 seconds)

# Iteration 900: error is 0.746926 (50 iterations in 0.15 seconds)

# Iteration 950: error is 0.746926 (50 iterations in 0.14 seconds)

# Iteration 1000: error is 0.746926 (50 iterations in 0.15 seconds)

# Fitting performed in 3.18 seconds.

# Read the 250 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 82.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.16 seconds (sparsity = 0.995744)!

# Learning embedding...

# Iteration 50: error is 42.049296 (50 iterations in 0.07 seconds)

# Iteration 100: error is 42.000455 (50 iterations in 0.07 seconds)

# Iteration 150: error is 42.012136 (50 iterations in 0.07 seconds)

# Iteration 200: error is 42.001540 (50 iterations in 0.07 seconds)

# Iteration 250: error is 42.006459 (50 iterations in 0.08 seconds)

# Iteration 300: error is 0.314057 (50 iterations in 0.07 seconds)

# Iteration 350: error is 0.310530 (50 iterations in 0.06 seconds)

# Iteration 400: error is 0.310511 (50 iterations in 0.06 seconds)

# Iteration 450: error is 0.310510 (50 iterations in 0.06 seconds)

# Iteration 500: error is 0.310511 (50 iterations in 0.06 seconds)

# Iteration 550: error is 0.310512 (50 iterations in 0.06 seconds)

# Iteration 600: error is 0.310510 (50 iterations in 0.07 seconds)

# Iteration 650: error is 0.310511 (50 iterations in 0.06 seconds)

# Iteration 700: error is 0.310511 (50 iterations in 0.06 seconds)

# Iteration 750: error is 0.310510 (50 iterations in 0.06 seconds)

# Iteration 800: error is 0.310511 (50 iterations in 0.06 seconds)

# Iteration 850: error is 0.310510 (50 iterations in 0.06 seconds)

# Iteration 900: error is 0.310511 (50 iterations in 0.06 seconds)

# Iteration 950: error is 0.310510 (50 iterations in 0.06 seconds)

# Iteration 1000: error is 0.310512 (50 iterations in 0.07 seconds)

# Fitting performed in 1.30 seconds.

# Read the 500 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 100.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.39 seconds (sparsity = 0.697040)!

# Learning embedding...

# Iteration 50: error is 47.598205 (50 iterations in 0.15 seconds)

# Iteration 100: error is 44.533920 (50 iterations in 0.14 seconds)

# Iteration 150: error is 44.532870 (50 iterations in 0.14 seconds)

# Iteration 200: error is 44.532870 (50 iterations in 0.14 seconds)

# Iteration 250: error is 44.532869 (50 iterations in 0.14 seconds)

# Iteration 300: error is 0.527663 (50 iterations in 0.14 seconds)

# Iteration 350: error is 0.527308 (50 iterations in 0.14 seconds)

# Iteration 400: error is 0.527307 (50 iterations in 0.14 seconds)

# Iteration 450: error is 0.527308 (50 iterations in 0.13 seconds)

# Iteration 500: error is 0.527308 (50 iterations in 0.13 seconds)

# Iteration 550: error is 0.527308 (50 iterations in 0.13 seconds)

# Iteration 600: error is 0.527307 (50 iterations in 0.14 seconds)

# Iteration 650: error is 0.527307 (50 iterations in 0.13 seconds)

# Iteration 700: error is 0.527308 (50 iterations in 0.14 seconds)

# Iteration 750: error is 0.527308 (50 iterations in 0.13 seconds)

# Iteration 800: error is 0.527308 (50 iterations in 0.13 seconds)

# Iteration 850: error is 0.527308 (50 iterations in 0.13 seconds)

# Iteration 900: error is 0.527308 (50 iterations in 0.13 seconds)

# Iteration 950: error is 0.527308 (50 iterations in 0.14 seconds)

# Iteration 1000: error is 0.527308 (50 iterations in 0.14 seconds)

# Fitting performed in 2.74 seconds.

# Read the 1000 x 12 data matrix successfully!

# OpenMP is working. 4 threads.

# Using no_dims = 1, perplexity = 100.000000, and theta = 0.100000

# Computing input similarities...

# Building tree...

# Done in 0.89 seconds (sparsity = 0.379990)!

# Learning embedding...

# Iteration 50: error is 54.552828 (50 iterations in 0.36 seconds)

# Iteration 100: error is 51.879913 (50 iterations in 0.34 seconds)

# Iteration 150: error is 51.879523 (50 iterations in 0.28 seconds)

# Iteration 200: error is 51.879521 (50 iterations in 0.28 seconds)

# Iteration 250: error is 51.879528 (50 iterations in 0.29 seconds)

# Iteration 300: error is 0.862462 (50 iterations in 0.30 seconds)

# Iteration 350: error is 0.829645 (50 iterations in 0.29 seconds)

# Iteration 400: error is 0.825700 (50 iterations in 0.31 seconds)

# Iteration 450: error is 0.825325 (50 iterations in 0.30 seconds)

# Iteration 500: error is 0.825298 (50 iterations in 0.29 seconds)

# Iteration 550: error is 0.825303 (50 iterations in 0.30 seconds)

# Iteration 600: error is 0.825302 (50 iterations in 0.29 seconds)

# Iteration 650: error is 0.825301 (50 iterations in 0.29 seconds)

# Iteration 700: error is 0.825301 (50 iterations in 0.29 seconds)

# Iteration 750: error is 0.825302 (50 iterations in 0.29 seconds)

# Iteration 800: error is 0.825302 (50 iterations in 0.29 seconds)

# Iteration 850: error is 0.825301 (50 iterations in 0.29 seconds)

# Iteration 900: error is 0.825301 (50 iterations in 0.29 seconds)

# Iteration 950: error is 0.825302 (50 iterations in 0.30 seconds)

# Iteration 1000: error is 0.825301 (50 iterations in 0.29 seconds)

# Fitting performed in 5.96 seconds.

Save the clustering of the 68k PBMC data to an RDS file.

saveRDS(samples,"clustering-pbmc-68k.rds")

facs_colors <- c("forestgreen",

"dodgerblue",

"darkmagenta",

"firebrick",

"gray",

"tomato",

"yellow",

"magenta",

"darkorange",

"gold",

"darkblue",

"greenyellow")

p1 <- pca_plot(fit2,pcs = 1:2,fill = samples$celltype[rows]) +

scale_fill_manual(values = facs_colors)

p2 <- pca_plot(fit2,pcs = 1:2,fill = factor(x[rows]))

p3 <- pca_hexbin_plot(fit2,pcs = 1:2) +

scale_x_continuous(breaks = seq(-1,1,0.1)) +

scale_y_continuous(breaks = seq(-1,1,0.1)) +

theme_cowplot(font_size = 8)

plot_grid(p1,p2,p3,rel_widths = c(4,3,3),ncol = 3)
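The plotting code above colors the same two PCs by `samples$celltype` and by the cluster labels. A numeric complement to that visual comparison is a cross-tabulation of the two labelings. The sketch below uses ten made-up cells; the vectors `celltype` and `cluster` are stand-ins, not the real annotations.

```r
# Made-up labels for 10 cells: an external annotation and a clustering.
celltype <- factor(c("B", "B", "B", "T", "T", "T", "T", "NK", "NK", "NK"))
cluster  <- factor(c("B", "B", "T", "T", "T", "T", "NK", "NK", "NK", "NK"))

# Rows are annotated cell types, columns are clusters.
counts <- table(celltype, cluster)
print(counts)

# Fraction of each annotated cell type that falls into each cluster.
frac <- prop.table(counts, margin = 1)
print(round(frac, 2))

# Share of cells whose cluster name matches the annotation. This is only
# meaningful when the two labelings happen to use the same names.
agreement <- mean(as.character(celltype) == as.character(cluster))
print(agreement)
```

Large off-diagonal counts point to cell types that the clustering merges or splits, which is the same pattern the side-by-side PC plots are meant to reveal.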

sessionInfo()

# R version 3.6.2 (2019-12-12)

# Platform: x86_64-apple-darwin15.6.0 (64-bit)

# Running under: macOS Catalina 10.15.7

#

# Matrix products: default

# BLAS: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRblas.0.dylib

# LAPACK: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRlapack.dylib

#

# locale:

# [1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

#

# attached base packages:

# [1] stats graphics grDevices utils datasets methods base

#

# other attached packages:

# [1] cowplot_1.0.0 ggplot2_3.3.0 fastTopics_0.4-13 Matrix_1.2-18

#

# loaded via a namespace (and not attached):

# [1] ggrepel_0.9.0 Rcpp_1.0.5 lattice_0.20-38

# [4] tidyr_1.0.0 prettyunits_1.1.1 assertthat_0.2.1

# [7] zeallot_0.1.0 rprojroot_1.3-2 digest_0.6.23

# [10] R6_2.4.1 backports_1.1.5 MatrixModels_0.4-1

# [13] evaluate_0.14 coda_0.19-3 httr_1.4.2

# [16] pillar_1.4.3 rlang_0.4.5 progress_1.2.2

# [19] lazyeval_0.2.2 data.table_1.12.8 irlba_2.3.3

# [22] SparseM_1.78 whisker_0.4 rmarkdown_2.3

# [25] labeling_0.3 Rtsne_0.15 stringr_1.4.0

# [28] htmlwidgets_1.5.1 munsell_0.5.0 compiler_3.6.2

# [31] httpuv_1.5.2 xfun_0.11 pkgconfig_2.0.3

# [34] mcmc_0.9-6 htmltools_0.4.0 tidyselect_0.2.5

# [37] tibble_2.1.3 workflowr_1.6.2.9000 quadprog_1.5-8

# [40] viridisLite_0.3.0 crayon_1.3.4 dplyr_0.8.3

# [43] withr_2.1.2 later_1.0.0 MASS_7.3-51.4

# [46] grid_3.6.2 jsonlite_1.6 gtable_0.3.0

# [49] lifecycle_0.1.0 git2r_0.26.1 magrittr_1.5

# [52] scales_1.1.0 RcppParallel_4.4.2 stringi_1.4.3

# [55] farver_2.0.1 fs_1.3.1 promises_1.1.0

# [58] vctrs_0.2.1 tools_3.6.2 glue_1.3.1

# [61] purrr_0.3.3 hms_0.5.2 yaml_2.2.0

# [64] colorspace_1.4-1 plotly_4.9.2 knitr_1.26

# [67] quantreg_5.54 MCMCpack_1.4-5