Analysis of Experiment 2

Last updated: 2020-04-25

Checks: 7 0

Knit directory: social_immunity/

This reproducible R Markdown analysis was created with workflowr (version 1.6.0). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20191017) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

These are the previous versions of the R Markdown and HTML files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view them.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | 76a317d | lukeholman | 2020-04-25 | tweaks |

| html | 2235ae4 | lukeholman | 2020-04-25 | Build site. |

| Rmd | 99649a7 | lukeholman | 2020-04-25 | tweaks |

| html | 99649a7 | lukeholman | 2020-04-25 | tweaks |

| html | 0ede6e3 | lukeholman | 2020-04-24 | Build site. |

| Rmd | a1f8dc2 | lukeholman | 2020-04-24 | tweaks |

| html | 8c3b471 | lukeholman | 2020-04-21 | Build site. |

| Rmd | 1ce9e19 | lukeholman | 2020-04-21 | First commit 2020 |

| html | 1ce9e19 | lukeholman | 2020-04-21 | First commit 2020 |

| Rmd | aae65cf | lukeholman | 2019-10-17 | First commit |

| html | aae65cf | lukeholman | 2019-10-17 | First commit |

Load data and R packages

# All but 1 of these packages can be easily installed from CRAN.

# However it was harder to install the showtext package. On Mac, I did this:

# installed 'homebrew' using Terminal: ruby -e "$(curl -fsSL https://raw.githubusercontent.com/Homebrew/install/master/install)"

# installed 'libpng' using Terminal: brew install libpng

# installed 'showtext' in R using: devtools::install_github("yixuan/showtext")

library(showtext)

library(brms)

library(bayesplot)

library(tidyverse)

library(gridExtra)

library(kableExtra)

library(bayestestR)

library(tidybayes)

library(cowplot)

source("code/helper_functions.R")

# set up nice font for figure

nice_font <- "PT Serif"

font_add_google(name = nice_font, family = nice_font, regular.wt = 400, bold.wt = 700)

showtext_auto()

exp2_treatments <- c("Ringers", "LPS")

durations <- read_csv("data/data_collection_sheets/experiment_durations.csv") %>%

filter(experiment == 2) %>% select(-experiment)

outcome_tally <- read_csv(file = "data/clean_data/experiment_2_outcome_tally.csv") %>%

mutate(

outcome = str_replace_all(outcome, "Stayed inside the hive", "Stayed inside"),

outcome = str_replace_all(outcome, "Left of own volition", "Left voluntarily"),

outcome = factor(outcome, levels = c("Stayed inside", "Left voluntarily", "Forced out")),

treatment = str_replace_all(treatment, "Ringer CHC", "Ringers"),

treatment = str_replace_all(treatment, "LPS CHC", "LPS"),

treatment = factor(treatment, levels = exp2_treatments))

# Re-formatted version of the same data, where each row is an individual bee. We need this format to run the brms model.

data_for_categorical_model <- outcome_tally %>%

mutate(id = 1:n()) %>%

split(.$id) %>%

map(function(x){

if(x$n[1] == 0) return(NULL)

data.frame(

treatment = x$treatment[1],

hive = x$hive[1],

colour = x$colour[1],

outcome = rep(x$outcome[1], x$n))

}) %>% do.call("rbind", .) %>% as_tibble() %>%

arrange(hive, treatment) %>%

mutate(outcome_numeric = as.numeric(outcome),

hive = as.character(hive),

treatment = factor(treatment, levels = exp2_treatments)) %>%

left_join(durations, by = "hive") %>%

mutate(hive = C(factor(hive), sum)) # use "sum coding" for hive, since there is no obvious reference levelInspect the raw data

Sample sizes by treatment

sample_sizes <- data_for_categorical_model %>%

group_by(treatment) %>%

summarise(n = n())

sample_sizes %>%

kable() %>% kable_styling(full_width = FALSE)| treatment | n |

|---|---|

| Ringers | 294 |

| LPS | 291 |

Sample sizes by treatment and hive

data_for_categorical_model %>%

group_by(hive, treatment) %>%

summarise(n = n()) %>%

kable() %>% kable_styling(full_width = FALSE)| hive | treatment | n |

|---|---|---|

| Arts | Ringers | 70 |

| Arts | LPS | 68 |

| Garden | Ringers | 75 |

| Garden | LPS | 75 |

| Skylab | Ringers | 99 |

| Skylab | LPS | 100 |

| Zoology | Ringers | 50 |

| Zoology | LPS | 48 |

Inspect the results

outcome_tally %>%

select(-colour) %>%

kable(digits = 3) %>% kable_styling(full_width = FALSE) %>%

scroll_box(height = "380px")| hive | treatment | outcome | n |

|---|---|---|---|

| Arts | LPS | Stayed inside | 56 |

| Arts | LPS | Left voluntarily | 5 |

| Arts | LPS | Forced out | 7 |

| Arts | Ringers | Stayed inside | 64 |

| Arts | Ringers | Left voluntarily | 5 |

| Arts | Ringers | Forced out | 1 |

| Garden | LPS | Stayed inside | 70 |

| Garden | LPS | Left voluntarily | 2 |

| Garden | LPS | Forced out | 3 |

| Garden | Ringers | Stayed inside | 73 |

| Garden | Ringers | Left voluntarily | 2 |

| Garden | Ringers | Forced out | 0 |

| Skylab | LPS | Stayed inside | 93 |

| Skylab | LPS | Left voluntarily | 2 |

| Skylab | LPS | Forced out | 5 |

| Skylab | Ringers | Stayed inside | 97 |

| Skylab | Ringers | Left voluntarily | 1 |

| Skylab | Ringers | Forced out | 1 |

| Zoology | LPS | Stayed inside | 38 |

| Zoology | LPS | Left voluntarily | 4 |

| Zoology | LPS | Forced out | 6 |

| Zoology | Ringers | Stayed inside | 42 |

| Zoology | Ringers | Left voluntarily | 2 |

| Zoology | Ringers | Forced out | 6 |

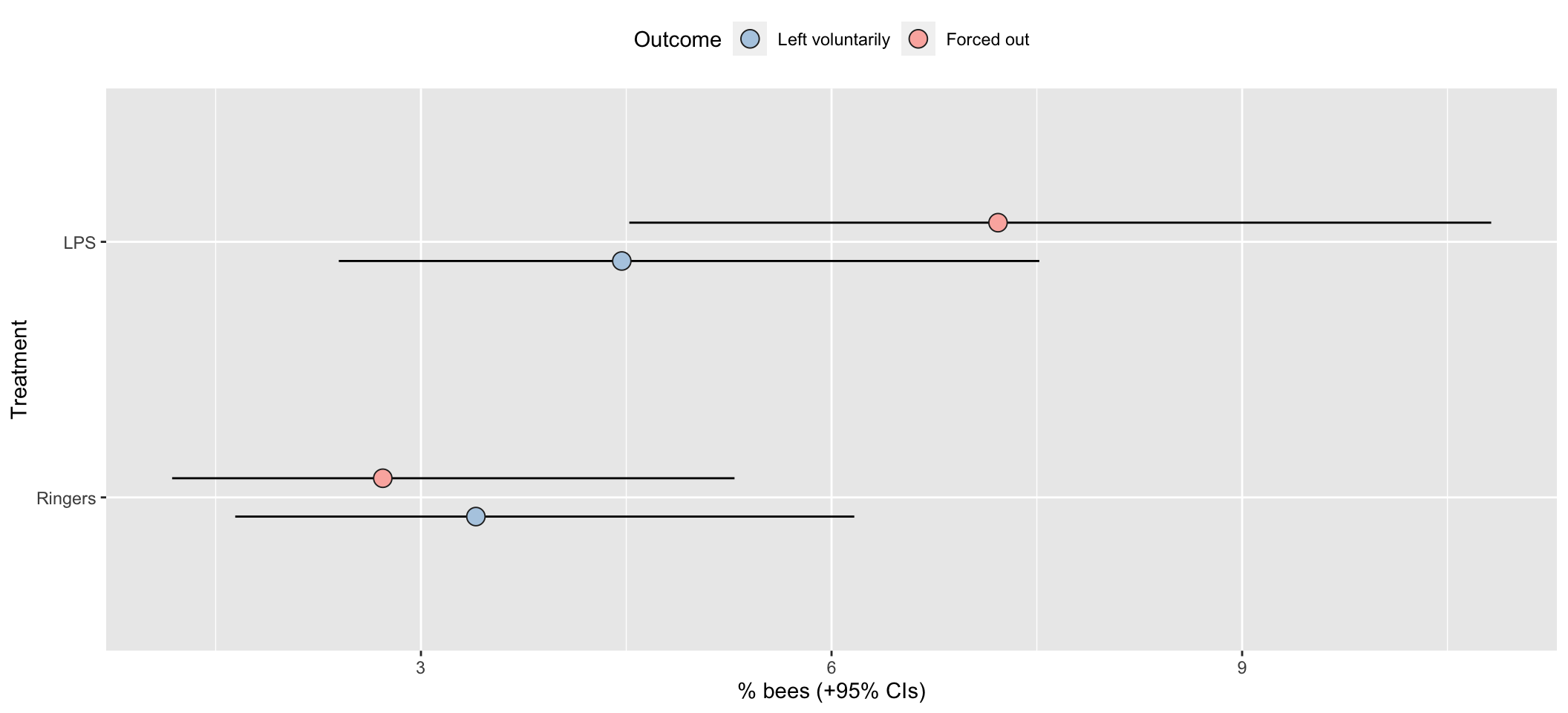

Raw results as a bar chart

pd <- position_dodge(.3)

outcome_tally %>%

group_by(treatment, outcome) %>%

summarise(n = sum(n)) %>% mutate() %>%

group_by(treatment) %>%

mutate(total_n = sum(n),

percent = 100 * n / sum(n),

SE = sqrt(total_n * (percent/100) * (1-(percent/100)))) %>%

ungroup() %>%

mutate(lowerCI = map_dbl(1:n(), ~ 100 * binom.test(n[.x], total_n[.x])$conf.int[1]),

upperCI = map_dbl(1:n(), ~ 100 * binom.test(n[.x], total_n[.x])$conf.int[2])) %>%

filter(outcome != "Stayed inside") %>%

ggplot(aes(treatment, percent, fill = outcome)) +

geom_errorbar(aes(ymin=lowerCI, ymax=upperCI), position = pd, width = 0) +

geom_point(stat = "identity", position = pd, colour = "grey15", pch = 21, size = 4) +

scale_fill_brewer(palette = "Pastel1", name = "Outcome", direction = -1) +

xlab("Treatment") + ylab("% bees (+95% CIs)") +

theme(legend.position = "top") +

coord_flip()

| Version | Author | Date |

|---|---|---|

| 1ce9e19 | lukeholman | 2020-04-21 |

Multinomial model of outcome

Run the models

Fit a multinomial logisitic model, with 3 possible outcomes describing what happened to each bee introduced to the hive: stayed inside, left of its own volition, or forced out by the other workers. To assess the effects of our predictor variables, we compare 5 models with different fixed factors, ranking them by posterior model probability.

if(!file.exists("output/exp2_model.rds")){

exp2_model_v1 <- brm(

outcome_numeric ~ treatment * hive + observation_time_minutes,

data = data_for_categorical_model,

prior = c(set_prior("normal(0, 3)", class = "b", dpar = "mu2"),

set_prior("normal(0, 3)", class = "b", dpar = "mu3")),

family = "categorical", save_all_pars = TRUE, sample_prior = TRUE,

chains = 4, cores = 1, iter = 5000, seed = 1)

exp2_model_v2 <- brm(

outcome_numeric ~ treatment + hive + observation_time_minutes,

data = data_for_categorical_model,

prior = c(set_prior("normal(0, 3)", class = "b", dpar = "mu2"),

set_prior("normal(0, 3)", class = "b", dpar = "mu3")),

family = "categorical", save_all_pars = TRUE, sample_prior = TRUE,

chains = 4, cores = 1, iter = 5000, seed = 1)

exp2_model_v3 <- brm(

outcome_numeric ~ hive + observation_time_minutes,

data = data_for_categorical_model,

prior = c(set_prior("normal(0, 3)", class = "b", dpar = "mu2"),

set_prior("normal(0, 3)", class = "b", dpar = "mu3")),

family = "categorical", save_all_pars = TRUE, sample_prior = TRUE,

chains = 4, cores = 1, iter = 5000, seed = 1)

posterior_model_probabilities <- tibble(

Model = c("treatment * hive + observation_time_minutes",

"treatment + hive + observation_time_minutes",

"hive + observation_time_minutes"),

post_prob = as.numeric(post_prob(exp2_model_v1,

exp2_model_v2,

exp2_model_v3))) %>%

arrange(-post_prob)

saveRDS(exp2_model_v2, "output/exp2_model.rds") # save the top model, treatment + hive

saveRDS(posterior_model_probabilities, "output/exp2_post_prob.rds")

}

exp2_model <- readRDS("output/exp2_model.rds")

posterior_model_probabilities <- readRDS("output/exp2_post_prob.rds")Posterior model probabilites

posterior_model_probabilities %>%

kable(digits = 3) %>% kable_styling()| Model | post_prob |

|---|---|

| hive + observation_time_minutes | 0.530 |

| treatment + hive + observation_time_minutes | 0.466 |

| treatment * hive + observation_time_minutes | 0.003 |

Fixed effects from the top model

The code chunk below wrangles the raw output of the summary() functions for brms models into a more readable table of results, and also adds ‘Bayesian p-values’ (i.e. the posterior probability that the true effect size has the same sign as the reported effect).

tableS3 <- get_fixed_effects_with_p_values(exp2_model) %>%

mutate(mu = map_chr(str_extract_all(Parameter, "mu[:digit:]"), ~ .x[1]),

Parameter = str_remove_all(Parameter, "mu[:digit:]_"),

Parameter = str_replace_all(Parameter, "treatment", "Treatment: "),

Parameter = str_replace_all(Parameter, "observation_time_minutes", "Observation duration (minutes)")) %>%

arrange(mu) %>%

select(-mu, -Rhat, -Bulk_ESS, -Tail_ESS)

names(tableS3)[3:5] <- c("Est. Error", "Lower 95% CI", "Upper 95% CI")

saveRDS(tableS3, file = "figures/tableS3.rds")

tableS3 %>%

kable(digits = 3) %>%

kable_styling(full_width = FALSE) %>%

pack_rows("% bees leaving voluntarily", 1, 6) %>%

pack_rows("% bees forced out", 7, 12)| Parameter | Estimate | Est. Error | Lower 95% CI | Upper 95% CI | p | |

|---|---|---|---|---|---|---|

| % bees leaving voluntarily | ||||||

| Intercept | -6.368 | 6.669 | -19.689 | 6.679 | 0.168 | |

| Treatment: LPS | 0.377 | 0.439 | -0.480 | 1.245 | 0.197 | |

| hive1 | 0.111 | 1.522 | -2.868 | 3.075 | 0.466 | |

| hive2 | -0.183 | 0.679 | -1.513 | 1.160 | 0.390 | |

| hive3 | 0.082 | 2.531 | -4.835 | 5.113 | 0.491 | |

| Observation duration (minutes) | 0.029 | 0.068 | -0.103 | 0.165 | 0.337 | |

| % bees forced out | ||||||

| Intercept | -5.179 | 6.708 | -18.321 | 7.894 | 0.222 | |

| Treatment: LPS | 1.105 | 0.433 | 0.291 | 1.989 | 0.004 | ** |

| hive1 | -0.030 | 1.544 | -3.059 | 2.971 | 0.490 | |

| hive2 | -0.847 | 0.705 | -2.266 | 0.490 | 0.112 | |

| hive3 | 0.049 | 2.554 | -4.892 | 5.089 | 0.493 | |

| Observation duration (minutes) | 0.015 | 0.069 | -0.118 | 0.149 | 0.414 | |

Table S3: Table summarising the posterior estimates of each fixed effect in the best-fitting model of Experiment 2. This was a multinomial model with three possible outcomes (stay inside, leave voluntarily, be forced out), and so there are two parameter estimates for the intercept and for each predictor in the model. ‘Treatment’ is a fixed factor with two levels, and the effect of LPS shown here is expressed relative to the ‘Ringers’ treatment. ‘Hive’ was a fixed factor with four levels; unlike for treatment, we modelled hive using deviation coding, such that the intercept term represents the mean across all hives (in the Ringers treatment), and the three hive terms represent the deviation from this mean for three of the four hives. Lastly, observation duration was a continuous variable expressed to the nearest minute. The \(p\) column gives the posterior probability that the true effect size is opposite in sign to what is reported in the Estimate column, similarly to a \(p\)-value.

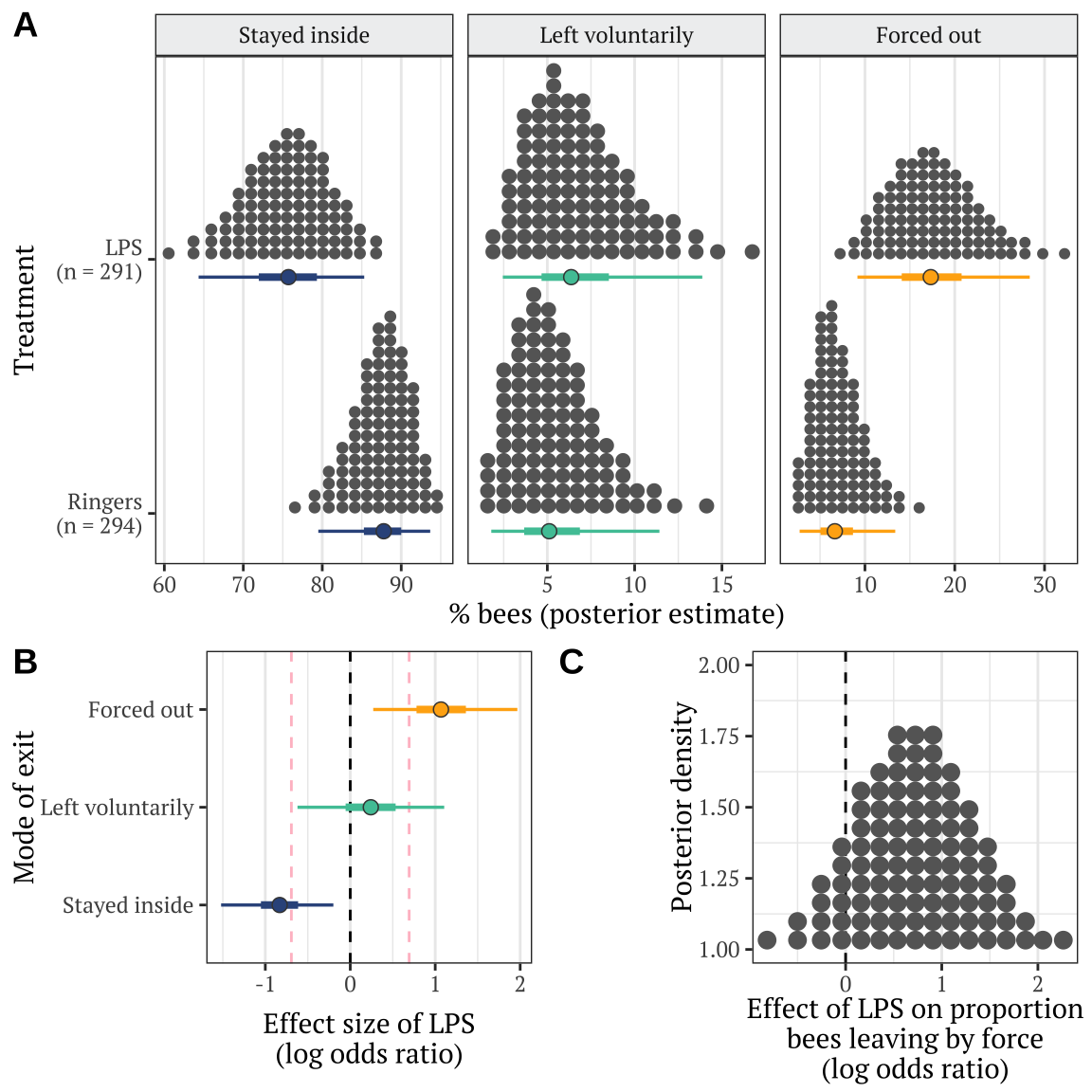

Plotting estimates from the model

Derive prediction from the posterior

get_posterior_preds <- function(hive){

new <- expand.grid(treatment = levels(data_for_categorical_model$treatment),

hive = "Zoology",

observation_time_minutes = 120)

preds <- fitted(exp2_model, newdata = new, summary = FALSE)

dimnames(preds) <- list(NULL, paste(new$treatment, new$hive, sep = "~"), NULL)

rbind(

as.data.frame(preds[,, 1]) %>% mutate(outcome = "Stayed inside", posterior_sample = 1:n()),

as.data.frame(preds[,, 2]) %>% mutate(outcome = "Left voluntarily", posterior_sample = 1:n()),

as.data.frame(preds[,, 3]) %>% mutate(outcome = "Forced out", posterior_sample = 1:n())) %>%

gather(treatment, prop, contains("~")) %>%

mutate(treatment = strsplit(treatment, split = "~"),

hive = map_chr(treatment, ~ .x[2]),

treatment = map_chr(treatment, ~ .x[1]),

treatment = factor(treatment, c("Ringers", "LPS")),

outcome = factor(outcome, c("Stayed inside", "Left voluntarily", "Forced out"))) %>%

arrange(treatment, outcome) %>% as_tibble() %>% select(-hive)

}

# plotting data for panel A: one specific hive

plotting_data <- get_posterior_preds(hive = "Zoology")

# stats data: for comparing means across all hives

stats_data <- get_posterior_preds(hive = NA)Make Figure 2

cols <- c("#34558b", "#4ec5a5", "#ffaf12")

dot_plot <- plotting_data %>%

left_join(sample_sizes, by = "treatment") %>%

arrange(treatment) %>%

mutate(treatment = factor(paste(treatment, "\n(n = ", n, ")", sep = ""),

unique(paste(treatment, "\n(n = ", n, ")", sep = "")))) %>%

ggplot(aes(100 * prop, treatment)) +

stat_dotsh(quantiles = 100, fill = "grey40", colour = "grey40") +

stat_pointintervalh(aes(colour = outcome, fill = outcome),

.width = c(0.5, 0.95),

position = position_nudge(y = -0.07), point_colour = "grey26", pch = 21, stroke = 0.4) +

scale_colour_manual(values = cols) +

scale_fill_manual(values = cols) +

facet_wrap( ~ outcome, scales = "free_x") +

xlab("% bees (posterior estimate)") + ylab("Treatment") +

theme_bw() +

coord_cartesian(ylim=c(1.4, 2.2)) +

theme(

text = element_text(family = nice_font),

strip.background = element_rect(fill = "#eff0f1"),

panel.grid.major.y = element_blank(),

legend.position = "none"

)

get_log_odds <- function(trt1, trt2){ # positive effect = odds of this outcome are higher for trt2 than trt1 (put control as trt1)

log((trt2 / (1 - trt2) / (trt1 / (1 - trt1))))

}

LOR <- plotting_data %>%

spread(treatment, prop) %>%

mutate(LOR = get_log_odds(Ringers, LPS)) %>%

select(posterior_sample, outcome, LOR)

LOR_plot <- LOR %>%

ggplot(aes(LOR, outcome, colour = outcome)) +

geom_vline(xintercept = 0, linetype = 2) +

geom_vline(xintercept = log(2), linetype = 2, colour = "pink") +

geom_vline(xintercept = -log(2), linetype = 2, colour = "pink") +

stat_pointintervalh(aes(colour = outcome, fill = outcome),

position = position_dodge(0.4),

.width = c(0.5, 0.95),

point_colour = "grey26", pch = 21, stroke = 0.4) +

scale_colour_manual(values = cols) +

scale_fill_manual(values = cols) +

xlab("Effect size of LPS\n(log odds ratio)") + ylab("Mode of exit") +

theme_bw() +

theme(

text = element_text(family = nice_font),

panel.grid.major.y = element_blank(),

legend.position = "none"

)

diff_in_forced_out_plot <- plotting_data %>%

spread(outcome, prop) %>%

mutate(prop_leavers_that_were_forced_out = `Forced out` / (`Forced out` + `Left voluntarily`)) %>%

select(posterior_sample, treatment, prop_leavers_that_were_forced_out) %>%

spread(treatment, prop_leavers_that_were_forced_out) %>%

mutate(difference_prop_forced_out_LOR = get_log_odds(Ringers, LPS)) %>%

ggplot(aes(difference_prop_forced_out_LOR, y =1)) +

geom_vline(xintercept = 0, linetype = 2) +

stat_dotsh(quantiles = 100, fill = "grey40", colour = "grey40") +

xlab("Effect of LPS on proportion\nbees leaving by force\n(log odds ratio)") +

ylab("Posterior density") +

theme_bw() +

theme(

text = element_text(family = nice_font),

panel.grid.major.y = element_blank(),

legend.position = "none"

)

bottom_row <- cowplot::plot_grid(LOR_plot, diff_in_forced_out_plot,

labels = c("B", "C"),

nrow = 1, align = 'hv', axis = 'l', rel_heights = c(1.6, 1))

top_row <- cowplot::plot_grid(dot_plot, labels = "A")

p <- cowplot::plot_grid(top_row, bottom_row,

nrow = 2, align = 'v', axis = 'l', rel_heights = c(1.4, 1))

ggsave(plot = p, filename = "figures/fig2.pdf", height = 6, width = 6)

p

The dashed lines mark \(LOR = 0\), indicating no effect, and \(LOR = \pm log(2)\), i.e. the point at which the odds are twice as high in one treatment as the other.

Hypothesis testing and effect sizes

This section calculates the posterior difference in treatment group means, in order to perform some null hypothesis testing, calculate effect size, and calculate the 95% credible intervals on the effect size. In all cases, the effect size expresses the effect of the “LPS-treated bee CHCs” treatment relative to the “Ringer-treated bee CHCs” control.

Stats associated with Figure 2B

Making Table S4

my_summary <- function(df, columns, outcome) {

lapply(columns, function(x){

p <- 1 - (df %>% pull(!! x) %>%

bayestestR::p_direction() %>% as.numeric())

df %>% pull(!! x) %>% posterior_summary() %>% as_tibble() %>%

mutate(p=p, Outcome = outcome, Metric = x) %>%

select(Outcome, Metric, everything())

}) %>% do.call("rbind", .)

}

stats_table <- rbind(

plotting_data %>%

filter(outcome == "Stayed inside") %>%

spread(treatment, prop) %>%

mutate(`Absolute difference in % bees staying inside` = 100 * (LPS - Ringers),

`Log odds ratio` = get_log_odds(Ringers, LPS)) %>%

my_summary(c("Absolute difference in % bees staying inside",

"Log odds ratio"),

outcome = "Stayed inside") %>%

mutate(p = c(" ", format(round(p[2], 4), nsmall = 4))),

plotting_data %>%

filter(outcome == "Left voluntarily") %>%

spread(treatment, prop) %>%

mutate(`Absolute difference in % bees leaving voluntarily` = 100 * (LPS - Ringers),

`Log odds ratio` = get_log_odds(Ringers, LPS)) %>%

my_summary(c("Absolute difference in % bees leaving voluntarily",

"Log odds ratio"),

outcome = "Left voluntarily") %>%

mutate(p = c(" ", format(round(p[2], 4), nsmall = 4))),

plotting_data %>%

filter(outcome == "Forced out") %>%

spread(treatment, prop) %>%

mutate(`Absolute difference in % bees forced out` = 100 * (LPS - Ringers),

`Log odds ratio` = get_log_odds(Ringers, LPS)) %>%

my_summary(c("Absolute difference in % bees forced out",

"Log odds ratio"),

outcome = "Forced out") %>%

mutate(p = c(" ", format(round(p[2], 4), nsmall = 4)))

) %>%

mutate(` ` = ifelse(p < 0.05, "\\*", ""),

` ` = replace(` `, p < 0.01, "**"),

` ` = replace(` `, p < 0.001, "***"),

` ` = replace(` `, p == " ", ""))

stats_table[c(2,4,6), 1] <- " "

stats_table %>%

select(-Outcome) %>%

kable(digits = 3) %>% kable_styling(full_width = FALSE) %>%

row_spec(c(0,2,4,6), extra_css = "border-bottom: solid;") %>%

pack_rows("% bees staying inside", 1, 2) %>%

pack_rows("% bees leaving voluntarily", 3, 4) %>%

pack_rows("% bees forced out", 5, 6)| Metric | Estimate | Est.Error | Q2.5 | Q97.5 | p | |

|---|---|---|---|---|---|---|

| % bees staying inside | ||||||

| Absolute difference in % bees staying inside | -11.949 | 4.942 | -22.208 | -2.753 | ||

| Log odds ratio | -0.836 | 0.331 | -1.520 | -0.199 | 0.0048 | ** |

| % bees leaving voluntarily | ||||||

| Absolute difference in % bees leaving voluntarily | 1.335 | 2.623 | -3.720 | 7.086 | ||

| Log odds ratio | 0.242 | 0.440 | -0.621 | 1.106 | 0.2881 | |

| % bees forced out | ||||||

| Absolute difference in % bees forced out | 10.614 | 4.627 | 2.538 | 20.464 | ||

| Log odds ratio | 1.079 | 0.433 | 0.270 | 1.967 | 0.0047 | ** |

Table S4: This table gives statistics associated with each of the contrasts plotted in Figure 2B. Each pair of rows gives the absolute and standardised effect size (as log odds ratio; LOR) for the LPS treatment, relative to the Ringers treatment, for one of the three possible outcomes (stayed inside, left voluntarily, or forced out). A LOR of \(|log(x)|\) indicates that the outcome is \(x\) times more frequent in one treatment compared to the other, e.g. \(log(2) = 0.69\) indicates a two-fold difference in frequency. The \(p\) column gives the posterior probability that the true effect size has the same sign as is shown in the Estimate column; this metric has a similar interpretation to a one-tailed \(p\) value in frequentist statistics.

Evaluating evidence for the null hypothesis

Here, we derive the result present in prose in the Results, that the true effect size

LOR_left <- LOR %>%

filter(outcome == "Left voluntarily") %>% # Forced out

pull(LOR)

sum(LOR_left > -log(1.5) & LOR_left < log(1.5)) / length(LOR_left)[1] 0.5837sum(LOR_left > -log(2) & LOR_left < log(2)) / length(LOR_left)[1] 0.8323Stats associated with Figure 2C

Here, we derive the result presented in prose in the Results, regarding the effect of LPS on the proportion of bees that left the hive by force.

difference_prop_forced_out_LOR <- plotting_data %>%

spread(outcome, prop) %>%

mutate(prop_leavers_that_were_forced_out = `Forced out` / (`Forced out` + `Left voluntarily`)) %>%

select(posterior_sample, treatment, prop_leavers_that_were_forced_out) %>%

spread(treatment, prop_leavers_that_were_forced_out) %>%

mutate(difference_prop_forced_out_LOR = get_log_odds(Ringers, LPS)) %>%

pull(difference_prop_forced_out_LOR)

hypothesis(difference_prop_forced_out_LOR, "x > 0")$hypothesis Hypothesis Estimate Est.Error CI.Lower CI.Upper Evid.Ratio Post.Prob Star

1 (x) > 0 0.7281842 0.5984938 -0.2336917 1.712823 8.082652 0.8899

sessionInfo()R version 3.6.3 (2020-02-29)

Platform: x86_64-apple-darwin15.6.0 (64-bit)

Running under: macOS Catalina 10.15.4

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/3.6/Resources/lib/libRlapack.dylib

locale:

[1] en_AU.UTF-8/en_AU.UTF-8/en_AU.UTF-8/C/en_AU.UTF-8/en_AU.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] cowplot_1.0.0 tidybayes_2.0.1 bayestestR_0.5.1 kableExtra_1.1.0

[5] gridExtra_2.3 forcats_0.5.0 stringr_1.4.0 dplyr_0.8.5

[9] purrr_0.3.3 readr_1.3.1 tidyr_1.0.2 tibble_3.0.0

[13] ggplot2_3.3.0 tidyverse_1.3.0 bayesplot_1.7.1 brms_2.12.0

[17] Rcpp_1.0.3 showtext_0.7-1 showtextdb_2.0 sysfonts_0.8

[21] workflowr_1.6.0

loaded via a namespace (and not attached):

[1] colorspace_1.4-1 ellipsis_0.3.0 ggridges_0.5.2

[4] rsconnect_0.8.16 rprojroot_1.3-2 markdown_1.1

[7] base64enc_0.1-3 fs_1.3.1 rstudioapi_0.11

[10] farver_2.0.3 rstan_2.19.3 svUnit_0.7-12

[13] DT_0.13 fansi_0.4.1 mvtnorm_1.1-0

[16] lubridate_1.7.8 xml2_1.3.1 bridgesampling_1.0-0

[19] knitr_1.28 shinythemes_1.1.2 jsonlite_1.6.1

[22] broom_0.5.4 dbplyr_1.4.2 shiny_1.4.0

[25] compiler_3.6.3 httr_1.4.1 backports_1.1.6

[28] assertthat_0.2.1 Matrix_1.2-18 fastmap_1.0.1

[31] cli_2.0.2 later_1.0.0 htmltools_0.4.0

[34] prettyunits_1.1.1 tools_3.6.3 igraph_1.2.5

[37] coda_0.19-3 gtable_0.3.0 glue_1.4.0

[40] reshape2_1.4.4 cellranger_1.1.0 vctrs_0.2.4

[43] nlme_3.1-147 crosstalk_1.1.0.1 insight_0.8.1

[46] xfun_0.13 ps_1.3.0 rvest_0.3.5

[49] mime_0.9 miniUI_0.1.1.1 lifecycle_0.2.0

[52] gtools_3.8.2 zoo_1.8-7 scales_1.1.0

[55] colourpicker_1.0 hms_0.5.3 promises_1.1.0

[58] Brobdingnag_1.2-6 parallel_3.6.3 inline_0.3.15

[61] RColorBrewer_1.1-2 shinystan_2.5.0 curl_4.3

[64] yaml_2.2.1 loo_2.2.0 StanHeaders_2.19.2

[67] stringi_1.4.6 highr_0.8 dygraphs_1.1.1.6

[70] pkgbuild_1.0.6 rlang_0.4.5 pkgconfig_2.0.3

[73] matrixStats_0.56.0 evaluate_0.14 lattice_0.20-41

[76] labeling_0.3 rstantools_2.0.0 htmlwidgets_1.5.1

[79] processx_3.4.2 tidyselect_1.0.0 plyr_1.8.6

[82] magrittr_1.5 R6_2.4.1 generics_0.0.2

[85] DBI_1.1.0 pillar_1.4.3 haven_2.2.0

[88] whisker_0.4 withr_2.1.2 xts_0.12-0

[91] abind_1.4-5 modelr_0.1.5 crayon_1.3.4

[94] arrayhelpers_1.1-0 rmarkdown_2.1 grid_3.6.3

[97] readxl_1.3.1 callr_3.4.3 git2r_0.26.1

[100] threejs_0.3.3 reprex_0.3.0 digest_0.6.25

[103] webshot_0.5.2 xtable_1.8-4 httpuv_1.5.2

[106] stats4_3.6.3 munsell_0.5.0 viridisLite_0.3.0

[109] shinyjs_1.1