Burn-in simulations for cassava GS

Marnin Wolfe

2021-Aug-26

Last updated: 2021-08-27

Checks: 7 passed, 0 failed

Knit directory: BreedingSchemeOpt/

This reproducible R Markdown analysis was created with workflowr (version 1.6.2). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproducibility it’s best to always run the code in an empty environment.

The command set.seed(20210422) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 9d369ee. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .DS_Store

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: analysis/.DS_Store

Ignored: analysis/images/.DS_Store

Ignored: data/.DS_Store

Untracked files:

Untracked: data/baselineScheme.gsheet

Untracked: data/siErrorVarEst_byTrialType_directApproach_2021Aug25.rds

Untracked: output/benchmark_sim.rds

Untracked: output/benchmark_sims5.rds

Untracked: output/burnIn_test.rds

Untracked: output/postBurnIn_test.rds

Untracked: output/test_burnIn_sim.rds

Unstaged changes:

Modified: data/README.md

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/burnInSims.Rmd) and HTML (docs/burnInSims.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | 9d369ee | wolfemd | 2021-08-27 | Publish burnInSims with the toy example completed and the full analysis almost ready to run. |

Previous step

I developed an empirical approach to estimate TrialType-specific error variances in terms of the IITA selection index (SELIND). See that analysis here.

Generate burn-in simulations

- Build a runBurnInSchemes() function for AlphaSimHlpR.

- Output of runBurnInSchemes() is input for downstream optimization and comparison of scenarios.

Previously, I used control files to set up the bsp. I implemented specifyBSP(), which creates a bsp from a data.frame of stage-specific breeding-scheme inputs plus all other AlphaSimHlpR arguments.

- See the full function reference here in my forked repo of AlphaSimHlpR.
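A minimal sketch of the idea behind specifyBSP(), not its actual implementation: each stage-specific column of schemeDF becomes a vector in the parameter list, and the remaining arguments are appended as-is. The function toySpecifyBSP(), the column names (stageNames, nReps, nLocs) and the values are hypothetical, chosen only for illustration.

```r
# Hypothetical illustration of turning a stage-by-row data.frame into a
# parameter list; NOT the real specifyBSP() from AlphaSimHlpR.
toySpecifyBSP <- function(schemeDF, ...) {
  stopifnot(is.data.frame(schemeDF), nrow(schemeDF) > 0)
  bsp <- as.list(schemeDF)        # one vector per stage-specific column
  bsp$nStages <- nrow(schemeDF)   # one row per stage
  c(bsp, list(...))               # append all other (non-stage) settings
}

# Illustrative stages and values (assumed, not the IITA scheme)
schemeDF <- data.frame(stageNames = c("CET", "PYT", "AYT", "UYT"),
                       nReps = c(1, 2, 2, 3),
                       nLocs = c(1, 2, 4, 5),
                       stringsAsFactors = FALSE)
bsp <- toySpecifyBSP(schemeDF, nChr = 3, effPopSize = 100)
str(bsp)
```

Keeping stage-specific settings in one table makes it easy to edit a scheme in a spreadsheet and keeps each stage's settings aligned by row.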

Set up a singularity shell with R+OpenBLAS

This is not required. If you want the advantage of multi-threaded BLAS to speed up predictions within the simulations, you need an R instance linked to a multi-threaded BLAS such as OpenBLAS (Microsoft R Open, which ships with Intel MKL, is another option). On CBSU, the currently recommended approach is to use singularity shells provided by the “rocker” project. They even come pre-installed with tidyverse :).

With R linked to OpenBLAS, the simple function RhpcBLASctl::blas_set_num_threads() lets me add arguments to my functions that control the number of BLAS threads.

For optimal performance, it is important to balance the number of BLAS threads each R session uses against any other parallel processing in use, taking the total available system resources into account.
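As a rough illustration of that balance, the sketch below caps BLAS threads so that parallel workers times BLAS threads does not exceed the available cores. Both helper functions are hypothetical; only RhpcBLASctl::blas_set_num_threads() and parallel::detectCores() are real calls, and the BLAS setting only has an effect when R is linked to a multi-threaded BLAS.

```r
# Hypothetical helper: BLAS threads per worker so that
# ncores (parallel workers) x BLAS threads <= total cores.
blasThreadsPerWorker <- function(ncores, totalCores = parallel::detectCores()) {
  if (is.na(totalCores)) totalCores <- 1L   # detectCores() can return NA
  max(1L, as.integer(totalCores %/% ncores))
}

# Hypothetical wrapper: set BLAS threads if RhpcBLASctl is installed
setBLASthreads <- function(nBLASthreads) {
  if (requireNamespace("RhpcBLASctl", quietly = TRUE)) {
    RhpcBLASctl::blas_set_num_threads(nBLASthreads)
  }
  invisible(nBLASthreads)
}

# e.g. 8 replicate sims in parallel on a 32-core node -> 4 BLAS threads each
blasThreadsPerWorker(ncores = 8, totalCores = 32)
```

Oversubscribing (e.g. 8 workers each using 32 BLAS threads) usually slows everything down, which is why nBLASthreads=1 is a safe default when ncores is already large.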

# 0) Pull a singularity image with OpenBLAS enabled R + tidyverse from rocker/

singularity pull ~/rocker2.sif docker://rocker/tidyverse:latest;

# only do above first time

# 1) start a screen shell

screen; # or screen -r if re-attaching...

# 2) start the singularity Linux shell inside that

singularity shell ~/rocker2.sif;

# Change to the project directory so R uses it as the working dir.

cd /home/mw489/BreedingSchemeOpt/;

# 3) Start R

R

# Install genomicMateSelectR to a user-accessible libPath

### In a singularity shell, install as follows:

libPath<-"/home/mw489/R/x86_64-pc-linux-gnu-library/4.1" # should be YOUR libPath

withr::with_libpaths(new=libPath, devtools::install_github("wolfemd/genomicMateSelectR", ref = 'master'))

### Else, simply

devtools::install_github("wolfemd/genomicMateSelectR", ref = 'master')

# Install dependencies, then my own forked repo of AlphaSimHlpR

withr::with_libpaths(new=libPath, install.packages("Rcpp"))

withr::with_libpaths(new=libPath, install.packages("AlphaSimR"))

withr::with_libpaths(new=libPath, install.packages("optiSel"))

withr::with_libpaths(new=libPath, install.packages("rgl"))

withr::with_libpaths(new=libPath, devtools::install_github("wolfemd/AlphaSimHlpR", ref = 'master', force=T))

A small example

Test the code with a small example. Source functions not yet included in AlphaSimHlpR from the code/ directory.

Use my newly created specifyBSP() function to create the bsp input for sims.

- 3 chrom, Ne = 100, 300 SNP (100/chrom)

- Select 10 parents, make 4 random crosses with 50 progeny each

suppressMessages(library(AlphaSimHlpR))

suppressMessages(library(tidyverse))

suppressMessages(library(genomicMateSelectR))

select <- dplyr::select

schemeDF<-read.csv(here::here("data","baselineScheme - Test.csv"),

header = T, stringsAsFactors = F)

bsp<-specifyBSP(schemeDF = schemeDF,

nChr = 3,effPopSize = 100,quickHaplo = F,

segSites = 400, nQTL = 40, nSNP = 100, genVar = 40,

gxeVar = NULL, gxyVar = 15, gxlVar = 10,gxyxlVar = 5,

meanDD = 0.5,varDD = 0.01,relAA = 0.5,

stageToGenotype = "PYT",

nParents = 10, nCrosses = 4, nProgeny = 50,nClonesToNCRP = 3,

phenoF1toStage1 = T,errVarPreStage1 = 500,

useCurrentPhenoTrain = F,

nCyclesToKeepRecords = 30,

selCritPipeAdv = selCritIID,

selCritPopImprov = selCritIID)

source(here::here("code","runBurnInSchemes.R"))

I created a CSV to specify a data.frame, schemeDF, defining stage-specific breeding scheme inputs.

schemeDF %>% rmarkdown::paged_table()

runBurnInSchemes() is basically runBreedingScheme() without the final call records <- lastCycStgOut(records, bsp, SP), so that sims can be continued from the returned records and bsp.

I also set up the function to handle optional parallel execution of multiple replications of each simulated scheme.
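A toy sketch of the two ideas above, under stated assumptions: each replicate returns its un-summarized state so a later call can continue it, and replicates run in parallel with parallel::mclapply(). runCycles(), runReplicates() and the records structure are hypothetical stand-ins, not the internals of runBurnInSchemes() or AlphaSimHlpR.

```r
library(parallel)

# Hypothetical stand-in for one replicate: advances a toy "records" object
# by nCycles. Nothing is summarized at the end, so the returned records can
# be passed back in to continue the same replicate.
runCycles <- function(records, nCycles) {
  for (i in seq_len(nCycles)) {
    gain <- rnorm(1, mean = 1, sd = 0.5)   # illustrative genetic gain
    records$year <- c(records$year, tail(records$year, 1) + 1)
    records$genValMean <- c(records$genValMean,
                            tail(records$genValMean, 1) + gain)
  }
  records
}

# Run every replicate in parallel; forking is unavailable on Windows
runReplicates <- function(recordsList, nCycles, ncores) {
  if (.Platform$OS.type == "windows") ncores <- 1L
  mclapply(recordsList, runCycles, nCycles = nCycles, mc.cores = ncores)
}

RNGkind("L'Ecuyer-CMRG")   # gives forked workers separate RNG streams
set.seed(20210422)
start    <- rep(list(list(year = 0, genValMean = 0)), 4)   # 4 replicates
burnIn   <- runReplicates(start, nCycles = 10, ncores = 2)   # "burn-in"
postBurn <- runReplicates(burnIn, nCycles = 10, ncores = 2)  # continued
```

The design point is that the burn-in output doubles as the starting state for the next phase, which is what dropping the final lastCycStgOut() call makes possible.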

Run 16 replicate simulations of 10 cycles of phenotypic selection with a small breeding scheme on a laptop.

burnIn_test<-runBurnInSchemes(bsp = bsp,

nBurnInCycles=10,

selCritPop="selCritIID",

selCritPipe="selCritIID",

iniFunc="initializeScheme",

productFunc="productPipeline",

popImprovFunc="popImprov1Cyc",

nReplications=16,ncores=8,

nBLASthreads=1,nThreadsMacs2=1)

saveRDS(burnIn_test,file = here::here("output","burnIn_test.rds"))

burnIn_test<-readRDS(here::here("output","burnIn_test.rds"))

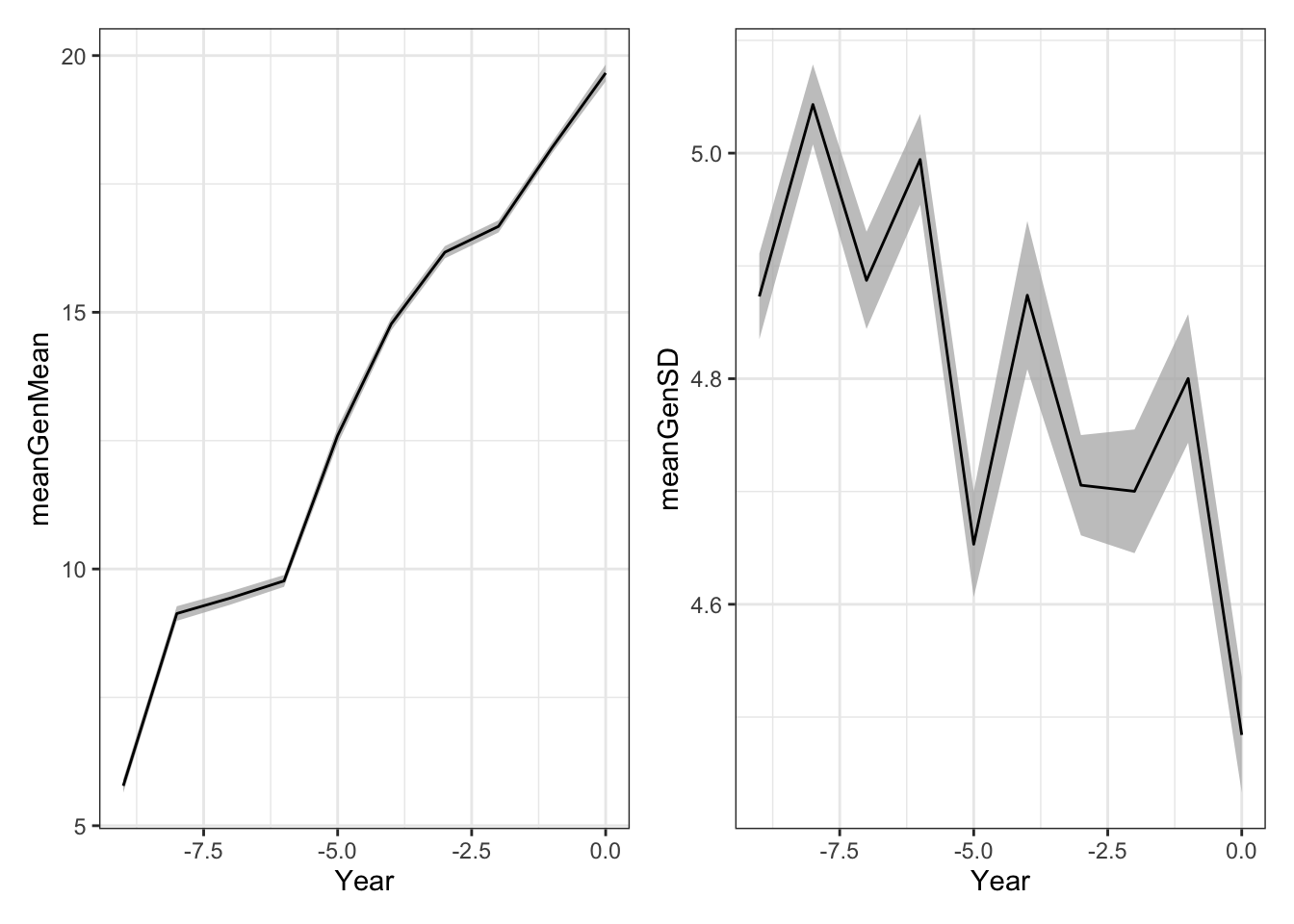

forSimPlot<-burnIn_test %>%

unnest_wider(burnInSim) %>%

select(SimRep,records) %>%

unnest_wider(records) %>%

select(SimRep,stageOutputs) %>%

unnest(stageOutputs) %>%

filter(stage=="F1") %>%

mutate(Year=year-max(year))

library(patchwork)

meanGplot<-forSimPlot %>%

group_by(Year,year,stage) %>%

summarize(meanGenMean=mean(genValMean),

seGenMean=sd(genValMean)/sqrt(n())) %>%

ggplot(.,aes(x=Year)) +

geom_ribbon(aes(ymin = meanGenMean - seGenMean,

ymax = meanGenMean + seGenMean),

fill = "grey70", alpha=0.75) +

geom_line(aes(y = meanGenMean))

sdGplot<-forSimPlot %>%

group_by(Year,year,stage) %>%

summarize(meanGenSD=mean(genValSD),

seGenSD=sd(genValSD)/sqrt(n())) %>%

ggplot(.,aes(x=Year)) +

geom_ribbon(aes(ymin = meanGenSD - seGenSD,

ymax = meanGenSD + seGenSD),

fill = "grey70", alpha=0.75) +

geom_line(aes(y = meanGenSD))

(meanGplot | sdGplot) & theme_bw()

Ribbon plot: mean and standard error computed across replicate simulations.
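For reference, the standard error of the mean across replicate simulations is sd/sqrt(n), where n is the number of replicates contributing to each Year. A minimal base-R check with illustrative numbers (not simulation output):

```r
# Standard error of the mean: sd / sqrt(n).
# Dividing by n instead of sqrt(n) would understate the ribbon width.
se <- function(x) sd(x) / sqrt(length(x))

# Illustrative per-replicate genetic means for a single year
genValMean <- c(10.2, 11.0, 9.8, 10.6)
c(mean = mean(genValMean), se = se(genValMean))   # mean 10.4, se about 0.258
```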

Next, execute the runSchemesPostBurnIn() function to continue the 16 replicate burn-in sims for an additional 10 post-burn-in cycles of phenotypic selection.

source(here::here("code","runSchemesPostBurnIn.R"))

simulations<-readRDS(here::here("output","burnIn_test.rds"))

postBurnIn_test<-runSchemesPostBurnIn(simulations = simulations,

newBSP=NULL, # so you can change the scheme after burn-in

nPostBurnInCycles=10,

selCritPop="selCritIID",

selCritPipe="selCritIID",

productFunc="productPipeline",

popImprovFunc="popImprov1Cyc",

ncores=8,

nBLASthreads=1,nThreadsMacs2=1)

saveRDS(postBurnIn_test,file = here::here("output","postBurnIn_test.rds"))

postBurnIn_test<-readRDS(here::here("output","postBurnIn_test.rds"))

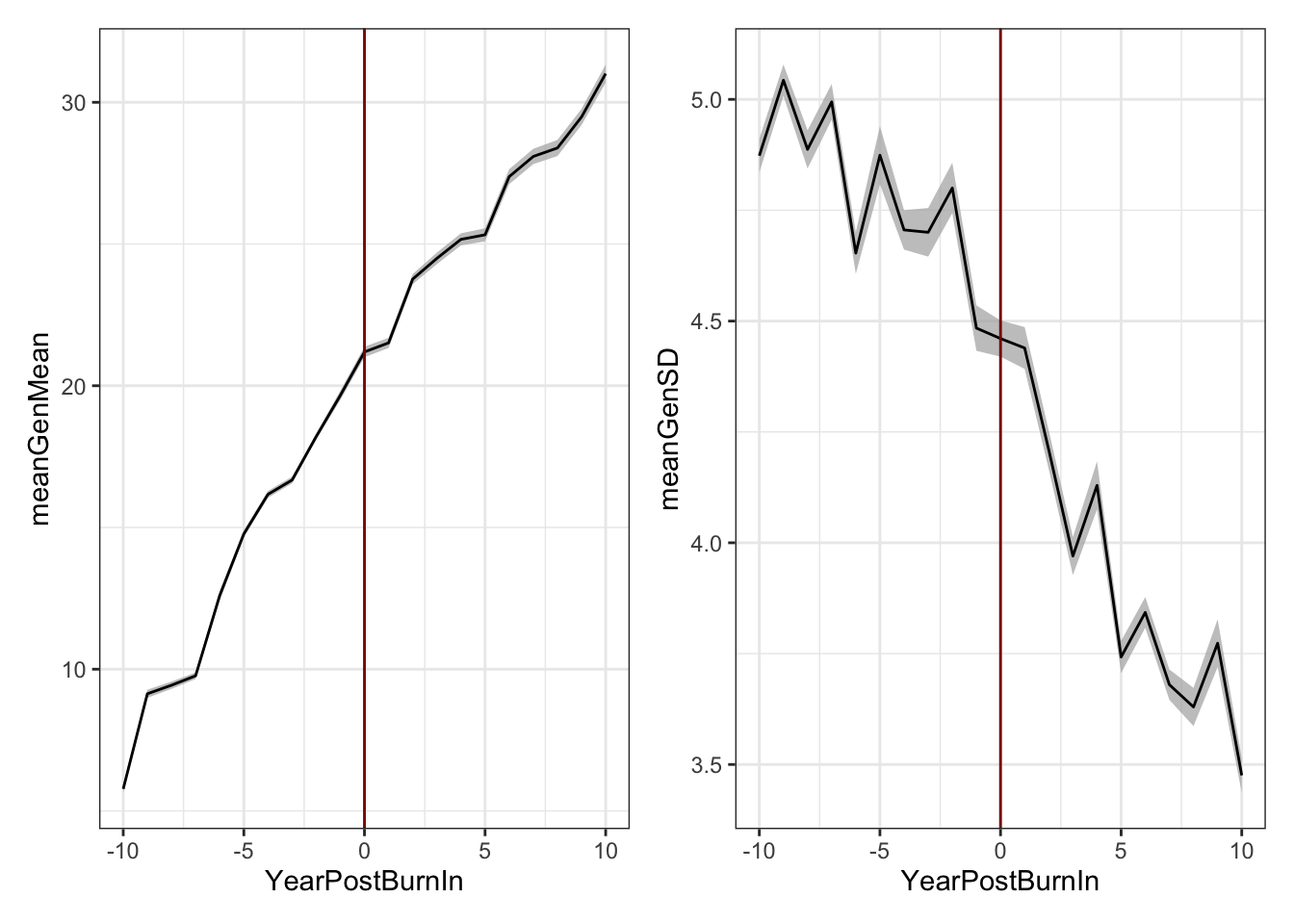

forSimPlot<-postBurnIn_test %>%

unnest_wider(SimOutput) %>%

select(SimRep,records) %>%

unnest_wider(records) %>%

select(SimRep,stageOutputs) %>%

unnest(stageOutputs) %>%

filter(stage=="F1") %>%

mutate(YearPostBurnIn=year-10)

library(patchwork)

meanGplot<-forSimPlot %>%

group_by(YearPostBurnIn,year,stage) %>%

summarize(meanGenMean=mean(genValMean),

seGenMean=sd(genValMean)/sqrt(n())) %>%

ggplot(.,aes(x=YearPostBurnIn)) +

geom_ribbon(aes(ymin = meanGenMean - seGenMean,

ymax = meanGenMean + seGenMean),

fill = "grey70", alpha=0.75) +

geom_line(aes(y = meanGenMean))

sdGplot<-forSimPlot %>%

group_by(YearPostBurnIn,year,stage) %>%

summarize(meanGenSD=mean(genValSD),

seGenSD=sd(genValSD)/sqrt(n())) %>%

ggplot(.,aes(x=YearPostBurnIn)) +

geom_ribbon(aes(ymin = meanGenSD - seGenSD,

ymax = meanGenSD + seGenSD),

fill = "grey70", alpha=0.75) +

geom_line(aes(y = meanGenSD))

(meanGplot | sdGplot) & theme_bw() & geom_vline(xintercept = 0, color='darkred')

Now run the same 16 burn-in sims for 10 cycles of GS.

# source(here::here("code","runSchemesPostBurnIn.R"))

# simulations<-readRDS(here::here("output","burnIn_test.rds"))

# postBurnInGS_test<-runSchemesPostBurnIn(simulations = simulations,

# newBSP=NULL,

# nPostBurnInCycles=10,

# selCritPop="parentSelCritGEBV",

# selCritPipe="selCritIID",

# productFunc="productPipeline",

# popImprovFunc="popImprov1Cyc",

# ncores=8,

# nBLASthreads=1,nThreadsMacs2=1)

# saveRDS(postBurnInGS_test,file = here::here("output","postBurnInGS_test.rds"))

This actually ran all night on my laptop without finishing. Even the toy example with GS is non-trivial to run.

Run full-scale burn-in sims

Set up multiple replications of a simulation, using selCritIID for the burn-in.

Use 20 burn-in cycles to match the EiB examples.

Genome / Pop specs

- 18 chrom

- Ne = 1000

- nSNP = 300 SNP/chrom (matches EiB examples)

- nQTLperChr = 1000

- nSegSites = 2000

Genetic architecture and error variance

- genVar = 750 and stage-specific errVar input from here. The max estimated errVar was for CET at ~3500, so a genVar of 750 is meant to set an entry-level h2 of around 0.2.

- meanDD = 0.3

- varDD = ???

- Var(GxYr) == Var(G), again matching the EiB example

- What about GxL and GxLxYr?
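The heritability arithmetic above, as a quick sketch assuming a single-plot basis with GxE terms ignored, so that h2 = genVar / (genVar + errVar). The helper names are hypothetical.

```r
# Single-plot heritability, ignoring GxE (simplifying assumption)
h2 <- function(genVar, errVar) genVar / (genVar + errVar)

# genVar needed to reach a target h2 for a given errVar
genVarForH2 <- function(targetH2, errVar) targetH2 * errVar / (1 - targetH2)

h2(genVar = 750, errVar = 3500)              # about 0.18 (largest errVar, CET)
genVarForH2(targetH2 = 0.2, errVar = 3500)   # 875
```

So genVar = 750 gives an h2 slightly below 0.2 at the noisiest stage; stages with smaller errVar will have higher h2.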

read.csv(here::here("data","baselineScheme - IITA.csv"),

header = T, stringsAsFactors = F) %>% rmarkdown::paged_table()

Breeding Scheme (schemeDF printed above)

- Skips the SDN stage. Is there a UYT2 (second year of UYT) to sim?

- phenoF1toStage1 = FALSE

Population Improvement

- nParents = 50, nCrosses = 100, nProgeny = 25, nClonesToNCRP = 3

- Or nParents = 100, nCrosses = 250, nProgeny = 10, nClonesToNCRP = 3 (EiB example)
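Both population-improvement options produce the same number of F1 progeny per cycle; they differ in how many crosses each parent contributes to. A quick check, assuming every cross involves two distinct parents (the helper names are hypothetical):

```r
# Total F1 progeny per cycle
nF1 <- function(nCrosses, nProgeny) nCrosses * nProgeny

# Average number of crosses each parent appears in (2 parents per cross)
crossesPerParent <- function(nCrosses, nParents) 2 * nCrosses / nParents

nF1(100, 25); crossesPerParent(100, 50)    # 2500 F1s, 4 crosses per parent
nF1(250, 10); crossesPerParent(250, 100)   # 2500 F1s, 5 crosses per parent
```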

Additional Settings

- nCyclesToKeepRecords = 30 (all)… what effect does this actually have? Just on storage of output?

- trainingPopCycles = 15… means 15 years of each stage are used in each prediction…

What about an alternative: set a fixed TP size, e.g. 5000 clones.

- This might be faster, since the number of clones (i.e. the dimension of the kinship matrix) is the primary slow point.
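A hypothetical sketch of the fixed-TP-size alternative: keep only the most recently phenotyped clones, up to maxTP. The records data.frame, its columns (id, year) and selectFixedTP() are illustrative assumptions, not the AlphaSimHlpR records structure.

```r
# Keep at most maxTP clones, preferring the most recently phenotyped ones
selectFixedTP <- function(phenoRecords, maxTP = 5000) {
  ord <- order(phenoRecords$year, decreasing = TRUE)   # newest records first
  ids <- unique(phenoRecords$id[ord])                  # each clone once, at its newest record
  head(ids, maxTP)
}

# Illustrative records: 12000 clones phenotyped across 20 years
set.seed(1)
phenoRecords <- data.frame(id = paste0("clone", 1:12000),
                           year = sample(1:20, 12000, replace = TRUE),
                           stringsAsFactors = FALSE)
tp <- selectFixedTP(phenoRecords, maxTP = 5000)
length(tp)   # 5000
```

Capping the TP bounds the kinship matrix at maxTP x maxTP; a dense 5000 x 5000 double-precision matrix takes about 200 MB, and operations such as inversion scale roughly cubically with its dimension.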

screen;

singularity shell ~/rocker2.sif;

cd /home/mw489/BreedingSchemeOpt/;

R

# suppressMessages(library(AlphaSimHlpR))

# suppressMessages(library(tidyverse))

# suppressMessages(library(genomicMateSelectR))

# select <- dplyr::select

# # This scheme _excludes_ the seedling stage from the simulation.

# schemeDF<-read.csv(here::here("data","baselineScheme - IITA.csv"),

# header = T, stringsAsFactors = F) %>%

# select(-PlantsPerPlot)

#

# bsp<-specifyBSP(schemeDF = schemeDF,

# nChr = 18,effPopSize = 1000,quickHaplo = F,

# segSites = 500, nQTL = 50, nSNP = 100, genVar = 100,

# gxeVar = NULL, gxyVar = 15, gxlVar = 10,gxyxlVar = 5,

# meanDD = 1,varDD = 5,relAA = 0.1,

# stageToGenotype = "CET",

# nParents = 50, nCrosses = 100, nProgeny = 25,nClonesToNCRP = 3,

# phenoF1toStage1 = F,errVarPreStage1 = 500,

# useCurrentPhenoTrain = F,

# nCyclesToKeepRecords = 30,

# # selCrits are overwritten by runBreedingScheme_wBurnIn

# selCritPipeAdv = selCritIID, # thus have no actual effect

# selCritPopImprov = selCritIID)

Next steps

- Complete burn-in simulations

- Conduct a baseline GS vs. Conv. simulation

- Begin the actual interesting sims

- Optimize budgets

- Compare alternative VDPs

- Test mate selection, optimal contributions and ultimately optimizing mating plans.

sessionInfo()

R version 4.1.0 (2021-05-18)

Platform: x86_64-apple-darwin17.0 (64-bit)

Running under: macOS Big Sur 10.16

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.1/Resources/lib/libRblas.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.1/Resources/lib/libRlapack.dylib

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] patchwork_1.1.1 genomicMateSelectR_0.2.0 forcats_0.5.1

[4] stringr_1.4.0 purrr_0.3.4 readr_2.0.1

[7] tidyr_1.1.3 tibble_3.1.3 ggplot2_3.3.5

[10] tidyverse_1.3.1 AlphaSimHlpR_0.2.1 dplyr_1.0.7

[13] AlphaSimR_1.0.3 R6_2.5.0 workflowr_1.6.2

loaded via a namespace (and not attached):

[1] Rcpp_1.0.7 here_1.0.1 lubridate_1.7.10 assertthat_0.2.1

[5] rprojroot_2.0.2 digest_0.6.27 utf8_1.2.2 cellranger_1.1.0

[9] backports_1.2.1 reprex_2.0.1 evaluate_0.14 highr_0.9

[13] httr_1.4.2 pillar_1.6.2 rlang_0.4.11 readxl_1.3.1

[17] rstudioapi_0.13 whisker_0.4 jquerylib_0.1.4 rmarkdown_2.10

[21] labeling_0.4.2 munsell_0.5.0 broom_0.7.9 compiler_4.1.0

[25] httpuv_1.6.1 modelr_0.1.8 xfun_0.25 pkgconfig_2.0.3

[29] htmltools_0.5.1.1 tidyselect_1.1.1 fansi_0.5.0 crayon_1.4.1

[33] tzdb_0.1.2 dbplyr_2.1.1 withr_2.4.2 later_1.2.0

[37] grid_4.1.0 jsonlite_1.7.2 gtable_0.3.0 lifecycle_1.0.0

[41] DBI_1.1.1 git2r_0.28.0 magrittr_2.0.1 scales_1.1.1

[45] cli_3.0.1 stringi_1.7.3 farver_2.1.0 fs_1.5.0

[49] promises_1.2.0.1 xml2_1.3.2 bslib_0.2.5.1 ellipsis_0.3.2

[53] generics_0.1.0 vctrs_0.3.8 tools_4.1.0 glue_1.4.2

[57] hms_1.1.0 yaml_2.2.1 colorspace_2.0-2 rvest_1.0.1

[61] knitr_1.33 haven_2.4.3 sass_0.4.0