Posterior Results Analysis

Last updated: 2024-07-15

Checks: 7 0

Knit directory: MATHPOP/

This reproducible R Markdown analysis was created with workflowr (version 1.7.1). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20240702) was run prior to running

the code in the R Markdown file. Setting a seed ensures that any results

that rely on randomness, e.g. subsampling or permutations, are

reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 225599a. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for

the analysis have been committed to Git prior to generating the results

(you can use wflow_publish or

wflow_git_commit). workflowr only checks the R Markdown

file, but you know if there are other scripts or data files that it

depends on. Below is the status of the Git repository when the results

were generated:

Ignored files:

Ignored: .DS_Store

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: analysis/.DS_Store

Ignored: code/.DS_Store

Ignored: data/.DS_Store

Ignored: data/GC_prob/.DS_Store

Ignored: data/point_source_data/.DS_Store

Ignored: data/prob_GC_data/.DS_Store

Ignored: data/sim/.DS_Store

Ignored: data/v10acs/.DS_Store

Ignored: data/v10wfc3/.DS_Store

Ignored: data/v11acs/.DS_Store

Ignored: data/v11wfc3/.DS_Store

Ignored: data/v12acs/.DS_Store

Ignored: data/v12wfc3/.DS_Store

Ignored: data/v13acs/.DS_Store

Ignored: data/v13wfc3/.DS_Store

Ignored: data/v14acs/.DS_Store

Ignored: data/v14wfc3/.DS_Store

Ignored: data/v15acs/.DS_Store

Ignored: data/v15wfc3/.DS_Store

Ignored: data/v6acs/.DS_Store

Ignored: data/v6wfc3/.DS_Store

Ignored: data/v7acs/.DS_Store

Ignored: data/v8acs/.DS_Store

Ignored: data/v9acs/.DS_Store

Ignored: data/v9wfc3/.DS_Store

Ignored: source/.DS_Store

Ignored: source/v8wfc3/

Unstaged changes:

Modified: analysis/CMD_plot_2-1.aux

Deleted: analysis/CMD_plot_2-1.log

Deleted: analysis/CMD_plot_2-1.pdf

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were

made to the R Markdown (analysis/posterior.Rmd) and HTML

(docs/posterior.html) files. If you’ve configured a remote

Git repository (see ?wflow_git_remote), click on the

hyperlinks in the table below to view the files as they were in that

past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | 79bc13d | david.li | 2024-07-15 | wflow_git_commit(all = T) |

| html | 4144232 | david.li | 2024-07-12 | Build site. |

| html | d207061 | david.li | 2024-07-12 | Build site. |

| Rmd | 5c60e32 | david.li | 2024-07-12 | wflow_git_commit(all = T) |

| html | 5c60e32 | david.li | 2024-07-12 | wflow_git_commit(all = T) |

| Rmd | fec0afd | david.li | 2024-07-12 | wflow_git_commit(all = T) |

| html | e750655 | david.li | 2024-07-08 | Build site. |

| html | c8ce157 | david.li | 2024-07-07 | Build site. |

| Rmd | 757f319 | david.li | 2024-07-07 | wflow_publish("analysis/posterior.Rmd") |

| html | 8fb288d | david.li | 2024-07-07 | Build site. |

| Rmd | 2e42542 | david.li | 2024-07-07 | wflow_git_commit(all = T) |

| html | 3dee3e9 | david.li | 2024-07-06 | Build site. |

| html | dc09c08 | david.li | 2024-07-06 | Build site. |

| html | 64686bd | david.li | 2024-07-06 | Build site. |

| html | 0a9d403 | david.li | 2024-07-05 | Build site. |

| html | c1fd386 | david.li | 2024-07-04 | Build site. |

| Rmd | 33fc566 | david.li | 2024-07-04 | wflow_git_commit(all = T) |

| html | 33fc566 | david.li | 2024-07-04 | wflow_git_commit(all = T) |

| html | 6ff1ccc | david.li | 2024-07-04 | Build site. |

| html | 65a7d8c | david.li | 2024-07-04 | Build site. |

| Rmd | 98834e5 | david.li | 2024-07-04 | wflow_publish(c("analysis/_site.yml", "analysis/posterior.Rmd", |

Introduction

This vignette provides the code for the analysis of the posterior results of MATHPOP model fitted to the 40 Perseus LSBGs considered in the original Li et al. (2024) paper. It also reproduces Figure 4 and provides the data contained in Table 3 of the paper. To fit the MATHPOP model, see here.

Note that the MCMC results in the MATHPOP paper were obtained using

an older version of the code contained in the source/

folder of this repo. The current version of the code, i.e., the main

fit_MATHPOP function was rewritten so that it is more

user-friendly. Due to the update, it is unavoidable that the results

from fit_MATHPOP do not match exactly with the results from

the older code since various tuning and starting parameter values are

specified individually for each data set that was fitted, while these

are completely automatized in the current code. Because of the

computational constraints, we did not rerun everything using the new

code, but our testing shows that the new code provides entirely

consistent results as the old code. If you wish to use the old code,

please see the source files in the source/ folder.

Post Inference Results

Due to the computational time to fit the MATHPOP model and the size

of the MCMC results, we will not be fitting the model in any of the

vignettes in this Github repo. We instead have post-processed and saved

three individual runs of the MCMC runs of the MATHPOP model for each

individual image we considered. These are contained in the

data/v#### folders, where v### is the imaging

field ID. For example, data/v11acs/res_prob_v11acs.RDS

contains the MCMC results for the MATHPOP model fitted to the DOLPHOT GC

data.

We first load all required packages and read in the MCMC results for MATHPOP models fitted to probabilistic GC catalog from DOLPHOT:

library(tidyverse)

library(ggpubr)

library(ggridges)

library(tikzDevice)

library(posterior)

library(xtable)

library(wesanderson)

library(ggnewscale)

library(modeest)

library(reshape2)

library(HDInterval)

library(MATHPOP)

# read in file paths

fnames <- list.files('data/')

fnames <- fnames[grepl('v', fnames)]

file_paths <- paste0('data/',fnames,'/')

# construct data frames for each model parameter of the GC system of a UDG

summary_NGC <- data.frame()

summary_RGC <- data.frame()

summary_muGC <- data.frame()

# generate a data frame containing all summary statistics of the model parameters

set.seed(123456)

# loop through each image

for(i in 1:length(fnames)){

res_i_name <- paste0(file_paths[i], 'res_prob_', fnames[i], '.RDS')

file_read <- readRDS(res_i_name)

# GC count summary

N_GC <- as.data.frame(apply(file_read[, grepl( "N_" , names(file_read)) & !grepl( "gal" , names(file_read))], 2,

function(x) rpois(nrow(file_read), x)))

# Half-number radius summary

R_GC <- as.data.frame(file_read[, grepl( "R_" , names(file_read)) & !grepl( "gal" , names(file_read))])

# GCLF TO summary

mu_GC <- as.data.frame(file_read[, grepl( "mu_" , names(file_read)) & !grepl( "gal" , names(file_read)) & !grepl( "_0" , names(file_read))])

UDG_ID <- gsub('N_', '', colnames(N_GC))

colnames(N_GC) <- UDG_ID

colnames(R_GC) <- UDG_ID

colnames(mu_GC) <- UDG_ID

# obtain summary statistics for GC counts

N_GC <- melt(N_GC)

summary_N <- N_GC %>%

rename(ID = variable) %>%

group_by(ID) %>%

summarise_all(list(N_mode = mlv, N_median = median, N_mean = mean,

N_HDI_0.68_lower = function(x) hdi(x, 0.68)[1],

N_HDI_0.68_upper = function(x) hdi(x, 0.68)[2],

`P(N_GC = 0)` = function(x) mean(x == 0)))

# obtain summary statistics for half-number radius

R_GC <- melt(R_GC)

summary_R <- R_GC %>%

rename(ID = variable) %>%

group_by(ID) %>%

summarise_all(list(R_mode = mlv, R_median = median, R_mean = mean,

R_HDI_0.68_lower = function(x) hdi(x, 0.68)[1],

R_HDI_0.68_upper = function(x) hdi(x, 0.68)[2]))

# obtain summary statistics for GCLF TO

mu_GC <- melt(mu_GC)

summary_mu <- mu_GC %>%

rename(ID = variable) %>%

group_by(ID) %>%

summarise_all(list(mu_mode = mlv, mu_median = median, mu_mean = mean,

mu_HDI_0.68_lower = function(x) hdi(x, 0.68)[1],

mu_HDI_0.68_upper = function(x) hdi(x, 0.68)[2],

mu_HDI_0.95_lower = function(x) hdi(x, 0.95)[1],

mu_HDI_0.95_upper = function(x) hdi(x, 0.95)[2]))

summary_NGC <- bind_rows(summary_NGC, summary_N)

summary_RGC <- bind_rows(summary_RGC, summary_R)

summary_muGC <- bind_rows(summary_muGC, summary_mu)

}

summary_NGC$ID <- as.character(summary_NGC$ID)

summary_RGC$ID <- as.character(summary_RGC$ID)

summary_muGC$ID <- as.character(summary_muGC$ID)

# W7 is observed in both V12-ACS and V14-ACS

summary_NGC$ID[13] <- summary_RGC$ID[13] <- summary_muGC$ID[13] <- 'W7 (V12ACS)'

summary_NGC$ID[22] <- summary_RGC$ID[22] <- summary_muGC$ID[22] <- 'W7 (V14ACS)'

# post-processing

summary_muGC <- summary_muGC %>%

mutate(minus_mu = mu_mean - mu_HDI_0.68_lower, plus_mu = mu_HDI_0.68_upper - mu_mean)

summary_RGC <- summary_RGC %>%

mutate(minus_R = R_mode - R_HDI_0.68_lower, plus_R = R_HDI_0.68_upper - R_mode)

summary_NGC <- summary_NGC %>%

mutate_at(2:7, as.numeric) %>%

arrange(`P(N_GC = 0)`)

# GC count summary from Janssens et al. 2024

Jans_NGC <- data.frame(ID = summary_NGC$ID) %>%

mutate(Janssens = c(36, 52, 43, 20, 24, 23, 13, 20, 4, 9, 6, 13, 2, -2, 0, 8, 1, 9, 7, 9, 0, -2, 3,

0, 7, -2, 0, 1, -4, -1, 1, -4, 4, 2, 6, 4, -1, -5, -2, -1, -1),

J_se = c(8, 8, 6, 6, 13, 7, 6, 14, 8, 9, 8, 5, 7, 8, 10, 10, 7, 6, 6, 7, 10, 8, 12, 7,

8, 10, 9, 10, 9, 7, 8, 9, 8, 9, 7, 6, 5, 6, 7, 7, 7),

Re = c(2.16, 2.23, 1.99, 1.42, 5.44, 4.57, 2.72, 1.83, 1.15, 1.5, 2.22, 2.31, 1.59, 2.11,

1.17, 1.8, 1.31, 2.58, 1.92, 1.99, 1.17, 1.72, 1.67, 1.82, 1.5, 0.83, 0.97, 3.7,

3.13, 1.5, 0.91, 1.5, 0.96, 0.63, 1, 1.31, 1.54, 1.84, 1.31, 0.64, 0.81))

summary_NGC <- merge(summary_NGC, Jans_NGC)

summary_dat <- merge(merge(summary_NGC, summary_muGC, by = 'ID'), summary_RGC, by = 'ID') %>%

arrange(desc(N_mode)) %>%

mutate(data = 'DOLPHOT')We will do the same for results obtained using SExtractor (J24) probabilistic GC catalog, and combine the summary table with the one from DOLPHOT:

# everything here is the same as above, only changing the object names to identify it is based on J24.

summary_NGC_J <- data.frame()

summary_RGC_J <- data.frame()

summary_muGC_J <- data.frame()

set.seed(123456)

for(i in 1:length(fnames)){

res_i_name <- paste0(file_paths[i], 'res_prob_', fnames[i], '_Jans.RDS')

file_read <- readRDS(res_i_name)

N_GC <- as.data.frame(apply(file_read[, grepl( "N_" , names(file_read)) & !grepl( "gal" , names(file_read))], 2,

function(x) rpois(nrow(file_read), x)))

R_GC <- as.data.frame(file_read[, grepl( "R_" , names(file_read)) & !grepl( "gal" , names(file_read))])

mu_GC <- as.data.frame(file_read[, grepl( "mu_" , names(file_read)) & !grepl( "gal" , names(file_read)) & !grepl( "_0" , names(file_read))])

UDG_ID <- gsub('N_', '', colnames(N_GC))

colnames(N_GC) <- UDG_ID

colnames(R_GC) <- UDG_ID

colnames(mu_GC) <- UDG_ID

N_GC <- melt(N_GC)

summary_N <- N_GC %>%

rename(ID = variable) %>%

group_by(ID) %>%

summarise_all(list(N_mode = mlv, N_median = median, N_mean = mean,

N_HDI_0.68_lower = function(x) hdi(x, 0.68)[1],

N_HDI_0.68_upper = function(x) hdi(x, 0.68)[2],

`P(N_GC = 0)` = function(x) mean(x == 0)))

R_GC <- melt(R_GC)

summary_R <- R_GC %>%

rename(ID = variable) %>%

group_by(ID) %>%

summarise_all(list(R_mode = mlv, R_median = median, R_mean = mean,

R_HDI_0.68_lower = function(x) hdi(x, 0.68)[1],

R_HDI_0.68_upper = function(x) hdi(x, 0.68)[2]))

mu_GC <- melt(mu_GC)

summary_mu <- mu_GC %>%

rename(ID = variable) %>%

group_by(ID) %>%

summarise_all(list(mu_mode = mlv, mu_median = median, mu_mean = mean,

mu_HDI_0.68_lower = function(x) hdi(x, 0.68)[1],

mu_HDI_0.68_upper = function(x) hdi(x, 0.68)[2],

mu_HDI_0.95_lower = function(x) hdi(x, 0.95)[1],

mu_HDI_0.95_upper = function(x) hdi(x, 0.95)[2]))

summary_NGC_J <- bind_rows(summary_NGC_J, summary_N)

summary_RGC_J <- bind_rows(summary_RGC_J, summary_R)

summary_muGC_J <- bind_rows(summary_muGC_J, summary_mu)

}

summary_NGC_J$ID <- as.character(summary_NGC_J$ID)

summary_RGC_J$ID <- as.character(summary_RGC_J$ID)

summary_muGC_J$ID <- as.character(summary_muGC_J$ID)

summary_NGC_J$ID[13] <- summary_RGC_J$ID[13] <- summary_muGC_J$ID[13] <- 'W7 (V12ACS)'

summary_NGC_J$ID[22] <- summary_RGC_J$ID[22] <- summary_muGC_J$ID[22] <- 'W7 (V14ACS)'

summary_NGC_J <- summary_NGC_J %>%

mutate_at(2:7, as.numeric) %>%

arrange(`P(N_GC = 0)`)

summary_NGC_J <- merge(summary_NGC_J, Jans_NGC)

summary_dat_J <- merge(merge(summary_NGC_J, summary_muGC_J, by = 'ID'), summary_RGC_J, by = 'ID') %>%

arrange(desc(N_mode)) %>%

mutate(data = 'SExtractor (J24)')

## save the combined summary results in a data object (only do it once)

# summary_all_dat <- merge(summary_dat, summary_dat_J, by = 'ID')

# saveRDS(summary_all_dat, 'data/summary_results.RDS')Results Comparison

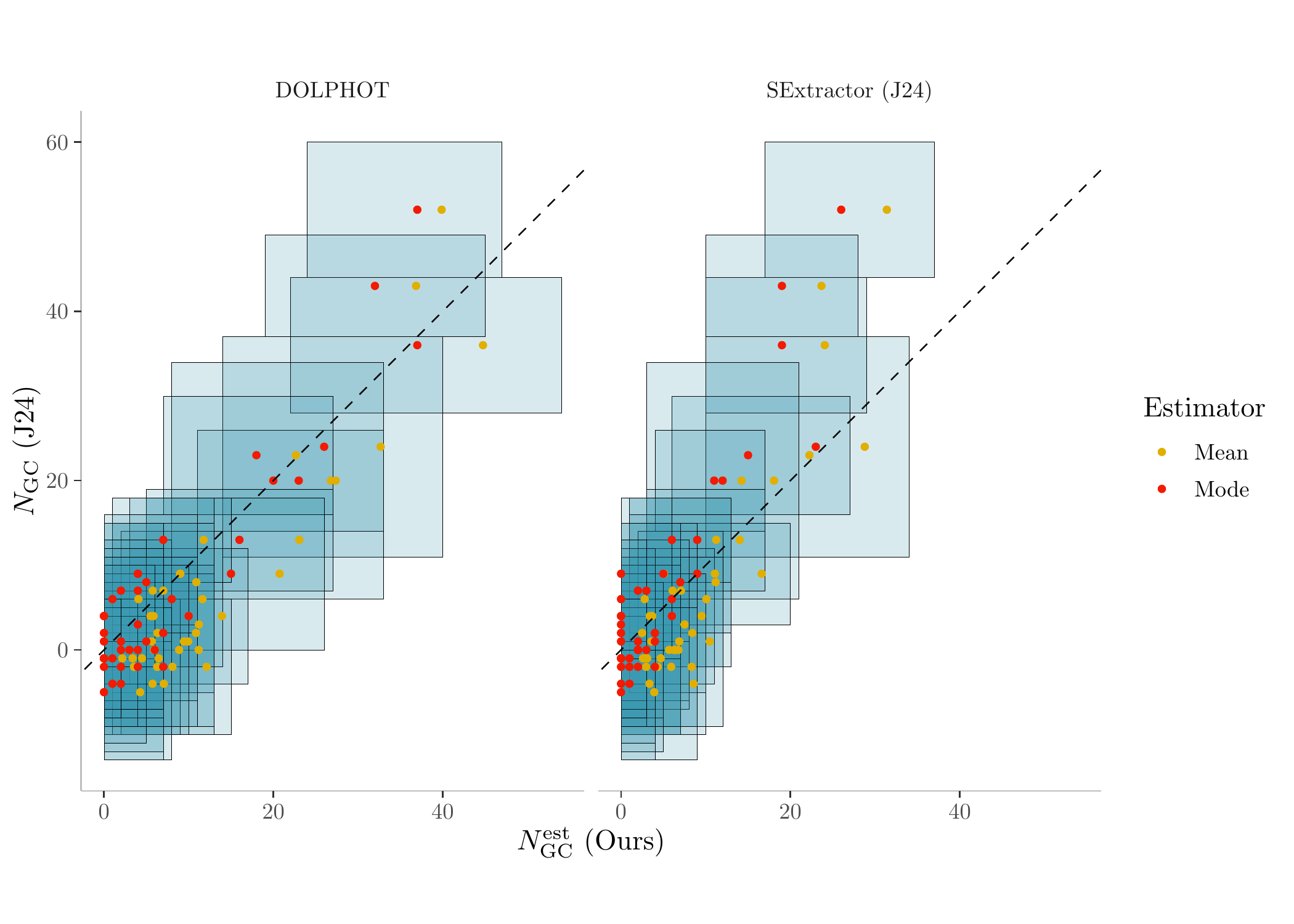

Now we can plot the GC count comparison results using the two summary data sets:

# grabbing the summary statistics of GC counts from the two summary datasets

N_sum <- bind_rows(summary_dat, summary_dat_J) %>%

dplyr::select(ID, N_mean, N_mode, N_HDI_0.68_lower, N_HDI_0.68_upper, Janssens, J_se, data) %>%

melt(., id = c('ID', 'data', 'Janssens', 'J_se','N_HDI_0.68_lower', 'N_HDI_0.68_upper')) %>%

mutate(variable = ifelse(variable == 'N_mean', 'Mean', 'Mode'))

# plot it

color <- c('Mean' = "#E1AF00", 'Mode' = "#F21A00")

ggplot(N_sum, aes(value, Janssens, color = variable)) +

geom_rect(aes(xmin = N_HDI_0.68_lower, xmax = N_HDI_0.68_upper, ymin = Janssens - J_se, ymax = Janssens + J_se), fill = '#3B9AB2', color = 'black', alpha = 0.1, size = 0.1) +

geom_point(size = 1) + geom_abline(slope = 1, intercept = 0, linetype = 'dashed') +

xlab('$N_{\\mathrm{GC}}^{\\mathrm{est}}$ (Ours)') + ylab('$N_{\\mathrm{GC}}$ (J24)') + coord_fixed() + labs(color = 'Estimator') +

theme(panel.grid.major = element_blank(), panel.background = element_blank(),

panel.grid.minor = element_blank(), axis.line = element_line(colour = "gray"),

strip.background = element_blank()) +

scale_color_manual(values = color) +

facet_wrap(.~data)

GC count estimates comparison. (See Figure 4 in the original Li et al. (2024) paper for more details)

Note that Table 3 in the paper are not generated through code,

although the numerical results are all contained in

summary_dat and summary_dat_J.

sessionInfo()R version 4.3.2 (2023-10-31)

Platform: aarch64-apple-darwin20 (64-bit)

Running under: macOS Sonoma 14.1.1

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.3-arm64/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.3-arm64/Resources/lib/libRlapack.dylib; LAPACK version 3.11.0

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

time zone: America/Toronto

tzcode source: internal

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] MATHPOP_0.0.0.9000 HDInterval_0.2.4 reshape2_1.4.4 modeest_2.4.0

[5] ggnewscale_0.4.10 wesanderson_0.3.7 xtable_1.8-4 posterior_1.6.0

[9] tikzDevice_0.12.6 ggridges_0.5.6 ggpubr_0.6.0 lubridate_1.9.3

[13] forcats_1.0.0 stringr_1.5.1 dplyr_1.1.4 purrr_1.0.2

[17] readr_2.1.5 tidyr_1.3.1 tibble_3.2.1 ggplot2_3.5.1

[21] tidyverse_2.0.0 workflowr_1.7.1

loaded via a namespace (and not attached):

[1] splines_4.3.2 later_1.3.2 klippy_0.0.0.9500

[4] polyclip_1.10-6 rpart_4.1.21 lifecycle_1.0.4

[7] sf_1.0-16 rstatix_0.7.2 rprojroot_2.0.4

[10] processx_3.8.4 lattice_0.22-5 MASS_7.3-60

[13] backports_1.5.0 magrittr_2.0.3 plotly_4.10.4

[16] sass_0.4.9 rmarkdown_2.27 jquerylib_0.1.4

[19] yaml_2.3.9 httpuv_1.6.12 askpass_1.2.0

[22] sp_2.1-4 spatstat.sparse_3.1-0 DBI_1.2.3

[25] abind_1.4-5 spatstat.model_3.2-11 mixtools_2.0.0

[28] tensorA_0.36.2.1 git2r_0.33.0 rmutil_1.1.10

[31] spatstat.utils_3.0-5 terra_1.7-78 units_0.8-5

[34] goftest_1.2-3 spatial_7.3-17 spatstat.random_3.2-3

[37] codetools_0.2-19 pdftools_3.4.0 tidyselect_1.2.1

[40] filehash_2.4-6 raster_3.6-26 farver_2.1.2

[43] stable_1.1.6 matrixStats_1.3.0 stats4_4.3.2

[46] spatstat.explore_3.2-7 jsonlite_1.8.8 e1071_1.7-14

[49] survival_3.5-7 segmented_2.1-0 tools_4.3.2

[52] progress_1.2.3 ggstar_1.0.4 Rcpp_1.0.12

[55] glue_1.7.0 gridExtra_2.3 xfun_0.45

[58] mgcv_1.9-0 distributional_0.4.0 priorsense_1.0.1

[61] loo_2.7.0 withr_3.0.0 timeSeries_4032.109

[64] fastmap_1.2.0 fansi_1.0.6 callr_3.7.6

[67] digest_0.6.36 timechange_0.3.0 R6_2.5.1

[70] qpdf_1.3.3 colorspace_2.1-0 tensor_1.5

[73] spatstat.data_3.1-2 utf8_1.2.4 generics_0.1.3

[76] data.table_1.15.4 class_7.3-22 prettyunits_1.2.0

[79] httr_1.4.7 htmlwidgets_1.6.4 whisker_0.4.1

[82] pkgconfig_2.0.3 gtable_0.3.5 timeDate_4032.109

[85] htmltools_0.5.8.1 carData_3.0-5 clue_0.3-65

[88] scales_1.3.0 knitr_1.48 rstudioapi_0.16.0

[91] tzdb_0.4.0 spatstat.linnet_3.1-5 statip_0.2.3

[94] checkmate_2.3.1 nlme_3.1-163 proxy_0.4-27

[97] cachem_1.1.0 KernSmooth_2.23-22 parallel_4.3.2

[100] fBasics_4032.96 pillar_1.9.0 grid_4.3.2

[103] vctrs_0.6.5 VGAM_1.1-11 promises_1.3.0

[106] car_3.1-2 cluster_2.1.4 evaluate_0.24.0

[109] tinytex_0.51 magick_2.8.3 cli_3.6.3

[112] compiler_4.3.2 rlang_1.1.4 crayon_1.5.3

[115] ggsignif_0.6.4 labeling_0.4.3 classInt_0.4-10

[118] ps_1.7.7 getPass_0.2-4 plyr_1.8.9

[121] fs_1.6.4 stringi_1.8.4 viridisLite_0.4.2

[124] deldir_2.0-4 assertthat_0.2.1 munsell_0.5.1

[127] lazyeval_0.2.2 spatstat.geom_3.2-9 Matrix_1.6-3

[130] hms_1.1.3 stabledist_0.7-1 highr_0.11

[133] spatstat_3.0-8 kernlab_0.9-32 broom_1.0.6

[136] bslib_0.7.0