Response time correction of Contros HydroC pCO2 data

Jens Daniel Müller

04 May, 2020

Last updated: 2020-05-04

Checks: 7 0

Knit directory: BloomSail/

This reproducible R Markdown analysis was created with workflowr (version 1.6.1). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

Great! Since the R Markdown file has been committed to the Git repository, you know the exact version of the code that produced these results.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproduciblity it’s best to always run the code in an empty environment.

The command set.seed(20191021) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 2ab39d7. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: data/Finnmaid_2018/

Ignored: data/GETM/

Ignored: data/Maps/

Ignored: data/Ostergarnsholm/

Ignored: data/TinaV/

Ignored: data/_merged_data_files/

Ignored: data/_summarized_data_files/

Ignored: data/backup/

Ignored: output/Plots/Figures_publication/.tmp.drivedownload/

Untracked files:

Untracked: output/Plots/merging_interpolation/

Untracked: output/Plots/response_time/all_plots.pdf

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/response_time.Rmd) and HTML (docs/response_time.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |

|---|---|---|---|---|

| Rmd | 2ab39d7 | jens-daniel-mueller | 2020-05-04 | All profiles and timeseries in one plot pdf |

| html | 3832733 | jens-daniel-mueller | 2020-04-30 | Build site. |

| Rmd | 4f4ab08 | jens-daniel-mueller | 2020-04-30 | harmonized code until RT determination |

| html | 1b6480f | jens-daniel-mueller | 2020-04-30 | Build site. |

| Rmd | fe72316 | jens-daniel-mueller | 2020-04-30 | revised variable and object names, used temp-dependent tau only, rerun code |

| html | b5722a7 | jens-daniel-mueller | 2020-04-28 | Build site. |

| html | 6fdf22f | jens-daniel-mueller | 2020-04-27 | Build site. |

| Rmd | f73f073 | jens-daniel-mueller | 2020-04-27 | removed Date-ID column causing error |

| html | 472c2b4 | jens-daniel-mueller | 2020-04-21 | Build site. |

| html | f8fcf50 | jens-daniel-mueller | 2020-04-19 | created pub figures for time series |

| html | a6c4c22 | jens-daniel-mueller | 2020-03-30 | Build site. |

| html | 80c78b3 | jens-daniel-mueller | 2020-03-30 | Build site. |

| html | 5f8ca30 | jens-daniel-mueller | 2020-03-20 | Build site. |

| html | 2a20453 | jens-daniel-mueller | 2020-03-20 | Build site. |

| html | 473ab25 | jens-daniel-mueller | 2020-03-19 | Build site. |

| html | 81f022e | jens-daniel-mueller | 2020-03-18 | Build site. |

| html | 1e39d85 | jens-daniel-mueller | 2020-03-18 | Build site. |

| html | 2105236 | jens-daniel-mueller | 2020-03-18 | Build site. |

| html | 05b9bdc | jens-daniel-mueller | 2020-03-17 | Build site. |

| html | 0202742 | jens-daniel-mueller | 2020-03-16 | Build site. |

| html | 8e83afd | jens-daniel-mueller | 2020-03-12 | Build site. |

| html | a3ddea4 | jens-daniel-mueller | 2020-03-12 | Build site. |

| html | 52621ea | jens-daniel-mueller | 2020-03-12 | Build site. |

| html | e43a6f2 | jens-daniel-mueller | 2019-12-19 | Build site. |

| html | 3042ff3 | jens-daniel-mueller | 2019-12-19 | Build site. |

| Rmd | 282c3ac | jens-daniel-mueller | 2019-12-19 | whole data set RT corrected |

| html | 0bbafef | jens-daniel-mueller | 2019-12-13 | Build site. |

| Rmd | b9c2b8f | jens-daniel-mueller | 2019-12-13 | Finalized RT correction |

| html | b9c2b8f | jens-daniel-mueller | 2019-12-13 | Finalized RT correction |

| html | 9a4e5c3 | jens-daniel-mueller | 2019-12-13 | Build site. |

| Rmd | cb16002 | jens-daniel-mueller | 2019-12-13 | SD of RT check up vs downcast |

| html | cb16002 | jens-daniel-mueller | 2019-12-13 | SD of RT check up vs downcast |

| html | 5e3fcfb | jens-daniel-mueller | 2019-12-12 | Build site. |

| Rmd | f1cd72d | jens-daniel-mueller | 2019-12-12 | High res tau and RT evaluation |

| html | f1cd72d | jens-daniel-mueller | 2019-12-12 | High res tau and RT evaluation |

| html | 8abe41d | jens-daniel-mueller | 2019-12-10 | Build site. |

| Rmd | 0fcfdbf | jens-daniel-mueller | 2019-12-10 | excluded partial profiles from summary stats |

| html | 70e07ef | jens-daniel-mueller | 2019-12-10 | Build site. |

| Rmd | 935bc85 | jens-daniel-mueller | 2019-12-10 | dep interval profiles decreased to 1m |

| html | 78710ee | jens-daniel-mueller | 2019-12-09 | Build site. |

| Rmd | c6cfca5 | jens-daniel-mueller | 2019-12-09 | RT correction incl OGB data |

| html | 4d3910a | jens-daniel-mueller | 2019-12-09 | Build site. |

| Rmd | 25aeefd | jens-daniel-mueller | 2019-12-09 | data_base included OGB data |

| html | 0e13786 | jens-daniel-mueller | 2019-12-03 | Build site. |

| Rmd | 8da790d | jens-daniel-mueller | 2019-12-03 | tau optimum included |

| html | 4f1a268 | jens-daniel-mueller | 2019-12-03 | Build site. |

| Rmd | 216ad8a | jens-daniel-mueller | 2019-12-03 | response time correction extended |

| html | 216ad8a | jens-daniel-mueller | 2019-12-03 | response time correction extended |

| html | fd0f33d | jens-daniel-mueller | 2019-11-26 | Build site. |

| Rmd | 421c48c | jens-daniel-mueller | 2019-11-26 | found temperature dependence of RT |

| html | 421c48c | jens-daniel-mueller | 2019-11-26 | found temperature dependence of RT |

| html | bc6f19b | jens-daniel-mueller | 2019-11-22 | Build site. |

| Rmd | 03b1b97 | jens-daniel-mueller | 2019-11-22 | updated RT determination |

| html | d921065 | jens-daniel-mueller | 2019-11-14 | Build site. |

| Rmd | 252f84d | jens-daniel-mueller | 2019-11-14 | included EDA in data base |

| html | d61a468 | jens-daniel-mueller | 2019-11-14 | Build site. |

| Rmd | 76e38c6 | jens-daniel-mueller | 2019-11-14 | rebuild site, new toc depth |

| html | 08b9a38 | jens-daniel-mueller | 2019-11-08 | Build site. |

| Rmd | ad4aa12 | jens-daniel-mueller | 2019-11-08 | Finalized RT determination |

| html | ad4aa12 | jens-daniel-mueller | 2019-11-08 | Finalized RT determination |

| html | b8dac9c | jens-daniel-mueller | 2019-11-08 | Build site. |

| Rmd | efcf0d6 | jens-daniel-mueller | 2019-11-08 | Finalized and cleaned RT determination |

| html | f3277a5 | jens-daniel-mueller | 2019-11-08 | Build site. |

| Rmd | aa27fd4 | jens-daniel-mueller | 2019-11-08 | Finalized RT determination |

| html | 4256bcf | jens-daniel-mueller | 2019-11-08 | Build site. |

| Rmd | 7f52e66 | jens-daniel-mueller | 2019-11-08 | response_time pdf updated |

| html | 72687ee | jens-daniel-mueller | 2019-11-08 | Build site. |

| Rmd | 74632d6 | jens-daniel-mueller | 2019-11-08 | response_time pdf updated |

| html | 74212a6 | jens-daniel-mueller | 2019-11-08 | Build site. |

| Rmd | 6cb1935 | jens-daniel-mueller | 2019-11-08 | response_time updated |

| html | 33e3659 | jens-daniel-mueller | 2019-10-22 | Build site. |

| Rmd | efcafd1 | jens-daniel-mueller | 2019-10-22 | Added data base, merging, and RT determination |

| html | 1595fe9 | jens-daniel-mueller | 2019-10-21 | Build site. |

| html | a059c41 | jens-daniel-mueller | 2019-10-21 | Build site. |

| Rmd | eff54ce | jens-daniel-mueller | 2019-10-21 | Added CTD read-in |

| html | 32ec4f7 | jens-daniel-mueller | 2019-10-21 | Build site. |

| Rmd | b2d2bbb | jens-daniel-mueller | 2019-10-21 | Structured data base and response time Rmd |

| html | bafa88f | jens-daniel-mueller | 2019-10-21 | Build site. |

| Rmd | 53ad162 | jens-daniel-mueller | 2019-10-21 | Structured data base and response time Rmd |

| html | 076a36b | jens-daniel-mueller | 2019-10-21 | Build site. |

| Rmd | 3e8a32e | jens-daniel-mueller | 2019-10-21 | Structured data base and response time Rmd |

| html | b2d0164 | jens-daniel-mueller | 2019-10-21 | Build site. |

| Rmd | 53ae361 | jens-daniel-mueller | 2019-10-21 | Added data base and response time Rmd |

library(tidyverse)

library(seacarb)

library(broom)

library(lubridate)

library(tibbletime)

library(patchwork)1 List of relevant parameters

Response time determination

- Fit interval length: Use tau from one or all fit interval length? Scale fit interval length to expected tau?

- tau residual threshold (mean absolute residula <1% of final pCO2, entire group removed)

- Mean vs T-dependent tau

Response time correction

- correction procedure (Bittig vs. Fiedler, Miloshevich, Fietzek)

- Smoothing

Quality assesment of response time correction

- Down-Up-difference vs reference value

- Depth interval width for offset calculation

- Max depth for Down-Up-difference (currently upper 20m)

- NA criterion for included Down-Up-difference (currently 2)

2 Response time determination

2.1 HydroC sensor settings

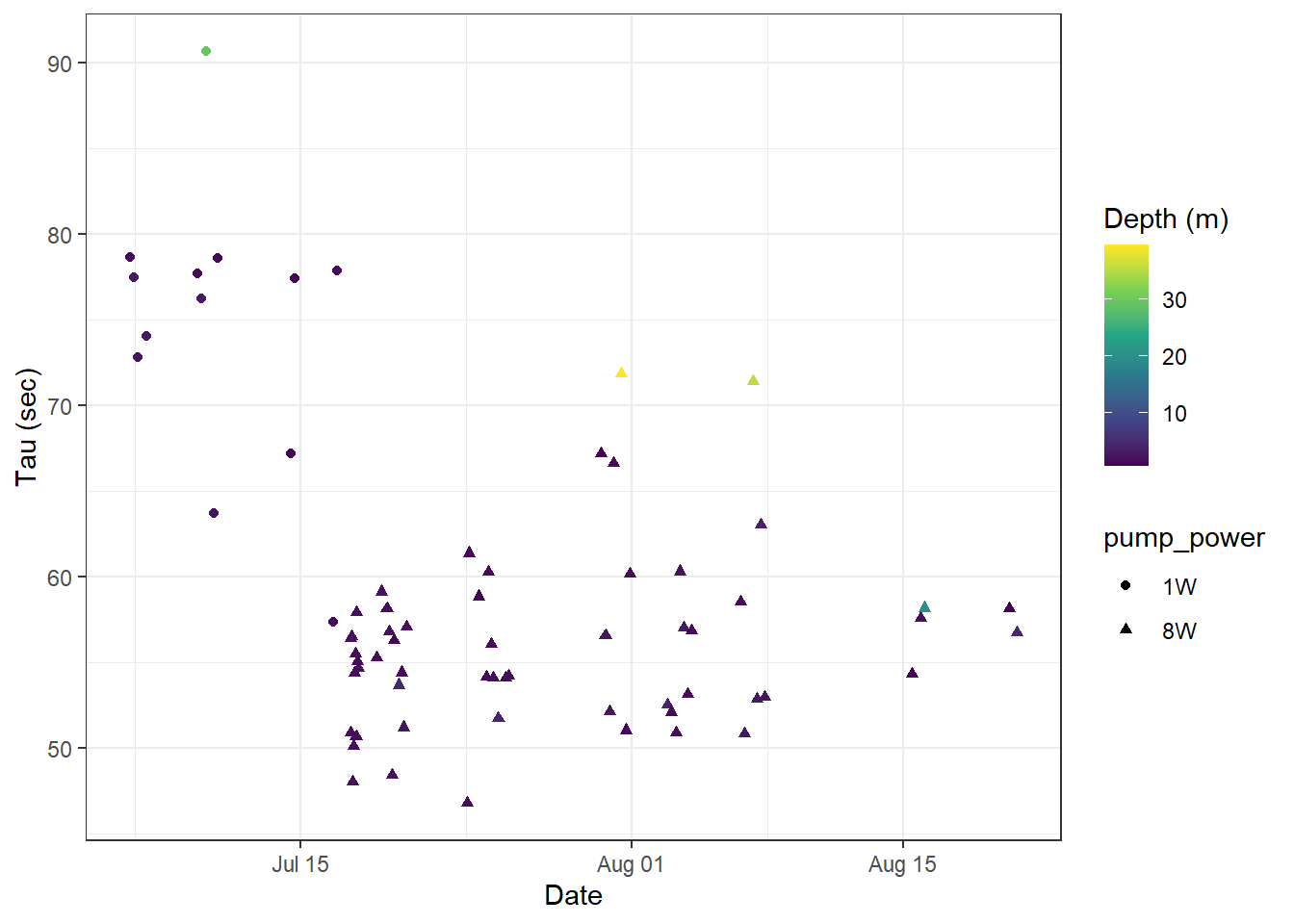

The sensor was first run with a low power pump (1W). Later - and for most parts of the expedition - with a stronger (8W) pump. Pumps were switched between recordings (data file: SD_datafile_20180718_170417CO2-0618-001.txt):

- 2018-07-17;13:08:34

- 2018-07-17;13:08:35

Logging frequency for all measurement modes (Measure, Zero, Flush) was increased in two steps, It was:

10 sec for all recordings including SD_datafile_20180714_073641CO2-0618-001.txt

2 sec after change in SD_datafile_20180717_121052CO2-0618-001.txt at:

- 2018-07-14;07:52:02

- 2018-07-14;07:52:12

- 2018-07-14;07:52:14

1 sec after change in SD_datafile_20180718_170417CO2-0618-001 at:

- 2018-07-17;12:27:25

- 2018-07-17;12:27:27

- 2018-07-17;12:27:28

2.2 Model fitting

Response times were determined by fitting a nonlinear least-squares model with the nls function as described here by Douglas Watson.

- Flush period length: variable

- Flush period restricted to equilibration phase, avoiding initial gas mixing effects occuring at the start of each Flush period

- only completed Flush periods (duration > 500 sec) included

tm <- read_csv(here::here("data/_merged_data_files/merging_interpolation",

"tm.csv"),

col_types = cols(ID = col_character(),

pCO2_analog = col_double(),

pCO2_corr = col_double(),

Zero = col_factor(),

Flush = col_factor(),

Zero_counter = col_integer(),

deployment = col_integer(),

duration = col_double(),

mixing = col_character(),

lat = col_double(),

lon = col_double()))

tm <- tm %>%

select(date_time, ID, dep, tem, Flush, pCO2_corr, Zero_counter, duration, mixing)

tm_flush <- tm %>%

filter(Flush == 1, mixing == "equilibration")

rm(tm)

tm_flush <- tm_flush %>%

group_by(Zero_counter) %>%

mutate(duration = duration- min(duration)) %>%

ungroup()2.2.1 Example plot

An example plot for a nls model fitted to pCO2 observations during a Flush phase is shown below.

i <- unique(tm_flush$Zero_counter)[30]

tm_flush_counter <- tm_flush %>%

filter(Zero_counter == i, duration <= 300)

fit <- tm_flush_counter %>%

nls(pCO2_corr ~ SSasymp(duration, yf, y0, log_alpha), data = .)

tau <- as.numeric(exp(-tidy(fit)[3,2]))

pCO2_corr_end <- as.numeric(tidy(fit)[1,2])

pCO2_corr_start <- as.numeric(tidy(fit)[2,2])

pCO2_corr_delta = pCO2_corr_end - pCO2_corr_start

resid_abs_mean <- mean(abs(resid(fit)))

augment(fit) %>%

ggplot(aes(duration, pCO2_corr))+

geom_point()+

geom_line(aes(y = .fitted))+

geom_vline(xintercept = tau)+

geom_hline(yintercept = pCO2_corr_start + 0.63 *(pCO2_corr_delta))+

labs(y=expression(pCO[2]~(µatm)), x="Duration of Flush period (s)")

Example response time determination by non-linear least squares fit to the pCO2 recovery signal after zeroing. The vertical line indicates the determined response time tau. The horizontal line indicates 63% of the difference between start and final fitted pCO2.

rm(tm_flush_counter, fit, i, tau,

pCO2_corr_delta, pCO2_corr_end, pCO2_corr_start,

resid_abs_mean)2.2.2 Flush intervals

duration_intervals <- seq(150,500,50)As there was some speculation about the dependence of determined response times (\(\tau\)) on the chosen duration of the fit interval, the response time \(\tau\) was determined for all zeroings and for total durations of:

150, 200, 250, 300, 350, 400, 450, 500 secs

2.2.3 Residual limits

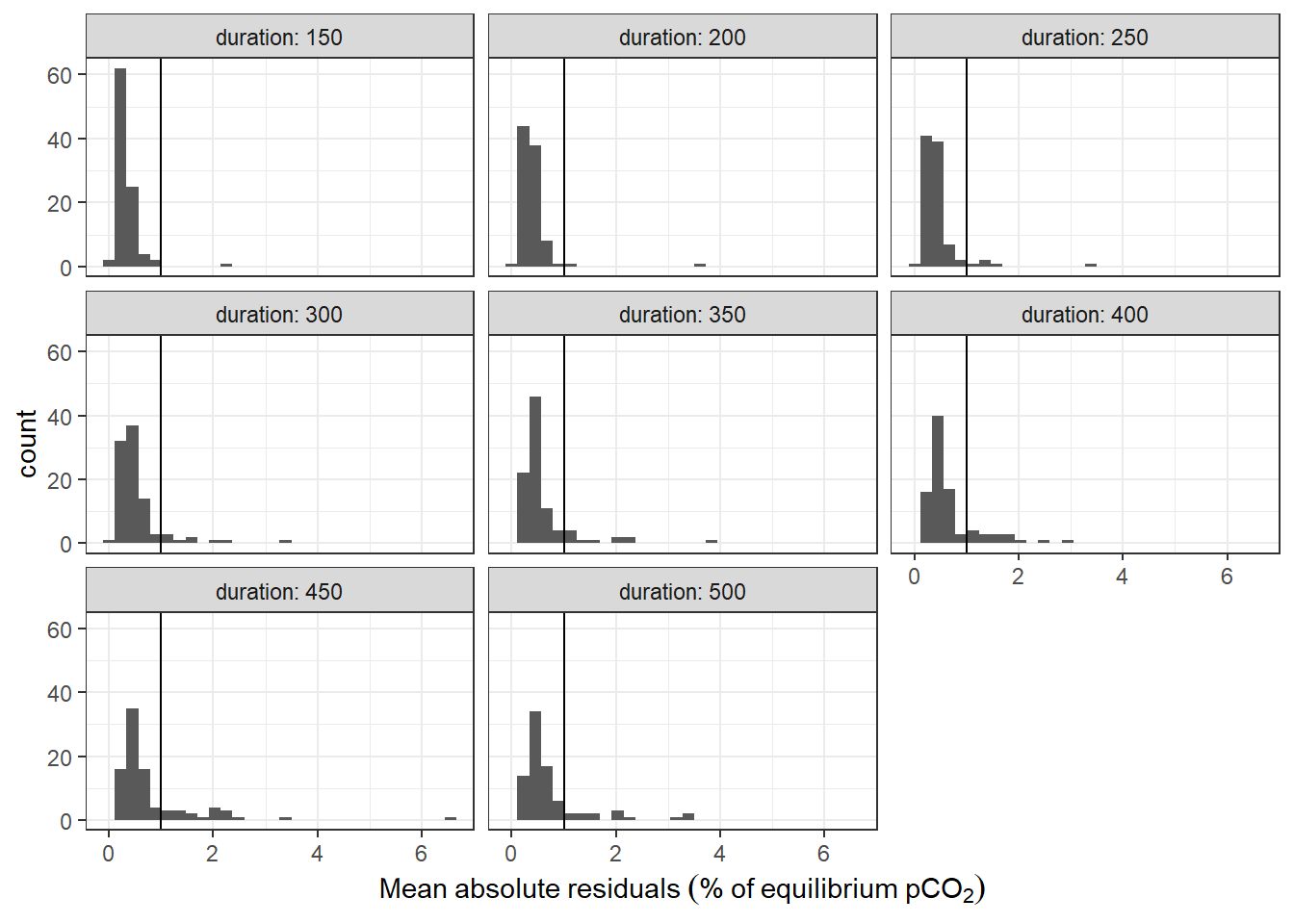

resid_lim <- 1A mean absolute residual threshold of >1% of final pCO2 was defined.

2.2.4 Fitting algorithm

pdf(file=here::here("output/Plots/response_time",

"tau_determination_pCO2_corr_flushperiods_nls.pdf"),

onefile = TRUE, width = 7, height = 4)

for (i in unique(tm_flush$Zero_counter)) {

for (max_duration in duration_intervals) {

tm_flush_counter <- tm_flush %>%

filter(Zero_counter == i, duration <= max_duration)

fit <-

try(

tm_flush_counter %>%

nls(pCO2_corr ~ SSasymp(duration, yf, y0, log_alpha), data = .),

TRUE)

if (class(fit) == "nls"){

tau <- as.numeric(exp(-tidy(fit)[3,2]))

pCO2_corr_end <- as.numeric(tidy(fit)[1,2])

pCO2_corr_start <- as.numeric(tidy(fit)[2,2])

pCO2_corr_delta = pCO2_corr_end - pCO2_corr_start

resid_abs_mean <- mean(abs(resid(fit))/pCO2_corr_end)*100

temp <- as_tibble(bind_cols(Zero_counter = i,

duration = max_duration,

date_time = mean(tm_flush_counter$date_time),

dep = mean(tm_flush_counter$dep),

tem = mean(tm_flush_counter$tem),

pCO2_corr = pCO2_corr_end,

tau = tau,

resid = resid_abs_mean))

if (exists("tau_values")){tau_values <- bind_rows(tau_values, temp)}

else {tau_values <- temp}

if (resid_abs_mean > resid_lim){warn <- "orange"}

else {warn <- "black"}

print(

augment(fit) %>%

ggplot(aes(duration, pCO2_corr))+

geom_point(col = warn)+

geom_line(aes(y = .fitted))+

geom_vline(xintercept = tau)+

geom_hline(yintercept = pCO2_corr_start + 0.63 *(pCO2_corr_delta))+

labs(y=expression(pCO[2]~(µatm)), x="Duration of Flush period (s)",

title = paste("Zero_counter: ", i,

"Tau: ", round(tau,1),

"Mean absolute residual (%): ", round(resid_abs_mean, 2)))+

xlim(0,600)

)

}

else {

temp <- as_tibble(bind_cols(Zero_counter = i,

duration = max_duration,

date_time = mean(tm_flush_counter$date_time),

dep = mean(tm_flush_counter$dep),

tem = mean(tm_flush_counter$tem),

pCO2_corr = pCO2_corr_end,

tau = NaN,

resid = NaN))

if (exists("tau_values")){tau_values <- bind_rows(tau_values, temp)}

else {tau_values <- temp}

print(

tm_flush_counter %>%

ggplot(aes(duration, pCO2_corr))+

geom_point(col="red")+

labs(y=expression(pCO[2]~(µatm)), x="Duration of Flush period (s)",

title = paste("Zero_counter: ", i,

"nls model failed"))+

xlim(0,600)

)

}

}

}

dev.off()

rm(tm_flush_counter, fit, i, tau, pCO2_corr_delta, pCO2_corr_end, pCO2_corr_start, temp, max_duration, resid_abs_mean, warn)

tau_values %>%

write_csv(here::here("data/_merged_data_files/response_time",

"tau_values.csv"))

rm(tau_values, tm_flush)A pdf with plots of all individual response time fits can be accessed here. In this pdf, response time fits that exceed the residual criterion (Mean absolute residual >1% of final pCO2) are printed in orange. Data from flush periods without succesful fit are printed red.

tau_values <-

read_csv(here::here("data/_merged_data_files/response_time",

"tau_values.csv"))

# define periods of different pumps used

max_Zero_counter <- max(unique(tau_values[tau_values$date_time < ymd_hms("2018-07-17;13:08:34"),]$Zero_counter))

tau_values <- tau_values %>%

mutate(pump_power = if_else(Zero_counter <= max_Zero_counter, "1W", "8W"))

# subset determined tau values by residual threshold

tau_resid <- tau_values %>%

group_by(Zero_counter) %>%

mutate(resid_max = max(resid, na.rm = TRUE)) %>%

filter(resid_max < resid_lim) %>%

select(-resid_max) %>%

ungroup()

tau_resid_out <- tau_values %>%

group_by(Zero_counter) %>%

mutate(resid_max = max(resid, na.rm = TRUE)) %>%

filter(resid_max > resid_lim) %>%

select(-resid_max) %>%

ungroup()

# Flush periods where model failure occured

tau_values %>%

filter(is.na(resid)) %>%

group_by(Zero_counter) %>%

summarise(n()) %>%

ungroup()

# Flush periods removed due to residual criterion

tau_resid_out %>%

group_by(Zero_counter) %>%

summarise(n()) %>%

ungroup()

# mean tau of first RT determination

tau_resid %>%

filter(Zero_counter == 2) %>%

summarise(tau = mean(tau))

# mean tau of all RT determinations before pump switch, except first

tau_resid %>%

filter(Zero_counter != 2, Zero_counter <= 20) %>%

summarise(tau = mean(tau))

tau_resid <- tau_resid %>%

filter(Zero_counter != 2)

# calculate some metrics for the subsetting

n_Zero_counters <- tau_values %>%

group_by(Zero_counter) %>%

n_groups()

n_duration_intervals <- length(duration_intervals)

n_tau_max <- n_Zero_counters * length(duration_intervals)

n_tau_total <- nrow(tau_values %>% filter(!is.na(resid)))

n_tau_resid <- nrow(tau_resid)2.3 Outcome

2.3.1 General considerations

Estimated \(\tau\) values were only taken into account when stable environmental pCO2 levels were present. Absence of stable environmental pCO2 was assumed when the mean absolute fit residual was above 1 % of the final equilibrium pCO2. If one model fit (irrespective the chosen fit interval length) of a particular flush period did not match that criterion, the flush period was ignored entirely. Usually, fits with higher duration did not meet this criterion. For some unexplained reason the first \(\tau\) determination resulted in values about twice as high as all other flush periods and was therefore removed as an outlier.

Metrics to characterize the fitting procedure include the number of:

- Flush periods: 97

- Duration intervals: 8

- Exercised response time fits: 776

- Succesful response times determinations: 741 (95.5)%

- \(\tau\)’s after removing groups of fits with high absolute fit residual: 560 (72.2 %)

It should be noted that all failed model fits occured in flush periods where the residual criterion was not meet by at least one other fit (i.e. fitting only failed under unstable conditions).

tau_values %>%

ggplot(aes(resid))+

geom_histogram()+

facet_wrap(~duration, labeller = label_both)+

geom_vline(xintercept = resid_lim)+

labs(x=expression(Mean~absolute~residuals~("%"~of~equilibrium~pCO[2])))

Histogram of residuals from fit displayed for the investigate durations of the fit interval. Vertical line represents the chosen threshold.

2.3.2 Fit interval length

No clear dependence of \(\tau\) on the length of the flushing period was found.

tau_resid %>%

group_by(Zero_counter) %>%

mutate(d_tau = tau - mean(tau)) %>%

ggplot(aes(duration, d_tau))+

geom_hline(yintercept = 0)+

geom_smooth()+

geom_point()+

facet_wrap(~Zero_counter, ncol = 4, labeller = label_both)+

labs(x="Duration (sec)", y="Deviation from mean tau (sec)")

Determined tau values as a function of the fit interval duration, displayed individually for each flush period.

tau_resid %>%

group_by(Zero_counter) %>%

mutate(d_tau = tau - mean(tau)) %>%

ggplot(aes(duration, d_tau, group=duration))+

geom_violin()+

geom_point()+

labs(x="Duration (sec)", y="Deviation from mean tau (sec)")+

facet_wrap(~pump_power)

Determined tau values as a function of the fit interval duration, pooled for all flush period.

# tau_resid %>%

# group_by(Zero_counter) %>%

# mutate(d_tau = tau - mean(tau)) %>%

# ungroup() %>%

# group_by(duration) %>%

# summarise(d_tau_sd = sd(d_tau, na.rm = TRUE)) %>%

# ungroup()

tau_resid <- tau_resid %>%

filter(duration == 300) %>%

select(-duration)2.3.3 Time series

tau_resid %>%

ggplot(aes(date_time, tau, col=dep, shape=pump_power))+

geom_point()+

scale_color_viridis_c(name="Depth (m)")+

labs(y="Tau (sec)", x="Date")

Tau for all Zeroings with color representing water depth.

2.3.4 Temperature dependence

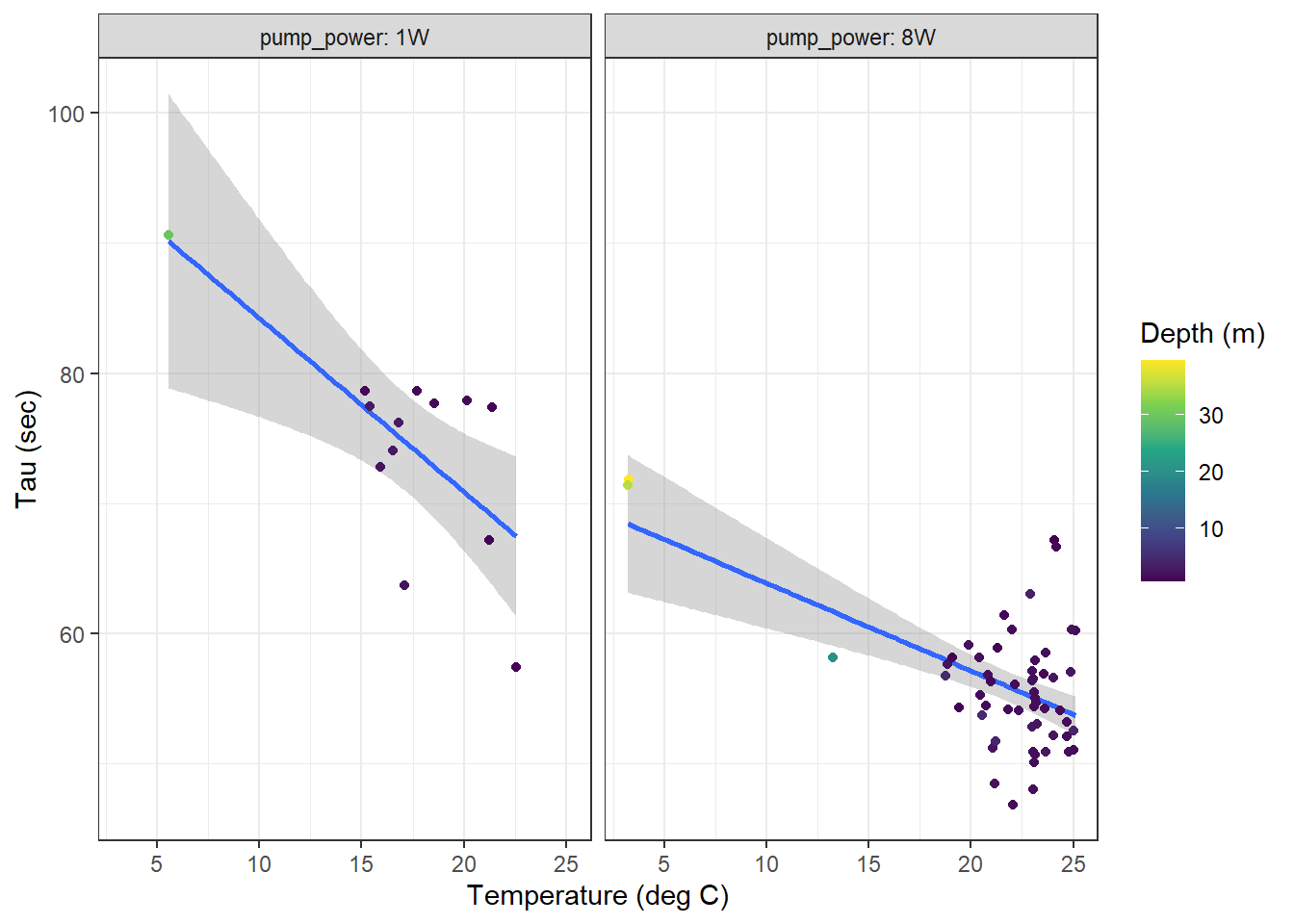

A temperature dependence of determined response times \(\tau\) was found, with similar slopes but different intercepts for both pumps used.

tau_resid %>%

ggplot(aes(tem, tau, col=dep))+

geom_smooth(method = "lm")+

geom_point()+

scale_color_viridis_c(name="Depth (m)")+

labs(y="Tau (sec)", x="Temperature (deg C)")+

facet_wrap(~pump_power, labeller = label_both)

Tau as a function of temperature for all zeroings determined with low power (left) and strong (right) pump. Color represents the water depth.

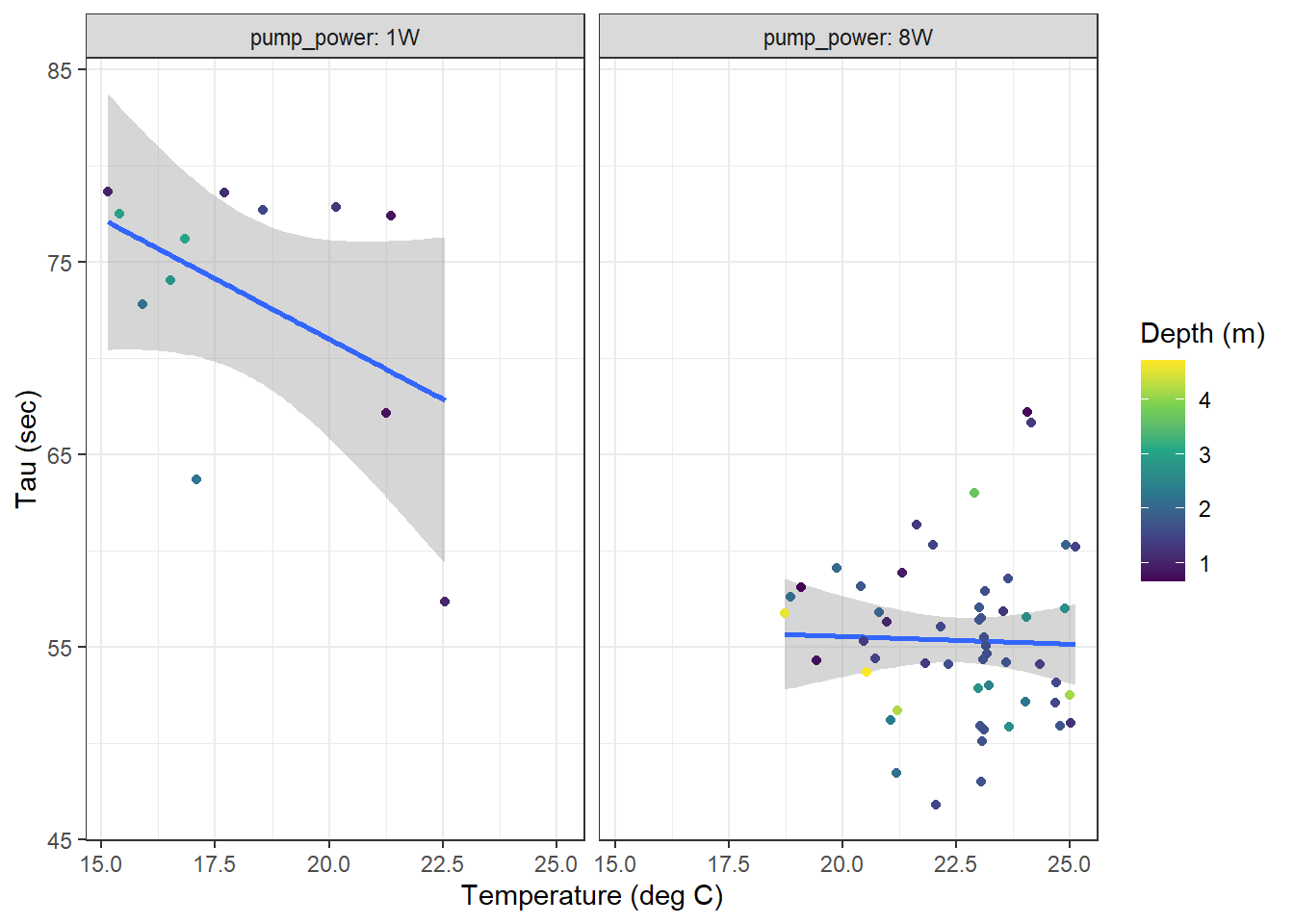

For the response times determined near the surface (<10m, restricted temperature range), no clear temperature dependence of \(\tau\) was detected.

tau_resid %>%

filter(dep < 10) %>%

ggplot(aes(tem, tau, col=dep))+

geom_smooth(method = "lm")+

geom_point()+

scale_color_viridis_c(name="Depth (m)")+

labs(y="Tau (sec)", x="Temperature (deg C)")+

facet_wrap(~pump_power, labeller = label_both)

Surface tau (<10m) as a function of temperature for all zeroings determined with low power (left) and strong (right) pump. Color represents the water depth.

2.4 Final tau

Finally, the mean response times are:

tau_mean <- tau_resid %>%

group_by(pump_power) %>%

summarise(tau = mean(tau, na.rm = TRUE))

tau_mean# A tibble: 2 x 2

pump_power tau

<chr> <dbl>

1 1W 74.6

2 8W 56.0But we can also fit response times as a function of water temperature:

tau_fit <- tau_resid %>%

group_by(pump_power) %>%

do(fit = lm(tau ~ tem, data = .)) %>%

tidy(fit) %>%

select(pump_power, term, estimate) %>%

spread(term, estimate)

tau_fit# A tibble: 2 x 3

# Groups: pump_power [2]

pump_power `(Intercept)` tem

<chr> <dbl> <dbl>

1 1W 97.6 -1.33

2 8W 70.6 -0.672tau_fit %>% write_csv(here::here("data/_merged_data_files/response_time",

"tau_fit.csv"))

rm(list=setdiff(ls(), c("tau_resid", "tau_fit")))Both response time estimated (constant mean vs T-dependent) will be applied to correct the recorded pCO2 profiles.

3 Response time correction

3.1 Data preparation

Following tasks were performed to prepare data for the response time correction:

- Select only profiles

- Assign deployment periods with 1W- and 8W- pump

tm <- read_csv(here::here("data/_merged_data_files/merging_interpolation",

"tm.csv"),

col_types = cols(ID = col_character(),

pCO2_analog = col_double(),

pCO2_corr = col_double(),

Zero = col_factor(),

Flush = col_factor(),

Zero_counter = col_integer(),

deployment = col_integer(),

duration = col_double(),

mixing = col_character(),

lat = col_double(),

lon = col_double()))

# extract relevant parts

tm <- tm %>%

select(date_time, ID, type, station, dep, sal, tem,

Zero, Flush, pCO2_corr, deployment, Zero_counter)

tm <- tm %>%

filter(type == "P")

tm <- tm %>%

group_by(ID, station) %>%

mutate(duration = as.numeric(date_time - min(date_time)),

pump_power = if_else(date_time < ymd_hms("2018-07-17;13:08:34"), "1W", "8W")) %>%

arrange(date_time)- Include manually derived meta-information about the profiling status

# Load profile meta data

meta <- read_csv(here::here("Data/TinaV/Sensor",

"Sensor_meta.csv"),

col_types = cols(ID = col_character()))

# Merge data and meta information

tm <- full_join(tm, meta)

rm(meta)

# creating descriptive variables ------------------------------------------

tm <- tm %>%

mutate(phase = "standby",

phase = if_else(duration >= start &

duration < down &

!is.na(down) &

!is.na(start),

"down", phase),

phase = if_else(duration >= down &

duration < lift &

!is.na(lift) &

!is.na(down ),

"low", phase),

phase = if_else(duration >= lift &

duration < up &

!is.na(up ) &

!is.na(lift ),

"mid", phase),

phase = if_else(duration >= up &

duration < end &

!is.na(end ) &

!is.na(up ),

"up", phase))

tm <- tm %>%

select(-c(start, down, lift, up, end, comment))

tm <- tm %>%

filter(Zero == 0, Flush == 0)- Subset reference pCO2 recordings at the end of equilibration periods executed at constant depth

tm_pCO2_equi <- tm %>%

filter(phase %in% c("mid")) %>%

group_by(ID, station) %>%

top_n(5, row_number()) %>%

summarise(date_time = mean(date_time),

duration = mean(duration),

pCO2_corr = mean(pCO2_corr, na.rm = TRUE),

dep = mean(dep, na.rm = TRUE)) %>%

ungroup()

tm_pCO2_equi %>%

write_csv(here::here("data/_merged_data_files/response_time",

"tm_pCO2_equi.csv"))- Select profile for example plots

example_ID <- "180730"

example_station <- "P01"cast_dep <- tm %>%

pivot_longer(c(dep, pCO2_corr), names_to = "parameter", values_to = "value")

cast_dep_equi <- tm_pCO2_equi %>%

pivot_longer(c(dep, pCO2_corr), names_to = "parameter", values_to = "value")

max_duration <- round(max(cast_dep$duration)/1000,0)*1000

i_ID <- example_ID

i_station <- example_station

cast_dep_equi_sub <- cast_dep_equi %>%

filter(ID == i_ID,

station == i_station)

cast_dep %>%

filter(ID == i_ID,

station == i_station) %>%

ggplot(aes(duration, value, col=phase))+

geom_point(size=0.5)+

geom_point(data = cast_dep_equi_sub, aes(duration, value), col="black")+

scale_y_reverse()+

scale_x_continuous(breaks = seq(0,6000,500))+

labs(title = str_c("Date: ",i_ID," | Station: ",i_station))+

facet_grid(parameter~., scales = "free_y")

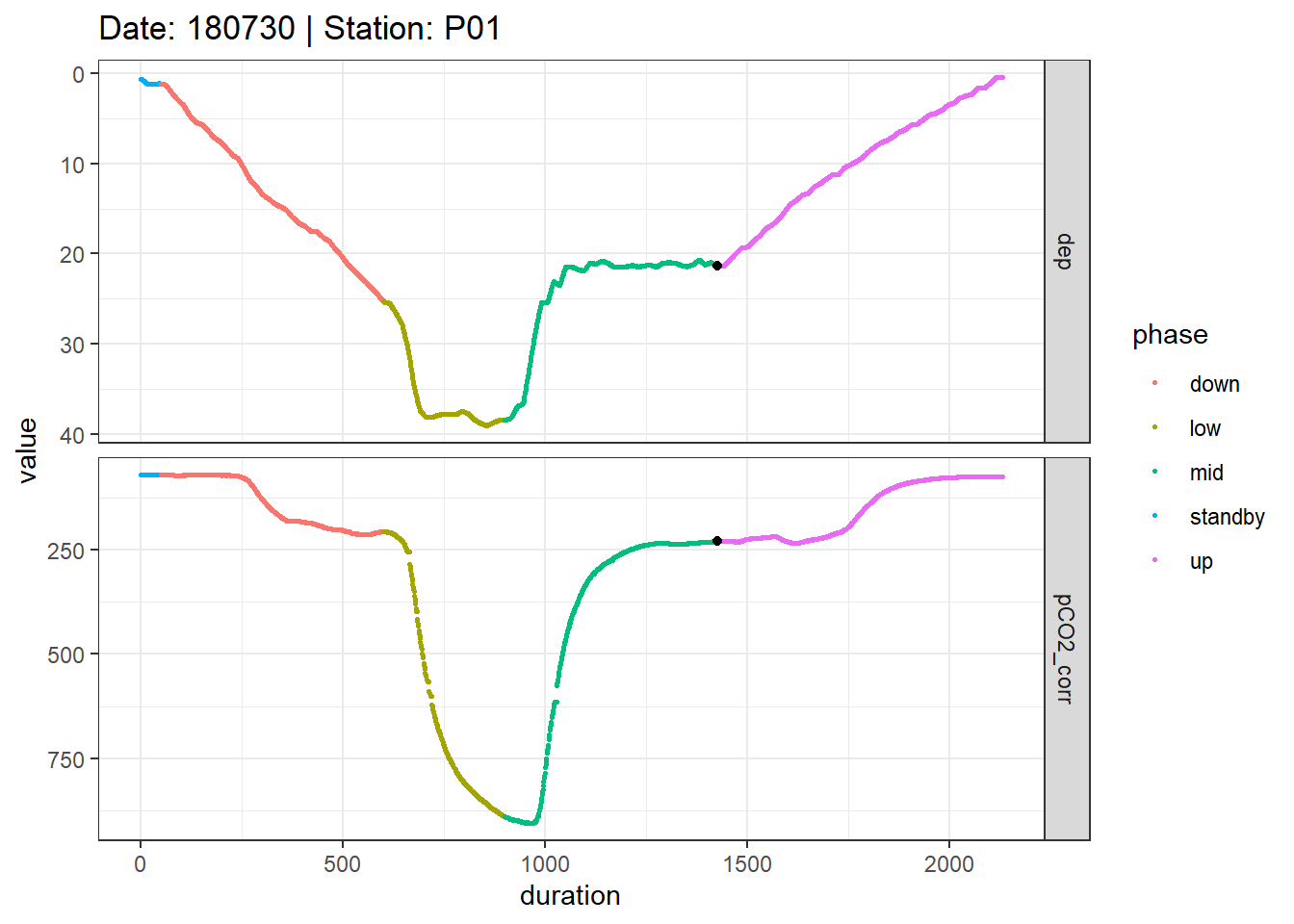

Example timeseries of profiling depth and pCO2. Colors represent manually assigned profiling phases. The black points represent reference data collected at the end of the mid equilibration period.

rm(cast_dep, cast_dep_equi, cast_dep_equi_sub, i_station, i_ID, max_duration)A pdf with all timeseries plots of profiling depth and pCO2 can be accessed here.

cast_dep <- tm %>%

pivot_longer(c(dep, pCO2_corr), names_to = "parameter", values_to = "value")

cast_dep_equi <- tm_pCO2_equi %>%

pivot_longer(c(dep, pCO2_corr), names_to = "parameter", values_to = "value")

max_duration <- round(max(cast_dep$duration)/1000,0)*1000

pdf(file=here::here("output/Plots/response_time",

"time_series_depth_pCO2_corr_by_profile.pdf"),

onefile = TRUE, width = 7, height = 4)

for(i_ID in unique(cast_dep$ID)){

for(i_station in unique(cast_dep$station)){

if (nrow(cast_dep %>% filter(ID == i_ID, station == i_station)) > 0){

cast_dep_equi_sub <- cast_dep_equi %>%

filter(ID == i_ID,

station == i_station)

print(

cast_dep %>%

filter(ID == i_ID,

station == i_station) %>%

ggplot(aes(duration, value, col=phase))+

geom_point(size=0.5)+

geom_point(data = cast_dep_equi_sub, aes(duration, value), col="black")+

scale_y_reverse()+

scale_x_continuous(breaks = seq(0,6000,500))+

labs(title = str_c("Date: ",i_ID," | Station: ",i_station))+

facet_grid(parameter~., scales = "free_y")+

theme_bw()

)

}

}

}

dev.off()

rm(cast_dep, cast_dep_equi, cast_dep_equi_sub, i_station, i_ID, max_duration)3.2 Reponse time correction

The executed response time correction featured the following aspects:

- Correction according to Bittig et al. (2018, supplement)

- RT: Constant mean vs. T-dependent response times applied (both independently quantified for 1W- and 8W-pump)

- tau_factor: Factor ranging from 0.8 - 1.6 applied to determined tau values

- Post-smoothing: 30 sec running mean (eg across 15 observations at 2 sec measurement frequency)

# Response time correction approach after Bittig --------------------------

RT_corr <- function(c1, c0, dt, tau) {

( 1 / ( 2* (( 1+(2*tau/dt) )^(-1) ))) * (c1 - (1-(2* (( 1+(2*tau/dt) )^(-1) ))) * c0)

}

# Assign mean response time (tau) values ----------------------------------------------

# df_mean <- full_join(df, tau_mean)

#

# df_mean <- df_mean %>%

# mutate(RT = "constant")

# Assign T-dependent response time (tau) values ----------------------------------------------

tau_fit <- tau_fit %>%

rename(tau_intercept = `(Intercept)`, tau_slope=tem)

tm_fit <- full_join(tm, tau_fit)

tm_fit <- tm_fit %>%

mutate(tau = tau_intercept + tau_slope *tem) %>%

select(-tau_intercept, -tau_slope)

# tm_fit <- tm_fit %>%

# mutate(RT = "T-dependent")

# Merge data sets with constand and T-dependent tau

tm <- tm_fit

#tm <- bind_rows(tm_fit, tm_mean)

rm(tm_fit)

# p1 <- tm %>%

# ggplot(aes(tau, dep, col=pump_power))+

# geom_point()+

# scale_y_reverse()

#

# p2 <- tm %>%

# ggplot(aes(tem, dep, col=pump_power))+

# geom_point()+

# scale_y_reverse()

#

# p1 | p2

#

# rm(p1, p2)

# Prepare data set for RT correction --------------------------------------

tm <- tm %>%

#group_by(RT) %>%

arrange(date_time) %>%

mutate(dt = as.numeric(as.character(date_time - lag(date_time))))

# ungroup()

# measurement frequency

freq <- tm %>%

filter(dt < 13) %>%

group_by(ID) %>%

summarise(dt_mean = round(mean(dt, na.rm = TRUE),0))

tm <- full_join(tm, freq)

# tau factors

tm <- expand_grid(tm, tau_factor = seq(0.8, 1.6, 0.2))

tm <- tm %>%

mutate(tau_test = tau*tau_factor)

# Apply RT correction to entire data set

for(i_ID in unique(tm$ID)){

#i_ID <- "180716"

freq_sub <- freq %>% filter(ID == i_ID) %>% pull(dt_mean)

window <- 30 / freq_sub

rolling_mean <- rollify(~mean(.x, na.rm = TRUE), window = window)

tm_sub <- tm %>%

filter(ID == i_ID) %>%

group_by(station, tau_factor) %>%

mutate(pCO2_RT = RT_corr(pCO2_corr, lag(pCO2_corr), dt, tau_test),

pCO2_RT = if_else(pCO2_RT %in% c(Inf, -Inf), NaN, pCO2_RT),

window = window,

pCO2_mean = rolling_mean(pCO2_RT)

#pCO2_RT_median = rolling_median(pCO2_RT)

) %>%

ungroup()

# time shift RT corrected data

shift <- as.integer(as.character(window/2))

tm_sub <- tm_sub %>%

group_by(station, tau_factor) %>%

mutate(pCO2_mean = lead(pCO2_mean, shift)) %>%

ungroup()

if (exists("tm_RT")){tm_RT <- bind_rows(tm_RT, tm_sub)}

else{tm_RT <- tm_sub}

rm(tm_sub, freq_sub, rolling_mean, shift, window)

}

tm_RT %>%

write_csv(here::here("data/_merged_data_files/response_time",

"tm_RT_profiles_by_tau_factor.csv"))

rm(i_ID, freq, RT_corr, tm_RT, tau_fit, tau_resid)As an alternative, the high resolution RT correction can be run.

# Response time correction approach after Bittig --------------------------

RT_corr <- function(c1, c0, dt, tau) {

( 1 / ( 2* (( 1+(2*tau/dt) )^(-1) ))) * (c1 - (1-(2* (( 1+(2*tau/dt) )^(-1) ))) * c0)

}

# Assign mean response time (tau) values ----------------------------------------------

# df_mean <- full_join(df, tau_mean)

#

# df_mean <- df_mean %>%

# mutate(RT = "constant")

# Assign T-dependent response time (tau) values ----------------------------------------------

tau_fit <- tau_fit %>%

rename(tau_intercept = `(Intercept)`, tau_slope=tem)

tm_fit <- full_join(tm, tau_fit)

tm_fit <- tm_fit %>%

mutate(tau = tau_intercept + tau_slope *tem) %>%

select(-tau_intercept, -tau_slope)

# tm_fit <- tm_fit %>%

# mutate(RT = "T-dependent")

# Merge data sets with constand and T-dependent tau

tm <- tm_fit

#tm <- bind_rows(tm_fit, tm_mean)

rm(tm_fit)

# p1 <- tm %>%

# ggplot(aes(tau, dep, col=pump_power))+

# geom_point()+

# scale_y_reverse()

#

# p2 <- tm %>%

# ggplot(aes(tem, dep, col=pump_power))+

# geom_point()+

# scale_y_reverse()

#

# p1 | p2

#

# rm(p1, p2)

# Prepare data set for RT correction --------------------------------------

tm <- tm %>%

#group_by(RT) %>%

arrange(date_time) %>%

mutate(dt = as.numeric(as.character(date_time - lag(date_time))))

# ungroup()

# measurement frequency

freq <- tm %>%

filter(dt < 13) %>%

group_by(ID) %>%

summarise(dt_mean = round(mean(dt, na.rm = TRUE),0))

tm <- full_join(tm, freq)

# tau factors

tm <- expand_grid(tm, tau_factor = seq(0.8, 1.6, 0.05))

tm <- tm %>%

mutate(tau_test = tau*tau_factor)

# Apply RT correction to entire data set

for(i_ID in unique(tm$ID)){

#i_ID <- "180716"

freq_sub <- freq %>% filter(ID == i_ID) %>% pull(dt_mean)

window <- 30 / freq_sub

rolling_mean <- rollify(~mean(.x, na.rm = TRUE), window = window)

tm_sub <- tm %>%

filter(ID == i_ID) %>%

group_by(station, tau_factor) %>%

mutate(pCO2_RT = RT_corr(pCO2_corr, lag(pCO2_corr), dt, tau_test),

pCO2_RT = if_else(pCO2_RT %in% c(Inf, -Inf), NaN, pCO2_RT),

window = window,

pCO2_mean = rolling_mean(pCO2_RT)

#pCO2_RT_median = rolling_median(pCO2_RT)

) %>%

ungroup()

# time shift RT corrected data

shift <- as.integer(as.character(window/2))

tm_sub <- tm_sub %>%

group_by(station, tau_factor) %>%

mutate(pCO2_mean = lead(pCO2_mean, shift)) %>%

ungroup()

if (exists("tm_RT")){tm_RT <- bind_rows(tm_RT, tm_sub)}

else{tm_RT <- tm_sub}

rm(tm_sub, freq_sub, rolling_mean, shift, window)

}

tm_RT %>%

write_csv(here::here("data/_merged_data_files/response_time",

"tm_RT_profiles_by_tau_factor_high_res.csv"))

rm(i_ID, freq, RT_corr, tm_RT, tau_fit, tau_resid, tm)tm <- read_csv(here::here("data/_merged_data_files/response_time",

"tm_RT_profiles_by_tau_factor.csv"),

col_types = cols(ID = col_character(),

Zero = col_factor(),

Flush = col_factor(),

p_type = col_factor(),

Zero_counter = col_integer(),

deployment = col_integer()))

i_ID <- example_ID

i_station <- example_station

equi_cast <- tm_pCO2_equi %>%

filter(ID == i_ID,

station == i_station)

tm %>%

filter(ID == i_ID,

station == i_station,

phase %in% c("up", "down")) %>%

ggplot()+

geom_path(aes(pCO2_corr, dep, linetype = phase, col="raw"))+

geom_path(aes(pCO2_mean, dep, linetype = phase, col="corrected"))+

geom_point(data = equi_cast, aes(pCO2_corr, dep))+

scale_y_reverse()+

scale_color_brewer(palette = "Set1", name="")+

labs(y="Depth [m]", x=expression(pCO[2]~(µatm)),

title = str_c("Date: ",i_ID," | Station: ",i_station))+

facet_grid(tau_factor~., labeller = label_both)

Example plot of response time corrected and raw pCO2 profiles. Panels highlight the effect of constant vs T-dependent tau estimates (columns) and the optimization by applying a constant factor (rows).

rm(equi_cast)A pdf with all timeseries plots of profiling depth and pCO2 can be accessed here

pdf(file=here::here("output/Plots/response_time",

"profiles_pCO2_mean.pdf"), onefile = TRUE, width = 7, height = 11)

for(i_ID in unique(tm$ID)){

for(i_station in unique(tm$station)){

if (nrow(tm %>% filter(ID == i_ID, station == i_station)) > 0){

equi_cast <- tm_pCO2_equi %>%

filter(ID == i_ID,

station == i_station)

print(

tm %>%

filter(ID == i_ID,

station == i_station,

phase %in% c("up", "down")) %>%

ggplot()+

geom_path(aes(pCO2_corr, dep, linetype = phase, col="raw"))+

geom_path(aes(pCO2_mean, dep, linetype = phase, col="corrected"))+

geom_point(data = equi_cast, aes(pCO2_corr, dep))+

scale_y_reverse()+

scale_color_brewer(palette = "Set1", name="")+

labs(y="Depth [m]", title = str_c("Date: ",i_ID," | Station: ",i_station))+

theme_bw()+

facet_grid(tau_factor~., labeller = label_both)

)

}

}

}

dev.off()

rm(equi_cast, i_ID, i_station)4 Diagnosis

In the following, the success of the response time correction is assessed through the offset between the downcast and:

- Upcast

- pCO2 reference value (recorded after equilibration period during upcast)

The offset comparison requires to discretize the depth recording. Depth intervals of 1m were chosen.

First, we analyse all profiles individually. Later we’ll merge the information across profiles and come up with a single metric to quantive the quality of the response time correction

4.1 Down- vs upcast

# pCO2 offset up - down cast

tm_grid <- tm %>%

filter(phase %in% c("down", "up")) %>%

mutate(dep_grid = as.numeric(as.character( cut(dep, seq(0,40,1), seq(0.5,39.5,1)))),

tau_factor = as.factor(tau_factor)) %>%

select(ID, station, tau_factor, p_type, dep_grid, phase, pCO2_corr, pCO2_mean) %>%

group_by(ID, station, tau_factor, p_type, dep_grid, phase) %>%

summarise_all("mean", na.rm = TRUE) %>%

ungroup() %>%

pivot_longer(cols = c(pCO2_corr, pCO2_mean), names_to = "correction") %>%

pivot_wider(names_from = phase, values_from = value) %>%

mutate(pCO2_delta = up - down,

pCO2_mean = (down + up)/2,

pCO2_delta_rel = 100 * pCO2_delta / pCO2_mean)

# descritize depth recordings of equilibrated pCO2 values

tm_pCO2_equi_grid <- tm_pCO2_equi %>%

mutate(dep_grid = as.numeric(as.character(cut(dep, seq(0,40,1), seq(0.5,39.5,1))))) %>%

select(ID, station, dep_grid, pCO2_equi=pCO2_corr)

tm_grid %>%

write_csv(here::here("data/_merged_data_files/response_time",

"tm_grid.csv"))

tm_pCO2_equi_grid %>%

write_csv(here::here("data/_merged_data_files/response_time",

"tm_pCO2_equi_grid.csv"))

rm(tm_grid, tm_pCO2_equi_grid)tm_grid <-

read_csv(here::here("data/_merged_data_files/response_time",

"tm_grid.csv"),

col_types = cols(ID = col_character()))

tm_pCO2_equi_grid <-

read_csv(here::here("data/_merged_data_files/response_time",

"tm_pCO2_equi_grid.csv"),

col_types = cols(ID = col_character()))

i_ID <- example_ID

i_station <- example_station

tm_pCO2_equi_grid_sub <- tm_pCO2_equi_grid %>%

filter(ID == i_ID,

station == i_station)

tm_grid %>%

filter(ID == i_ID,

station == i_station) %>%

arrange(dep_grid) %>%

ggplot()+

geom_path(aes(down, dep_grid, col=correction, linetype="down"))+

geom_path(aes(up, dep_grid, col=correction, linetype ="up"))+

geom_point(data = tm_pCO2_equi_grid_sub, aes(pCO2_equi, dep_grid))+

scale_y_reverse(breaks=seq(0,40,2))+

scale_linetype(name="cast")+

scale_color_brewer(palette = "Set1", direction = -1)+

labs(y="Depth [m]", x=expression(pCO[2]~(µatm)),

title = str_c("Date: ",i_ID," | Station: ",i_station))+

facet_grid(tau_factor~., labeller = label_both)

Example plot of discretized, response time corrected and raw pCO2 profiles. Panels highlight the effect of constant vs T-dependent tau estimates (columns) and the optimization by applying a constant factor (rows). The black point indicates the reference pCO2 value.

rm(tm_pCO2_equi_grid_sub, i_ID, i_station)A pdf with all discretized pCO2 profiles can be assessed here

pdf(file=here::here("output/Plots/response_time",

"profiles_pCO2_mean_grid.pdf"), onefile = TRUE, width = 7, height = 11)

for(i_ID in unique(tm_grid$ID)){

for(i_station in unique(tm_grid$station)){

if (nrow(tm_grid %>% filter(ID == i_ID, station == i_station)) > 0){

tm_pCO2_equi_grid_sub <- tm_pCO2_equi_grid %>%

filter(ID == i_ID,

station == i_station)

print(

tm_grid %>%

filter(ID == i_ID,

station == i_station) %>%

arrange(dep_grid) %>%

ggplot()+

geom_path(aes(down, dep_grid, col=correction, linetype="down"))+

geom_path(aes(up, dep_grid, col=correction, linetype ="up"))+

geom_point(data = tm_pCO2_equi_grid_sub, aes(pCO2_equi, dep_grid))+

scale_y_reverse(breaks=seq(0,40,2))+

scale_linetype(name="cast")+

scale_color_brewer(palette = "Set1", direction = -1)+

labs(y="Depth [m]", title = str_c("Date: ",i_ID," | Station: ",i_station))+

theme_bw()+

facet_grid(tau_factor~., labeller = label_both)

)

rm(tm_pCO2_equi_grid_sub)

}

}

}

dev.off()i_ID <- example_ID

i_station <- example_station

tm_grid %>%

filter(ID == i_ID,

station == i_station,

correction == "pCO2_mean") %>%

arrange(dep_grid) %>%

ggplot(aes(pCO2_delta, dep_grid, col=as.factor(tau_factor)))+

geom_path()+

geom_point()+

scale_y_reverse(breaks=seq(0,40,2))+

scale_color_discrete(name="tau factor")+

labs(x=expression(Delta~pCO[2]~(µatm)),

y="Depth [m]",

title = str_c("Date: ",i_ID," | Station: ",i_station))+

geom_vline(xintercept = 0)+

geom_vline(xintercept = c(-10,10), col="red")

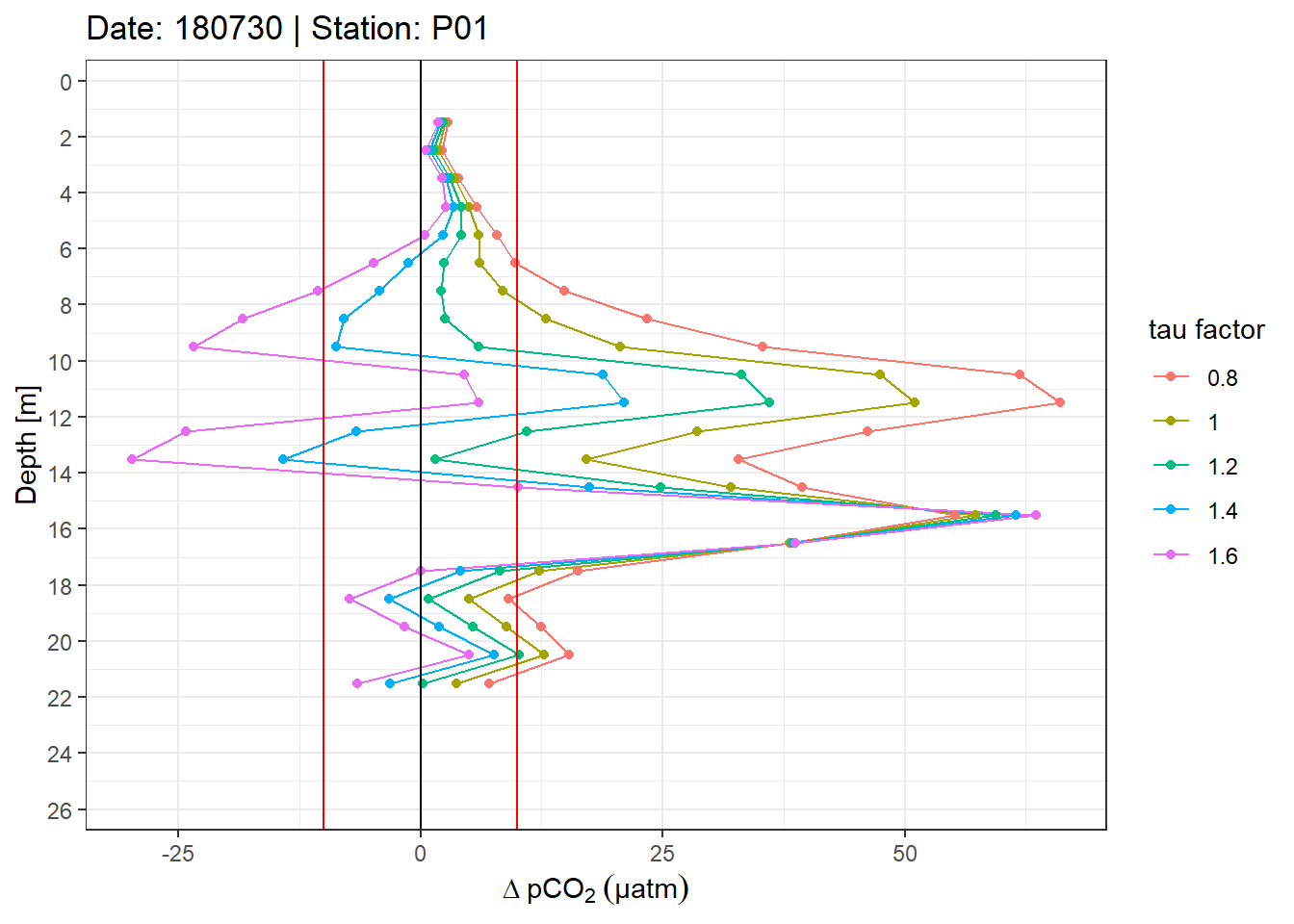

Example plot of absolute pCO2 offset profiles. Panels highlight the effect of constant vs T-dependent tau estimates. Colour indicates the optimization by applying a constant factor to tau. Vertical red lines mark an arbitray 10 µatm pCO2 threshold.

rm(i_ID, i_station)pdf(file=here::here("output/Plots/response_time",

"profiles_pCO2_delta_grid.pdf"), onefile = TRUE, width = 7, height = 7)

for(i_ID in unique(tm_grid$ID)){

for(i_station in unique(tm_grid$station)){

if (nrow(tm_grid %>% filter(ID == i_ID, station == i_station)) > 0){

print(

tm_grid %>%

filter(ID == i_ID,

station == i_station,

correction == "pCO2_mean") %>%

arrange(dep_grid) %>%

ggplot(aes(pCO2_delta, dep_grid, col=as.factor(tau_factor)))+

geom_path()+

geom_point()+

scale_y_reverse(breaks=seq(0,40,2))+

scale_color_discrete(name="tau factor")+

labs(x = "delta pCO2 [µatm]", y="Depth [m]", title = str_c("Date: ",i_ID," | Station: ",i_station))+

geom_vline(xintercept = 0)+

geom_vline(xintercept = c(-10,10), col="red")+

theme_bw()

)

}

}

}

dev.off()A pdf with all absolute pCO2 offset profiles can be assessed here.

i_ID <- example_ID

i_station <- example_station

tm_grid %>%

filter(ID == i_ID,

station == i_station,

correction == "pCO2_mean") %>%

arrange(dep_grid) %>%

ggplot(aes(pCO2_delta_rel, dep_grid, col=as.factor(tau_factor)))+

geom_path()+

geom_point()+

scale_y_reverse(breaks=seq(0,40,2))+

scale_color_discrete(name="tau factor")+

labs(x=expression(Delta~pCO[2]~("%"~of~absolute~value)), y="Depth [m]",

title = str_c("Date: ",i_ID," | Station: ",i_station))+

geom_vline(xintercept = 0)+

geom_vline(xintercept = c(-10,10), col="red")

Example plot of relative offset pCO2 profiles. Panels highlight the effect of constant vs T-dependent tau estimates. Colour indicates the optimization by applying a constant factor to tau. Vertical red lines mark an arbitray 10% threshold.

A pdf with all relative pCO2 offset profiles can be assessed here.

pdf(file=here::here("output/Plots/response_time",

"profiles_pCO2_delta_rel_grid.pdf"), onefile = TRUE, width = 7, height = 7)

for(i_ID in unique(tm_grid$ID)){

for(i_station in unique(tm_grid$station)){

if (nrow(tm_grid %>% filter(ID == i_ID, station == i_station)) > 0){

print(

tm_grid %>%

filter(ID == i_ID,

station == i_station,

correction == "pCO2_mean") %>%

arrange(dep_grid) %>%

ggplot(aes(pCO2_delta_rel, dep_grid, col=as.factor(tau_factor)))+

geom_path()+

geom_point()+

scale_y_reverse(breaks=seq(0,40,2))+

scale_color_discrete(name="tau factor")+

labs(x = "delta pCO2 [% of absolute value]",

y="Depth [m]",

title = str_c("Date: ",i_ID," | Station: ",i_station))+

geom_vline(xintercept = 0)+

geom_vline(xintercept = c(-10,10), col="red")+

theme_bw()

)

}

}

}

dev.off()4.2 Downcast vs reference value

tm_equi_delta <- full_join(tm_grid, tm_pCO2_equi_grid) %>%

filter(!is.na(pCO2_equi)) %>%

mutate(pCO2_delta_equi = down - pCO2_equi,

pCO2_delta_equi_rel = 100 * pCO2_delta_equi / pCO2_equi)tm_equi_delta %>%

filter(tau_factor == 1.2, correction=="pCO2_mean") %>%

ggplot(aes(pCO2_equi, pCO2_delta_equi))+

geom_hline(yintercept = 0)+

geom_point()+

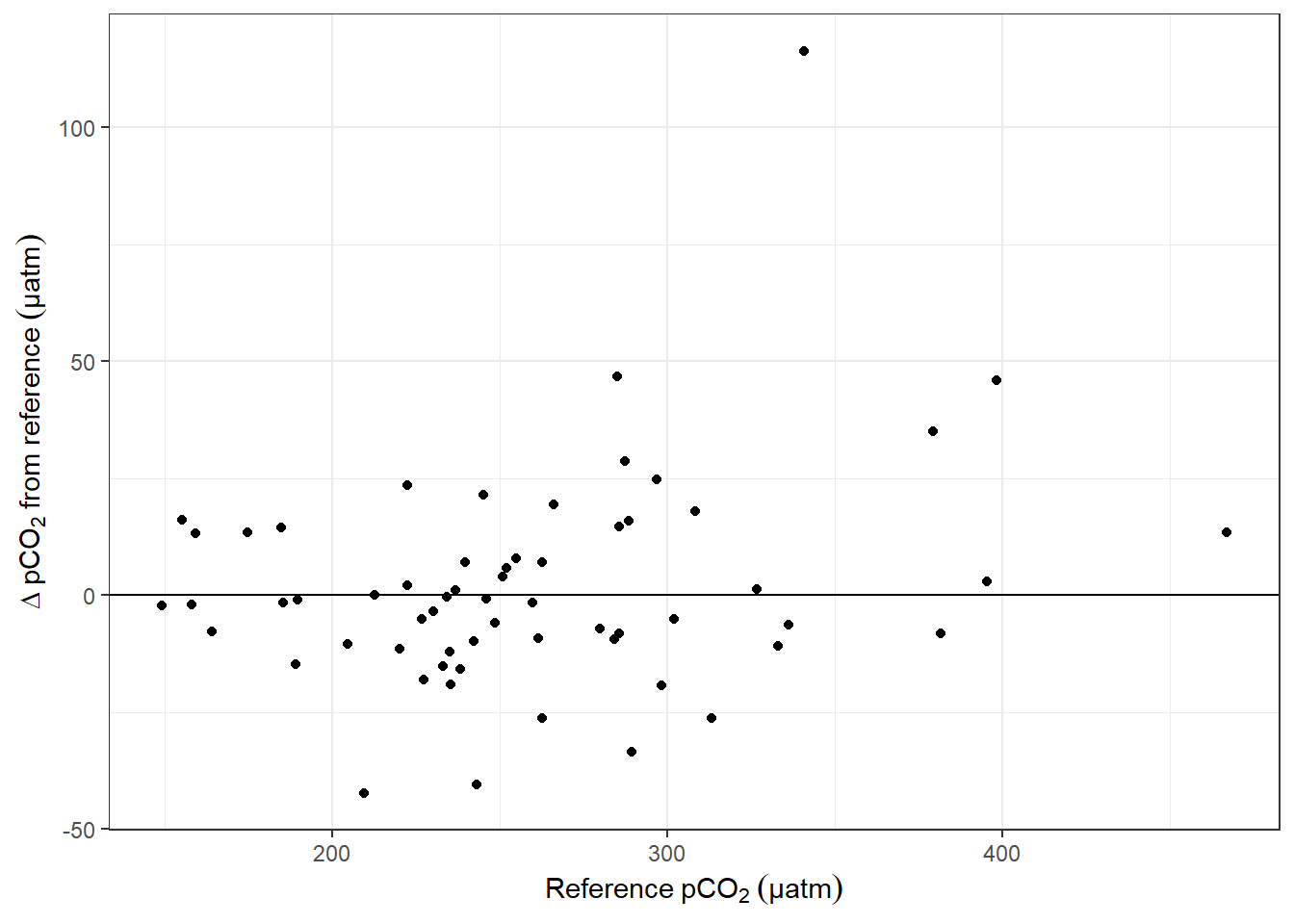

labs(x=expression(Reference~pCO[2]~(µatm)), y=expression(Delta~pCO[2]~from~reference~(µatm)))

Offset between pCO2 downcast and upcast reference value as a function of absolute pCO2. (Conditions: T-dependent tau, Factor = 1.2.

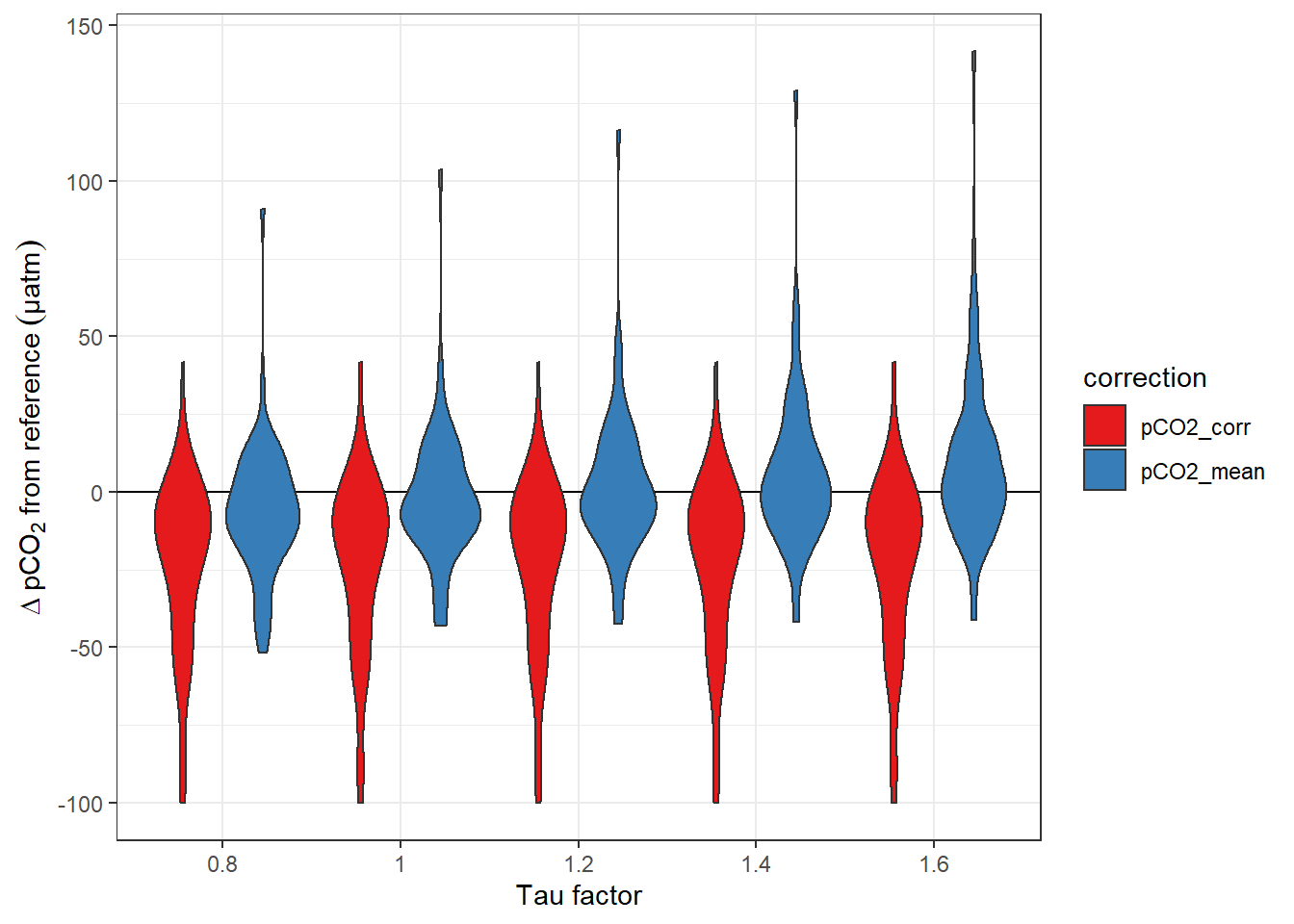

tm_equi_delta %>%

ggplot(aes(as.factor(tau_factor), pCO2_delta_equi, fill=correction))+

geom_hline(yintercept = 0)+

geom_violin()+

labs(y=expression(Delta~pCO[2]~from~reference~(µatm)), x="Tau factor")+

scale_fill_brewer(palette = "Set1")

Offset between pCO2 downcast and upcast reference value. Panels highlight the effect of constant vs T-dependent tau estimates. Colour distinguish raw and corrected offsets.

4.3 Summary metrics

In order to decide, which conditions resulted in the best response correction the mean absoulte and relative pCO2 offset across all profiles was calculated for:

- the offset between downcast and reference value

- the offset between downcast and upcast

- constant and T-dependent tau

- applied tau factors

Summary statistics were restricted to complete shallow profiles (not more than 2 observations missing from 1m depth intervals, maximum depth 20m).

tm_grid_stat <- tm_grid %>%

filter(correction == "pCO2_mean") %>%

group_by(ID, station) %>%

summarise(dep_max = max(dep_grid),

pCO2_max = max(down)) %>%

ungroup()

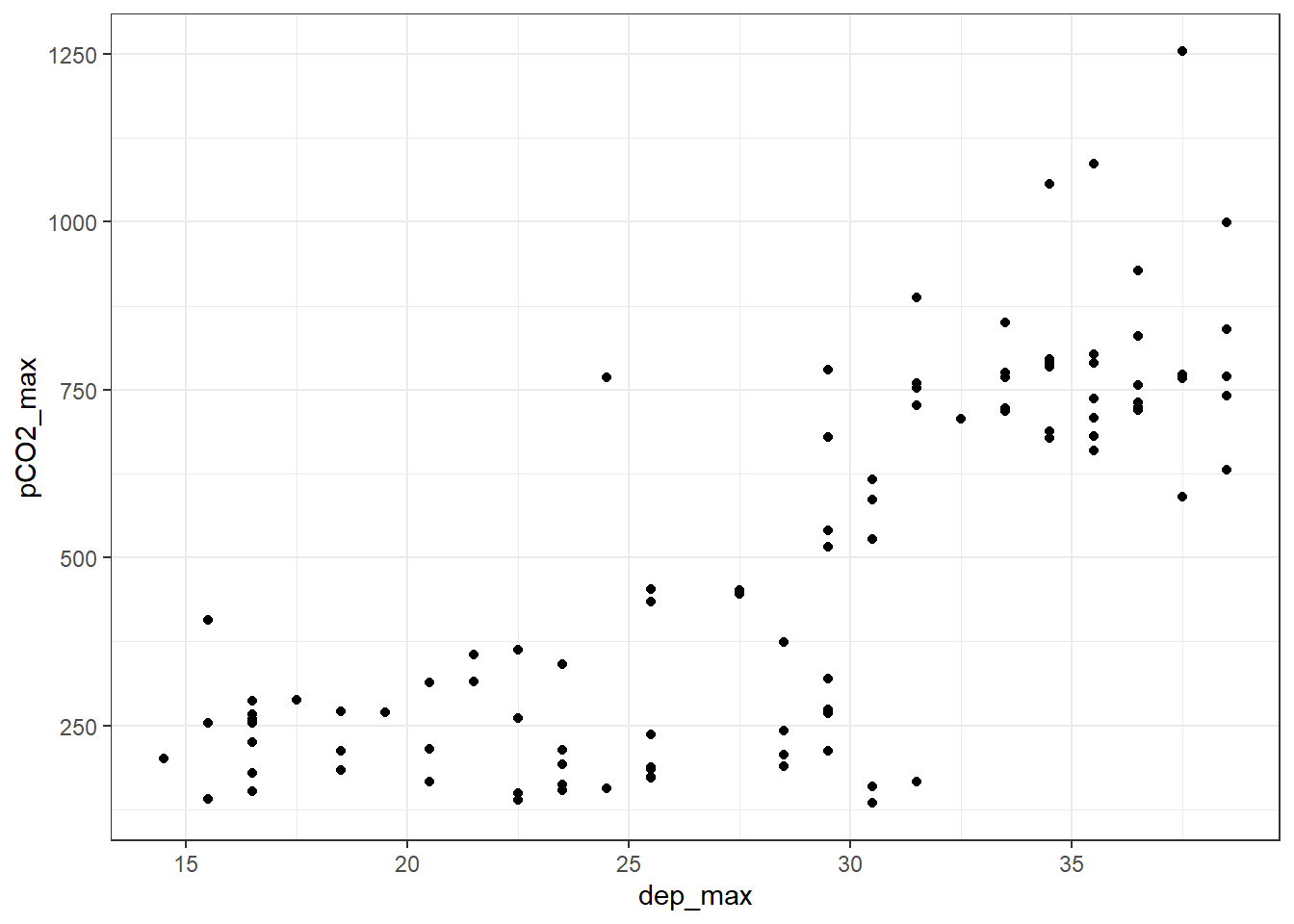

tm_grid_stat %>%

ggplot(aes(dep_max, pCO2_max))+

geom_point()

tm_grid <- full_join(tm_grid, tm_grid_stat)

rm(tm_grid_stat)4.3.1 Define dep and pCO2 thresholds

dep_max_lim <- 25

pCO2_max_lim <- 250Summary metric are restricted to profiles that did not exceed a maximum sampling depth of 25 m and pCO2 of 250 µatm.

tm_grid_shallow <- tm_grid %>%

filter(dep_max <= dep_max_lim,

pCO2_max <= pCO2_max_lim) %>%

group_by(ID, station, tau_factor, correction) %>%

mutate(nr_na = sum(is.na(pCO2_delta))) %>%

ungroup() %>%

filter(nr_na <= 2)

tm_grid_shallow_sum <- tm_grid_shallow %>%

mutate(pCO2_delta_abs = abs(pCO2_delta),

pCO2_delta_rel_abs = abs(pCO2_delta_rel)) %>%

group_by(tau_factor, dep_grid, correction) %>%

summarise(mean = mean(pCO2_delta, na.rm = TRUE),

sd = sd(pCO2_delta, na.rm = TRUE),

mean_abs = mean(pCO2_delta_abs, na.rm = TRUE),

mean_rel = mean(pCO2_delta_rel, na.rm = TRUE),

sd_rel = sd(pCO2_delta_rel, na.rm = TRUE),

mean_rel_abs = mean(pCO2_delta_rel_abs, na.rm = TRUE)) %>%

ungroup() %>%

pivot_longer(cols = sd:mean_rel_abs,

names_to = "estimate", values_to = "pCO2_delta")

tm_equi_delta_sum <- tm_equi_delta %>%

mutate(pCO2_delta_equi_abs = abs(pCO2_delta_equi),

pCO2_delta_equi_rel_abs = abs(pCO2_delta_equi_rel)) %>%

group_by(correction, tau_factor) %>%

summarise(mean = mean(pCO2_delta_equi, na.rm = TRUE),

mean_abs = mean(pCO2_delta_equi_abs, na.rm = TRUE),

mean_rel = mean(pCO2_delta_equi_rel, na.rm = TRUE),

mean_rel_abs = mean(pCO2_delta_equi_rel_abs, na.rm = TRUE)) %>%

ungroup() %>%

pivot_longer(cols = mean:mean_rel_abs,

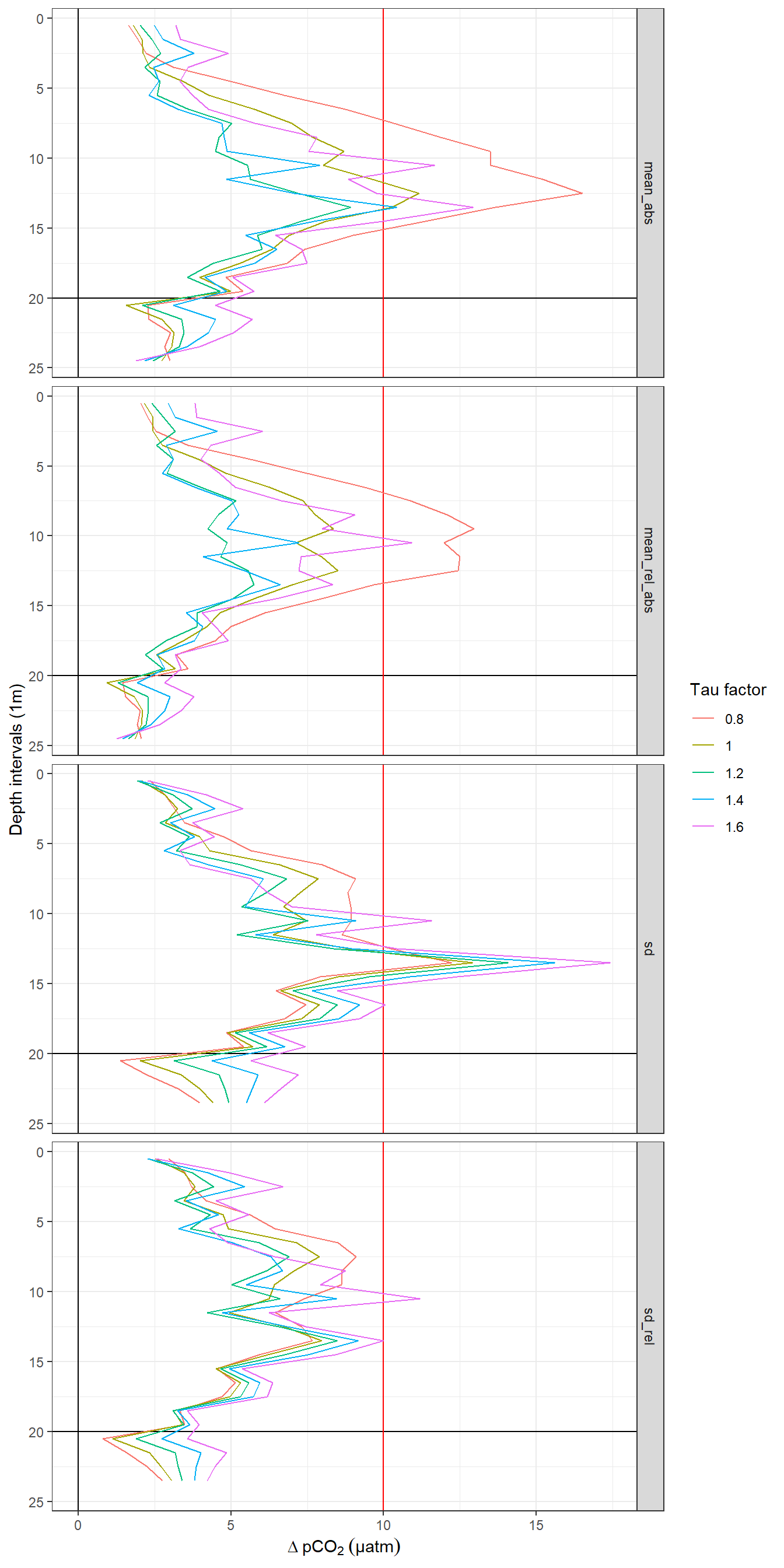

names_to = "estimate", values_to = "dpCO2")tm_grid_shallow_sum %>%

filter(correction == "pCO2_mean",

estimate %in% c("mean_abs", "mean_rel_abs", "sd", "sd_rel")) %>%

ggplot()+

geom_vline(xintercept = 0)+

geom_hline(yintercept = 20)+

geom_vline(xintercept = c(10), col="red")+

geom_path(aes(pCO2_delta, dep_grid, col=as.factor(tau_factor)))+

scale_y_reverse()+

scale_color_discrete(name="Tau factor")+

labs(x=expression(Delta~pCO[2]~(µatm)), y="Depth intervals (1m)")+

facet_grid(estimate~.)

Offset between up- and downcast. Panel columns: Constant and T-dependent tau. Panel rows from top to bottom: Mean of absolute offset, mean of relative absolute offset, standard deviation of offset, standard deviation of relative offset.

# RT_diff_sum_mean_highres <-

# read_csv(here::here("data/_merged_data_files",

# "X_BloomSail_CTD_HydroC_profiles_RT_cast-offset_highres_taufactor_mean.csv")) %>%

# filter(estimate %in% c("mean_abs", "mean_rel_abs"))

tm_grid_shallow_sum_mean <- tm_grid_shallow_sum %>%

group_by(estimate, correction, tau_factor) %>%

summarise(pCO2_delta_mean = mean(pCO2_delta)) %>%

ungroup()

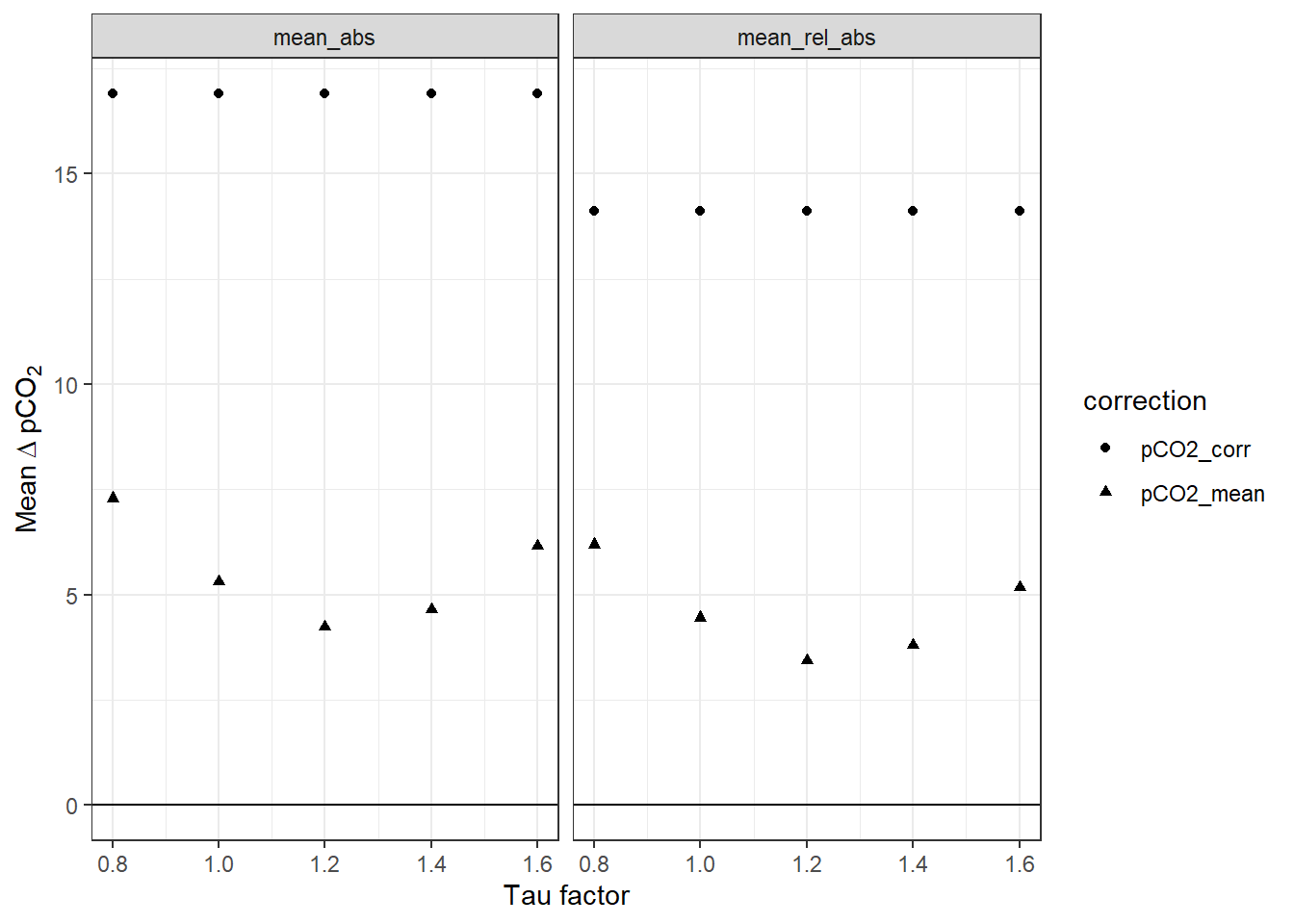

tm_grid_shallow_sum_mean %>%

filter(estimate %in% c("mean_abs", "mean_rel_abs")) %>%

ggplot(aes(tau_factor, pCO2_delta_mean, linetype=correction, shape=correction))+

# geom_line(data = RT_diff_sum_mean_highres,

# aes(tau_factor, mean_dpCO2))+

geom_point()+

geom_hline(yintercept = 0)+

labs(x="Tau factor", y=expression(Mean~Delta~pCO[2]))+

facet_wrap(~estimate)

Mean offset between up- and downcast for all profiles up to 20m water depth. The lines between discrete tau factors result from the same analysis performed with high resolution of the tau factor. Left Panel: Mean absolute offset (µatm). Right panel: Mean relative offset (% of absolute value).

Below we determined the tau factor that corresponds to lowest absolute and relative mean offsets, respectively.

RT_diff_sum_mean_highres %>%

filter(correction == "pCO2_mean", estimate %in% c("mean_abs")) %>%

slice(which.min(mean_dpCO2)) %>%

select(tau_factor, mean_dpCO2) %>%

rename(mean_abs=mean_dpCO2)

RT_diff_sum_mean_highres %>%

filter(correction == "pCO2_mean", estimate == "mean_rel_abs") %>%

slice(which.min(mean_dpCO2)) %>%

select(tau_factor, mean_dpCO2) %>%

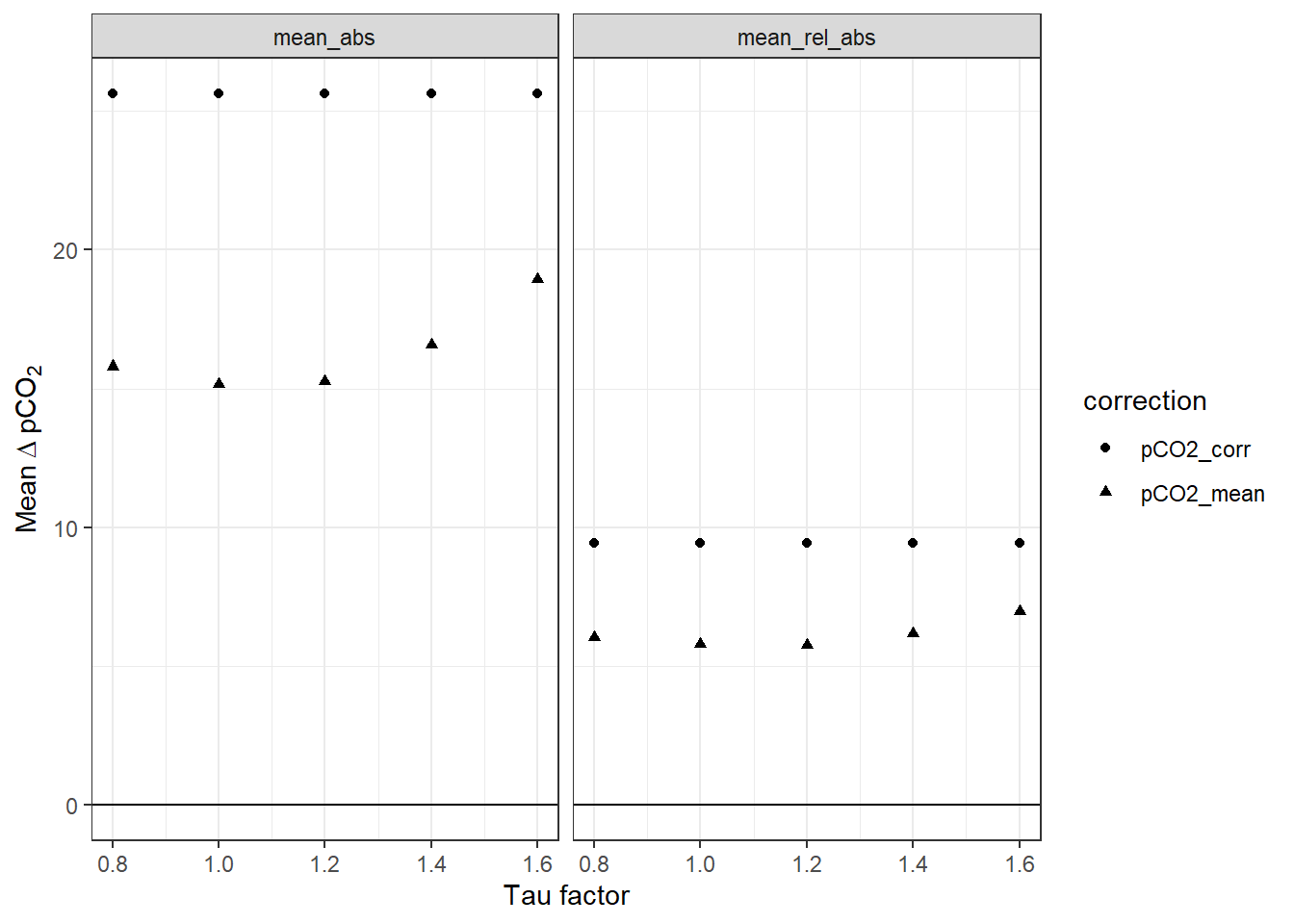

rename(mean_rel_abs=mean_dpCO2)Likewise, we analyse the offset from the pCO2 reference value:

# tm_equi_delta_sum_highres <-

# read_csv(here::here("data/_merged_data_files",

# "X_BloomSail_CTD_HydroC_profiles_RT_reference-offset_highres_taufactor_mean.csv")) %>%

# pivot_longer(cols = 4:7, names_to = "estimate", values_to = "dpCO2") %>%

# filter(estimate %in% c("mean_abs", "mean_rel_abs"))

tm_equi_delta_sum %>%

filter(estimate %in% c("mean_abs", "mean_rel_abs")) %>%

ggplot(aes(tau_factor, dpCO2, linetype=correction, shape=correction))+

# geom_line(data = tm_equi_delta_sum_highres,

# aes(tau_factor, dpCO2))+

geom_point()+

geom_hline(yintercept = 0)+

labs(x="Tau factor", y=expression(Mean~Delta~pCO[2]))+

facet_wrap(~estimate)

Mean pCO2 offset from reference values as a function of the factor applied to tau. The lines between discrete tau factors result from the same analysis performed with high resolution of the tau factor. Left Panel: Mean absolute offset (µatm). Right panel: Mean relative offset (% of absolute value).

Below we determined the tau factor that corresponds to lowest absolute and relative mean offsets, respectively.

tm_equi_delta_sum_highres %>%

filter(correction == "pCO2_mean", estimate %in% c("mean_abs")) %>%

slice(which.min(dpCO2)) %>%

select(tau_factor, dpCO2) %>%

rename(mean_abs=dpCO2)

tm_equi_delta_sum_highres %>%

filter(correction == "pCO2_mean", estimate == "mean_rel_abs") %>%

slice(which.min(dpCO2)) %>%

select(tau_factor, dpCO2) %>%

rename(mean_rel_abs=dpCO2)4.4 Summary plots

i_tau_factor <- "1.2"

cast_dep <- tm %>%

filter(tau_factor == i_tau_factor) %>%

pivot_longer(c(dep, pCO2_corr, pCO2_mean),

names_to = "parameter", values_to = "value")

cast_dep_equi <- tm_pCO2_equi %>%

pivot_longer(c(dep, pCO2_corr), names_to = "parameter", values_to = "value")

tm_sub <- tm %>%

filter(tau_factor == i_tau_factor)

tm_grid_sub <- tm_grid %>%

filter(tau_factor == i_tau_factor)

max_duration <- round(max(cast_dep$duration)/1000,0)*1000

pdf(file=here::here("output/Plots/response_time",

"all_plots.pdf"),

onefile = TRUE, width = 7, height = 10)

for(i_ID in unique(tm$ID)){

for(i_station in unique(tm$station)){

# i_ID <- unique(cast_dep$ID)[1]

# i_station <- unique(cast_dep$station)[1]

i_ID

i_station

if (nrow(cast_dep %>% filter(ID == i_ID, station == i_station)) > 0){

if (nrow(tm %>% filter(ID == i_ID, station == i_station)) > 0){

if (nrow(tm_grid %>% filter(ID == i_ID, station == i_station)) > 0){

cast_dep_equi_sub <- cast_dep_equi %>%

filter(ID == i_ID,

station == i_station)

p_time_series <- cast_dep %>%

filter(ID == i_ID,

station == i_station) %>%

ggplot(aes(duration, value, col=phase))+

geom_point(size=0.5)+

geom_point(data = cast_dep_equi_sub, aes(duration, value), col="black")+

scale_y_reverse()+

scale_x_continuous(breaks = seq(0,6000,500))+

labs(title = str_c("ID: ",i_ID," | Station: ",i_station))+

facet_grid(parameter~., scales = "free_y")+

theme_bw()

p_profile <- tm_sub %>%

filter(ID == i_ID,

station == i_station,

phase %in% c("up", "down")) %>%

ggplot()+

geom_path(aes(pCO2_corr, dep, linetype = phase, col="raw"))+

geom_path(aes(pCO2_mean, dep, linetype = phase, col="corrected"))+

#geom_point(data = equi_cast, aes(pCO2_corr, dep))+

scale_y_reverse()+

coord_cartesian(ylim = c(25,0), xlim = c(70,250))+

scale_color_brewer(palette = "Set1", name="", guide = FALSE)+

scale_linetype(guide = FALSE)+

labs(y="Depth [m]", x="pCO2",

title = "full res")

tm_pCO2_equi_grid_sub <- tm_pCO2_equi_grid %>%

filter(ID == i_ID,

station == i_station)

p_profile_grid <- tm_grid_sub %>%

filter(ID == i_ID,

station == i_station) %>%

arrange(dep_grid) %>%

ggplot()+

geom_path(aes(down, dep_grid, col=correction, linetype="down"))+

geom_path(aes(up, dep_grid, col=correction, linetype ="up"))+

geom_point(data = tm_pCO2_equi_grid_sub, aes(pCO2_equi, dep_grid))+

scale_y_reverse()+

coord_cartesian(ylim = c(25,0), xlim = c(70,250))+

scale_linetype(name="cast")+

scale_color_brewer(palette = "Set1", direction = -1)+

labs(x="pCO2",

title = "1m grid")+

theme(axis.title.y = element_blank(),

axis.text.y = element_blank())

p_delta_abs <- tm_grid %>%

filter(ID == i_ID,

station == i_station,

correction == "pCO2_mean") %>%

arrange(dep_grid) %>%

ggplot(aes(pCO2_delta, dep_grid, col=as.factor(tau_factor)))+

geom_path()+

geom_point()+

scale_y_reverse(breaks=seq(0,40,2))+

scale_color_discrete(name="tau factor", guide=FALSE)+

labs(x = "delta pCO2 [µatm]", y="Depth [m]")+

geom_vline(xintercept = 0)+

geom_vline(xintercept = c(-10,10), col="red")

p_delta_rel <- tm_grid %>%

filter(ID == i_ID,

station == i_station,

correction == "pCO2_mean") %>%

arrange(dep_grid) %>%

ggplot(aes(pCO2_delta_rel, dep_grid, col=as.factor(tau_factor)))+

geom_path()+

geom_point()+

scale_y_reverse(breaks=seq(0,40,2))+

scale_color_discrete(name="tau factor")+

labs(x = "delta pCO2 [% of absolute value]", y="Depth [m]")+

geom_vline(xintercept = 0)+

geom_vline(xintercept = c(-10,10), col="red")+

theme(axis.title.y = element_blank(),

axis.text.y = element_blank())

print(

p_time_series / (p_profile | p_profile_grid) / (p_delta_abs | p_delta_rel)

)

rm(p_time_series, p_profile, p_profile_grid, p_delta_abs, p_delta_rel)

}}}

}

}

dev.off()

rm(cast_dep, cast_dep_equi, cast_dep_equi_sub, i_station, i_ID,

max_duration, tm_pCO2_equi_grid_sub)5 Conclusion

- Taking the temperature dependence of tau into account resulted in a slightly better agreement between up- and downcast, as well as downcast and reference value

- For all quality metrics we find improved agreement for slightly positive tau factor ranging from 1.04 - 1.24

- As the focus is on pCO2 changes in the upper 10 m of the water column, we will apply the tau factor 1.24 leading to the lowest relative offset between up- and downcast

6 Correct entire data set

Finally, the response time correction was applied to the full data set (not only profile data) based on the optimum parameterization determined above.

# Response time correction approach after Bittig --------------------------

RT_corr <- function(c1, c0, dt, tau) {

( 1 / ( 2* (( 1+(2*tau/dt) )^(-1) ))) * (c1 - (1-(2* (( 1+(2*tau/dt) )^(-1) ))) * c0)

}

tm <- read_csv(here::here("data/_merged_data_files/merging_interpolation",

"tm.csv"),

col_types = cols(ID = col_character(),

pCO2_analog = col_double(),

pCO2_corr = col_double(),

Zero = col_factor(),

Flush = col_factor(),

Zero_counter = col_integer(),

deployment = col_integer(),

duration = col_double(),

mixing = col_character(),

lat = col_double(),

lon = col_double()))

tm <- tm %>%

group_by(ID, station) %>%

mutate(duration = as.numeric(date_time - min(date_time)),

pump_power = if_else(date_time < ymd_hms("2018-07-17;13:08:34"), "1W", "8W")) %>%

arrange(date_time)

tau_fit <- read_csv(here::here("data/_merged_data_files/response_time",

"tau_fit.csv"))

# Assign T-dependent response time (tau) values ----------------------------------------------

tau_fit <- tau_fit %>%

rename(tau_intercept = `(Intercept)`, tau_slope=tem)

tm <- full_join(tm, tau_fit)

#Assign tau

tm <- tm %>%

mutate(tau = tau_intercept + tau_slope *tem) %>%

select(-tau_intercept, -tau_slope)

# Prepare data set for RT correction --------------------------------------

tm <- tm %>%

group_by(ID, station) %>%

arrange(date_time) %>%

mutate(dt = as.numeric(as.character(date_time - lag(date_time)))) %>%

ungroup()

# measurement frequency

freq <- tm %>%

filter(dt < 13) %>%

group_by(ID) %>%

summarise(dt_mean = round(mean(dt, na.rm = TRUE),0))

tm <- full_join(tm, freq)

# tau factors

tm <- expand_grid(tm, tau_factor = seq(1.2))

tm <- tm %>%

mutate(tau_test = tau*tau_factor)

# Apply RT correction to entire data set

for(i_ID in unique(tm$ID)){

#i_ID <- "180716"

freq_sub <- freq %>% filter(ID == i_ID) %>% pull(dt_mean)

window <- 30 / freq_sub

rolling_mean <- rollify(~mean(.x, na.rm = TRUE), window = window)

tm_sub <- tm %>%

filter(ID == i_ID) %>%

group_by(station) %>%

mutate(pCO2_RT = RT_corr(pCO2_corr, lag(pCO2_corr), dt, tau_test),

pCO2_RT = if_else(pCO2_RT %in% c(Inf, -Inf), NaN, pCO2_RT),

window = window,

pCO2_mean = rolling_mean(pCO2_RT)

#pCO2_RT_median = rolling_median(pCO2_RT)

) %>%

ungroup()

# time shift RT corrected data

shift <- as.integer(as.character(window/2))

tm_sub <- tm_sub %>%

group_by(station) %>%

mutate(pCO2_mean = lead(pCO2_mean, shift)) %>%

ungroup()

if (exists("tm_corr")){tm_corr <- bind_rows(tm_corr, tm_sub)}

else{tm_corr <- tm_sub}

rm(tm_sub, freq_sub, rolling_mean, shift, window)

}

rm(RT_corr, i_ID, freq)

tm_corr <- tm_corr %>%

select(-c(tau, tau_factor, tau_test, window))

tm_corr %>%

write_csv(here::here("data/_merged_data_files/response_time",

"tm_RT_all.csv"))

rm(tm, tm_corr)7 Sensitivity considerations

A change in DIC of 1 µmol kg-1 corresponds to a change in pCO2 of around 1 µatm, in the Central Baltic Sea at a pCO2 of around 100 µatm (summertime conditions).

df <- data.frame(cbind(

(c(1720)),

(c(7))))

Tem <- seq(5,25,5)

pCO2<-seq(50,500,20)

df<-merge(df, Tem)

names(df) <- c("AT", "S", "Tem")

df<-merge(df, pCO2)

names(df) <- c("AT", "S", "Tem", "pCO2")

df$AT<-df$AT*1e-6

df$DIC<-carb(flag=24, var1=df$pCO2, var2=df$AT, S=df$S, T=df$Tem, k1k2="m10", kf="dg", pHscale="T")[,16]

df$pCO2.corr<-carb(flag=15, var1=df$AT, var2=df$DIC, S=df$S, T=df$Tem, k1k2="m10", kf="dg", pHscale="T")[,9]

df$pCO2.2<-df$pCO2.corr + 25

df$DIC.2<-carb(flag=24, var1=df$pCO2.2, var2=df$AT, S=df$S, T=df$Tem, k1k2="m10", kf="dg", pHscale="T")[,16]

df$ratio<-(df$pCO2.2-df$pCO2.corr)/(df$DIC.2*1e6-df$DIC*1e6)

df %>%

ggplot(aes(pCO2, ratio, col=as.factor(Tem)))+

geom_line()+

scale_color_viridis_d(option = "C",name="Tem [°C]")+

labs(x=expression(pCO[2]~(µatm)), y=expression(Delta~pCO[2]~"/"~Delta~DIC~(µatm~µmol^{-1}~kg)))+

scale_y_continuous(limits = c(0,8), breaks = seq(0,10,1))

pCO2 sensitivity to changes in DIC.

rm(df, Tem, pCO2)8 Open tasks / questions

- Condering the summary metrics: If the standard deviation of the offset between up- and downcast is as large as the offset itself, does it make sense to apply the tau factor? In other words: Does it make the profiles significantly better?

- Clean workspace for plotting code after RT correction

- include high res tau factor output

sessionInfo()R version 3.6.3 (2020-02-29)

Platform: i386-w64-mingw32/i386 (32-bit)

Running under: Windows 10 x64 (build 18363)

Matrix products: default

locale:

[1] LC_COLLATE=English_Germany.1252 LC_CTYPE=English_Germany.1252

[3] LC_MONETARY=English_Germany.1252 LC_NUMERIC=C

[5] LC_TIME=English_Germany.1252

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] patchwork_1.0.0 tibbletime_0.1.3 lubridate_1.7.4 broom_0.5.5

[5] seacarb_3.2.13 oce_1.2-0 gsw_1.0-5 testthat_2.3.2

[9] forcats_0.5.0 stringr_1.4.0 dplyr_0.8.5 purrr_0.3.3

[13] readr_1.3.1 tidyr_1.0.2 tibble_3.0.0 ggplot2_3.3.0

[17] tidyverse_1.3.0 workflowr_1.6.1

loaded via a namespace (and not attached):

[1] Rcpp_1.0.4 whisker_0.4 knitr_1.28 xml2_1.3.0

[5] magrittr_1.5 splines_3.6.3 hms_0.5.3 rvest_0.3.5

[9] tidyselect_1.0.0 viridisLite_0.3.0 here_0.1 colorspace_1.4-1

[13] lattice_0.20-41 R6_2.4.1 rlang_0.4.5 fansi_0.4.1

[17] xfun_0.12 dbplyr_1.4.2 modelr_0.1.6 withr_2.1.2

[21] git2r_0.26.1 ellipsis_0.3.0 htmltools_0.4.0 assertthat_0.2.1

[25] rprojroot_1.3-2 digest_0.6.25 lifecycle_0.2.0 Matrix_1.2-18

[29] haven_2.2.0 rmarkdown_2.1 compiler_3.6.3 cellranger_1.1.0

[33] pillar_1.4.3 scales_1.1.0 backports_1.1.5 generics_0.0.2

[37] jsonlite_1.6.1 httpuv_1.5.2 pkgconfig_2.0.3 rstudioapi_0.11

[41] munsell_0.5.0 highr_0.8 httr_1.4.1 tools_3.6.3

[45] grid_3.6.3 nlme_3.1-145 gtable_0.3.0 mgcv_1.8-31

[49] utf8_1.1.4 DBI_1.1.0 cli_2.0.2 readxl_1.3.1

[53] yaml_2.2.1 crayon_1.3.4 RColorBrewer_1.1-2 later_1.0.0

[57] farver_2.0.3 promises_1.1.0 fs_1.4.0 vctrs_0.2.4

[61] glue_1.3.2 evaluate_0.14 labeling_0.3 reprex_0.3.0

[65] stringi_1.4.6