Data wrangling for Class Project

Maggie Douglas

04 March, 2026

Last updated: 2026-03-04

Checks: 6 passed, 1 warning

Knit directory: dickinson_power/

This reproducible R Markdown analysis was created with workflowr (version 1.7.1). The Checks tab describes the reproducibility checks that were applied when the results were created. The Past versions tab lists the development history.

The R Markdown file has unstaged changes. To know which version of the R Markdown file created these results, you’ll want to first commit it to the Git repo. If you’re still working on the analysis, you can ignore this warning. When you’re finished, you can run wflow_publish to commit the R Markdown file and build the HTML.

Great job! The global environment was empty. Objects defined in the global environment can affect the analysis in your R Markdown file in unknown ways. For reproducibility, it’s best to always run the code in an empty environment.

The command set.seed(20260107) was run prior to running the code in the R Markdown file. Setting a seed ensures that any results that rely on randomness, e.g. subsampling or permutations, are reproducible.

Great job! Recording the operating system, R version, and package versions is critical for reproducibility.

Nice! There were no cached chunks for this analysis, so you can be confident that you successfully produced the results during this run.

Great job! Using relative paths to the files within your workflowr project makes it easier to run your code on other machines.

Great! You are using Git for version control. Tracking code development and connecting the code version to the results is critical for reproducibility.

The results in this page were generated with repository version 5c8882c. See the Past versions tab to see a history of the changes made to the R Markdown and HTML files.

Note that you need to be careful to ensure that all relevant files for the analysis have been committed to Git prior to generating the results (you can use wflow_publish or wflow_git_commit). workflowr only checks the R Markdown file, but you know if there are other scripts or data files that it depends on. Below is the status of the Git repository when the results were generated:

Ignored files:

Ignored: .DS_Store

Ignored: .Rhistory

Ignored: .Rproj.user/

Ignored: analysis/.DS_Store

Ignored: data/.DS_Store

Ignored: data/FY25 Main Meter Data.xlsx

Ignored: data/building_list_FY25_updated.xlsx

Ignored: data/graph_data_life_exp.csv

Ignored: data/housing_counts.csv

Ignored: keys/.DS_Store

Ignored: output/annual_kwh.csv

Ignored: output/building_check.csv

Ignored: output/building_check.xlsx

Ignored: output/daily_kwh.csv

Ignored: output/kwh_annual.csv

Ignored: output/kwh_annual_2026-03-04.csv

Ignored: output/kwh_annual_20260225.csv

Ignored: output/kwh_annual_20260226.csv

Ignored: output/kwh_daily.csv

Ignored: output/kwh_daily_2026-03-04.csv

Ignored: output/kwh_daily_20260225.csv

Ignored: output/kwh_daily_20260226.csv

Ignored: output/kwh_main_annual.csv

Ignored: output/kwh_main_daily.csv

Unstaged changes:

Modified: analysis/data_wrangling_final.Rmd

Note that any generated files, e.g. HTML, png, CSS, etc., are not included in this status report because it is ok for generated content to have uncommitted changes.

These are the previous versions of the repository in which changes were made to the R Markdown (analysis/data_wrangling_final.Rmd) and HTML (docs/data_wrangling_final.html) files. If you’ve configured a remote Git repository (see ?wflow_git_remote), click on the hyperlinks in the table below to view the files as they were in that past version.

| File | Version | Author | Date | Message |
|---|---|---|---|---|
| Rmd | 5c8882c | maggiedouglas | 2026-03-04 | try again |
| html | 5c8882c | maggiedouglas | 2026-03-04 | try again |
| Rmd | 81a318c | maggiedouglas | 2026-03-04 | update data to add period of the year + example boxplot |
| html | 81a318c | maggiedouglas | 2026-03-04 | update data to add period of the year + example boxplot |
| html | 2a86883 | maggiedouglas | 2026-03-04 | Build site. |
| html | e50511f | maggiedouglas | 2026-03-04 | attempt to update website |
| html | 5f7e5dd | maggiedouglas | 2026-03-04 | Build site. |
| Rmd | bfe7b73 | maggiedouglas | 2026-03-04 | fix data wrangling! |
| html | bfe7b73 | maggiedouglas | 2026-03-04 | fix data wrangling! |
| Rmd | d04b276 | maggiedouglas | 2026-03-04 | fix data wrangling! |
| html | d04b276 | maggiedouglas | 2026-03-04 | fix data wrangling! |
| Rmd | 1ea78ae | maggiedouglas | 2026-02-27 | update summary code |
| html | 1ea78ae | maggiedouglas | 2026-02-27 | update summary code |
| Rmd | e752845 | maggiedouglas | 2026-02-26 | adjust data processing + building summary |
| html | e752845 | maggiedouglas | 2026-02-26 | adjust data processing + building summary |
| Rmd | 08cd7e1 | maggiedouglas | 2026-02-25 | update wrangling script |
| html | 08cd7e1 | maggiedouglas | 2026-02-25 | update wrangling script |
| Rmd | 8c6712f | maggiedouglas | 2026-02-24 | update script to fix issues |
| html | 8c6712f | maggiedouglas | 2026-02-24 | update script to fix issues |
| Rmd | 2fef649 | maggiedouglas | 2026-02-24 | updated to integrate individual meter data |
| html | 2fef649 | maggiedouglas | 2026-02-24 | updated to integrate individual meter data |
| Rmd | a379c87 | maggiedouglas | 2026-02-23 | fixed issue with East College |
| html | a379c87 | maggiedouglas | 2026-02-23 | fixed issue with East College |
| Rmd | 1e465a5 | maggiedouglas | 2026-02-23 | updated data and building case study with new info |
| html | 1e465a5 | maggiedouglas | 2026-02-23 | updated data and building case study with new info |
| html | 10507be | maggiedouglas | 2026-02-14 | Build site. |
| html | 661b13b | maggiedouglas | 2026-02-14 | Build site. |
| Rmd | dfaee9a | maggiedouglas | 2026-02-14 | Integrate occupancy data |
| html | dfaee9a | maggiedouglas | 2026-02-14 | Integrate occupancy data |
| html | 40c81af | maggiedouglas | 2026-02-14 | Build site. |
| Rmd | f2835df | maggiedouglas | 2026-02-14 | adjust gitignore and improve data wrangling and main meter case study |
| html | f2835df | maggiedouglas | 2026-02-14 | adjust gitignore and improve data wrangling and main meter case study |

Purpose

This code is meant to match PPL data to building information and reorganize it to generate electricity data by building (or combinations of buildings for those metered together).

The main steps involved are:

- Read in the PPL electricity data, building information data, and a key to connect them by name

- Create and store an annual summary of the electricity data by meter

- Restructure the building data to generate a single row for the Main Meter and Weis Meter buildings

- Join PPL electricity data (daily and annual) to building information using a key that connects the two by building name

- Aggregate electricity and square footage data by building

- Inspect the data using a table

- Generate a few summary graphs

- Export the cleaned and reorganized data for downstream analyses
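The core of the steps listed above (summarize by meter, map meters to buildings with a key, then aggregate by building) can be sketched on toy data. This is a minimal illustration, not the project's actual data: the three small data frames and their values are hypothetical, although the column names mirror those used later in this script.

```r
library(dplyr)

# Hypothetical toy inputs; column names mirror those used later in this script
kwh_toy <- data.frame(
  meter_origin = c("A St", "A St", "B St", "C St"),
  total_kwh    = c(10, 12, 5, 7)
)
key_toy <- data.frame(
  meter_origin = c("A St", "B St", "C St"),
  NAME         = c("Hall 1", "Hall 2", "Hall 2")  # two meters serve Hall 2
)
buildings_toy <- data.frame(
  NAME = c("Hall 1", "Hall 2"),
  sqft = c(1000, 2500)
)

by_building <- kwh_toy %>%
  group_by(meter_origin) %>%
  summarize(kwh = sum(total_kwh), .groups = "drop") %>%  # total per meter
  left_join(key_toy, by = "meter_origin") %>%            # meter -> building name
  group_by(NAME) %>%
  summarize(kwh = sum(kwh), .groups = "drop") %>%        # combine meters per building
  left_join(buildings_toy, by = "NAME")                  # attach square footage

print(by_building)
```

In this toy case Hall 2 ends up with the combined total of its two meters, which is the behavior the key-based join is meant to provide for buildings served by more than one meter.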

Load libraries + data

library(tidyverse) # load tidyverse

── Attaching core tidyverse packages ──────────────────────── tidyverse 2.0.0 ──

✔ dplyr 1.1.4 ✔ readr 2.1.5

✔ forcats 1.0.0 ✔ stringr 1.5.1

✔ ggplot2 3.5.1 ✔ tibble 3.2.1

✔ lubridate 1.9.3 ✔ tidyr 1.3.1

✔ purrr 1.0.2

── Conflicts ────────────────────────────────────────── tidyverse_conflicts() ──

✖ dplyr::filter() masks stats::filter()

✖ dplyr::lag() masks stats::lag()

ℹ Use the conflicted package (<http://conflicted.r-lib.org/>) to force all conflicts to become errors

library(RColorBrewer)

# load electricity data + reformat date

kwh <- read.csv("./data/FY25 PPL Electricity Data.csv", strip.white = T) %>%

mutate(date = mdy(date)) %>%

complete(nesting(account_number, meter_origin, meter_number), date)

kwh_sub <- read.csv("./output/kwh_main_daily.csv", strip.white = T) %>%

mutate(date = ymd(date))

# store temp data for later

temp <- select(kwh, date, ave_temp) %>%

unique()

# load building data + clean up building names

buildings <- read.csv("./keys/fy25_building_list_updated.csv",

strip.white = T) %>%

mutate(NAME = str_remove_all(NAME, "/"),

NAME = str_replace_all(NAME, "\\s+", " ")) # collapse runs of whitespace into a single space

# load keys to link datasets

key <- read.csv("./keys/meter_building_key.csv", strip.white = T) %>%

mutate(NAME = str_remove_all(NAME, "/"),

NAME = str_replace_all(NAME, "\\s+", " ")) # collapse runs of whitespace into a single space

key_sub <- read.csv("./keys/submeter_building_key.csv", strip.white = T) %>%

mutate(NAME = str_remove_all(NAME, "/"),

NAME = str_replace_all(NAME, "\\s+", " ")) # collapse runs of whitespace into a single space

key_occ <- read.csv("./keys/occupancy_key.csv",

strip.white = T) %>%

mutate(NAME = str_remove_all(NAME, "/"),

NAME = str_replace_all(NAME, "\\s+", " ")) # collapse runs of whitespace into a single space

# load occupancy data + generate mean for AY

occupants <- read.csv("./data/housing_counts.csv",

strip.white = T) %>%

mutate(sem_yr = paste(semester, year)) %>% # create semester column

filter(sem_yr %in% c("Fall 2024", "Spring 2025")) %>% # filter to FY25

group_by(building_name) %>%

summarize(occupants = mean(occupants, na.rm = T)) %>% # generate mean occupants across semester

left_join(key_occ, by = "building_name") %>% # join to building names

group_by(NAME) %>%

summarize(occupants = sum(occupants, na.rm = T)) # sum across buildings with multiple rows

# store annual totals for kWh

kwh_annual <- kwh %>%

filter(!is.na(total_kwh)) %>%

group_by(meter_origin) %>%

summarize(kwh = sum(total_kwh, na.rm = T),

days_perc = (n()/365)*100)

kwh_sub_annual <- kwh_sub %>%

filter(!is.na(kwh)) %>%

group_by(building) %>%

summarize(kwh = sum(kwh, na.rm = T),

days_perc = (n()/365)*100)

Check key data

str(kwh)

tibble [56,210 × 6] (S3: tbl_df/tbl/data.frame)

$ account_number: num [1:56210] 2.38e+08 2.38e+08 2.38e+08 2.38e+08 2.38e+08 ...

$ meter_origin : chr [1:56210] "131 S College St" "131 S College St" "131 S College St" "131 S College St" ...

$ meter_number : int [1:56210] 300087452 300087452 300087452 300087452 300087452 300087452 300087452 300087452 300087452 300087452 ...

$ date : Date[1:56210], format: "2024-07-01" "2024-07-02" ...

$ total_kwh : num [1:56210] 77 70.6 85.7 100.3 106.6 ...

$ ave_temp : int [1:56210] 69 73 78 81 84 86 84 84 86 87 ...

str(buildings)

'data.frame': 135 obs. of 14 variables:

$ TYPE : chr "Academic" "Academic" "Academic" "Academic" ...

$ type_new : chr "Academic" "Academic" "Academic" "Academic" ...

$ banner_code: chr "1110" "1540" "1035" "1810" ...

$ NAME : chr "162-164 Dickinson Ave." "46 S. West St." "57 S. College" "Green Valley Sanctuary" ...

$ occupant : chr "DEAL Archeology Labs" "Music office/rehearsal space" "Education Dept. Offices" "Research Facility" ...

$ address : chr "162-164 Dickinson Ave." "46 S. West St." "57 S. College St." "" ...

$ date_constr: chr "" "" "" "" ...

$ date_acqd : int 1998 1982 1979 1966 NA NA NA 1950 NA NA ...

$ date_reno : int 2010 NA NA NA 1997 2009 1940 2002 2001 NA ...

$ sqft : int 2500 1775 4576 2500 4000 29133 33692 11039 22000 112800 ...

$ rental : int 0 0 0 0 1 0 0 0 0 0 ...

$ main_meter : int 0 0 0 0 0 1 1 1 1 1 ...

$ main_disagg: int 0 0 0 0 0 1 1 1 1 1 ...

$ weis_meter : int 0 0 0 0 0 0 0 0 0 0 ...

str(occupants)

tibble [66 × 2] (S3: tbl_df/tbl/data.frame)

$ NAME : chr [1:66] "100 S. West St." "133 N. College St." "133 W. High St. (2, 5)" "135 Cedar St." ...

$ occupants: num [1:66] 19 5 4.5 4 8 20 7 8 4 6 ...

Restructure building data

- Generate total square footage for buildings on the Main Meter and fill in values for other variables

- Similar operation for buildings on the Weis Meter

- Filter building info to those with individual meters and then add back in the Main Meter and Weis aggregated information
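The restructuring described above can be sketched on a small example. The building list below is hypothetical; only the column names (`NAME`, `type_new`, `sqft`, `main_meter`) and the "Main Meter" labels follow this script. The idea is that every building behind a shared meter is collapsed into one summary row, while individually metered buildings keep one row each.

```r
library(dplyr)

# Hypothetical building list; main_meter flags buildings behind the shared meter
buildings_toy <- data.frame(
  NAME       = c("Hall 1", "Hall 2", "House 3"),
  type_new   = c("Academic", "Academic", "Res Hall"),
  sqft       = c(29000, 33000, 2500),
  main_meter = c(1, 1, 0)
)

# One summary row standing in for every building on the shared meter
main_row <- buildings_toy %>%
  filter(main_meter == 1) %>%
  summarize(sqft = sum(sqft, na.rm = TRUE)) %>%
  mutate(type_new = "Main Meter",
         NAME     = "Main Meter",
         meter    = "Main Meter - Total") %>%
  select(type_new, NAME, sqft, meter)

# Buildings with their own meter keep one row each
individual <- buildings_toy %>%
  filter(main_meter == 0) %>%
  mutate(meter = "Individual") %>%
  select(type_new, NAME, sqft, meter)

lookup <- bind_rows(individual, main_row)
print(lookup)
```

Because the shared meter reports a single kWh series, summing square footage across its buildings gives a denominator that matches what the meter actually measures.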

# Generate lookup table for each meter status

buildings_main <- buildings %>%

filter(main_meter == 1) %>% # filter to main meter buildings

summarize(sqft = sum(sqft, na.rm = T)) %>% # sum sqft for these buildings

mutate(meter = "Main Meter - Total",

NAME = "Main Meter",

type_new = "Main Meter") %>%

select(type_new, NAME, sqft, meter)

buildings_weis <- buildings %>%

filter(weis_meter == 1) %>% # filter to weis buildings

summarize(sqft = sum(sqft, na.rm = T)) %>%

mutate(meter = "Weis Meter - Total",

NAME = "Weis Meter",

type_new = "Weis Meter") %>%

select(type_new, NAME, sqft, meter)

buildings_agg <- rbind(buildings_main, buildings_weis)

buildings_individual <- buildings %>%

filter(main_meter == 0 & weis_meter == 0) %>% # keep only buildings on individual meters

mutate(meter = "Individual") %>%

select(meter, NAME, type_new, occupant, address, date_constr, date_acqd, date_reno, sqft, rental) %>% # select relevant columns

# rbind(buildings_main, buildings_weis) %>%

select(type_new, NAME, sqft, meter)

buildings_submeter <- buildings %>%

filter(main_disagg == 1) %>% # keep only submetered buildings (main_disagg == 1)

mutate(meter = "Submeter") %>%

select(meter, NAME, type_new, occupant, address, date_constr, date_acqd, date_reno, sqft, rental) %>% # select relevant columns

# rbind(buildings_main, buildings_weis) %>%

select(type_new, NAME, sqft, meter)

# Store summary of building meter status

buildings_sum <- buildings %>%

mutate(meter = ifelse(weis_meter == 1, "Weis Meter",

ifelse(main_meter == 1 & main_disagg == 0, "Main Meter",

ifelse(main_disagg == 1, "Submeter", "Individual"))))

Wrangle datasets

Annual totals

# generate annual summary for individually metered buildings

joined_individual <- kwh_annual %>%

left_join(key, by = "meter_origin") %>% # join to key to match meters to building names

right_join(buildings_individual, by = "NAME", relationship = "many-to-one") %>% # join to building info by name

group_by(type_new, NAME, days_perc, meter) %>% # group by building

summarize(kwh = sum(kwh, na.rm = T), # sum kwh by building

sqft = mean(sqft, na.rm = T)) %>% # take mean to preserve sqft data

mutate(type = type_new) %>%

left_join(occupants, by = "NAME") %>%

ungroup() %>%

mutate_all(~ifelse(is.nan(.), NA, .)) %>%

select(type, meter, NAME, days_perc, kwh, sqft, occupants)

`summarise()` has grouped output by 'type_new', 'NAME', 'days_perc'. You can override using the `.groups` argument.

# generate annual summary for submetered buildings

joined_sub <- kwh_sub_annual %>%

left_join(key_sub, by = "building") %>%

left_join(buildings_submeter, by = "NAME", relationship = "many-to-one") %>% # join to building info by name

filter(building != "CHW_Base") %>% # remove duplicate CHW value - not sure what this is?

group_by(type_new, NAME, days_perc, meter) %>% # group by building

summarize(kwh = sum(kwh, na.rm = T), # sum kwh by building

sqft = mean(sqft, na.rm = T)) %>% # preserve sqft as is

mutate(type = type_new) %>%

# filter(!is.na(type_new)) %>% # filter out those with NA for type

left_join(occupants, by = "NAME") %>%

ungroup() %>%

mutate_all(~ifelse(is.nan(.), NA, .)) %>%

select(type, meter, NAME, days_perc, kwh, sqft, occupants)

`summarise()` has grouped output by 'type_new', 'NAME', 'days_perc'. You can override using the `.groups` argument.

# generate annual summary for main and weis meters

joined_agg <- kwh_annual %>%

left_join(key, by = "meter_origin") %>%

right_join(buildings_agg, by = "NAME") %>%

group_by(type_new, NAME, days_perc, meter) %>% # group by building

summarize(kwh = sum(kwh, na.rm = T), # sum kwh by building

sqft = mean(sqft, na.rm = T)) %>% # preserve sqft as is

mutate(type = type_new) %>%

# filter(!is.na(type_new)) %>% # filter out those with NA for type

left_join(occupants, by = "NAME") %>%

ungroup() %>%

mutate_all(~ifelse(is.nan(.), NA, .)) %>%

select(type, meter, NAME, days_perc, kwh, sqft, occupants)

`summarise()` has grouped output by 'type_new', 'NAME', 'days_perc'. You can override using the `.groups` argument.

# combine into one data frame

joined_full <- rbind(joined_individual, joined_sub, joined_agg) %>%

filter(kwh != 0) %>%

mutate(kwh_corr = kwh/(days_perc/100)) %>% # estimate kwh for all days in the year

arrange(type, meter) %>%

select(type, meter, NAME, days_perc, kwh, kwh_corr, sqft, occupants)
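The `kwh_corr` column scales each annual total by its data coverage. As a worked example with made-up numbers (the meter and its values are hypothetical), a meter with readings on 292 of 365 days has 80% coverage, so its observed total is divided by 0.8 to estimate a full year:

```r
# Hypothetical meter with readings on 292 of 365 days
kwh_observed   <- 8000
days_with_data <- 292

days_perc <- (days_with_data / 365) * 100      # percent of the year with data
kwh_corr  <- kwh_observed / (days_perc / 100)  # scale up to a full year

print(days_perc)
print(kwh_corr)
```

This scaling implicitly assumes that usage on the missing days resembles the average observed day, so it is likely to be less reliable when the gaps cluster in one season.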

# store lookup for data coverage

data_cov <- joined_full %>%

select(NAME, days_perc)

Daily data

# generate daily summary for individually metered buildings

daily_individual <- kwh %>%

left_join(key, by = "meter_origin") %>% # join to key to match meters to building names

right_join(buildings_individual, by = "NAME", relationship = "many-to-many") %>% # join to building info by name

group_by(type_new, NAME, date, meter) %>% # group by building

summarize(kwh = sum(total_kwh, na.rm = T), # sum kwh by building

sqft = mean(sqft, na.rm = T)) %>%

mutate(type = type_new) %>%

ungroup() %>%

mutate_all(~ifelse(is.nan(.), NA, .)) %>% # convert NaN to NA

select(type, meter, date, NAME, kwh, sqft) %>%

complete(nesting(type, meter, NAME), date) %>% # restore NA values for missing date-building combos

filter(!is.na(date)) # remove rows without value for date

`summarise()` has grouped output by 'type_new', 'NAME', 'date'. You can override using the `.groups` argument.

# generate daily summary for submetered buildings

daily_sub <- kwh_sub %>%

left_join(key_sub, by = "building") %>%

left_join(buildings_submeter, by = "NAME", relationship = "many-to-one") %>% # join to building info by name

filter(building != "CHW_Base") %>% # remove duplicate CHW value - not sure what this is?

group_by(type_new, NAME, date, meter) %>% # group by building

summarize(kwh = sum(kwh, na.rm = T),

sqft = mean(sqft, na.rm = T)) %>%

mutate(type = type_new) %>%

filter(!is.na(type_new)) %>%

ungroup() %>%

select(type, meter, date, NAME, kwh, sqft) %>%

mutate_all(~ifelse(is.nan(.), NA, .)) %>% # convert NaN to NA

mutate(kwh = ifelse(kwh == 0, NA, kwh)) %>% # restore NA values for missing date-building combos

filter(!is.na(date)) # remove rows without value for date

`summarise()` has grouped output by 'type_new', 'NAME', 'date'. You can override using the `.groups` argument.

# generate daily summary for main and weis meters

daily_agg <- kwh %>%

left_join(key, by = "meter_origin") %>%

right_join(buildings_agg, by = "NAME") %>%

group_by(type_new, NAME, date, meter) %>% # group by building

summarize(kwh = sum(total_kwh, na.rm = T), # sum kwh by building

sqft = mean(sqft, na.rm = T)) %>% # preserve sqft as is

mutate(type = type_new) %>%

ungroup() %>%

mutate_all(~ifelse(is.nan(.), NA, .)) %>%

select(type, meter, date, NAME, kwh, sqft)

`summarise()` has grouped output by 'type_new', 'NAME', 'date'. You can override using the `.groups` argument.

# generate complete daily dataset

daily_full <- rbind(daily_individual, daily_agg, daily_sub) %>%

mutate(date = as.Date(date)) %>%

arrange(type, meter, NAME, date) %>%

select(type, meter, NAME, date, kwh, sqft) %>%

left_join(occupants, by = "NAME") %>%

left_join(data_cov, by = "NAME") %>%

merge(temp, by = "date") %>% # this join duplicates rows, likely because temp holds an extra NA-temperature row for dates filled in by complete()

filter(!is.na(ave_temp)) %>% # remove duplicate rows

mutate(period = ifelse((date > ymd("2024-08-28") & date < ymd("2024-12-21")), "Fall",

ifelse((date > ymd("2025-01-07") & date < ymd("2025-05-18")), "Spring",

ifelse(date >= ymd("2024-12-21") & date <= ymd("2025-01-07"), "Winter", "Summer")))) %>% # boundary dates count as Winter rather than falling through to Summer

select(type, meter, NAME, days_perc, sqft, occupants, period, date, kwh, ave_temp) %>%

arrange(NAME)

Data availability by type

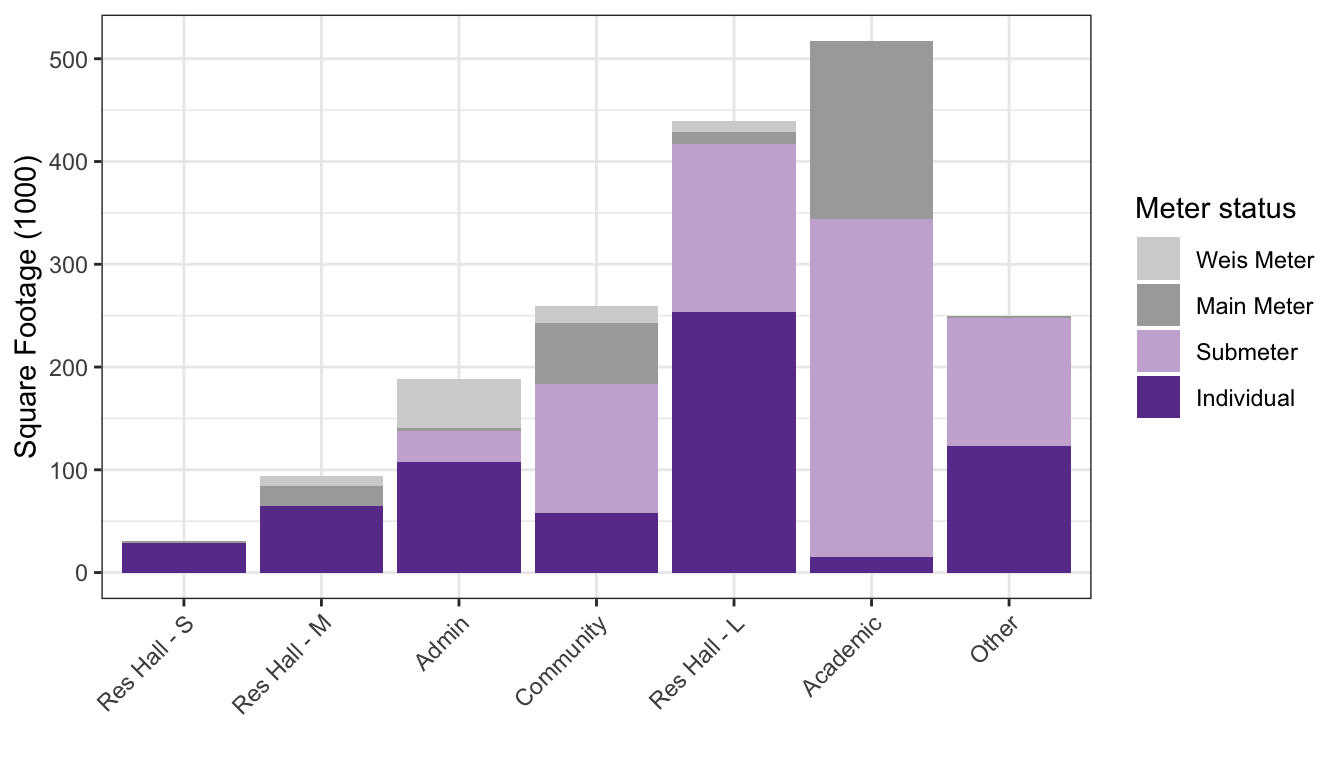

buildings_sum$meter <- factor(buildings_sum$meter,

levels = c("Weis Meter", "Main Meter",

"Submeter", "Individual"))

pal <- c("lightgrey","darkgrey","#CAB2D6","#6A3D9A")

buildings_graph <- buildings_sum %>%

filter(!is.na(meter) & !type_new %in% c("Res Hall - U","Non-building","Production","Mixed"))

ggplot(buildings_graph,

aes(x = reorder(type_new, sqft, FUN = "sum"), y = sqft/1000, fill = meter)) +

geom_col(position = "stack") +

scale_fill_manual(values = pal) +

theme_bw() +

labs(x = "", y = "Square Footage (1000)", fill = "Meter status") +

theme(axis.text.x = element_text(angle = 45, vjust = 1, hjust = 1))


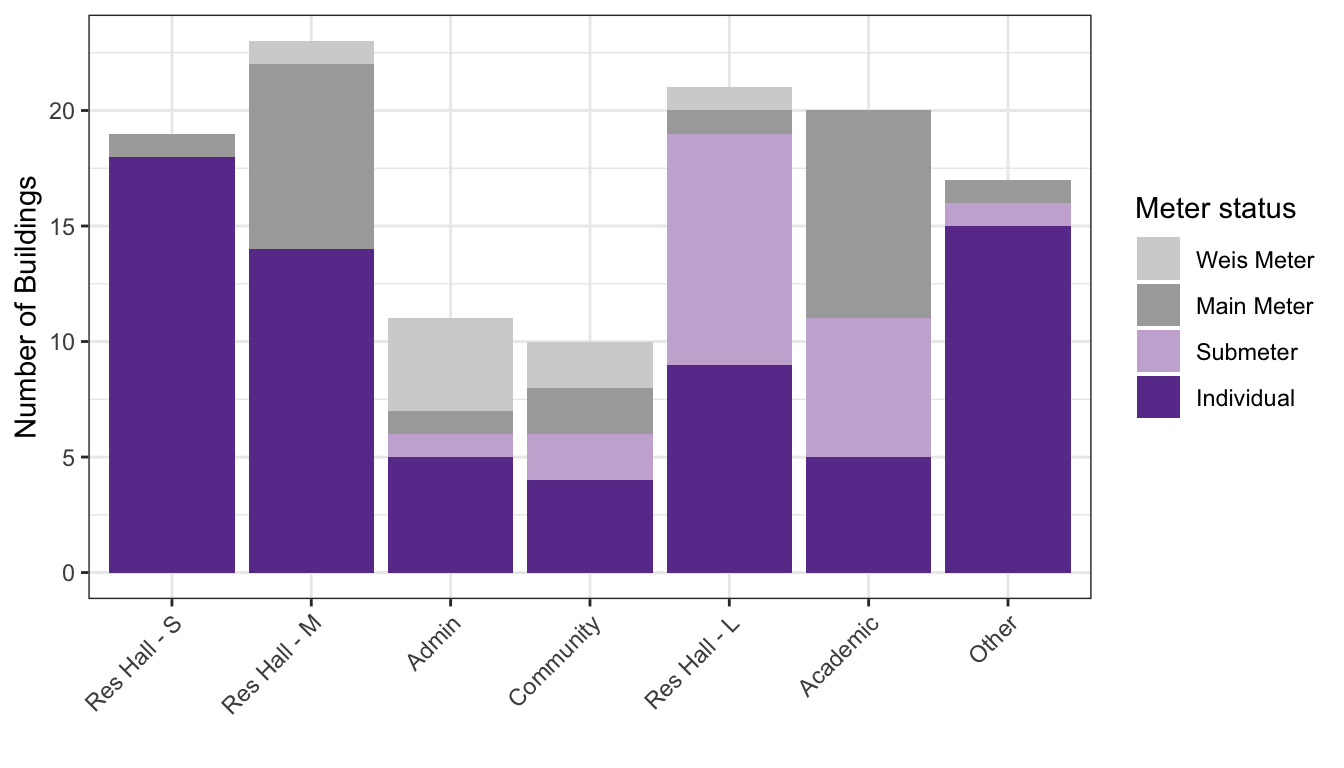

ggplot(buildings_graph,

aes(x = reorder(type_new, sqft, FUN = "sum"), fill = meter)) +

geom_bar(position = "stack") +

scale_fill_manual(values = pal) +

theme_bw() +

labs(x = "", y = "Number of Buildings", fill = "Meter status") +

theme(axis.text.x = element_text(angle = 45, vjust = 1, hjust = 1))

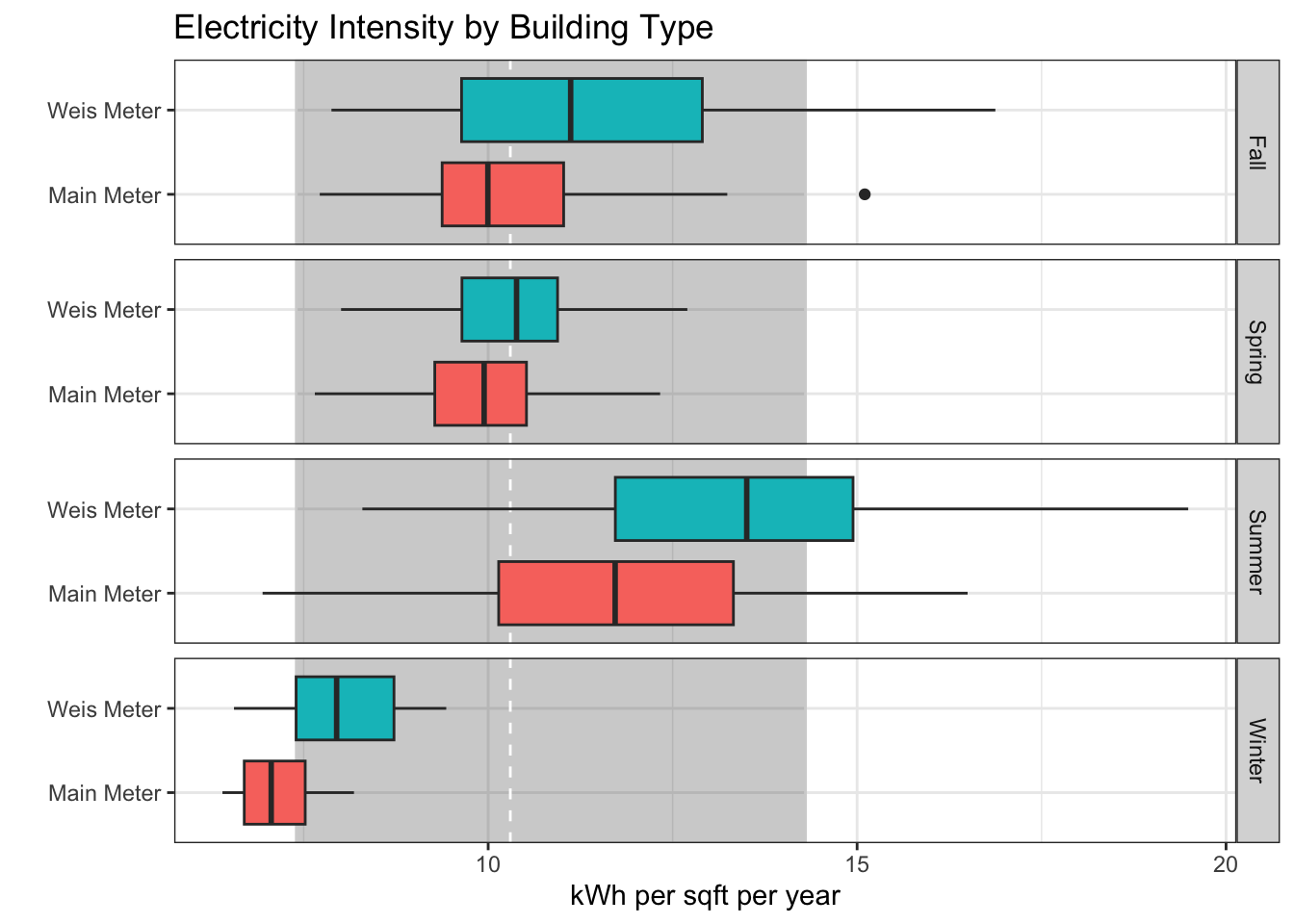

Practice boxplot

daily_test <- daily_full %>%

filter(NAME %in% c("Main Meter","Weis Meter")) %>%

mutate(kwh_sqft = (kwh/sqft*365)) # adjust daily values to per year equivalent
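The `kwh_sqft` conversion expresses each day's usage as the intensity a building would have if it ran at that day's rate for a whole year, which puts daily values on the same scale as annual reference values. A small worked example with hypothetical readings and floor area:

```r
# Hypothetical daily readings for one building
daily_kwh <- c(90, 100, 110)  # kWh on three example days
sqft      <- 3650             # building floor area in square feet

# Daily kWh per sqft, scaled to a per-year equivalent
kwh_sqft <- daily_kwh / sqft * 365

print(kwh_sqft)
```

For these made-up numbers the three days work out to 9, 10, and 11 kWh per sqft per year.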

ggplot(daily_test,

aes(x = type, y = kwh_sqft, fill = type)) +

annotate("rect", xmin = -Inf, xmax = Inf, ymin = 7.4, ymax = 14.3, color = "lightgray", alpha = 0.3) +

geom_hline(yintercept = 10.3, linetype = "dashed", color = "white") +

geom_boxplot() +

coord_flip() +

facet_grid(period ~ .) +

theme_bw() +

theme(legend.position = "none") +

labs(x = "", y = "kWh per sqft per year",

title = "Electricity Intensity by Building Type")


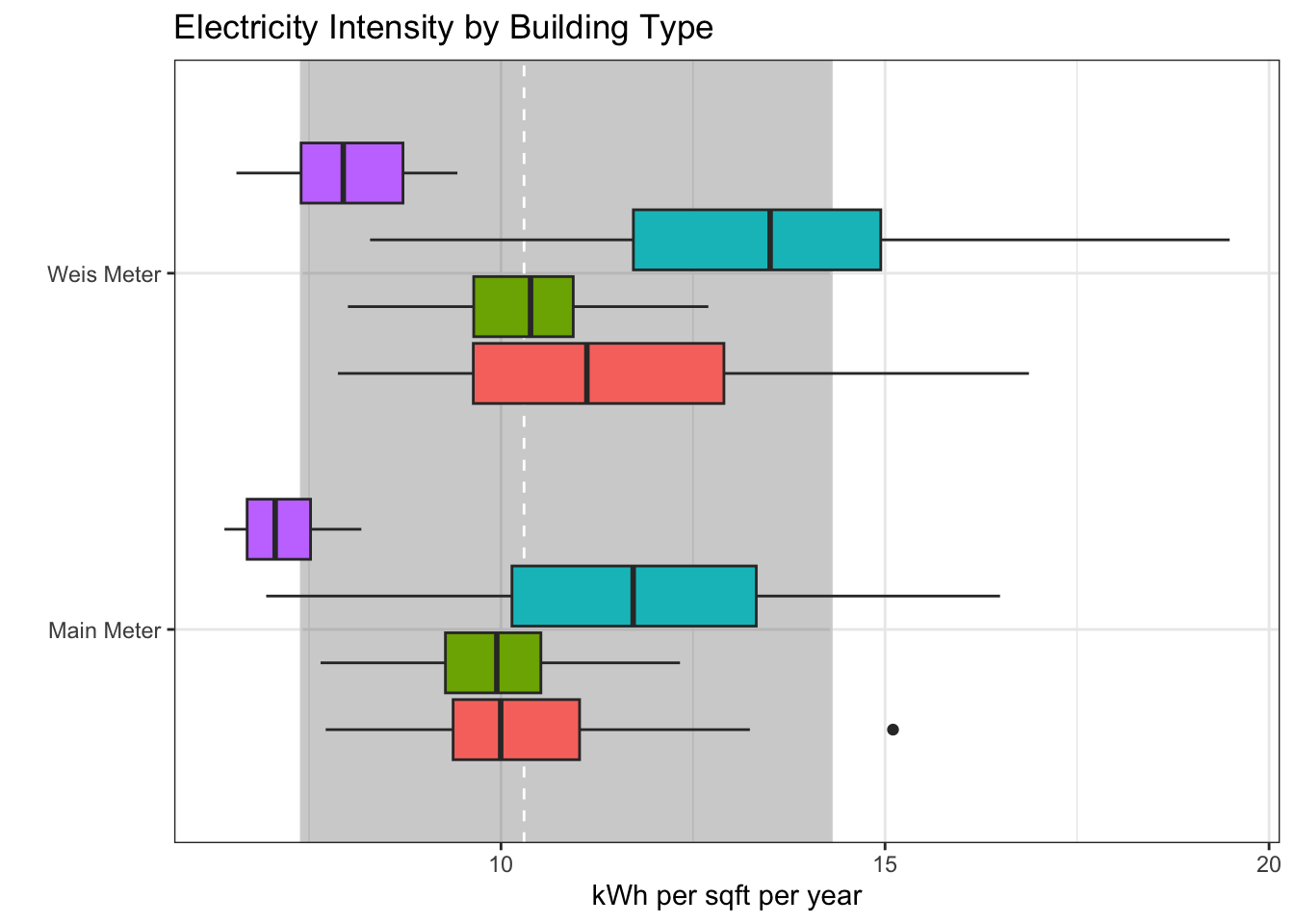

ggplot(daily_test,

aes(x = type, y = kwh_sqft, fill = period)) +

annotate("rect", xmin = -Inf, xmax = Inf, ymin = 7.4, ymax = 14.3, color = "lightgray", alpha = 0.3) +

geom_hline(yintercept = 10.3, linetype = "dashed", color = "white") +

geom_boxplot() +

coord_flip() +

theme_bw() +

theme(legend.position = "none") +

labs(x = "", y = "kWh per sqft per year",

title = "Electricity Intensity by Building Type")

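The boxplots split daily values by `period`, which is assigned from date ranges in the daily wrangling step. When periods are defined this way, a date that sits exactly on a cut-off can match no range and fall through to the default label. The sketch below uses `dplyr::case_when()` with the cut-off dates from this script. The helper name `label_period` is hypothetical, and treating 2024-12-21 and 2025-01-07 as Winter is an assumption about the intended academic calendar.

```r
library(dplyr)

# Hypothetical helper; cut-off dates copied from the daily wrangling code
label_period <- function(date) {
  case_when(
    date >  as.Date("2024-08-28") & date <  as.Date("2024-12-21") ~ "Fall",
    date >= as.Date("2024-12-21") & date <= as.Date("2025-01-07") ~ "Winter",
    date >  as.Date("2025-01-07") & date <  as.Date("2025-05-18") ~ "Spring",
    TRUE ~ "Summer"  # everything else in the fiscal year
  )
}

dates <- as.Date(c("2024-07-01", "2024-10-15", "2024-12-21",
                   "2025-01-07", "2025-03-01", "2025-06-01"))
print(data.frame(date = dates, period = label_period(dates)))
```

Listing the conditions in calendar order makes it easier to check that the ranges neither overlap nor leave gaps.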

Export for downstream analyses

# final data check

str(daily_full)

'data.frame': 38690 obs. of 10 variables:

$ type : chr "Res Hall - M" "Res Hall - M" "Res Hall - M" "Res Hall - M" ...

$ meter : chr "Individual" "Individual" "Individual" "Individual" ...

$ NAME : chr "100 S. West St." "100 S. West St." "100 S. West St." "100 S. West St." ...

$ days_perc: num 100 100 100 100 100 100 100 100 100 100 ...

$ sqft : num 7190 7190 7190 7190 7190 7190 7190 7190 7190 7190 ...

$ occupants: num 19 19 19 19 19 19 19 19 19 19 ...

$ period : chr "Summer" "Summer" "Summer" "Summer" ...

$ date : Date, format: "2024-07-01" "2024-07-02" ...

$ kwh : num 98.5 106.7 122.8 135.9 138 ...

$ ave_temp : int 69 73 78 81 84 86 84 84 86 87 ...

str(joined_full)

tibble [104 × 8] (S3: tbl_df/tbl/data.frame)

$ type : chr [1:104] "Academic" "Academic" "Academic" "Academic" ...

$ meter : chr [1:104] "Individual" "Individual" "Individual" "Individual" ...

$ NAME : chr [1:104] "162-164 Dickinson Ave." "46 S. West St." "57 S. College" "Carlisle Theatre" ...

$ days_perc: num [1:104] 100 100 100 100 100 ...

$ kwh : num [1:104] 9217 6493 12221 29070 21575 ...

$ kwh_corr : num [1:104] 9217 6493 12221 29070 21575 ...

$ sqft : num [1:104] 2500 1775 4576 4000 2500 ...

$ occupants: num [1:104] NA NA NA NA NA NA NA NA NA NA ...

today <- today("EST") %>%

str_remove(" UTC")

write.csv(daily_full, paste0("./output/kwh_daily_", today, ".csv"), row.names = F)

write.csv(joined_full, paste0("./output/kwh_annual_", today, ".csv"), row.names = F)
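The export step stamps each file name with the run date so that earlier exports are not overwritten. A base R sketch of the same idea is shown below. The helper `stamped_path` and its arguments are hypothetical, and it uses `Sys.Date()` rather than lubridate's `today()`.

```r
# Build a date-stamped path; the function and argument names are hypothetical
stamped_path <- function(stem, output_dir = "output", date = Sys.Date()) {
  file.path(output_dir, paste0(stem, "_", format(date, "%Y-%m-%d"), ".csv"))
}

# Fixed date used here so the example is reproducible
path <- stamped_path("kwh_daily", date = as.Date("2026-03-04"))
print(path)  # "output/kwh_daily_2026-03-04.csv"
```

Formatting the date explicitly keeps the file names in one consistent pattern from run to run.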

sessionInfo()

R version 4.3.2 (2023-10-31)

Platform: x86_64-apple-darwin20 (64-bit)

Running under: macOS Ventura 13.7.8

Matrix products: default

BLAS: /Library/Frameworks/R.framework/Versions/4.3-x86_64/Resources/lib/libRblas.0.dylib

LAPACK: /Library/Frameworks/R.framework/Versions/4.3-x86_64/Resources/lib/libRlapack.dylib; LAPACK version 3.11.0

locale:

[1] en_US.UTF-8/en_US.UTF-8/en_US.UTF-8/C/en_US.UTF-8/en_US.UTF-8

time zone: America/New_York

tzcode source: internal

attached base packages:

[1] stats graphics grDevices utils datasets methods base

other attached packages:

[1] RColorBrewer_1.1-3 lubridate_1.9.3 forcats_1.0.0 stringr_1.5.1

[5] dplyr_1.1.4 purrr_1.0.2 readr_2.1.5 tidyr_1.3.1

[9] tibble_3.2.1 ggplot2_3.5.1 tidyverse_2.0.0

loaded via a namespace (and not attached):

[1] sass_0.4.8 utf8_1.2.4 generics_0.1.3 stringi_1.8.3

[5] hms_1.1.3 digest_0.6.37 magrittr_2.0.3 timechange_0.3.0

[9] evaluate_0.23 grid_4.3.2 fastmap_1.1.1 rprojroot_2.0.4

[13] workflowr_1.7.1 jsonlite_1.8.8 whisker_0.4.1 promises_1.2.1

[17] fansi_1.0.6 scales_1.3.0 jquerylib_0.1.4 cli_3.6.2

[21] rlang_1.1.3 munsell_0.5.0 withr_3.0.0 cachem_1.0.8

[25] yaml_2.3.8 tools_4.3.2 tzdb_0.4.0 colorspace_2.1-0

[29] httpuv_1.6.13 vctrs_0.6.5 R6_2.5.1 lifecycle_1.0.4

[33] git2r_0.33.0 fs_1.6.3 pkgconfig_2.0.3 pillar_1.9.0

[37] bslib_0.6.1 later_1.3.2 gtable_0.3.4 glue_1.7.0

[41] Rcpp_1.1.0 highr_0.10 xfun_0.41 tidyselect_1.2.0

[45] rstudioapi_0.16.0 knitr_1.45 farver_2.1.1 htmltools_0.5.7

[49] labeling_0.4.3 rmarkdown_2.25 compiler_4.3.2